April 2022

Implementing access control policies in CI/CD pipelines

Imagine yourself in this situation: You are a motivated and skilled DevOps or DevEx engineer. You have a plan to implement automated, complete CI/CD pipelines. You know how to do it, and you know how the extra productivity and automation will benefit your team and the whole company. But the project is never approved, because of security concerns. Many organizations, especially those in regulated industries, have strict requirements for releasing their software, and rightfully so.

Continuous deployment of a Nest.js application to Heroku

If you have been around for a while in the field of software development, especially web development, then you know how tedious and stressful it has historically been to deploy your source code to a webserver. Most of the time, this was accomplished by uploading it using File Transfer Protocol (FTP). But now we have numerous ways of automating the deployment process. In this tutorial, we will learn how to set up continuous deployment of a Nest.js application to Heroku using CircleCI.

Setting up continuous integration with CircleCI and GitHub

Continuous integration (CI) involves the test automation of feature branches before they are merged to the main Git branch in a project. This ensures that a codebase does not get updated with changes that could break something. Continuous delivery (CD), on the other hand, builds upon CI by automating releases of these branches or the main branch. This allows small incremental updates that reach your users faster, in line with Agile software development philosophy.

Deploying Microservices with GitOps

Learn how to adopt GitOps for your microservices deployments. This blog post explains how to adopt GitOps and the benefits of deploying your microservices with Argo.

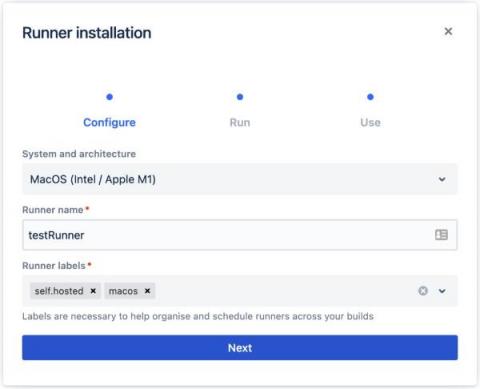

Install self-hosted runners in 5 minutes or less

We recently revamped our freemium plan to be the most generous free CI/CD tier for developers on the planet. In addition to making powerful features and resources available on the Free plan, we’ve extended self-hosted runners to all CircleCI plans.

Lockless code deploys in Puppet Enterprise & Continuous Delivery for PE

Your Puppet Enterprise (PE) installation’s primary job is to compile catalogs and send them to agents to be enforced. When you deploy code, all catalog compilation stops and waits for the code deploy to complete. This impacts performance in any installation, and the impact escalates with more frequent code deployments. Code deployments usually increase when you use Continuous Delivery for Puppet Enterprise, making the impacts of stopped catalog compilations even more apparent.

Cloud-Native Package Management for the Banking Industry

Software development in the banking and finance industry can make you feel like you’re wearing chains. Regulation, compliance, upfront costs, privacy, legacy systems, fear of cyberattacks, and an “if it ain’t broke” approach can lead to a lack of innovation. Despite these challenges, some technology-forward banks like Capital One, JP Morgan Chase, HSBC, and Wells Fargo have embraced the cloud and introduced DevSecOps and cloud-friendly architectural practices.

Break Silos and Foster Collaboration with DevOps

DevOps is a well-established discipline. By now, most developers, IT engineers, and site reliability engineers (SREs) have heard all about the importance of “breaking down silos” and achieving seamless communication and collaboration across all stakeholders in the continuous integration/continuous delivery (CI/CD) process, which extends from source code development through production environment management and incident response.

CI/CD & DevOps Pipeline Analytics: A Primer

Tracking application-level and infrastructure-level metrics is part of what it takes to deliver software successfully. These metrics provide deep visibility into application environments, allowing teams to home in on performance issues that arise from within applications or infrastructure. What application and infrastructure metrics can’t deliver, however — at least not on their own — is breadth.

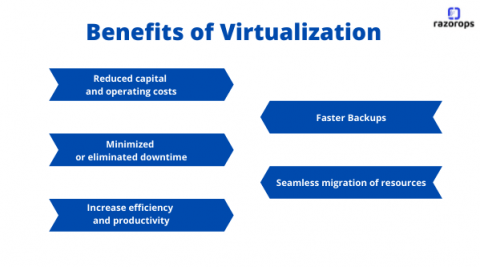

What is Virtualization & Top 5 Benefits of Virtualization

Virtualization uses software to create an abstraction layer over the hardware. This creates virtual computer systems, known as virtual machines (VMs), which allow organizations to run multiple virtual computers, operating systems, and applications on a single physical server. How does virtualization work? A virtual machine (VM) is a virtual representation of a physical computer. Virtual machines can’t interact directly with a physical computer, but...

Automating the deployment of LoopBack applications to Heroku

Before automation became commonly used by software development teams, bottlenecks, repetitive tasks, and human error were rampant. Automation has freed up valuable human resources for organizations while reducing the risk of human error caused by active human brains trying to perform mundane repetitive tasks. Recent strides in the area of continuous integration and continuous deployment (CI/CD) have made it more feasible to automatically deploy updates to software applications.

Deploy application environments on demand with the Quali Torque orb

Most developers care about building the next big thing. Automating your build, test, and release processes allows you to maintain focus on innovating and delivering value to your users. By combining the power of best-in-class CI/CD workflow orchestration with managed environments-as-a-service, developers can stay focused on building what’s next.

The Future is Continuous: Integration, Packaging and Delivery - DevOps Institute SKILup Day CI/CD

Test Splitting | Fundamentals, Benefits, & How to Split Tests on CircleCI

CircleCI & Arm Virtual Hardware

Synthetic Monitoring for CI/CD Pipelines

For DevOps teams, delivering quality software has long required reconciling a major tension: In a perfect world, you’d catch every issue in each new release of your application before you deployed the release into production. But in the real world, doing so is tricky, not least because it’s hard to collect data about application performance before the application is actually deployed.

Automating Flask deployments with PythonAnywhere

Now that development teams know about CI/CD, there is no reason for deployments to become a time-consuming and cumbersome process. CI/CD may start with continuous testing, but adding automated deployments takes your CI/CD practice to the next level. Continuous deployment slashes the time it takes to release so you can spend more time improving the quality of your applications.

A Guide To Continuous Integration

Before continuous integration was invented, developers had to work on code separately before merging it into the end product. This technique had a high chance of error. If something was left out, it took time to determine the problem. Furthermore, communication between team members became difficult as the project grew. The larger the project, the more developers, engineers, and project owners were supposed to be faithful to each other’s schedules.

How to write an optimized and secure Dockerfile to create a Docker image

Functional vs non-functional software testing

When you think of software testing, what comes up first? For many developers, unit tests and integration tests are often top of mind. Both software testing methods are vital to writing and maintaining a high-quality production codebase. But they are not sufficient on their own. Your team’s testing practice should assess the entire application, observe the larger story of how it operates when functioning correctly, and raise alarms when deviations are found.

Continuous integration for LoopBack APIs

The explosion of talent available for remote work (and the widespread acceptance of remote-first employment) allows for global collaboration on an unprecedented scale.

A modern approach to change management with Bitbucket Cloud and Jira Service Management

The Top 5 Harness.io Alternatives Compared (Updated 2022)

The key principles that doubled eBay's software delivery productivity ft. Randy Shoup

SAST vs DAST: what they are and when to use them

As digital transformation accelerates and more organizations use software solutions to facilitate work operations, security threats have become more commonplace. Cybercriminals tirelessly develop ways to exploit software application vulnerabilities to target organizational networks. A notable example is the 2017 Equifax data breach, which exposed the personal details of 145 million Americans.

Kubernetes Master Class: Securing the Supply Chain and Infrastructure for Kubernetes Deployments

Getting Real About Multi-Cloud DevOps

GitOps Benefits and Considerations

GitOps. The term appears everywhere, but what are its benefits? And is it as difficult as it sounds? Well, GitOps is a pretty easy paradigm to integrate with your current processes. However, my saying it is “easy” doesn’t help you decide whether you want to adopt it or not. So, let’s talk about it.

Build cloud infrastructure from your CI pipeline with Pulumi

Modern software systems are complex, with services distributed across data centers, in many zones, all around the world. Gone are the days when we managed individual servers dedicated to our organization, comfortable with the knowledge of the unique quirks of our setup. Now we rely on others to manage massive data centers where we borrow small slices of virtual space on shared hardware, traveling over shared networks, all in a system we call the cloud.

Managing Multi-Cluster, Multi-Cloud Deployments with GitOps and ArgoCD - Ricardo Rocha (CERN)

Codefresh Demo Webinar

How the Insights team uses Insights to optimize our own pipelines

Here on the CircleCI Insights team we don’t just develop stuff for CircleCI users, we are CircleCI users. Really, there’s no better way to get to know your product than to use it, and the Insights team is no exception. A few months ago, we realized that our pipeline configuration for the Insights UI left much to be desired.

Continuous Performance Regression Testing for CI/CD

Developers strive to produce efficient code. Many times, developers will add code to their repositories and test it to make sure it works, but they forget one very important step: benchmarking! Benchmarking lets developers see the performance impact of their code changes. If properly integrated into a CI/CD pipeline, it can catch catastrophic drops in performance before any code is shipped or deployed.
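As a rough illustration of the benchmarking idea in this teaser, the sketch below times a function and fails the run when it is slower than a stored baseline by more than a tolerance. It is a minimal sketch under stated assumptions, not the approach from the linked article: `build_report`, the baseline value, and the 10 percent tolerance are all hypothetical placeholders.

```python
import statistics
import timeit


def measure(func, repeat=5, number=1000):
    """Return the median seconds per call over several timing runs."""
    runs = timeit.repeat(func, repeat=repeat, number=number)
    return statistics.median(runs) / number


def is_regression(baseline_seconds, current_seconds, tolerance=0.10):
    """True when the current timing exceeds the baseline by more than tolerance."""
    return current_seconds > baseline_seconds * (1.0 + tolerance)


def build_report(n=200):
    # Hypothetical workload standing in for the code under test.
    return ",".join(str(i * i) for i in range(n))


if __name__ == "__main__":
    # Assumed baseline; in practice it would be loaded from an earlier run.
    baseline = 0.0005
    current = measure(build_report)
    if is_regression(baseline, current):
        # A non-zero exit status is what makes the CI job fail.
        raise SystemExit(f"performance regression: {current:.6f}s vs {baseline:.6f}s")
    print(f"ok: {current:.6f}s per call")
```

Using the median of several runs, rather than a single timing, reduces the chance that noise on a shared CI machine triggers a false alarm.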

Using OpenID Connect identity tokens to authenticate jobs with cloud providers

Introducing OpenID Connect identity tokens in CircleCI jobs! This token enables your CircleCI jobs to authenticate with cloud providers that support OpenID Connect like AWS, Google Cloud Platform, and Vault. In this blog post, we’ll introduce you to OpenID Connect, explain its usefulness in a CI/CD system, and show how it can be used to authenticate with AWS, letting your CircleCI job securely interact with your AWS account, without any static credentials.
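To make the token less abstract: an OpenID Connect identity token is a JSON Web Token, so its claims can be inspected by decoding the middle segment. The sketch below does only that, with no signature verification, so it is suitable for debugging and not for authentication. The helper names and sample claims are hypothetical, and the `CIRCLE_OIDC_TOKEN` environment variable mentioned in the usage note is an assumption to check against the CircleCI documentation.

```python
import base64
import json


def decode_claims(token):
    """Decode the payload of a JWT WITHOUT verifying its signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("expected three dot-separated JWT segments")
    payload = parts[1]
    # base64url segments omit padding; restore it before decoding.
    payload += "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(payload))


def _segment(obj):
    """Encode a dict as an unpadded base64url JSON segment."""
    raw = json.dumps(obj).encode()
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def make_sample_token(claims):
    """Build an unsigned sample token for local experimentation only."""
    return ".".join([_segment({"alg": "none"}), _segment(claims), "signature"])
```

Inside a job, something like `decode_claims(os.environ["CIRCLE_OIDC_TOKEN"])` would show the issuer, subject, and audience claims the cloud provider evaluates. The provider, not this script, is responsible for validating the signature.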

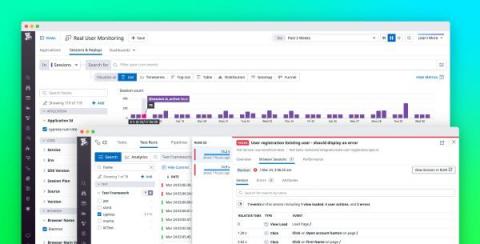

Troubleshoot end-to-end tests with CI Visibility and RUM

Adding automated testing to your CI/CD pipelines can help you ensure that you deploy changes safely. But as you continue to shift left, the number and complexity of tests are likely to increase, making them slower to run and harder to troubleshoot. Datadog CI Visibility can help you track the performance of your CI/CD pipelines and tests—and now you can also use Real User Monitoring (RUM) to monitor end-to-end (E2E) Cypress tests.

Welcome to the "New Normal" for Your Software Supply Chain

Understanding and Implementing a Software Bill of Materials

Software programs today can be likened to a complex stew, with multiple ingredients sourced from disparate places. In software, open-source tools are a major ingredient. According to the 2020 Open Source Security and Risk Analysis (OSSRA) report produced by the Synopsys Cybersecurity Research Center, 99 percent of the codebases contain at least one open source component, with open source comprising 70 percent of the code overall.

Continuous integration for Go applications

Go, an open-source programming language backed by Google, makes it easy to build simple, reliable, and efficient software. Go’s efficiency with network servers and its friendly syntax make it a useful alternative to Node.js. All network applications need well-tested features, and those developed in Go are no different. In this tutorial, we will be building and testing a simple Go blog.

What is end-to-end testing?

End-to-end testing, also known as E2E testing, is a methodology used for ensuring that applications behave as expected and that the flow of data is maintained for all kinds of user tasks and processes. This type of testing approach starts from the end user’s perspective and simulates a real-world scenario. For example, on a sign-up form, you can expect a user to perform a number of actions, and you can use end-to-end testing to verify that all of them work as a user might expect.

Announcing the beta for MacOS Runners in Bitbucket Pipelines

Automate the deployment of FeathersJS apps to Heroku

Automation goes beyond just building solutions to replace complex or time-consuming manual processes. As the popular saying goes, “anything that can be automated should be automated.” For example, deploying updates to applications can and should be automated. In this tutorial, I will show you how to set up hands-free deployment of a FeathersJS app to Heroku.