
September 2021

ScienceLogic Acquires Restorepoint, Expanding Portfolio Into NetOps & SecOps Domains

Hi, my name is Erik Rudin, and I have the privilege of leading our technical alliances and ecosystem team here at ScienceLogic. We are excited to announce that ScienceLogic has acquired the network configuration and change management vendor Restorepoint. With this acquisition, we’re expanding our IT operations business into the Network Operations (NetOps) and Security Operations (SecOps) domains.

ADS 11.4 - Built with Your Feedback

The new release of Kemp Flowmon ADS 11.4 brings you the most frequently requested features.

How to monitor a web server running NGINX|httpd

Web servers are software services that store resources for a website and make them available over the World Wide Web. These stored resources can be text, images, video, and application data. Clients that connect to the server, mostly web browsers, request these resources and present them to the user. This basic interaction underlies every connection between your computer and the websites you visit.

EllaLink and BSO launch strategic partnership to develop financial markets between Europe and Latin America

Career and Networking Evolution with BGPMon's Founder Andree Toonk | Network AF Podcast Ep. 1

Backbone Engineering and Interconnection with Nina Bargisen | Network AF Podcast Ep. 2

Listen up: The Network AF podcast is here

Hear here! Today we’re very excited to share that our Co-founder and CEO Avi Freedman launched a new podcast, Network AF. If you like nerding out on all-things networking, cloud and the internet, this podcast is for you. If you like networking how-tos, best practices and biggest mistakes, this podcast is for you. If you want to up your poker game, well… this podcast might also be for you.

Lightning-fast Kubernetes networking with Calico & VPP

Public cloud infrastructures and microservices are pushing the limits of resources and service delivery beyond what was imaginable until very recently. In order to keep up with the demand, network infrastructures and network technologies had to evolve as well. Software-defined networking (SDN) is the pinnacle of advancement in cloud networking; by using SDN, developers can now deliver an optimized, flexible networking experience that can adapt to the growing demands of their clients.

Key concepts of systems and networks

In the middle of the information century, who has not surfed the Internet or used a computer, be it a desktop or a laptop? But do you really know what a computer is and what it is made of? And what about the Internet? It is important to understand at least the surface layer of something as important as computer systems and networks, so we are going to talk about the key concepts of these two topics.

Why Do We Need Network Management? A Practical Explanation

Network management is a necessity. With today’s modern infrastructure, there isn’t a whole lot you can do effectively without it. It’s like tech’s version of the old American Express slogan, “Don’t leave home without it.” You have to deal with the ever-changing needs of the business, constant cybersecurity threats, and complex private and public networks.

Aligning Your Enterprise Network With SaaS

How to Set Up a Reverse Proxy in Nginx and Apache

To work efficiently, the client and server exchange information on a regular basis. A webserver typically employs reverse proxies. A client sees a reverse proxy or gateway as if it were a regular web server, and no extra configurations are required. The client sends standard requests to the reverse proxy, which then determines where to send the data, providing the final result to the client as if it were the origin.
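The routing decision described above can be sketched in a few lines of Python. This is only an illustration of how a reverse proxy might pick an origin server by path prefix; the `ROUTES` table, the backend addresses, and the function names are hypothetical and are not taken from Nginx or Apache.

```python
from typing import Dict

# Hypothetical path-prefix -> backend mapping; the addresses are placeholders.
ROUTES: Dict[str, str] = {
    "/api/": "http://127.0.0.1:8001",
    "/static/": "http://127.0.0.1:8002",
    "/": "http://127.0.0.1:8000",
}


def choose_backend(path: str, routes: Dict[str, str] = ROUTES) -> str:
    """Return the backend whose prefix is the longest match for the path."""
    matches = [prefix for prefix in routes if path.startswith(prefix)]
    if not matches:
        raise LookupError(f"no backend configured for {path!r}")
    return routes[max(matches, key=len)]


def upstream_url(path: str) -> str:
    """Build the URL the proxy would request on the client's behalf."""
    return choose_backend(path) + path
```

The client only ever sees the proxy's address; a call such as `upstream_url("/api/users")` shows which origin would actually serve the request.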

All About Network Topology-Types and Diagrams

Streamline Migration and Application Onboarding in DX APM with EasySeries

To realize the full potential of APM, many customers are migrating from their existing APM 10.7 clusters to DX APM. In addition, they continue to onboard new applications for monitoring. These efforts require a series of steps, including the configuration of experience views, universes, and DX Operational Intelligence services.

3 ways OpUtils' IP address tracker fosters effective IP management

How a network’s IP address space is structured, scanned, and managed differs based on the organization’s size and networking needs. The bigger your network is, the more IPs you need to manage, and the more complex your IP address hierarchy gets. As a result, issues such as IP resource overutilization and address conflicts become challenging to avoid without an IP address management (IPAM) solution in place.

10 reasons you need a network configuration manager

On June 2, 2019, Google Cloud Platform had a major network outage that disrupted the services of Discord, Spotify, and Snapchat, among many others. The root cause was a benign misconfiguration coupled with a software bug that caused the loss of configuration data. The issue was resolved almost four hours later after the lost configuration data was rebuilt and redistributed.

What is Distributed Network Monitoring for SaaS and SD-WAN

Nowadays, companies are embracing flexibility. Many businesses are adopting remote offices and working from home, storing their data in the cloud, ditching centralized data infrastructures, and moving towards networks using SaaS and SD-WAN. With distributed architectures becoming the new normal, it’s important to have a distributed monitoring solution that can keep up. In this article, we walk you through everything you need to know about how distributed network monitoring works.

Monitoring Juniper networks with Grafana

This article addresses common questions about monitoring Juniper Networks devices and shows how Grafana can help you monitor Juniper network systems. As you read through, you will find answers to the following questions...

MWC'21: Yogesh Lulla on Telecom Stars in Cars

The Challenge of Monitoring a Distributed Network

Three Network Challenges Keeping CIOs Awake At Night

Want to sell more managed services? Start with a focus

Imagine this: You’re about to talk to a new prospect. You have your pitch prepared. It’s your normal pitch deck, and it covers the full gamut of managed services—you tackle every device, maintain everything, and highlight the value of your services. You have charts, facts, and figures, and you pump yourself up with your favorite music before entering the room. They politely listen to the pitch and decide they want some time to think about it.

Maximize network traffic insights with extended raw data storage

Introducing HighPerf, our highly scalable raw storage database that facilitates smarter analytics, faster troubleshooting, and better bandwidth management.

Beyond the Mandate: Next Steps in the Fight to Combat Robocalls and Spoofing

Robocalling is no small problem. These nuisance calls are the major source of consumer complaints, with some estimates suggesting that more than 22 billion robocalls have been made in 2021 alone – and we’re only halfway through the year.

Proactively monitoring customer experience and network performance in real-time

According to recent Capgemini research, fewer than half (48%) of consumers feel that the connectivity services they have today adequately meet their remote needs. Still, many CSPs openly admit that they often hear about service issues via social media and sites like Downdetector. And with fixed/mobile convergence, a negative home broadband experience now has the potential to cause churn for CSPs’ mobile customers too.

Network monitoring: The build vs. buy dilemma

AI-based monitoring and anomaly detection is the key to ensuring that businesses can keep pace with the high level of service required for mission-critical applications. Early, contextual detection is a basic requirement for speedy resolution. AI-based monitoring creates more visibility and provides the agility needed to mitigate the outages, blackouts, glitches and issues that do and will happen.

BSO partners with ImpactScope becoming the first connectivity provider to offer carbon offsetting for crypto traders

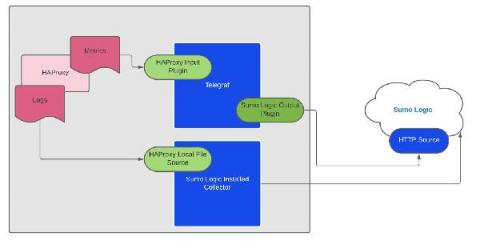

How to Enable Health Checks in HAProxy

HAProxy provides active, passive, and agent health checks. HAProxy makes your web applications highly available by spreading requests across a pool of backend servers. If one or even several servers fail, clients can still use your app as long as there are other servers still running. The caveat is, HAProxy needs to know which servers are healthy. That’s why health checks are crucial.
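To illustrate how a load balancer might decide which servers are healthy, here is a minimal Python sketch of the bookkeeping behind an active health check. It assumes a server changes state only after a run of consecutive check results, similar in spirit to HAProxy's `rise` and `fall` settings. The `ServerHealth` class and its fields are hypothetical, and this is not HAProxy's implementation.

```python
from dataclasses import dataclass


@dataclass
class ServerHealth:
    rise: int = 2         # consecutive passes needed to mark a server up
    fall: int = 3         # consecutive failures needed to mark a server down
    healthy: bool = True  # current state as seen by the load balancer
    streak: int = 0       # consecutive results that contradict the state

    def record(self, check_passed: bool) -> bool:
        """Record one probe result and return the resulting health state."""
        if check_passed == self.healthy:
            # The result agrees with the current state, so reset the streak.
            self.streak = 0
            return self.healthy
        self.streak += 1
        threshold = self.fall if self.healthy else self.rise
        if self.streak >= threshold:
            self.healthy = not self.healthy
            self.streak = 0
        return self.healthy
```

Requiring several consecutive results before flipping state keeps a single dropped probe from removing an otherwise healthy server from the pool.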

Empower Your Network Operations Team With AI

Broadcom Awarded Highest Vendor Score in EMA Radar Report For NPM

Broadcom is proud to be named a “Value Leader” in the 2021 EMA Radar Report For Network Performance Management. Broadcom received the highest vendor strength score and was selected as having the best alert and alarm management. We believe this recognition validates our strong NetOps vision and our ability to speed the delivery of new network monitoring software innovations that help address the network transformation challenges of our customers.

What is QUIC? Everything You Need to Know

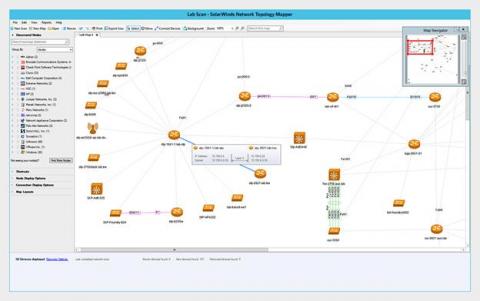

OP5's network monitoring as an alternative to SolarWinds' Orion

An infamous cyberattack in late 2020 made SolarWinds a household name in the tech industry after it was discovered to be at the center of a supply-chain attack on its Orion network management tool. That attack allowed state-sponsored actors to push a malicious update to nearly 18,000 customers, including U.S. government agencies and about 100 large private enterprises.

Best practices for getting started with Datadog Network Performance Monitoring

Whether running on a fully cloud-hosted environment, on-premise servers, or a hybrid solution, modern services and applications are heavily reliant on network and DNS performance. This makes comprehensive visibility into your network a key part of monitoring application health and performance. But as your applications grow in scale and complexity, gaining this visibility is challenging.

New Hive Data Center Monitoring Agent

Obkio announces a new Monitoring Agent operated by Hive Data Center, a retail data center colocation provider based in Montreal, Quebec. Learn how Hive Data Center’s new Obkio Monitoring Agent will allow them to better support their customers and improve their quality of service.

Incident Review - What Was Behind the September 7 Spectrum Outage: A Case of Dr. BGP Hijack or Mr. BGP Mistake?

September 7, 2021, 16:36 UTC: an outage hit Spectrum cable customers in the Midwest of the U.S., including Ohio, Wisconsin and Kentucky. Users of their broadband and TV services hit social media to voice their annoyance at the disruption it was causing. Everything was resolved at around 18:11 UTC, and services were restored to users.

All You Need To Know About HAProxy Log Format

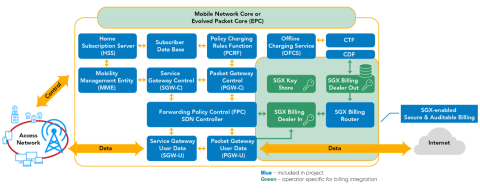

Evolution of Open-source EPC - A Revolution in the Telecom Industry

Open-source projects gravitate to common problems in the industry. Using open-source projects accelerates product and solution development and cuts costs. Open-source projects spanning embedded systems to the cloud are commonplace.

Rate Limiting with the HAProxy Kubernetes Ingress Controller

Add IP-by-IP rate limiting to the HAProxy Kubernetes Ingress Controller. DDoS (distributed denial of service) events occur when an attacker or group of attackers flood your application or API with disruptive traffic, hoping to exhaust its resources and prevent it from functioning properly. Bots and scrapers, too, can misbehave, making far more requests than is reasonable.
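The idea of IP-by-IP rate limiting can be sketched as a sliding-window counter keyed by client address. The Python below is an illustration under assumed parameters, not the ingress controller's mechanism. The `PerIPRateLimiter` class, its `limit` and `window_seconds` arguments, and the explicit `now` timestamp are all hypothetical choices made for clarity.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class PerIPRateLimiter:
    """Allow at most `limit` requests per client IP in any sliding window."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window = window_seconds
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, ip: str, now: float) -> bool:
        """Return True if the request at time `now` is within the limit."""
        hits = self._hits[ip]
        # Discard timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True
```

Because each address has its own queue, one noisy client exhausts only its own budget and other clients are unaffected.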

Painting a Complete Network Monitoring Picture: Why Context is Critical

In order to produce their masterpieces, artists like van Gogh, Rembrandt, Picasso, and Monet painted with more than just one color. Being able to choose from multiple colors (not to mention an abundance of talent, inspiration, and creativity) is what allowed these artists to see their complete vision come to life on canvas. However, if you’re relying on a single set of data to troubleshoot network issues, it’s like you’re stuck painting with one color.

The 95th Percentile: How to Manage Capacity Before You Run Out

How to Minimize Downtime by Automating Remediation Actions With ipMonitor

Cloud or On-Prem? With Monitoring, It's Both-And, Not Either-Or

Nginx Logs in 30 Seconds | observIQ

Broadcom Enterprise Software Division Capabilities Demo

What Is a Traffic Analysis Attack?

The times when it was enough to install an antivirus to protect yourself from hackers are long gone. We actually don’t hear much about viruses anymore. However, nowadays, there are many different, more internet-based threats. And unfortunately, you don’t need to be a million-dollar company to become a target of an attack. Hackers these days use automated scanners that search for vulnerable machines all over the internet. One such modern threat is a traffic analysis attack.

How NaaS Can Support Development and Testing Environments

Monitoring Load Balancers with Grafana

Load balancers play an important role in distributed computing. With load balancers, you can distribute heavy workloads across multiple resources, which allows you to scale horizontally. Since they sit in front of computing resources, they need to endure heavy traffic and quickly route it to the right resources. For this to happen, monitoring the health and performance of load balancers is key. In monitoring, visualization helps users view various metrics quickly.

Monitoring Network Switches with Grafana

In monitoring, the target system or device is a deciding factor in designing your monitoring stack. You will have to consider various aspects, from how you collect data and at what frequency to how you surface metrics to end users. You will need this same strategic approach when you want to monitor your network infrastructure. In this article, we will discuss how Grafana, an open-source visualization tool, can help you monitor network switches.

What Is Network Monitoring?

Network monitoring is the practice of making sure the network as a whole functions optimally by keeping watch over all of its endpoints. The network is the heart of any business’s routine functioning, so any discrepancy in the form of a breach or slowdown could prove costly. Proactively monitoring networks helps administrators identify and prevent potential issues that could occur at any time.

Root cause analysis using Metric Correlations

As the complexity of systems and applications continues to evolve and change, the number of metrics that need to be monitored grows in parallel. Whether you’re on a DevOps team, an SRE, or a developer building the code yourself, many of these components may be fragmented across your infrastructure, making it increasingly difficult to identify the root cause when experiencing downtime or abnormal behavior.

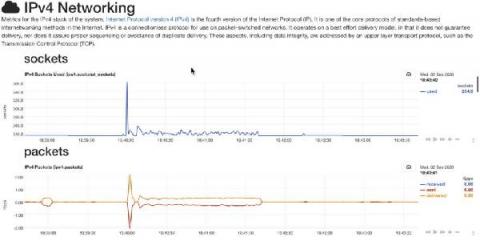

Difference Between IPv4 and IPv6: Why Haven't We Entirely Moved to IPv6?

IPv4 and IPv6 are the two versions of the Internet Protocol (IP). IPv4 was first released in 1983 and is still widely used to address a variety of systems. It identifies systems in a network by means of an address. IPv4 uses a 32-bit address, which limits it to about 4.3 billion unique addresses. Despite this, it is the most widely used internet protocol, carrying the vast bulk of internet traffic. IPv6 was created in 1994 and is referred to as the "next generation" protocol.
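The difference in address size is easy to check with Python's standard `ipaddress` module. The sketch below is only an illustration. The `describe` helper is a hypothetical name, and the sample addresses come from the ranges reserved for documentation.

```python
import ipaddress
from typing import Tuple


def describe(address_text: str) -> Tuple[int, int, int]:
    """Return (IP version, address width in bits, size of the address space)."""
    addr = ipaddress.ip_address(address_text)
    bits = addr.max_prefixlen  # 32 for IPv4, 128 for IPv6
    return addr.version, bits, 2 ** bits


if __name__ == "__main__":
    for sample in ("192.0.2.1", "2001:db8::1"):
        version, bits, total = describe(sample)
        print(f"IPv{version}: {bits}-bit addresses, {total:,} possible values")
```

A 32-bit field gives 2^32 (4,294,967,296) possible addresses, while the 128-bit IPv6 field gives 2^128.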