August 2021

Five Key Focus Areas to Testing IoT Security

How To Significantly Tame The Cost of Autoscaling Your Cloud Clusters

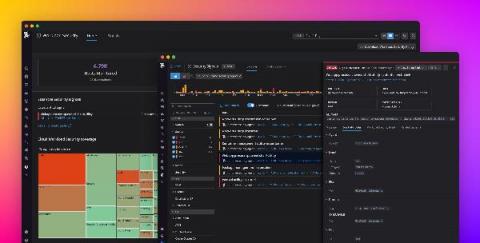

Serverless observability and real-time debugging with Dashbird

Systems run into problems all the time. To keep things running smoothly, we need an error monitoring and logging system to help us discover and resolve whatever issues may arise as soon as possible. The bigger the system, the more challenging it becomes to monitor it and pinpoint the issue. And with serverless systems running hundreds of services concurrently, monitoring and troubleshooting are even more challenging tasks.

How the Pandemic Impacted the Government's Cloud Migration Plans

How to Keep Up with the Constant Change of Cloud Environments

Many organizations are shifting vast portions of their applications and infrastructure to the cloud in pursuit of lower IT costs, greater business agility, improved security, and accelerated corporate growth.

Cloud PaaS through the lens of open source - opinion

Open source software, as the name suggests, is developed in the open. The software can be freely inspected by anyone, and can be freely patched as required to suit the security requirements of the organisation running it. Any publicly identified security issues are centrally triaged and tracked.

Deploy ASP.NET Core applications to Azure App Service

The ASP.NET Core framework provides cross-platform support for web development, giving you greater control over how you build and deploy your .NET applications. With the ability to run .NET applications on more platforms, you need to ensure that you have visibility into application performance, regardless of where your applications are hosted. In previous posts, we looked at instrumenting and monitoring a .NET application deployed via Docker and AWS Fargate.

Azure Service Bus: The Messaging Backbone for Cloud Applications

UKCloud launches specialist Strategic Data Practice in support of the National Data Strategy

Get your AWS CloudWatch data in!

Troubleshooting Cloud Services and Infrastructure with Log Analytics

Enreach acquires cloud solutions provider DSD Europe

Understand your services with Cloud Logging

Pepperdata Transforms the Performance of Big Data Systems at Scale

August Monthly Roundup

Here is a quick round up of the newest product features, resources, and events!

What Are Spot Instances? And When Should You Use Them?

Elastic and Cmd join forces to help you take command of your cloud workloads

We are excited to announce that Elastic is joining forces with Cmd to accelerate our efforts in Cloud security - specifically in cloud workload runtime security. By integrating the capabilities of Cmd's expertise and product into Elastic Security, we will enable customers to detect, prevent, and respond to attacks on their cloud workloads.

Challenges and Opportunities of Going Serverless in 2021

While we know the many benefits of going serverless – reduced costs via pay-per-use pricing models, less operational burden/overhead, instant scalability, increased automation – the challenges of going serverless are often not addressed as comprehensively. The understandable concerns over migrating can stop any architectural decisions and actions being made for fear of getting it wrong and not having the right resources.

How to Monitor Your AWS Workloads

AWS is a comprehensive platform with more than 200 types of cloud services available globally. As organizations adopt these services, monitoring their performance can seem overwhelming. The majority of AWS workloads behind the scenes are dependent on a core set of services: EC2 (the compute service), EBS (block storage), and ELB (load balancing).

Fargate vs ECS - Comparing Amazon's container management services

Kubernetes and containerization of applications bring many benefits to software development, enabling speed, agility, and flexibility. The Kubernetes ecosystem has matured quickly in the last few years, leaving users with a multitude of choices when it comes to Kubernetes tooling and services. The major cloud providers (AWS, Azure, and Google Cloud) have introduced services specifically to help users run their Kubernetes applications more efficiently and effectively.

Who is Driving Enterprise-wide Innovation, Transformation, Cloud Strategy and More?

Today’s decision-making is different than even a few years ago. More “data” is used, and the data inputs take several forms, including humans. A big part of today’s strategy and decision-making at enterprise-class organizations falls to committees, made up of a company’s subject matter experts and relevant stakeholders for a critical company initiative.

New Trends In Multi-Cloud Connectivity

Securing Serverless Applications with Critical Logging

We’ve seen time and again how serverless architecture can benefit your application; graceful scaling, cost efficiency, and a fast production time are just some of the things you think of when talking about serverless. But what about serverless security? What do I need to do to ensure my application is not prone to attacks? One of the many companies that do serverless security, Protego, came up with an analogy I really like.

8 Risks You Need To Mitigate During Cloud Migration

Migrating workloads to the cloud can be tricky. In fact, a study Virtana conducted earlier this year found that 72% of respondents had to move applications back on-premises after migrating them to the public cloud because they ran into a variety of problems. Clearly, organizations need to address these showstoppers.

Kubernetes Fully Managed - half the cost of AWS

How can you run a fully managed Kubernetes in a private cloud at half the cost of Amazon EKS (Elastic Kubernetes Service)?

10 Application Migration Best Practices

When existing software applications fail to fulfill the complex requirements of evolved modern businesses, they require an upgrade to a higher-end version. This entire process of modernizing or shifting an existing application to a new computing environment is known as migration.

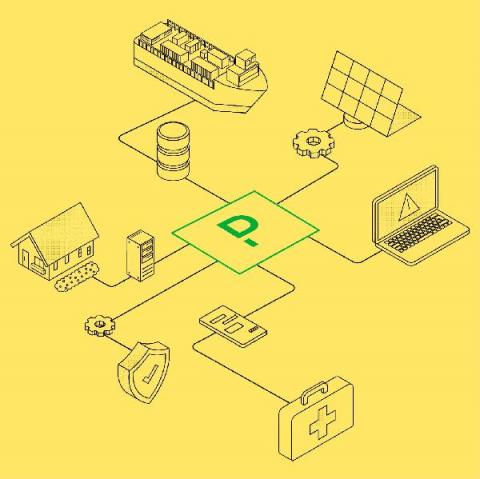

Platform.sh Introduction Demo

5 Easy Steps to Build your AWS Cloud Migration Business Case

Migrating your organization’s applications to the cloud is no small task. Before planning and execution can even be fathomed, many of our customers’ first challenge is to create a data-driven business case for management buy-in.

Cloud Key Management in a minute

Zero effort performance insights for popular serverless offerings

Inevitably, in the lifetime of a service or application, developers, DevOps, and SREs will need to investigate the cause of latency. Usually you will start by determining whether it is the application or the underlying infrastructure causing the latency. You have to look for signals that indicate the performance of those resources when the issue occurred.

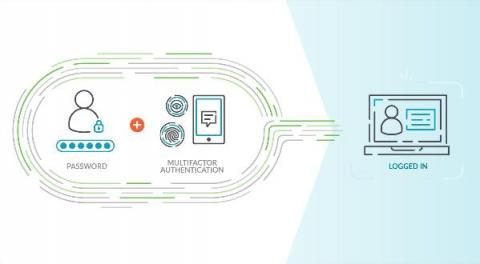

Cloud-Centric PCI Compliance Demands Cloud-Native Controls

Over the last 15-plus years, the Payment Card Industry Data Security Standard – a.k.a. PCI DSS – has endured as the bellwether of IT security standards. For today’s e-commerce vendors and cloud centric retailers, maintaining alignment with “PCI” remains as relevant as ever, especially given the continued proliferation of threats and diversity of cloud and hybrid environments.

How to Monitor Serverless Apps

How the technology you choose influences CloudOps maturity

As the world becomes increasingly digital-first, it’s more important than ever for organizations to keep services always-on, innovate quickly, and deliver great customer experiences. Uptime is money, so it’s no surprise that many have made the shift to cloud in recent years in order to make use of its flexibility and scale—while controlling costs. And while 2020 wasn’t easy for any organization, those that are thriving have embraced the digital mindset.

Serverless with AWS - Image resize on-the-fly with Lambda and S3

Handling large images has always been a pain in my side since I started writing code. Lately, it has started to have a huge impact on page speed and SEO ranking. If your website has poorly optimized images it won’t score well on Google Lighthouse. If it doesn’t score well, it won’t be on the first page of Google. That sucks.

Keeping afloat during the flood of worker turnover

Almost four million U.S. workers a month are quitting their jobs. More than half of North American employees plan to look for a new position this year. And an alarming percentage of the workforce describe themselves as “burnt out.” Statistics like these are the shadow cast by the looming “talent turnover tsunami.” The pandemic has unmoored millions of people from their familiar patterns both at home and at work.

Application Modernization Best Practices

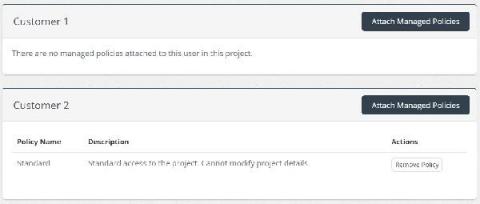

Projects Enhancements

Back in 2019, I introduced you to Skeddly Projects. Projects is a feature in Skeddly that allows you to separate actions, credentials, managed backup plans, and managed start/stop plans. Almost like a mini Skeddly account within an account. Over the last few months, Skeddly’s Projects feature has been significantly enhanced. And I’m going to tell you all about the wonderful new features within Skeddly Projects.

Use Process Metrics for troubleshooting and resource attribution

When you are experiencing an issue with your application or service, having deep visibility into both the infrastructure and the software powering your apps and services is critical. Most monitoring services provide insights at the Virtual Machine (VM) level, but few go further. To get a full picture of the state of your application or service, you need to know what processes are running on your infrastructure.

How to get started with StackState's cloud observability platform

FinOps for Engineering

What Is Cloud Management? The Ultimate Guide

New Google Cloud instance types on Elastic Cloud

We are excited to announce support for Google Compute Engine (GCE) N2 general purpose virtual machine (VM) types, and additional hardware configuration options powered by N2 custom machine types. N2 VMs leverage Intel 2nd Generation Xeon Scalable processors and provide a balance of compute, memory, and storage. N2 machine types also offer more than a 20% improvement in price-performance over the first-generation N1 machines.

How to Test JavaScript Lambda Functions?

Function as a service (FaaS) offerings like AWS Lambda are a blessing for software development. They remove many of the issues that come with the setup and maintenance of backend infrastructure. With much of the upfront work taken out of the process, they also lower the barrier to start a new service and encourage modularization and encapsulation of software systems. Testing distributed systems and serverless cloud infrastructures.

AWS Compute Optimizer: Pros and Cons

AWS offers a Compute Optimizer tool that uses machine learning to analyze your historical utilization metrics and then recommend optimal AWS resources to help you reduce costs and improve performance. And it is free; you just need to opt in to the service in the AWS Compute Optimizer Console. Sounds great, right? Well, yes and no. It is a useful little tool, but if you do not understand its pros and cons, you will not be as optimized as you may think. Here is a breakdown.

Platform.sh | Community Using AWS S3 snapshot repository for Elasticsearch

Contextual Code specializes in enterprise-level projects for state government agencies. We routinely tackle difficult web content management implementations, migrations, integrations, customizations, and operations. We know what it takes to get a project off the ground and onto the web. We use Platform.sh as our primary hosting platform because it’s incredibly flexible and it provides a vast list of services that can be set up very easily.

Access the Cloud Monitoring Console from Anywhere

Have you ever wanted to check the status of your Splunk Cloud Platform deployment but can't easily access your laptop? We've got you covered: the Cloud Monitoring Console is now available on Splunk Mobile.

Platform as a Service (PaaS): A guide

Cloud computing has conquered our lives, from massive on-premise systems and storage hubs to fully virtualized storage platforms. Today, organizations are rapidly reengineering their strategies to be cloud-friendly, which has resulted in rapid growth in the cloud migration rate. Studies say that worldwide investment in cloud infrastructure services increased to $41.8 billion in the first quarter of 2021, because there is always a twofold benefit from cloud transformation.

A beginner's guide to Platform.sh

Hey friends, I recently joined Platform.sh as a Developer Relations Engineer, and I’ve been playing with the platform for a few weeks now. In this blog post, I’ll share some things I’ve learned about Platform.sh, how I was able to deploy my first Node.js application, and a few features that I think could be improved.

Manage Ocean GKE Virtual Node Groups using Terraform

Spot by NetApp allows its users to manage their application infrastructure using a variety of provisioning tools. One of these tools is Terraform, an infrastructure as code (IaC) tool that allows users to build, change, and version infrastructure safely and efficiently. Spot by NetApp solutions support multiple Terraform resources, such as Elastigroup, EMR Mr scaler, Managed Instance, and Ocean clusters for different cloud providers, and many more.

Google Cloud's 23 regions for logging, Private Service Connect & more!

How CloudZero Manages Cloud Costs During Our Product Discovery Process

Auto scaling on Azure - Manual, HPA, Cluster Autoscaler

Verify GKE Service Availability with new dedicated uptime checks

Keeping the experience of your end user in mind is important when developing applications. Observability tools help your team measure performance indicators that matter to your users, like uptime. It’s generally a good practice to measure your service internally via metrics and logs which can give you indications of uptime, but an external signal is very useful as well, wherever feasible.

Cloud Agnostic: What Does It Really Mean And Why Do You Need It?

Monitor and troubleshoot your VMs in context for faster resolution

Troubleshooting production issues with virtual machines (VMs) can be complex and often requires correlating multiple data points and signals across infrastructure and application metrics, as well as raw logs. When your end users are experiencing latency, downtime, or errors, switching between different tools and UIs to perform a root cause analysis can slow your developers down.

Archiving Logs directly to AWS for Extended Retention | observIQ

Orchestration in Telcos: the multi-vendor and multi-cloud environments...

As NFV migration becomes commonplace, it is apparent that there is a need for a higher degree of software management, smoother upgrades, and a smoother deployment process. Due to the complexity of the migration, Telcos have been deterred from adoption. A solution should be out there to aid businesses in managing and deploying network automation, orchestration, and managed services. In general, a telco network is complex and needs to be managed from multiple perspectives.

Distributed tracing with OpenTelemetry and Cloud Trace

Product Explainer Video: Splunk Infrastructure Monitoring for Real-time Monitoring in the Cloud

Google Cloud Asset Inventory 101

What Is AWS Auto Scaling? And When Should You Use It?

Dashbird Explained: the why, what and how

Here’s everything you need to know to get started with Dashbird – the complete solution for End-to-End Infrastructure observability, Real-time Error Tracking, and Well-Architected Insights. When working with AWS, one cannot emphasize enough the architectural best practices for designing workloads. One of those best practices is to design the solution in such a way that the monitoring of infrastructure and troubleshooting of errors and problems is achieved effortlessly.

Going Beyond with Hybrid Cloud using CloudHedge - The Best of Both Worlds

Lately, enterprises are moving towards a hybrid solution that offers the best of both worlds. A hybrid cloud setup combines two infrastructures, such as a private cloud with one or more public clouds, and enables communication between each distinct service. To maximize returns, a hybrid cloud strategy equips the enterprise with greater flexibility and control by moving workloads between clouds as costs and resources fluctuate.

Troubleshoot GKE apps faster with monitoring data in Cloud Logging

When you’re troubleshooting an application on Google Kubernetes Engine (GKE), the more context that you have on the issue, the faster you can resolve it. For example, did the pod exceed its memory allocation? Was there a permissions error reserving the storage volume? Did a rogue regex in the app pin the CPU? All of these questions require developers and operators to build a lot of troubleshooting context.

Use log buckets for data governance, now supported in 23 regions

Logs are an essential part of troubleshooting applications and services. However, ensuring your developers, DevOps, ITOps, and SRE teams have access to the logs they need, while accounting for operational tasks such as scaling up, access control, updates, and keeping your data compliant, can be challenging. To help you offload these operational tasks associated with running your own logging stack, we offer Cloud Logging.

6 Strategic Recommendations FP&A Can Make With Cloud Cost Intelligence

Customer Story: Stor.ai

Monitoring for app right-sizing in GKE

Elastigroup now supports step scaling

Together with the release of Spot by NetApp’s Elastigroup support for multiple metrics, we are pleased to share that Elastigroup now also supports step scaling, a new functionality that allows Elastigroup customers to configure multiple actions under a single, simple scaling policy. In Elastigroup, each simple scaling policy contains two parts. The first is the AWS metric, which is the metric the data is collected from.

Elastigroup scaling now supports multiple metrics

Scalability is one of the main reasons why cloud computing has become so popular. Cloud customers can rapidly react to changes in market needs and demands by automatically launching or terminating resources. This way only the exact number of resources required to serve all incoming requests are running (and being paid for) at any given moment.

What Is AWS Sizing? 10+ Best Practices And Tips

Key metrics for monitoring Amazon EFS

Amazon Elastic File System (EFS) provides shared, persistent, and elastic storage in the AWS cloud. Like Amazon S3, EFS is a highly available managed service that scales with your storage needs, and it also enables you to mount a file system to an EC2 instance, similar to Amazon Elastic Block Store (EBS).

Amazon EFS monitoring tools

In Part 1 of this series, we looked at EFS metrics from several different categories—storage, latency, I/O, throughput, and client connections. In this post, we’ll show you how you can collect those metrics—as well as EFS logs—using built-in and external tools.

EFS Monitoring with Datadog

In Part 1 of this series, we looked at the key EFS metrics you should monitor, and in Part 2 we showed you how you can use tools from AWS and Linux to collect and alert on EFS metrics and logs. Monitoring EFS in isolation, however, can lead to visibility gaps as you try to understand the full context of your application’s health and performance.

Trinity Fire & Security Systems Selects iland to Lead Cloud Transformation

Connect your AKS cluster to Ocean using Terraform

Spot by NetApp serves hundreds of customers across industries, with different systems, environments, processes and tools. With this in mind, Spot aims to develop our products with flexibility so that whatever the use case, companies can get the full benefits of the cloud. Spot easily plugs into many tools that DevOps teams are already using, from CI/CD to infrastructure as code, including Terraform.

Lightning-fast scale-out with Ocean for container workloads

Spot Ocean offers best-in-class container-driven autoscaling that continuously monitors your environment, reacting to and remedying any infrastructure gap between the desired and actual running containers. The way this typically plays out is that when there are more containers than underlying cloud infrastructure, Ocean immediately starts provisioning additional nodes to the cluster so the container’s infrastructure requirements will be satisfied.

Welcome to Virtana Optimize

Securing AWS IAM with Sysdig Secure

Last year’s IDC Cloud Security Survey found that nearly 80 percent of companies polled have suffered at least one cloud data breach in the past 18 months.

Debugging Cloud Functions

Why Do You Need to Document Your Microsoft Azure Usage?

End to End Tracking for Azure Integration Solutions

My honest review: I tried AWS Serverless Monitoring using Dashbird.io

As a startup, we always want to focus on the most important thing: delivering value to our customers. For that reason, we are huge fans of the serverless options provided by AWS (Lambda) and GCP (Cloud Functions), as these allow us to maintain and quickly deploy bite-size business logic to production, without having to worry too much about maintaining the underlying servers and computing resources.

Hunting for threats in multi-cloud and hybrid cloud environments

Remote work and its lasting impact: What our global research uncovered

The COVID-19 pandemic has not only had a profound impact on everyone across the globe; it has also fundamentally changed the way organizations function. We are nearing one and a half years since remote work became the norm and organizations had to adapt to this new mode of working almost overnight. This rapid transition wouldn’t have been possible without the massive technology, workflow, and process upgrades undertaken by IT departments.

Secure your infrastructure in real time with Datadog Cloud Workload Security

From containerized workloads to microservice architectures, developers are rapidly adopting new technology to scale their products at unprecedented rates. To manage these complex deployments, many teams are increasingly moving their applications to third party–managed services and infrastructure, trading full-stack visibility for simplified operations.

Best practices for monitoring a cloud migration

When you migrate workloads from on-premise infrastructure into a public cloud, you can improve the performance, reliability, and security of your application, and you might also lower your costs.

How can forecasting help to fine-tune your AWS monthly budget?

The word "forecast" typically brings to mind weather predictions that have evolved through sources like snippets on television, newspaper columns, or the very popular, "Alexa, what's today's weather?" However, a financial forecast is something very different.

Monitoring for efficient cluster binpacking in GKE

New histogram features in Cloud Logging to troubleshoot faster

Visualizing trends in your logs is critical when troubleshooting an issue with your application. Using the histogram in Logs Explorer, you can quickly visualize log volumes over time to help spot anomalies, detect when errors started and see a breakdown of log volumes. But static visualizations are not as helpful as having more options for customization during your investigations.

What is Splunk Security Analytics for AWS?

Introducing the All New Serverless360!

How PagerDuty Helps Manage Hybrid Infrastructure and Complex Ops Across Industries

If there’s one thing we learned from the 80+ sessions from Summit 2021, it’s that across the industries, companies are continuing to accelerate innovation in a bid to meet growing customer expectations of always-on services across all channels. In financial services, disrupting traditional banking or rethinking access to advisory services comes with operational and regulatory challenges.

Elastic recognized for innovation by Google Cloud and Microsoft

Elastic received honors from two key partners, Microsoft and Google — a recognition of our efforts to ensure that customers can easily find and use Elastic products in the environments that best suit their needs. Elastic was named the 2021 Microsoft US Partner Award Winner in Business Excellence in the Commercial Marketplace. In addition, for the second year in a row, Elastic was selected by Google Cloud as the 2020 Technology Partner of the Year for Data Management.

Monitor containerized ASP.NET Core applications on AWS Fargate

The ASP.NET Core framework enables you to build and deploy .NET applications on a wide variety of platforms, each of which has different observability concerns. In a previous post, we looked at monitoring a containerized ASP.NET Core application. In this guide, we’ll show how Datadog provides visibility into ASP.NET Core applications running on AWS Fargate. We’ll walk through.

IGEL Launches the UD Pocket2, a Portable Dual Mode USB Device for Secure End User Computing from Anywhere

Override Jenkins default executor configuration for more control over cloud infrastructure

The Spot Jenkins plugin allows Jenkins to manage Elastigroups, enabling users to run servers with spot instances and take advantage of the other Elastigroup features. It’s a powerful integration that Spot is continuously improving in order to give our users even more control over their application infrastructure.

How to move your VDI workloads to the Public Cloud?

A couple of weeks ago, I had a webinar together with Goliath Technologies about what you need to consider before moving your VDI workloads to Public Cloud. In this blog post I will try to summarize the various stages that should be part of the journey and also some of the pitfalls that you can encounter when moving your VDI workload to Public Cloud.

Automate EKS Node Rotation for AMI Releases

In the daily life of a Site Reliability Engineer, the main goal is to reduce all the work we call toil. But what is toil? Toil is the kind of work tied to running a production service that tends to be manual, repetitive, automatable, tactical, devoid of enduring value, and scales linearly as a service grows. This blog post describes our journey to automate our node rotation process when we have a new AMI release and the open source tools we built for it.

Cycle Podcast | Episode 6 "BDG Web Design" with Brandon Gottier

Why Should My Business Consider Private And Direct Connections To Huawei Cloud?

A Stunning Cloud Mistake Too Many Companies Are Making

4 Cloud Monitoring Capabilities That Really Matter

9 Signs Your Cloud Readiness Isn't What It Needs to Be

Optimize costs in GKE with monitoring systems

5 Tactical Ways To Align Engineering And Finance On Cloud Spend

8 Must-Know Tricks to Use S3 More Effectively in Python

AWS Simple Storage Service (S3) is by far the most popular service on AWS. The simplicity and scalability of S3 made it a go-to platform not only for storing objects, but also for hosting them as static websites, serving ML models, providing backup functionality, and so much more. It became the simplest solution for event-driven processing of images, video, and audio files, and even matured to a de facto replacement of Hadoop for big data processing.

Application view for Azure Serverless Integrations

Application view for Microsoft Azure Resources

How to Drive Innovation and Agility Using Digital Twins Technology?

In our rapidly changing world, product manufacturing organizations are increasingly searching for ways to improve their efficiency in delivering outstanding throughput. Digital Twins is becoming an ideal technology solution for manufacturers and process engineers, helping companies monitor and control operations in real time and even enable machines to learn, improve, and heal themselves.