March 2021

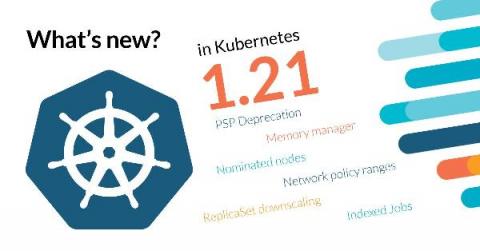

What's new in Kubernetes 1.21?

This release brings 50 enhancements, up from 43 in Kubernetes 1.20 and 34 in Kubernetes 1.19. Of those 50 enhancements, 15 are graduating to Stable, 14 are existing features that keep improving, and a whopping 19 are completely new. It’s great to see old features that have been around since as early as 1.4 finally become GA, for example CronJob, PodDisruptionBudget, and sysctl support.

Kubernetes Master Class - Using Hybrid and Multi-Cloud Service Mesh Based Applications

TeamTNT: Latest TTPs targeting Kubernetes (Q1-2021)

In April 2020, MalwareHunterTeam found a number of suspicious files in an open directory and posted about them in a series of tweets. Trend Micro later confirmed that these files were part of the first cryptojacking malware by TeamTNT, a cybercrime group that specializes in attacking the cloud—typically using a malicious Docker image—and has proven itself to be both resourceful and creative.

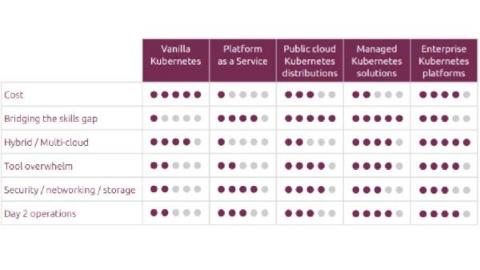

How to choose the best enterprise Kubernetes solution

While containers are known for their multiple benefits for the enterprise, one should be aware of the complexity they carry, especially in large-scale production environments. Having to deploy, reboot, upgrade or apply patches to hundreds and hundreds of containers is no easy feat, even for experienced IT teams. Different types of Kubernetes solutions have emerged to address this issue.

Transforming WebSphere ND on AIX to WebSphere Liberty containers using CloudHedge's App Modernization platform

In my last post (read here), we saw how CloudHedge enables enterprises to execute the transformation of WebSphere ND on Linux Apps to WebSphere Liberty Container in a non-intrusive way. As an addition to the previous post, this one talks about transforming WebSphere ND on AIX to WebSphere Liberty containers using CloudHedge’s App Modernization platform.

Sysdig Adds Unified Threat Detection Across Containers and Cloud to Combat Lateral Movement Attacks

Cloud lateral movement: Breaking in through a vulnerable container

Lateral movement is a growing concern in cloud security. That is, once a piece of your cloud infrastructure is compromised, how far can an attacker reach? In many well-known attacks on cloud environments, a publicly available vulnerable application serves as the entry point. From there, attackers can try to move inside the cloud environment, exfiltrating sensitive data or using the account for their own purposes, like crypto mining.

AWS CIS: Manage cloud security posture on AWS infrastructure

Implementing the AWS Foundations CIS Benchmarks will help you improve your cloud security posture in your AWS infrastructure. What entry points can attackers use to compromise your cloud infrastructure? Do all your users have multi-factor authentication set up? Are they using it? Are you providing more permissions than needed? Those are some questions this benchmark will help you answer. Keep reading for an overview of the AWS CIS Benchmarks and tips to implement them.

Unified threat detection for AWS cloud and containers

Implementing effective threat detection for AWS requires visibility into all of your cloud services and containers. An application is composed of a number of elements: hosts, virtual machines, containers, clusters, stored information, and input/output data streams. When you add configuration and user management to the mix, it’s clear that there is a lot to secure!

Flux Tutorial: Implementing Continuous Integration Into Your Kubernetes Cluster

This hands-on Flux tutorial explores how Flux can be used at the end of your continuous integration pipeline to deploy your applications to Kubernetes clusters.

Getting started with cloud security

It's official - Civo Kubernetes is certified by CNCF

We're very proud to announce that we have been accepted by the Cloud Native Computing Foundation (CNCF) as a conformant Certified Kubernetes provider for our v1.20 Civo Kubernetes product based on K3s. Every small business starts with a goal of competing with the big fish of the industry, and a huge part of that is having the certification to prove you're providing a compatible service. We're now in the company of some really inspirational organisations...

SpeedChat #002: Shift-Left vs. Shift-Right Throwdown - Nate Lee & Ken Ahrens

SpeedChat: Shift-Left vs. Shift-Right Throwdown

Speedscale ‘SpeedChat’ Episode 2: Shift-Left vs. Shift-Right Throwdown featuring Nate Lee (Founder, Speedscale), Ken Ahrens (Founder, Speedscale) and Jason English (Principal Analyst, Intellyx).

How to Perform a Basic Rolling Upgrade of a Kubernetes Cluster

In today’s digital landscape, users expect applications to be available at all times and developers are expected to deploy new versions of these applications several times a day. Both of these expectations can be met by upgrading your Kubernetes cluster. Kubernetes is constantly getting new features and security updates, so your Kubernetes cluster needs to be kept up-to-date as well.

Windows containers on Kubernetes with MicroK8s

Kubernetes orchestrates clusters of machines to run container-based workloads. Building on the success of the container-based development model, it provides the tools to operate containers reliably at scale. The container-based development methodology is popular outside just the realm of open source and Linux though.

VMware Tanzu Advanced Introduction

Introduction to VMware Tanzu Basic

Techworld with Nana: DevOps Tool of the Month

DevOps tool of the month is a series where each month I will introduce one new useful DevOps tool in 2021. For March I chose Shipa: Shipa’s cloud native application management framework makes working with Kubernetes extremely easy for developers.

Kubernetes operators and Open Operator Collection integration - Juju 2.9

Following the Open Operator Collection announcement from November, Canonical is today proud to announce the availability of Juju Operator Lifecycle Manager (OLM) 2.9. This new release of Juju brings new capabilities for Kubernetes operators as well as smooth integration with the Open Operator Collection.

The Future of Qovery - Week #4

During the next seven weeks, our team will work to improve the overall experience of Qovery. We gathered all your feedback (thank you to our wonderful community 🙏), and we decided to make significant changes to make Qovery a better place to deploy and manage your apps. This series will reveal all the changes and features you will get in the next major release of Qovery. Let's go!

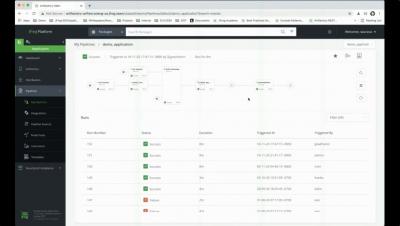

Minimize failed deployments with Argo Rollouts and Smoke tests

Argo Rollouts is a progressive delivery controller created for Kubernetes. It allows you to deploy your application with minimal/zero downtime by adopting a gradual way of deploying instead of taking an “all at once” approach.
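The gradual approach described above can be illustrated with a minimal sketch. This is not Argo Rollouts' API or implementation; the function name, the weight steps, and the `smoke_test` callback are hypothetical and only model the idea of shifting traffic in steps and aborting when a check fails.

```python
from typing import Callable, Iterable, Tuple


def progressive_rollout(
    weights: Iterable[int],
    smoke_test: Callable[[int], bool],
) -> Tuple[bool, int]:
    """Shift traffic to the new version one step at a time.

    Returns (promoted, final_weight). If a smoke test fails, the rollout
    aborts and the new version's traffic weight goes back to 0.
    """
    current = 0
    for weight in weights:
        current = weight  # send `weight` percent of traffic to the new version
        if not smoke_test(current):
            return False, 0  # abort: all traffic returns to the stable version
    # Promoted only if the last step actually reached 100 percent.
    return current == 100, current
```

With steps of 10, 50, and 100 percent, a smoke test that fails at the 50 percent step stops the rollout before most users ever reach the new version, which is the benefit over an "all at once" deployment.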

The Civo roadmap for 2021 - Saiyam Pathak

Civo Online Meetup #7 - Kubernetes security focus

Create On-Demand Kubernetes Clusters for CI/CD With Kind and Codefresh

Wouldn’t it be handy to quickly spin up a Kubernetes cluster for CI testing, on-demand? Interested? If so, read on! Here at Codefresh, many of our customers develop Kubernetes-native applications. A common CI task is to create a Kubernetes cluster to test out deployment processes and integrations. Often, such tests can be greatly simplified if this cluster is ephemeral – that is, it is created on-demand for each build of a test pipeline.
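As a rough sketch of the ephemeral-cluster idea (not the Codefresh pipeline from the article), a test run can wrap cluster creation and deletion around the build. The helper name `ephemeral_cluster` and the injectable `run` parameter are assumptions made for illustration; the only external commands used are `kind create cluster --name` and `kind delete cluster --name`.

```python
import subprocess
from contextlib import contextmanager
from typing import Callable, Iterator, List

Runner = Callable[[List[str]], None]


def _run(cmd: List[str]) -> None:
    # Raise if the command fails so a broken cluster never goes unnoticed.
    subprocess.run(cmd, check=True)


@contextmanager
def ephemeral_cluster(name: str, run: Runner = _run) -> Iterator[str]:
    """Create a kind cluster for the duration of a `with` block."""
    run(["kind", "create", "cluster", "--name", name])
    try:
        yield name
    finally:
        # Always clean up, even if the tests inside the block fail.
        run(["kind", "delete", "cluster", "--name", name])
```

A build step would then look like `with ephemeral_cluster("ci-build-42"): ...`, with the deployment tests running inside the block. Passing a fake `run` makes the helper testable without Docker or kind installed.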

Secure Kubernetes by default with support for GKE Shielded Nodes on Ocean

Security remains a consistent priority for cloud providers to ensure that customers are always protected, data is secure and applications are safe. Users of Google Kubernetes Engine (GKE) are provided with ways to maintain the integrity of the compute instances that applications are running on top of.

Container deployment showdown: Docker or Kubernetes?

Monitoring the current state and performance of applications is critical for IT Ops and DevOps teams alike. Understanding the health of an application is one of the most effective ways of anticipating potential bottlenecks or slowdowns, yet it’s one of the largest challenges faced by many organizations that build and deploy software. This is largely due to applications’ distributed and diversified nature.

Comparing Native FinOps Tools from AWS, Azure & Google

Komodor Product Overview

What's new in Sysdig - March 2021

Welcome to another monthly update on what’s new from Sysdig. Our team continues to work hard to bring great new features to all of our customers, automatically and for free! This month was mostly about compliance and a PromQL Query Explorer! Have a look below for the details. We have added a number of new compliance standards to our compliance dashboards page, making it even easier for our customers to quickly (and continuously!) check how well they’d do in an audit.

How to install Kubeflow on Kubernetes

Modernizing database operations using Google Cloud Anthos and Robin Cloud Native Storage

How to Perform an Advanced Kubernetes Upgrade with Fine-Grained Controls

D2iQ Konvoy provides controls to easily upgrade Kubernetes itself, Kubernetes add-ons, like Prometheus, or the Konvoy CLI independently. Best of all, upgrades can be performed in place without disruption. This tutorial will cover how to upgrade node pools, or specify the upgrade based on specific node pools. We’ll also walk through how to upgrade in parallel, or specify the number of concurrent nodes to upgrade. To get started, take a look at the cluster config.

CircleCI introduces server 3.x to bolster security, compliance for enterprise engineering teams

Civo is coming out of beta! Everything you need to know

It’s with immense pride and excitement we can announce that Civo is coming out of beta! When we planned out our beta program, which we called #KUBE100, our first priority was getting real-world feedback from a wide range of developers, all at various stages in their Kubernetes journey. We wanted to create a Kubernetes platform that plugged the gaps we saw in the industry – speed of deployment, ease of use, and a simple billing model.

Introducing CivoStack: The new Civo Kubernetes platform - Andy Jeffries

Introducing server 3.x: enterprise-focused Kubernetes for self-hosted CircleCI installations

Server 3.x, which is now available to all CircleCI customers, was designed to meet the strictest security, compliance, and regulatory restraints. This self-hosted solution can scale under heavy workloads, all within your team’s Kubernetes cluster on your private network, but with the dynamically scaling cloud experience of CircleCI. Offering an exceptional on-premise service means keeping that service up-to-date with the latest releases.

Top 10 Container Orchestration Tools

Containers have revolutionized how we distribute applications by allowing replicated test environments, portability, resource efficiency, scalability and unmatched isolation capabilities. While containers help us package applications for easier deployment and updating, we need a set of specialized tools to manage them.

Aggregating Application Logs From EKS on Fargate

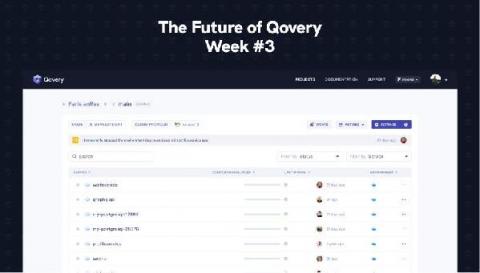

The Future of Qovery - Week #3

During the next eight weeks, our team will work to improve the overall experience of Qovery. We gathered all your feedback (thank you to our wonderful community 🙏), and we decided to make significant changes to make Qovery a better place to deploy and manage your apps. This series will reveal all the changes and features you will get in the next major release of Qovery. Let's go! Read the previous article: The Future of Qovery - Week #2.

Speedchat #001 "Dogfooding and Software Product Management" w/ Leonid, Matt - March 2021

SpeedChat: Product Management and Eating Dog Food

Speedscale ‘SpeedChat’ Episode 1: Discussing software product management, ‘dogfooding’ and scaling quickly for product/market fit without breaking things.

VirtualMetric Webinar Cloud Native Applications on VMware & Kubernetes

Rancher Online Meetup - March 2021 - Rancher KIM

Coffee & Containers - "3 Things You Should Be Doing in Cloud Native in 2021"

How to Deploy a Kubernetes Cluster on Azure

D2iQ Konvoy simplifies the deployment on Azure by providing a command line interface to automate the deployment and operations of Kubernetes clusters all in one place. In this tutorial, we’ll show you the provisioning of an enterprise-grade Kubernetes cluster on Azure using a single command. Before we get started, let’s talk about a few prerequisites you’ll need: First, download the D2iQ Konvoy installer and authenticate it to your Azure account.

3 Things You Should Be Doing in Cloud Native in 2021

As we wrap up the first quarter of 2021, we wanted to talk about things we should be doing as part of a cloud native strategy for the remaining 3/4 of the year. Moving from traditional monolithic architectures to a modern microservices approach has many benefits, but still has the greater majority of us baffled in terms of tapping into its full potential.

Implementing DevSecOps in a Federal Agency with VMware Tanzu

Unifying three distinct teams—development, security, and operations—around a common approach to get application releases to production is challenging. This post explores how Tanzu Labs partnered with a major branch of the Department of Defense (DoD) to build an automated DevSecOps process using VMware Tanzu and several open source tools.

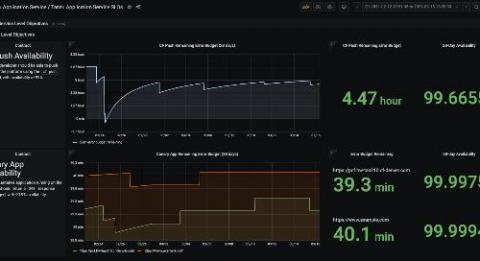

Healthwatch for VMware Tanzu 2.1 Offers Breakthrough Platform Monitoring

Keeping your distributed systems running smoothly has never been easy. To that end, Healthwatch for VMware Tanzu created an “out of the box” option for tracking the health of your app platform. The module proved to be a big upgrade from homegrown monitoring toolchains. Platform teams have since come to rely on Healthwatch’s curated indicators, alerts, and visualizations.

Automating optimization for Azure Kubernetes Service (AKS)

When running AKS clusters, ideally you want the compute infrastructure to adapt to your Kubernetes workload and not the other way around. VMs should automatically match your application requirements all the time without labor-intensive, hands-on management, and of course, your Azure bill should be as low as possible. However, in trying to achieve this ideal, AKS users, and Kubernetes users in general, still face significant operational challenges.

The New Wave of Kubernetes: Introducing Serverless Spark

It’s been six years since Kubernetes v1.0 was released in 2015, and since then it’s become a critical technology foundation to deploy modern, cloud native applications with speed, develop them with agility and scale them with flexibility. With a fast-maturing ecosystem, advancements in tooling are making it possible for a new wave of applications to be deployed on Kubernetes.

Honeypods: Applying a Traditional Blue Team Technique to Kubernetes

The use of honeypots in an IT network is a well-known technique to detect bad actors within your network and gain insight into what they are doing. By exposing simulated or intentionally vulnerable applications in your network and monitoring for access, they act as a canary to notify the blue team of the intrusion and stall the attacker’s progress from reaching actual sensitive applications and data.

Tanzu Observability Named Fast-Moving Leader in GigaOm Cloud Observability Report

We are excited to share that technology research and analysis provider GigaOm has named VMware Tanzu Observability as a fast-moving leader in its forward-looking assessment of the cloud observability vendor space in 2021. Its cloud observability report considered solution connections; data integration and processing; performance management; root cause analysis; and full-stack observability.

ECS Fargate threat modeling

AWS Fargate is a technology that you can use with Amazon ECS to run containers without having to manage servers or clusters of Amazon EC2 instances. With AWS Fargate, you no longer have to provision, configure, or scale clusters of virtual machines to run containers. This removes the need to choose server types, decide when to scale your clusters, or optimize cluster packing. In short, users offload the virtual machines management to AWS while focusing on task management.

Kubernetes Master Class - Thanos and Istio

What is a Docker Container?

The rapid pace of updates and upgrades to operating systems, software frameworks, libraries, and programming language versions, a boon to fast-paced software development, has also come back to bite us, because we have to manage these many dependencies and their different versions across different environments.

App Modernization of WebSphere Applications on Linux to WebSphere Liberty Containers

App Modernization is the way forward, especially when you have hundreds of enterprise WebSphere applications nesting on AIX. These applications are age-old, heavy, and expensive to manage and modernize. This causes a huge roadblock especially when your business is growing and your apps need to be scalable, cost-efficient to run and should be highly available. CloudHedge removes the major barrier to AIX WebSphere containerization using the Automated Application Modernization Platform.

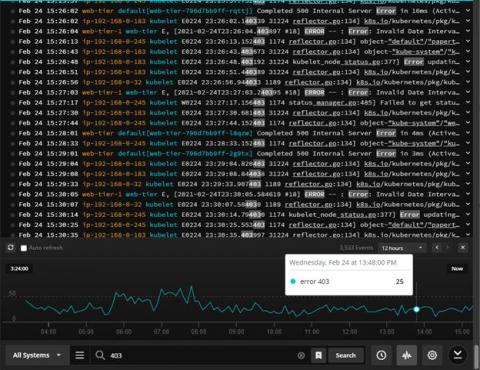

Painless Kubernetes monitoring and alerting

Kubernetes is hard, but let's make monitoring and alerting for Kubernetes simple! At iLert we are creating architectures composed of microservices and serverless functions that scale massively and seamlessly to guarantee our customers uninterrupted access to our services. Like many others in the industry, we rely on Kubernetes when it comes to the orchestration of our services.

Kubernetes Master Class: Declarative Security with Rancher, KubeLinter, and StackRox

Running commands securely in containers with Amazon ECS Exec and Sysdig

Today, AWS announced the general availability of Amazon ECS Exec, a powerful feature to allow developers to run commands inside their ECS containers. Amazon Elastic Container Service (ECS) is a fully managed container orchestration service by Amazon Web Services. ECS allows you to organize and operate container resources on the AWS cloud, and allows you to mix Amazon EC2 and AWS Fargate workloads for high scalability.

How to Deploy a Kubernetes Cluster on AWS

If your organization is planning to use AWS for deploying new releases, the deployment process can be tricky for teams outside of operations to learn and use, especially for those who don’t have expertise in the tooling to automate deployment. And, because there are several ways to deploy Kubernetes on AWS, including Amazon’s own EKS, understanding the different deployment options can be tough to navigate.

Deploying applications to Kubernetes from your CI pipeline with Shipa and CircleCI

Kubernetes can bring a wide collection of advantages to a development organization, but efficiently deploying applications to Kubernetes is something many organizations are still working to perfect. Properly using Kubernetes can significantly improve productivity, empower you to better utilize your cloud spend, and improve application stability and reliability. On the flip side, if you are not properly leveraging Kubernetes, your would-be benefits become drawbacks.

How to Make Smart Decisions When Moving Apps to the Cloud

One of the major considerations when modernizing applications is how and where they’re going to be hosted—what we call landing zones. Today, you have a wide variety of options that includes, at least, some combination of on-prem, public cloud(s), Kubernetes, VMs, PaaS, and bare metal. Because of the dynamic nature of applications and the complexities of enterprise IT budgets, choosing is rarely as simple as just identifying the least expensive option.

The Future of Qovery - Week #2

During the next nine weeks, our team will work to improve the overall experience of Qovery. We gathered all your feedback (thank you to our wonderful community 🙏), and we decided to make significant changes to make Qovery a better place to deploy and manage your apps. This series will reveal all the changes and features you will get in the next major release of Qovery. Let's go!

Kubernetes Master Class - Addressing the Amount of Pull Requests in Rancher

Lesson 7: Kubernetes basics, because we need it.

Lesson 8: What is GitOps and why it's worth your time!

High Availability and etcd management in K3s Kubernetes - Darren Shepherd

Splunking AWS ECS And Fargate Part 3: Sending Fargate Logs To Splunk

Welcome to part 3 of the blog series where we go through how to forward container logs from Amazon ECS and Fargate to Splunk. In part 1, Splunking AWS ECS Part 1: Setting Up AWS And Splunk, we focused on understanding what ECS and Fargate are, along with how to get AWS and Splunk ready for log routing to Splunk’s Data-to-Everything Platform.

Getting started with PromQL - Includes Cheatsheet!

Getting started with PromQL can be challenging when you first arrive in the fascinating world of Prometheus. Since Prometheus stores data in a time-series data model, queries in a Prometheus server are radically different from good old SQL. Understanding how data is managed in Prometheus is key to learning how to write good, performant PromQL queries. This article will introduce you to the PromQL basics and provide a cheat sheet you can download to dig deeper into Prometheus and PromQL.
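To make the contrast with SQL concrete, here is a minimal sketch of the time-series data model: each series is identified by a metric name plus a set of labels and holds timestamped samples. The class and function names are hypothetical, and `per_second_increase` is a deliberate simplification; Prometheus's own `rate()` also handles counter resets and extrapolation.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Series:
    name: str                            # metric name, e.g. http_requests_total
    labels: Dict[str, str]               # label set that identifies the series
    samples: List[Tuple[float, float]]   # (unix timestamp, value) pairs


def select(series: List[Series], name: str, **matchers: str) -> List[Series]:
    """Keep the series whose name and labels match exactly, similar in
    spirit to a selector like http_requests_total{job="api"}."""
    return [
        s for s in series
        if s.name == name
        and all(s.labels.get(k) == v for k, v in matchers.items())
    ]


def per_second_increase(s: Series) -> float:
    """Average per-second increase between the first and last sample."""
    (t0, v0), (t1, v1) = s.samples[0], s.samples[-1]
    return (v1 - v0) / (t1 - t0)
```

The point of the sketch is that a query selects a set of series by labels rather than rows from a table, and functions then operate on each series' samples over time.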

Kubernetes Master Class: A Seamless Approach to Rancher and Kubernetes Upgrades

How to Successfully Deploy Kubernetes Across Multi-Cloud Environments

Today’s enterprise organizations are using some form of multi-cloud infrastructure, and the numbers don’t lie. According to Flexera’s 2020 State of the Cloud report, an average of 2.2 public clouds are being used per enterprise company. And in a different report from the Everest Group, 58% of enterprise workloads are on hybrid or private cloud. The sheer increase in multi-cloud usage illustrates its growing popularity across enterprises.

Shifting Complexities in DevOps

In this episode of ShipTalk, Jim Shilts, Developer Advocate at Shipa and the Founder and President of North American DevOps Group (NADOG), chats with Ravi Lachhman, Evangelist at Harness, on the “Shifting Complexities in DevOps.” Jim has been working on solving engineering efficiency problems for over 20 years, working at firms such as Build Forge and Electric Cloud, pre-dating the inception of Hudson/Jenkins.

Modern Application Development: A Step-by-Step Guide

Every business is looking for ways to win new customers and retain existing ones. To that end, they need to provide a compelling user experience and consistently push new business ideas into the market before their competitors do by running software in production in a way that is fast, secure, and scalable.

Kubernetes Management For Dummies

Detecting and mitigating Apache Unomi's CVE-2020-13942 - Remote Code Execution (RCE)

CVE-2020-13942 is a critical vulnerability that affects the Apache open source application Unomi, and allows a remote attacker to execute arbitrary code. In the versions prior to 1.5.1, Apache Unomi allowed remote attackers to send malicious requests with MVEL and OGNL expressions that could contain arbitrary code, resulting in Remote Code Execution (RCE) with the privileges of the Unomi application.

Secret Management: Codefresh Quick Bites

Gitlab CI/CD from build to production with MicroK8s

Tanzu Talk: DevSecOps in Fed, end to end overview

Comparing Top Container Software Options for 2021

Each day, more and more companies consider opting for cloud-based solutions, and they almost always end up adopting them to some extent. While the increasing popularity of cloud services may be a significant factor in accelerating the adoption rate of cloud-based solutions, some individuals remain skeptical of migrating their applications to the cloud due to unfamiliar territory.

Top 20 Dockerfile best practices

Learn how to prevent security issues and optimize containerized applications by applying a quick set of Dockerfile best practices in your image builds. If you are familiar with containerized applications and microservices, you might have realized that your services might be micro; but detecting vulnerabilities, investigating security issues, and reporting and fixing them after the deployment is making your management overhead macro.

How to Leverage Your Kubernetes Cluster Resources to Run Blazingly Fast and Secure CI/CD Workflows in Just a Few Minutes

This blog will take you on a step-by-step journey to show you how you can leverage your Kubernetes cluster resources to run your CI/CD workflows using the Codefresh hybrid solution. What Is the Codefresh Hybrid Solution and How Does It Work? The Codefresh hybrid solution provides you with a way of running the platform’s workflows on your Kubernetes resources, keeping your private resources safe while enjoying the benefits of a SaaS solution.

Deploying applications to Kubernetes from your CI pipeline with Shipa

Kubernetes can bring a wide collection of advantages to a development organization. Properly using Kubernetes can significantly improve productivity, empower you to better utilize your cloud spend, and improve application stability and reliability. On the flip side, if you are not properly leveraging Kubernetes, your would-be benefits become drawbacks. As a developer, this can become incredibly frustrating when your focus is on delivering quality code fast.

Speedscale 3-Minute Intro: Continuous Resiliency for Cloud-Native Development

What's New with JFrog Artifactory and Xray

Multi-cloud Kubernetes management with Portainer

If you feel intimidated by Kubernetes’ complexity but still need to modernize your business applications with containers, rest assured you’re not the only one. The container orchestration platform solves many problems but also creates new ones, so read on to find out about a new approach that can help you get just the benefits.

Improving Workload Alerts with the VMware Reliability Scanner

With the recent release of the VMware Customer Reliability Engineering (CRE) team’s Reliability Scanner, we wanted to take some time to expand on the namespace label check, including how labelling can be used for alert routing. The Reliability Scanner is a Sonobuoy plugin that allows an end user to include and configure a suggestive set of checks to be executed against a cluster.

Load Balancers, Private Registries, and More: What's New in vSphere with Tanzu U2

vSphere with Tanzu brings together an integrated Kubernetes experience for VI admins and developers. Using vSphere as the infrastructure platform, managing the Kubernetes lifecycle becomes easier than ever. New features in vSphere with Tanzu U2 add more capabilities that make Kubernetes operations even more seamless. Let’s check it out. VMware NSX Advanced Load Balancer (formerly Avi Networks) provides a highly available and scalable load balancer and container ingress services.

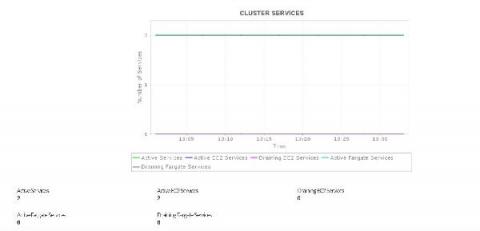

Gain deep insight into ECS cluster performance

Keep track of various tasks and services of containers running within ECS instances.

Fueling 5G with Kubernetes: The next step for telcos

5G is in the process of transforming communications technology, enabling never-before-seen data transfer speeds and high-performance remote computing capabilities.

Azure Kubernetes Service in action

Talking Shipa - "Installing Shipa"

The Future of Qovery - Week #1

During the next ten weeks, our team will work to improve the overall experience of Qovery. We gathered all your feedback (thank you to our wonderful community 🙏), and we decided to make significant changes to make Qovery a better place to deploy and manage your apps. This series will reveal all the changes and features you will get in the next major release of Qovery. Let's go!

Civo: The story so far and our vision for the future - Mark Boost

Unlock Windows Container Visibility in Kubernetes with AppDynamics

Windows containers have a Kubernetes home, but there’s a missing piece — end-to-end monitoring across the full stack. Learn how AppDynamics addresses this gap.

Debugging Development Logs with Papertrail and rKubeLog

It's TIIIIIIME - LIVE Main Event: Ready, Set... Serverless vs. Containers

Pro-Serverless: Forrest Brazeal, AWS Serverless Hero

Pro-Containers: Kevin McGrath, CTO of Spot by NetApp

Panelists: Cheryl Hung, Josh Atwell & Kaslin Fields

Moderator: Greg Knieriemen

http://ntap.com/itstime

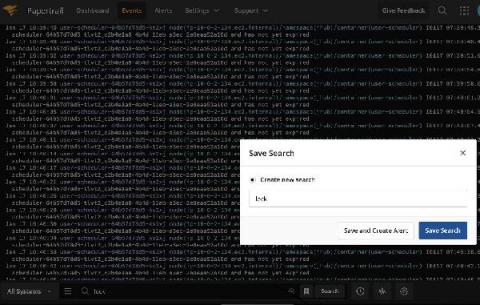

Write Prometheus queries faster with our new PromQL Explorer

We are announcing the new PromQL Explorer for Sysdig Monitor that will help you easily understand your monitor data. The new PromQL Explorer allows you to write PromQL queries faster by automatically identifying the common labels among different metrics. It also allows you to interactively modify the PromQL results by using the visual label filtering.
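The idea of finding the labels that different metrics share can be sketched as a set intersection. This is an illustration only, not Sysdig's implementation; the function name and the input shape (metric name mapped to a list of label sets) are assumptions.

```python
from typing import Dict, List, Set


def common_labels(metrics: Dict[str, List[Dict[str, str]]]) -> Set[str]:
    """Return the label names present on every series of every metric."""
    label_sets = [
        set(labels)               # label names of one series
        for series in metrics.values()
        for labels in series
    ]
    if not label_sets:
        return set()
    return set.intersection(*label_sets)
```

Labels returned by such a function are the ones that can safely be used to join or filter across the selected metrics.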

CloudHedge's Automated App Modernization platform accelerates Chitale's journey from Farm to Fridge.

Start Kubernetes monitoring in 5 minutes with Netdata

Automate Your AWS Lambda Development Cycle

AWS Lambda is a serverless compute service that lets you run code without provisioning or managing servers. It is great if you want to create a cost-effective, on-demand service. You can use it as part of a bigger project where you have multiple services or as a standalone service to do a certain task like controlling an Alexa Skill.

Introduction to k3d: Run K3s in Docker

In this blog post, we’re going to talk about k3d, a tool that allows you to run throwaway Kubernetes clusters anywhere you have Docker installed. I’ve anticipated your questions…so let’s go!

Learn the basics of Kubernetes with Civo KubeQuest - Part One

Detecting MITRE ATT&CK: Privilege escalation with Falco

The privilege escalation category inside MITRE ATT&CK covers quite a few techniques an adversary can use to escalate privileges inside a system. Familiarizing yourself with these techniques will help secure your infrastructure. MITRE ATT&CK is a comprehensive knowledge base that analyzes all of the tactics, techniques, and procedures (TTPs) that advanced threat actors could possibly use in their attacks.

All together now: Bringing your GKE logs to the Cloud Console

Troubleshooting an application running on Google Kubernetes Engine (GKE) often means poking around various tools to find the key bit of information in your logs that leads to the root cause. With Cloud Operations, our integrated management suite, we’re working hard to provide the information that you need right where and when you need it. Today, we’re bringing GKE logs closer to where you are—in the Cloud Console—with a new logs tab in your GKE resource details pages.

Robin.io Presents at Mobile World Congress - Shanghai, China Feb 2021

Tigera to Provide Native Kubernetes Support for Mixed Windows/Linux Workloads on Microsoft Azure

Tigera, in collaboration with Microsoft, is thrilled to announce the public preview of Calico for Windows on Azure Kubernetes Service (AKS). While Calico has been available for self-managed Kubernetes workloads on Azure since 2018, many organizations are migrating their .NET and Windows workloads to the managed Kubernetes environment offered by AKS.

A real-world application deployment on Kubernetes

CEO and Founder, Shipa Corp. We see people talking more and more about Kubernetes these days, and if I have to guess, these conversations will continue to grow. Still, the reality is that most enterprise companies are just starting to explore Kubernetes, or they are at the very early stages of scaling it. As production-grade apps are deployed on Kubernetes, both developers and DevOps teams realize that operationalizing applications on Kubernetes can be way more complicated than expected.

Key metrics for monitoring AWS Fargate

AWS Fargate provides a way to use AWS container orchestration services—Amazon Elastic Container Service (ECS) and Amazon Elastic Kubernetes Service (EKS)—without needing to provision and maintain the infrastructure that runs your containers. Fargate is similar to serverless container platforms from Google (Cloud Run) and Microsoft (AKS virtual nodes).

How to collect metrics and logs from AWS Fargate workloads

In Part 1 of this series, we showed you the key metrics you can monitor to understand the health of your Amazon ECS and Amazon EKS clusters running on AWS Fargate. In this post, we’ll show you how you can use Amazon CloudWatch and related AWS services to gain visibility into your ECS clusters and the Fargate infrastructure that runs them.

AWS Fargate monitoring with Datadog

In Part 1 of this series, we looked at the important metrics to monitor when you’re running ECS or EKS on AWS Fargate. In Part 2 we showed you how to use Amazon CloudWatch and other tools to collect those metrics plus logs from your application containers. Fargate’s serverless container platform helps users deploy and manage ECS and EKS applications, but the dynamic nature of containers makes them challenging to monitor.

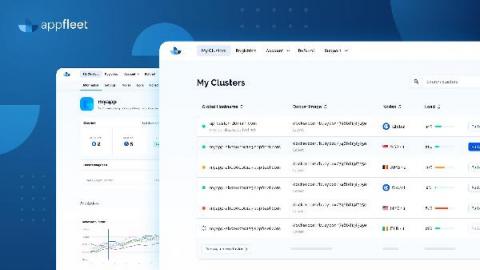

appfleet is now production ready!

First of all, what is appfleet? appfleet is an edge compute platform that allows people to deploy their web applications globally. Instead of running your code in a single centralized location, you can now run it everywhere, at the same time. In simpler terms, appfleet is a next-gen CDN: instead of being limited to only serving static content closer to your users, you can now do the same thing for your whole codebase. Run the whole thing where just your cache used to be.

Managing Docker Images with the JFrog Platform

Coffee & Containers - "Microservices in the Financial Industry"

In this episode of Coffee & Containers, North American DevOps Group’s (https://nadog.com) Jim Shilts speaks with Shipa’s (https://www.shipa.io) Bruno Andrade and Fiserv’s (https://www.fiserv.com) Ken Owens.

Talking Shipa - "Developer Portal"

Talking Shipa - "Application Policy Management"

GitOps in Kubernetes, the easy way-with GitHub Actions and Shipa

Put simply, GitOps is how you do DevOps in Git. You store and manage your deployments using Git repositories as the version control system for tracking changes in your applications or, as everyone likes to say, “Git as a single source of truth.”