October 2021

Calico is celebrating 5 years

October marks the five-year anniversary of Calico Open Source, the most widely adopted solution for container networking and security. Calico Open Source was born out of Project Calico, an open-source project with an active development and user community, and has grown to power 1.5M+ nodes daily across 166 countries. When Calico was introduced five years ago, the world, and technology, looked very different from today.

Kubernetes Monitoring Resources

Heaven knows we could all use some luck these days, and observability may be just the thing we need. But observability isn’t luck, and it isn’t really new either. Few people know that observability is a concept from control theory, which dates back to the 1800s! In this blog post, I’ll cover some of the history of observability vs. …

Introduction to Kubernetes Storage

Clone your production environment instantly

I am super excited to announce that we have released our "clone environment" feature. It is a massive update! With one click, you can duplicate an existing environment. Environment cloning is a feature our customers and users have been requesting for a long time. Thanks to our beta testers and our team for making it available to everyone. Here is a short video showing environment cloning in action.

Tanzu Talk: Securing Apps in Kubernetes with the Tanzu Build Service

Various policy engines for Kubernetes policies - Saiyam Pathak

Understanding the 2021 State of Open Source Report

How are organizations managing security and compliance for open source packages nowadays? As you may recall from our annual State of Kubernetes surveys, security and compliance are always a top concern. Our recent survey, The State of the Software Supply Chain: Open Source Edition 2021, gives some great insight into how people are addressing those concerns. It also gives some guidance on how to build your own policies.

Robin.io and StorCentric Announce Hyperconverged Cloud-Native Solutions for VM-as-a-Service and Virtual Desktops with Lower Cost, Faster Provisioning Than Public Cloud

Forecasting Kubernetes Costs

The benefits of containerizing workloads are numerous and proven. But during infrastructure transformations, organizations run into common, consistent challenges that interfere with accurately forecasting the cost of hosting workloads in Kubernetes. Planning the right CPU and memory reservations before migrating to containers is a persistent issue that we at Densify observe across our customers.

Zero to hero: Enterprise multi-cloud application management from Day 0 to Day 2, on any substrate

Guide To AWS Load Balancers

AWS Elastic Load Balancing (ELB) automatically distributes your incoming application traffic across multiple targets, such as EC2 instances, containers, and IP addresses, in one or more Availability Zones, increasing the availability and fault tolerance of your applications. In other words, as its name implies, ELB distributes frontend traffic across backend servers in a balanced manner.
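As a rough illustration of "balanced" distribution, the Python sketch below hands requests to healthy targets in round-robin order. It is a simplified model, not ELB's implementation: the routing algorithm ELB actually uses depends on the load balancer type and its configuration. The `round_robin` helper and the target IPs are hypothetical.

```python
from itertools import cycle
from typing import Iterator, Sequence, Set


def round_robin(targets: Sequence[str], healthy: Set[str]) -> Iterator[str]:
    """Return an endless iterator that hands out healthy targets in turn."""
    # Drop targets that failed their (hypothetical) health check, keeping order.
    candidates = [t for t in targets if t in healthy]
    if not candidates:
        raise ValueError("no healthy targets to route to")
    # cycle() walks the list and wraps around, so each target gets an equal share.
    return cycle(candidates)


# Hypothetical target IPs spread across three Availability Zones.
targets = ["10.0.1.10", "10.0.2.11", "10.0.3.12"]
healthy = {"10.0.1.10", "10.0.3.12"}  # 10.0.2.11 is failing health checks

picker = round_robin(targets, healthy)
print([next(picker) for _ in range(4)])
# ['10.0.1.10', '10.0.3.12', '10.0.1.10', '10.0.3.12']
```

Skipping the unhealthy target is what ties balancing to the availability and fault-tolerance benefit: traffic keeps flowing to the remaining targets instead of failing.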

Is Kubernetes the answer to the challenges in Multi-access Edge Computing - or is there more to this equation?

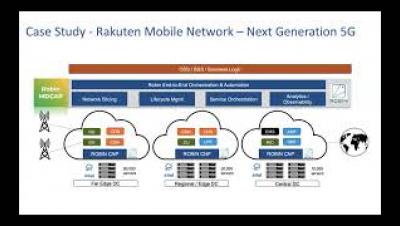

The market for Multi-access Edge Computing (MEC) is projected to be worth $4.25 billion in 2025. The reasons are many: the recent surge in AR/VR gaming, a growing preference for video calling and Ultra-High-Definition (UHD) content, and of course the Internet of Things (IoT), which spawns SmartX applications spanning cities, manufacturing, agriculture, and logistics. Much of the MEC opportunity both drives and is driven by 5G, and the two will grow hand in hand in the years to come.

Robin.io and AirHop Announce Strategic Partnership to Modernize Open RAN Solutions for 4G/5G Networks

Kubernetes Networking: CNI & Network Policies | Kublr Webinar

Civo General Availability Announcement

The 15 Best Container Monitoring Tools For Kubernetes And Docker

How to monitor your Kubernetes metrics server

In this article, we will look at what the Kubernetes metrics server is and what it is used for. We will also learn how to set up a metrics server and use it to monitor Kubernetes metrics. Finally, we will explore how to use hosted Graphite by MetricFire for monitoring Kubernetes metrics.

Building out the Open RAN ecosystem for end-to-end cloud structure deployments at scale

Observability trends 2021

Observability has gained a lot of momentum and is now, rightly, a central component of the microservices landscape. It is an important part of the cloud native world, where you may have many microservices deployed on a production Kubernetes cluster and a growing need to monitor them. In production, quickly finding and fixing failures is crucial, and, as the name suggests, observability plays an important role in discovering those failures.

Metrics for improved Docker container management and performance

When running a cloud service, you never want customers to be the first to notice an issue. Yet over the course of a few months, that is exactly what happened: we began to accumulate a series of reports about unpredictable start-up times for Docker jobs. At first the reports were rare, but their frequency began to increase. Jobs with high parallelism were disproportionately represented in the reports.

Best Practices and Tips for Writing a Dockerfile

Docker is a high-level virtualization platform that lets you package software as isolated units called containers. Containers are created from images that include everything needed to run the packaged workload, such as executable binaries and dependency libraries. Images are defined in Dockerfiles. These resemble sequential scripts that are executed to assemble an image.

The Best Tools for Monitoring Your Docker Container

It can be difficult to understand and successfully scale your services as modern orchestrated environments grow larger and more sophisticated. Container monitoring lets you see the health and performance of your dynamic container infrastructure in real time. It is the practice of collecting and analyzing metrics to track the performance of containerized applications built on cloud-based microservices.

Workload access control: Securely connecting containers and Kubernetes with the outside world

Containers have changed how applications are developed and deployed, with Kubernetes ascending as the de facto means of orchestrating containers, speeding development, and increasing scalability. Modern application workloads with microservices and containers eventually need to communicate with other applications or services that reside on public or private clouds outside the Kubernetes cluster. However, securely controlling granular access between these environments continues to be a challenge.

The What and The Why of Cloud Native Applications - An Introductory Guide

Companies across industries are under tremendous pressure to develop and deploy IT applications and services faster and far more efficiently. Traditional enterprise application development falls short because it is neither efficient nor fast. IT and business leaders are keen to take advantage of cloud computing, as it offers businesses cost savings, scalability at the touch of a button, and the flexibility to respond quickly to change.

Leading Kubernetes Management Tools For 2022

Kubernetes is the leading container-orchestration tool. Google open-sourced it in 2014, and it has since helped engineers across the globe significantly lower their cloud computing costs. Kubernetes also provides a resilient framework for deploying applications. Kubernetes management tools are quickly becoming essential for teams that want to monitor their containers on an ongoing basis, run tests, export data, and create intuitive dashboards.

Announcing $2M THG investment into Civo

Demystifying the complexity of cloud-native 5G network functions deployment using Robin CNP

Ketch and Kubernetes Quickstart

Bulletproofing Your Kubernetes Build

Enterprise Kubernetes use cases: 4 real-world stories

FluxCD and GitOps for enterprises

Why DevOps Tools Do Not Speak Developer Language And How to Overcome This

Installing Additional Modules in the Icinga Web 2 Docker Container

The Docker images we provide for both Icinga 2 and Icinga Web 2 already contain quite a number of modules. For example, the Icinga Web 2 image contains all the Web modules developed by us. But one of the main benefits of Icinga is extensibility, so you might want to use more than what is already included. This might be some third-party module or a custom in-house module.

FluxCD and GitOps in the Enterprise

Flux is an open-source set of tools hosted by the CNCF. It focuses on keeping Kubernetes clusters and cloud-native applications in sync with external resources and definitions hosted in sources such as GitHub. Implementing a tool like FluxCD can bring obvious benefits, and many teams are adopting FluxCD as their tool of choice for GitOps.
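Keeping a cluster "in sync" with definitions in Git comes down to a reconciliation loop: compare the desired state with what is running and compute the changes needed to close the gap. The Python sketch below models one pass of that comparison with plain dictionaries. It is a conceptual illustration, not how Flux is implemented; the `reconcile` function and the resource names are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical model: resource name -> rendered spec (as a plain string).
Manifests = Dict[str, str]


def reconcile(desired: Manifests, actual: Manifests) -> List[Tuple[str, str]]:
    """Compute the actions that would bring `actual` in line with `desired`."""
    actions: List[Tuple[str, str]] = []
    for name in sorted(desired):
        if name not in actual:
            actions.append(("create", name))  # defined in Git, missing in cluster
        elif actual[name] != desired[name]:
            actions.append(("update", name))  # drifted from the Git definition
    for name in sorted(actual):
        if name not in desired:
            # This sketch always prunes; real tools usually make pruning configurable.
            actions.append(("delete", name))
    return actions


desired = {"deployment/web": "replicas=3", "service/web": "port=80"}  # from Git
actual = {"deployment/web": "replicas=2", "configmap/old": "x=1"}     # in cluster
print(reconcile(desired, actual))
# [('update', 'deployment/web'), ('create', 'service/web'), ('delete', 'configmap/old')]
```

Once the actions are applied, running the same comparison again yields an empty list, which is what makes repeated, periodic reconciliation safe.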

Rancher Online Meetup - October 2021 - Introducing Longhorn 1.2

Introducing Codefresh Argo Platform

Getting Started with VMware Tanzu Community Edition

VMware Tanzu Community Edition is a freely available, community-supported, open source distribution of VMware Tanzu that was announced at DevOps Loop 2021.

Designing Open RAN Platforms

Designing Modern 5G and 4G Core Platforms

Faster CI Builds with Docker Remote Caching

What Managed Kubernetes Service is Best for SREs?

A comparison of EKS, AKS, GKE, Rancher and OpenShift from an SRE’s perspective.

Overview: See the VMware Tanzu Application Platform Developer Experience

Live with SUSE Rancher and jFrog

KubeCon Los Angeles 2021 Wrap-Up

After a week in sunny Southern California, KubeCon North America 2021 in Los Angeles is a wrap. For many of us, it was the first in-person event we have attended since the start of the pandemic. This year KubeCon is a hybrid event, so even if you missed the in-person talks, you can still catch the virtual talks online. Compared to KubeCons of years gone by, the event was scaled back slightly to respect social distancing and to ease folks back into in-person conferences.

Silicon Valley Tech Start-up consortium will accelerate Edge monetisation

Live with SUSE Rancher and Speedscale

Live with SUSE Rancher and NGINX

Live with SUSE Rancher and Tigera

Live with SUSE Rancher and Codefresh

Calico Cloud: What's new in October

Calico Cloud is an industry-first security and observability SaaS platform for Kubernetes, containers, and cloud. Since its launch, we have seen customers use Calico Cloud to address a range of security and observability problems for regulatory and compliance requirements in a matter of days and weeks. In addition, they paid only for the services they used rather than committing to an upfront investment, aligning their budgets with their business needs.

One cloud IDE to rule them all - Philippe Charrière, GitLab

Implementing Istio in a Kubernetes cluster

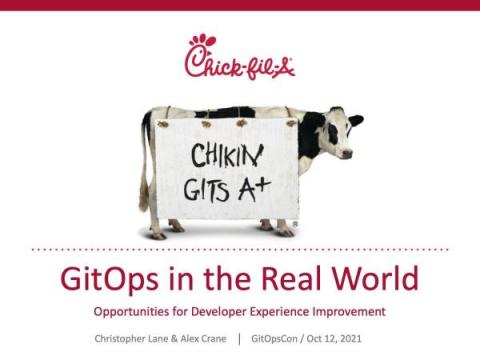

Kubernetes GitOps at Scale from Chick-fil-A

Matt and I are out in Los Angeles this week for KubeCon 2021. At the GitOpsCon event on Tuesday, we were excited to attend this Kubernetes session: GitOps in the Real World: Opportunities for Developer Experience Improvement.

Live with SUSE Rancher and Microsoft

Docker Swarm vs Kubernetes: how to choose a container orchestration tool

Businesses around the world increasingly rely on the benefits of container technology to ease the burden of deploying and managing complex applications. Containers group all necessary dependencies within one package. They are portable, fast, secure, scalable, and easy to manage, making them the primary choice over traditional VMs. But to scale containers, you need a container orchestration tool—a framework for managing multiple containers.

Live with SUSE Rancher and Sysdig

Live with SUSE Rancher and StackState

What if the industry didn't use Docker? - Geoffrey Huntley, GitPod

From Terraform to GitOps to Pulumi

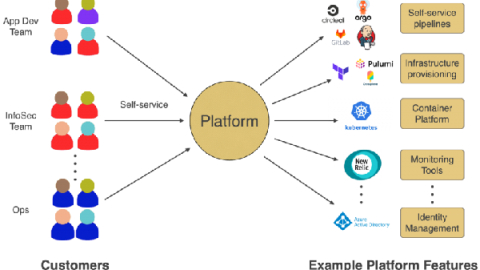

In a previous post, we talked about the increasing adoption of Platform Engineering teams. The post covered topics such as defining Platform Engineering and the roles and responsibilities of the team. When building an internal platform, many teams have a clear goal in mind. Although achieving it is key to a successful platform team, this responsibility increases complexity, costs, support time, and more, not to mention that it can be a long, very long journey.

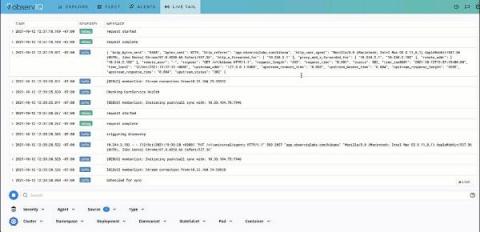

Troubleshooting Pod issues in Kubernetes with Live Tail

With the advent of IaaS (Infrastructure as a service) and IaC (Infrastructure as Code), it is now possible to manage versioning, code reviews, and CI/CD pipelines at the infrastructure level through resource provisioning and on-demand service routing. Kubernetes is the indisputable choice for container orchestration.

Kubespray 2.17 released with Calico eBPF and WireGuard support

Congratulations to the Kubespray team on the release of 2.17! This release brings support for two of the newer features in Calico: support for the eBPF data plane, and also for WireGuard encryption. Let’s dive into configuring Kubespray to enable these new features.

Leveraging Your First GitOps Engine - Flux

Without muddying the waters with yet another prefix in front of "ops", GitOps is a newer DevOps paradigm that slants toward the developer. As the name states, GitOps is centered on Git, the source code management (SCM) tool. For a developer, an SCM is one of the quintessential tools of the trade: it allows for collaboration and, more importantly, keeps your hard work saved somewhere other than your own machine.

General availability announcement

After nearly 2 years of perfecting our product during our beta phase, and just over 5 months since we opened for early access, I have great pleasure in announcing that Civo is now generally available. It has taken us over 4 years to get to where we are today, with a colossal amount of work contributed by our team to build the Civo platform and developer ecosystem. I can honestly say that we're lucky to have so many amazing people at Civo.

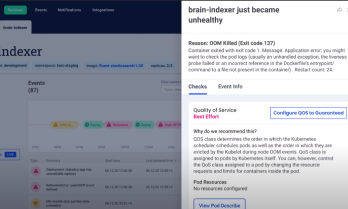

Komodor Workflows: Automated Troubleshooting at the Speed of WHOOSH!

Today, just in time for KubeCon 2021, I am happy to announce the beta availability of Workflows. For me, this is our most exciting product announcement to date: a completely new capability that expands the definition of what Komodor is and charts the course for its next evolution. Let me start with the feature itself. In a nutshell, Workflows is a series of smart algorithms that operate within the “depths” of Komodor.

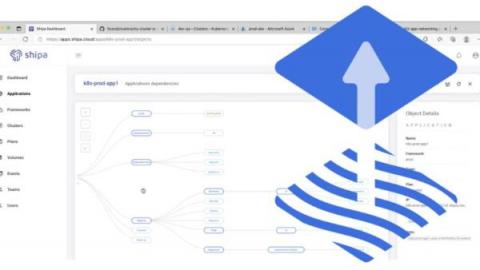

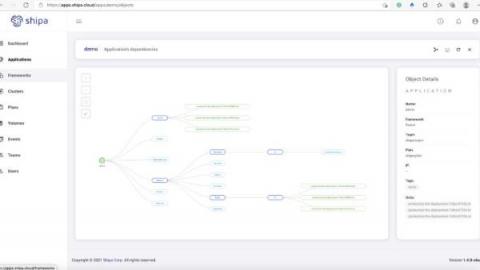

Local Shipa Deployment - k3d/s and Telepresence

Shipa can be deployed on most Kubernetes environments (EKS, GKE, AKS, OKE, Linode, minikube, and so on). How about k3s? Let us try to deploy WordPress on a k3s cluster using Shipa. We are going to use k3d to create the k3s Kubernetes cluster.

Introducing Codefresh Software Delivery Platform powered by Argo

With KubeCon 2021 upon us, we look forward to seeing many exciting announcements from our peers in the open-source DevOps community in the days ahead. Codefresh is honored to make some exciting news of our own. Today we officially unveiled the Codefresh Argo Platform – a fully featured, enterprise-class implementation of Argo.

I reveal how Qovery works under the hood

Transparency is essential when running production infrastructure. As we onboard more and more customers, we strive to make Qovery as open as possible and fully transparent about how it manages your applications. Check out our updated documentation to learn more about how Qovery works.

Announcing Workflows - Fix k8s issues on the fly

Best Practices Guide for Kubernetes Labels and Annotations

Kubernetes is the de facto container-management technology in the cloud world due to its scalability and reliability. It also provides a very flexible and developer-friendly API, which is the foundation of its control plane. The effectiveness of the Kubernetes API comes from how it manages the Kubernetes resources via metadata: labels and annotations. Metadata is essential for grouping resources, redirecting requests and managing deployments.
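To make the "grouping resources" point concrete, the Python sketch below models how an equality-based label selector picks out objects: an object matches when every key=value pair in the selector is present among its labels. It deliberately leaves out set-based expressions (`In`, `NotIn`, `Exists`, `DoesNotExist`), and the `matches`/`select` helpers, pod names, and labels are hypothetical. Annotations are not consulted at all, since Kubernetes does not use them to select objects.

```python
from typing import Dict, List, Mapping


def matches(selector: Mapping[str, str], labels: Mapping[str, str]) -> bool:
    """Equality-based match: every key=value in the selector must appear in labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def select(objects: Mapping[str, Mapping[str, str]], selector: Mapping[str, str]) -> List[str]:
    """Return the names of objects whose labels satisfy the selector, sorted."""
    return sorted(name for name, labels in objects.items() if matches(selector, labels))


# Hypothetical pods and their labels.
pods: Dict[str, Dict[str, str]] = {
    "web-1": {"app": "web", "tier": "frontend"},
    "web-2": {"app": "web", "tier": "frontend", "env": "prod"},
    "db-1": {"app": "db", "tier": "backend", "env": "prod"},
}

print(select(pods, {"app": "web"}))                 # ['web-1', 'web-2']
print(select(pods, {"env": "prod"}))                # ['db-1', 'web-2']
print(select(pods, {"app": "web", "env": "prod"}))  # ['web-2']
```

Because each extra key narrows the match, a small, consistent set of label keys is enough to slice the same pods by application, tier, or environment.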

Application dashboard for FluxCD

Using Thanos to gain a unified way to query over multiple clusters by Wiard van Rij

Self Healing Kubernetes at the edge

10 years of cloud infrastructure with Eric Brewer

What Is Kubernetes Pod Disruption?

Rethinking observability for Kubernetes

Observability is a staple of high-performing software and DevOps teams. Research shows that a comprehensive observability solution, along with a number of other technical practices, positively contributes to continuous delivery and service uptime.

Mapping FluxCD Applications

Provisioning bare metal Kubernetes clusters with Spectro Cloud and MAAS

Bare metal Kubernetes (K8s) is now easier than ever. Spectro Cloud recently published an article about integrating Kubernetes with Canonical MAAS (Metal-as-a-Service), in which they describe how they created a provider for the Kubernetes Cluster API for MAAS. This blog briefly describes the benefits of bare metal K8s, the challenges it presents, and how the work by Saad Malik and the team from Spectro Cloud solves those challenges.

Getting Started With the InfluxDB Template for NGINX Ingress Controller

Today, many of the internet’s busiest websites and applications rely on NGINX to run smoothly. And many of those websites and apps are run as cloud-native services in Kubernetes. In particular, the NGINX Ingress Controller is a best-in-class traffic management solution for cloud‑native apps in Kubernetes and containerized environments that uses NGINX as a reverse proxy, load balancer, API gateway, cache, or web application firewall.

Software development from anywhere with a dash of noyaml.com - Civo Online Meetup #14

Trigger a Kubernetes HPA with Sysdig metrics

In this article, you’ll learn, through an example, how to configure KEDA to deploy a Kubernetes Horizontal Pod Autoscaler (HPA) that uses Sysdig Monitor metrics. KEDA is an open-source project that, among other things, allows using Prometheus queries to scale Kubernetes pods. In "Trigger a Kubernetes HPA with Prometheus metrics", you learned how to install and configure KEDA to create a Kubernetes HPA triggered by a standard Prometheus query.

Trigger a Kubernetes HPA with Prometheus metrics

In this article, you’ll learn how to configure KEDA to deploy a Kubernetes HPA that uses Prometheus metrics. The Kubernetes Horizontal Pod Autoscaler can scale pods based on resource usage, such as CPU and memory. This is useful in many scenarios, but other use cases need more advanced metrics, like the number of waiting connections in a web server or the latency of an API.
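KEDA feeds the chosen metric to a standard HPA, and the HPA then applies the replica calculation documented by Kubernetes: desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), skipping any change when the ratio is within a tolerance of 1.0 (0.1 by default). The Python sketch below reproduces just that rule plus minReplicas/maxReplicas clamping. It leaves out pod readiness handling, stabilization windows, and multi-metric logic, and the `desired_replicas` function and the example metric are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_value: float, target_value: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Core HPA rule: ceil(current_replicas * current_value / target_value)."""
    if target_value <= 0:
        raise ValueError("target_value must be positive")
    ratio = current_value / target_value
    # Close enough to the target: skip scaling to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    proposed = math.ceil(current_replicas * ratio)
    # Respect the HPA's minReplicas / maxReplicas bounds.
    return max(min_replicas, min(max_replicas, proposed))


# Hypothetical metric: average waiting connections per pod, target 100.
print(desired_replicas(3, 150, 100))   # 5  (ceil(3 * 1.5))
print(desired_replicas(4, 105, 100))   # 4  (within the 10% tolerance)
print(desired_replicas(10, 300, 100))  # 10 (capped by max_replicas)
print(desired_replicas(2, 20, 100))    # 1  (ceil(0.4), floored at min_replicas)
```

The same arithmetic applies whether the metric is CPU utilization or a custom Prometheus query; only the source of `current_value` changes.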

Get Up and Running with VMware Tanzu Community Edition in Minutes

Observing container environments with Cloud Operations

What is VMware Tanzu Community Edition?

The History of CI/CD

What's New with VMware Tanzu Observability by Wavefront

VMworld 2021 is upon us. Today, we are announcing several updates that improve VMware Tanzu Observability by Wavefront’s ability to deliver analytics-driven insights for site-reliability engineers (SREs), developers, and platform teams.

How Rapid Iteration with GraphQL Helped Reenvision a Government Payments Platform

When embarking on digital transformation, success often comes down to using the right tools for the job. Emerging technologies have the ability to enable organizations to deliver better customer experiences more efficiently. This truism can serve as a forcing function for engineering teams to routinely reevaluate their tech choices and make sure they aren't missing out on a better solution.

Monitoring Kubernetes with Prometheus

Kubernetes is among the fastest-growing open-source products on the market. It is a portable, extensible, open-source platform for managing containerized workloads and services, and companies are widely adopting it to develop their major products. Docker is commonly used to run Kubernetes on local systems for testing purposes. It becomes essential for companies to monitor their Kubernetes containers.

Better Kubernetes application monitoring with GKE workload metrics

The newly released 2021 Accelerate State of DevOps Report found that teams who excel at modern operational practices are 1.4 times more likely to report greater software delivery and operational performance and 1.8 times more likely to report better business outcomes. A foundational element of modern operational practices is having monitoring tooling in place to track, analyze, and alert on important metrics.

Building a Developer Experience on Kubernetes

Looking forward to KubeCon

KubeCon + CloudNativeCon North America is just around the corner. I’ve been looking forward to this event for a long time, especially since 2020 was virtual and it looks like there will be an in-person option this year. This should be a great event, with a ton of awesome sessions. Last year was simply enormous, with over 15K attendees joining virtually.

Why we founded Komodor? Founders video

Calico on EKS Anywhere

Amazon EKS Anywhere is an official Kubernetes distribution from AWS. It’s a new deployment option for Amazon EKS that allows the creation and operation of on-premises Kubernetes clusters on your existing infrastructure.

Introducing VMware Tanzu Community Edition: Simple, Turnkey Access to the Kubernetes and Cloud Native Ecosystem

Open source is the foundation on which Tanzu stands, and we take inspiration not only from the engineering community, but also from the end-user community that has taken an upstream-first approach to deploy cloud native technologies in production. As with many things Kubernetes-centric, it is still quite challenging to operationalize a pure, clean, open source–only, upstream-aligned platform for enterprise use. We are looking to change that with VMware Tanzu Community Edition.

VMware Tanzu Kubernetes Grid Now Supports GPUs Across Clouds

While AI workloads are becoming more pervasive, challenges with deploying AI have slowed adoption. Blockers like data complexity, data silos, and lack of infrastructure contribute to the difficulty of deploying AI workloads, and to address these issues, organizations need an integrated, scalable, and high-performing solution.

Your First Shipa Cloud Deployment in 5 Minutes

Your First Shipa Cloud Deployment

After registering for Shipa Cloud or installing Shipa on your own infrastructure, you are ready to deploy your first application. The beauty of Shipa is that wherever your application sits on the spectrum from source code to built image, Shipa can help you get it into the wild.

Introducing VMware Tanzu Community Edition

Today is a big day for the Tanzu team at VMware. It’s a day we’ve long been looking forward to, and a day that we’d like to celebrate with you. Just this morning we released VMware Tanzu Community Edition, a freely available, community-supported, open source distribution of VMware Tanzu that you can install and configure in minutes on your local workstation or your favorite cloud. This release is big news for us, and exciting news for you too!

Building a successful Platform Engineering team

We see an increase in the number of companies building internal platform engineering teams. With this increase, we have seen some successful and some not-so-successful approaches. The goal of this article is to go through some of the practices we see out there.

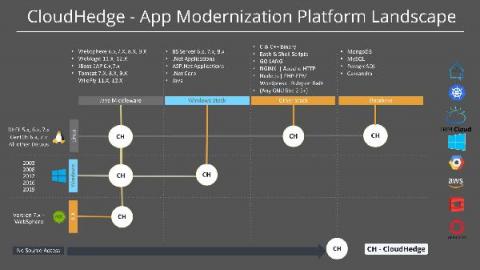

Accelerating App Modernization to AWS within Days using CloudHedge

Applications are the backbone of any digital business! They allow organizations to deliver exceptional customer experiences, innovate at supersonic speeds, and harness the true power of breakthrough technology. However, to truly extract the advantages of business applications, organizations need to ensure that their applications are agile, flexible, cost-efficient, and scalable, and that they support continuous delivery. In short, the applications need to be cloud-ready.
