September 2023

How to deploy Grafana on Kubernetes (Grafana Office Hours #13)

Amplifying Developer Efficiency: Leveraging Internal Developer Platforms for Optimal Performance

New Software Development Concepts You Should Know

Kubernetes Load Testing: Top 5 Tools & Methodologies

Beyond savings: Overlooked aspects of container optimization

DIY or managed suite: Choosing the right AWS container optimization solution

Hands-on Hacking Containers and ways to Prevent it - Civo Navigate NA 23

CFEngine: The agent is in 29 - Basic Docker inventory with CFEngine

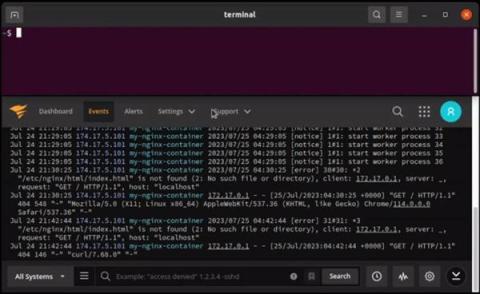

How to SSH into Docker containers

A Docker container is a portable software package that holds an application’s code, necessary dependencies, and environment settings in a lightweight, standalone, and easily runnable form. When running an application in Docker, you might need to perform some analysis or troubleshooting to diagnose and fix errors. Rather than recreating the environment and testing it separately, it is often easier to SSH into the Docker container to check on its health.

Navigating Multi-Cloud Environments: Managing Deployments with Ease

Multi-cloud seems like an obvious path for most organizations, but what isn’t obvious is how to implement it, especially with a DevOps-centric approach. For Cycle users, multi-cloud is just something they do. It’s a native part of the platform and a standardized experience that has led to more than 70% of our users consuming infrastructure from more than one provider.

Unlocking Kubernetes Deployment Excellence with CI/CD Automation

In software development, agility and efficiency are paramount. Continuous Integration and Continuous Deployment (CI/CD) practices have revolutionised the way we build, test, and deploy software. When coupled with the power of Kubernetes, an open-source container orchestration platform, organisations can achieve a level of deployment excellence that was once only a dream.

Reaping the Benefits of Multi-Cluster Orchestration in Kubernetes

The rise of containerization has precipitated an unprecedented shift in the software development landscape, with Kubernetes emerging as the de facto standard for managing large-scale containerized applications. One of the more nuanced aspects of Kubernetes that is gaining attention is multi-cluster orchestration. This approach to cluster management offers several compelling advantages that reshape how businesses operate and innovate in a cloud-native context.

Virtual Kubernetes Clusters - Tips and Tricks - Civo Navigate NA 2023

Failing in the Cloud: How to Turn It Around

Success in the cloud continues to be elusive for many organizations. A recent Forbes article describes how financial services firms are struggling to succeed in the cloud, citing Accenture Research that found that only 40% of banks and less than half of insurers fully achieved their expected outcomes from migrating to cloud. Similarly, a 2022 KPMG Technology Survey found that 67% of organizations said they had failed to receive a return on investment in the cloud.

Automate Kubernetes Platform Operations

Scaling in Kubernetes: An Overview

Kubernetes has become the de facto standard for container orchestration, offering powerful features for managing and scaling containerized applications. In this guide, we will explore the various aspects of Kubernetes scaling and explain how to effectively scale your applications using Kubernetes. From understanding the scaling concepts to practical implementation techniques, this guide aims to equip you with the knowledge to leverage Kubernetes scaling capabilities efficiently.

Rootless Containers - A Comprehensive Guide

Containers have gained significant popularity due to their ability to isolate applications from the diverse computing environments they operate in. They offer developers a streamlined approach, enabling them to concentrate on the core application logic and its associated dependencies, all encapsulated within a unified unit.

How to ensure your Kubernetes Pods have enough memory

Memory (or RAM, short for random-access memory) is a finite and critical computing resource. The amount of RAM in a system dictates the number and complexity of processes that can run on the system, and running out of RAM can cause significant problems. This can be mitigated using clustered platforms like Kubernetes, where you can add or remove RAM capacity by adding or removing nodes on demand.

Operationalizing Kubernetes within DoD - Civo Navigate NA 2023

Cycle's New Interface Part III: The Future is LowOps

We recently covered some of the complex decisions and architecture behind Cycle’s brand new interface. In this final installment, we’ll peer into our crystal ball and glimpse into the future of the Cycle portal. Cycle already is a production-ready DevOps platform capable of running even the most demanding websites and applications. But, that doesn’t mean we can’t make the platform even more functional, and make DevOps even simpler to manage.

[Webinar] Unified container visibility: Managing multi-cluster Kubernetes environments

Optimizing Workloads in Kubernetes: Understanding Requests and Limits

Kubernetes has emerged as a cornerstone of modern infrastructure orchestration in the ever-evolving landscape of containerized applications and dynamic workloads. One of the critical challenges Kubernetes addresses is efficient resource management – ensuring that applications receive the right amount of compute resources while preventing resource contention that can degrade performance and stability.
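
As a rough illustration of requests and limits, the sketch below builds a Pod manifest as a Python dictionary and prints it as JSON, which Kubernetes accepts alongside YAML. The Pod name, image, and resource values are hypothetical placeholders, not recommendations from the article.

```python
import json


def container_with_resources(name, image, cpu_request, mem_request, cpu_limit, mem_limit):
    """Build a container entry that declares resource requests and limits."""
    return {
        "name": name,
        "image": image,
        "resources": {
            # Requests: the amount the scheduler reserves when placing the Pod.
            "requests": {"cpu": cpu_request, "memory": mem_request},
            # Limits: the ceiling enforced on the running container.
            "limits": {"cpu": cpu_limit, "memory": mem_limit},
        },
    }


# Hypothetical example values, chosen only for illustration.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "demo"},
    "spec": {
        "containers": [
            container_with_resources("app", "nginx:1.25", "250m", "128Mi", "500m", "256Mi")
        ]
    },
}

print(json.dumps(pod, indent=2))
```

The printed JSON could be saved to a file and applied with `kubectl apply -f`; suitable values depend on the workload being run.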

Rescue Struggling Pods from Scratch

Containers are an amazing technology. They provide huge benefits and create useful constraints for distributing software. Golang-based software doesn’t need a container in the way Ruby or Python software does, where the container bundles the runtime and dependencies. For a statically compiled Go application, the container doesn’t need much beyond the binary.

10 Best Internal Developer Portals to Consider in 2023

Speed up your Team by Moving your Development Environments to Kubernetes - Civo Navigate NA 2023

Merging to Main #7: Scaling CI/CD Across Languages & Technologies

Using Imposter Syndrome to your Advantage - Civo Navigate NA 2023

What I Learnt Fixing 50+ Broken Kubernetes Clusters - Civo Navigate NA 23

How I cut my AKS cluster costs by 85%

Master Class: Deep Dive with SLE BCI

How to Build an Internal Developer Platform: Everything You Need to Know

Application Dependency Maps: The Secret Weapon for Troubleshooting Kubernetes

Picture this: You're knee-deep in the intricacies of a complex Kubernetes deployment, dealing with a web of services and resources that seem like a tangled ball of string. Visualization feels like an impossible dream, and understanding the interactions between resources? Well, that's another story. Meanwhile, your inbox is overflowing with alert emails, your Slack is buzzing with queries from the business side, and all you really want to do is figure out where the glitch is. Stressful? You bet!

Building a Secure By-Design Pipeline with an Open Source Stack - Civo Navigate NA 23

Database Schema Migrations in Ephemeral Environments: Best Practices

Deploying a multi-availability zone Kubernetes cluster for High Availability

Many cloud infrastructure providers make deploying services as easy as a few clicks. However, making those services high availability (HA) is a different story. What happens to your service if your cloud provider has an Availability Zone (AZ) outage? Will your application still work, and more importantly, can you prove it will still work? In this blog, we'll discuss AZ redundancy with a focus on Kubernetes clusters.

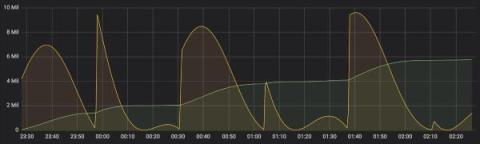

Monitoring Kubernetes tutorial: Using Grafana and Prometheus

Behind the trends of cloud-native architectures and microservices lies a technical complexity, a paradigm shift, and a rugged learning curve. This complexity manifests itself in the design, deployment, and security, as well as everything that concerns the monitoring and observability of applications running in distributed systems like Kubernetes. Fortunately, there are tools to help developers overcome these obstacles.

The Difficulties of Measuring Engineering

“The report is so absurd and naive that it makes no sense to critique it in detail.” - Kent Beck, responding to the McKinsey report. Luckily this was a hollow threat, because a few days later he and fellow blogger Gergely Orosz released a two-part blog series critiquing not exactly McKinsey's report but... any report that tried to put “effort based” metrics at the top of the list for things to track.

What is new in Rancher Desktop 1.10

We are delighted to announce the release of a new version of Rancher Desktop. This release includes significant enhancements to features such as Deployment Profiles, mount types support, and networking proxy configuration, as well as important bug fixes.

Best Practices for TDM Masking with Docker

Our Cloud Cost Optimization Framework That Works

Among our customers, we’ve seen two general approaches to getting optimization done to reduce cloud costs. The first and most popular is “the stick”, where FinOps teams campaign against line-of-business development teams with a mantra of “spend less!”

Announcing the Harvester v1.2.0 Release

Ten months have elapsed since we launched Harvester v1.1 back in October of last year. Harvester has since become an integral part of the Rancher platform, experiencing substantial growth within the community while gathering valuable user feedback along the way. Our dedicated team has been hard at work incorporating this feedback into our development process, and today, I am thrilled to introduce Harvester v1.2.0!

Understanding Monoliths and Microservices - VMware Tanzu Fundamentals

Using Ephemeral Environments for Chaos Engineering and Resilience Testing

How to Gain the Advantages of an Immutable and Self-Healing Infrastructure

Among the benefits D2iQ customers gain by deploying the D2iQ Kubernetes Platform (DKP) is an immutable and self-healing Kubernetes infrastructure. The benefits include greater reliability, uptime, and security, reduced complexity, and easier Kubernetes cluster management. The key to gaining these capabilities is Cluster API (CAPI). DKP uses CAPI to provision and manage Kubernetes clusters, which imposes an immutable deployment model and enables state reconciliation for Kubernetes clusters.

Realtime Collaboration in Kubernetes to Supercharge Team Productivity - Civo Navigate NA 2023

VMware Named an Outperformer in 2023 GigaOm Radar Report for GitOps

VMware is pleased to reveal that we have been named an Outperformer in the 2023 GigaOm Radar Report for GitOps. In the outperformer ring and moving closer to a leadership position, VMware has been placed in the Platform Play and Innovation quadrant.

Feature Flags Management with Ephemeral Environments

Container Network Observability

Microservices on Kubernetes: 12 Expert Tips for Success

In recent years, microservices have emerged as a popular architectural pattern. Although these self-contained services offer greater flexibility, scalability, and maintainability compared to monolithic applications, they can be difficult to manage without dedicated tools. Kubernetes, a scalable platform for orchestrating containerized applications, can help navigate your microservices.

See How Kubernetes Traffic Routes Through Data Center, Cloud, and Internet with Kentik Kube

Kubernetes Management | A Short History of D2iQ

Continuous Verification - It's More Than Just Breaking Things On Purpose - Civo Navigate NA 23

Testing Microservices at Scale: Using Ephemeral Environments

Internal Developer Platform vs. Internal Developer Portal: What to choose?

Running OpenSearch on Kubernetes With Its Operator

If you’re thinking of running OpenSearch on Kubernetes, you have to check out the OpenSearch Kubernetes Operator. It’s by far the easiest way to get going: you can configure pretty much everything, and it has nice functionality, such as rolling upgrades and draining nodes before shutting them down. Let’s get going 🙂

ECS Vs. EC2 Vs. S3 Vs. Lambda: The Ultimate Comparison

Internal Developer Platform vs Internal Developer Portal: What's The Difference?

What is a Service Mesh? And Why Would You Want One?

Monitoring Kubernetes with Graphite

In this article, we will cover how to monitor Kubernetes using Graphite, with Grafana for visualization. The focus will be on collecting and plotting the essential metrics for Kubernetes clusters. We will download, set up, and use custom Kubernetes dashboards from the Grafana dashboard resources. These dashboards have variables that allow drilling down into the data at a granular level.

#90DaysOfDevOps - The DevOps Learning Journey - Civo Navigate NA 23

Kubernetes Logging with Filebeat and Elasticsearch Part 1

This is the first post of a two-part series where we will set up production-grade Kubernetes logging for applications deployed in the cluster and the cluster itself. We will be using Elasticsearch as the logging backend for this. The Elasticsearch setup will be extremely scalable and fault-tolerant.

Kubernetes Logging with Filebeat and Elasticsearch Part 2

In this tutorial, we will learn about configuring Filebeat to run as a DaemonSet in our Kubernetes cluster in order to ship logs to the Elasticsearch backend. We are using Filebeat instead of FluentD or FluentBit because it is an extremely lightweight utility and has first-class support for Kubernetes. It is best for production-level setups. This blog post is the second in a two-part series. The first post runs through the deployment architecture for the nodes and deploying Kibana and ES-HQ.

Day 2 Navigate Europe 2023 Wrap Up

We kicked off Day 2 with our host Nigel Poulton, who gave a quick rundown of the highlights from the first day before giving attendees a taste of what to expect from the rest of the event. Nigel then brought Kelsey Hightower to the stage for his keynote session with Mark Boost and Dinesh Majrekar. If you missed our Day 1 recap, check it out here.

Multi-cluster Failover With A Service Mesh - Civo Navigate NA 2023

Why GDIT Chose D2iQ for Military Modernization

General Dynamics Information Technology (GDIT) is among the major systems integrators that have chosen D2iQ to create Kubernetes solutions for their U.S. military customers. I spoke with Todd Bracken, GDIT DevSecOps Capability Lead for Defense, about the reasons GDIT chose D2iQ and the types of solutions his group was creating for U.S. military modernization programs using the D2iQ Kubernetes Platform (DKP).

Deploying Single Node And Clustered RabbitMQ

RabbitMQ is a messaging broker that helps different parts of a software application communicate with each other. Think of it as a middleman that takes care of sending and receiving messages so that everything runs smoothly. Since its release in 2007, it's gained a lot of traction for being reliable and easy to scale. It's a solid choice if you're dealing with complex systems and want to make sure data gets where it needs to go.

VMware Tanzu Service Mesh Named a Leader in 2023 GigaOm Radar Report on Service Mesh

GigaOm has once again placed VMware Tanzu Service Mesh within the leader ring of its Radar Report on Service Mesh. This year Tanzu Service Mesh has been upgraded to the Outperformer label, moving closer to the center and marking its heightened recognition as an industry leader. This is not only a testament to our robust enterprise capabilities and broad support for various application platforms, public clouds, and runtime environments, but also a validation of our strategic approach.

K3s Vs K8s: What's The Difference? (And When To Use Each)

Using GitOps for Databases

In our previous article about Database migrations we explained why you should treat your databases with the same respect as your application source code. Database migrations should be fully automated and handled in a similar manner to applications (including history, rollbacks, traceability etc).

Monitoring EKS in AWS CloudWatch

As Kubernetes adoption surges across the industry, AWS EKS stands out as a robust solution that eases the journey from initial setup to efficient scaling. This fully managed Kubernetes service is revolutionizing how businesses handle containerized applications, offering agility, scalability, and resilience.

Monitoring Weather At The Edge With K3s and Raspberry Pi Devices - Civo Navigate NA 2023

Getting Started with Cluster Autoscaling in Kubernetes

Autoscaling the resources and services in your Kubernetes cluster is essential if your system is going to meet variable workloads. You can’t rely on manual scaling to help the cluster handle unexpected load changes. While cluster autoscaling certainly allows for faster and more efficient deployment, the practice also reduces resource waste and helps decrease overall costs.

How to keep your Kubernetes Pods up and running with liveness probes

Getting your applications running on Kubernetes is one thing: keeping them up and running is another thing entirely. While the goal is to deploy applications that never fail, the reality is that applications often crash, terminate, or restart with little warning. Even before that point, applications can have less visible problems like memory leaks, network latency, and disconnections. To prevent applications from behaving unexpectedly, we need a way of continually monitoring them.
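
As a hedged illustration of what a liveness probe looks like in a container spec, the sketch below builds one as a Python dictionary. The `/healthz` path, port 8080, image name, and timing values are assumptions chosen for the example, not details from the article.

```python
import json


def http_liveness_probe(path="/healthz", port=8080, initial_delay=5, period=10, failure_threshold=3):
    """Return an HTTP liveness probe definition for a container."""
    return {
        # The kubelet sends an HTTP GET to this path and port.
        "httpGet": {"path": path, "port": port},
        # Wait this many seconds after container start before the first probe.
        "initialDelaySeconds": initial_delay,
        # Probe interval in seconds.
        "periodSeconds": period,
        # Consecutive failures before the container is restarted.
        "failureThreshold": failure_threshold,
    }


# Hypothetical container; the image name is a placeholder.
container = {
    "name": "web",
    "image": "example/web:1.0",
    "livenessProbe": http_liveness_probe(),
}

print(json.dumps(container, indent=2))
```

With these example values, a container whose endpoint fails three consecutive checks, made ten seconds apart, would be restarted by the kubelet.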

Logging in Docker Containers and Live Monitoring with Papertrail

Day 1 Navigate Europe 2023 Wrap Up

Navigate Europe 2023 has come to an end, and we couldn’t be more grateful for everyone involved in this, from our sponsors, attendees, and most importantly, the Civo team. Whilst we have already announced the next event for next year in Austin, Texas, we want to spend some time reflecting on the amazing few days we’ve just had, and everything we took away from it.

Manage Kubernetes environments with GitOps and dynamic config

Most modern infrastructure architectures are complex to deploy, involving many parts. Despite the benefits of automation, many teams still choose to configure their architecture manually, relying on a deployment expert or, in some cases, teams of deployment engineers. Manual configuration opens the door to human error. While DevOps is very useful in developing and deploying software, using Git combined with CI/CD is useful beyond the world of software engineering.

How To Gain Back Your Velocity When Working With Kubernetes - Civo Navigate NA 2023

Simplifying your Kubernetes infrastructure with cdk8s

Kubernetes has become the backbone of modern container orchestration, enabling seamless deployment and management of containerized applications. However, as applications grow in complexity, so do the challenges of managing their Kubernetes infrastructure. Enter cdk8s, a revolutionary toolset that transforms Kubernetes configuration into a developer-friendly experience.

Amazon ECS Vs. EKS Vs. Fargate: The Complete Comparison

Building an E2E Ephemeral Testing Environments Pipeline with GitHub Actions and Qovery

Exploring Kubernetes 1.28 Sidecar Containers

Kubernetes v1.28 comes with multiple new enhancements this year, and we’ve already covered an overview of those in our previous blog. Do check it out before diving into sidecar containers. In this post, we’re going to focus entirely on the new sidecar feature, which enables restartable init containers and is available in alpha in Kubernetes 1.28.
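
To make "restartable init containers" concrete, here is a minimal sketch of a Pod manifest built in Python. An init container with `restartPolicy: Always` is treated as a sidecar; in 1.28 this requires the alpha `SidecarContainers` feature gate to be enabled. The Pod name, container names, and images are hypothetical.

```python
import json


def pod_with_sidecar(name, app_image, sidecar_image):
    """Build a Pod whose init container is declared as a restartable sidecar."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "initContainers": [
                {
                    "name": "sidecar",
                    "image": sidecar_image,
                    # restartPolicy "Always" on an init container marks it as a
                    # sidecar: it starts before the app containers and keeps running.
                    "restartPolicy": "Always",
                }
            ],
            "containers": [{"name": "app", "image": app_image}],
        },
    }


# Hypothetical names and images, for illustration only.
pod = pod_with_sidecar("demo", "example/app:1.0", "example/log-shipper:1.0")
print(json.dumps(pod, indent=2))
```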

Maximizing Efficiency and Collaboration with Top-tier DevOps Services

In today’s fast-paced digital landscape, where software development and deployment happen at lightning speed, DevOps has emerged as the key to achieving operational excellence and maintaining a competitive edge. DevOps is more than just a buzzword; it’s a culture, a set of practices, and a collection of powerful tools that streamline collaboration between development and operations teams.

Understanding Kubernetes Network Policies

Kubernetes has emerged as the gold standard in container orchestration. As with any intricate system, there are many nuances and challenges associated with Kubernetes. Understanding how networking works, especially regarding network policies, is crucial for your containerized applications' security, functionality, and efficiency. Let’s demystify the world of Kubernetes network policies.
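
As a small, hedged example of what network policies look like, the sketch below builds two `networking.k8s.io/v1` NetworkPolicy manifests in Python: one that denies all ingress to Pods in a namespace, and one that allows ingress to selected Pods from Pods with a given label. The namespace, labels, and port are hypothetical.

```python
import json


def default_deny_ingress(namespace):
    """Select every Pod in the namespace and allow no ingress traffic."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        # An empty podSelector matches all Pods; no ingress rules means deny all.
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
    }


def allow_ingress_from(namespace, target_labels, source_labels, port):
    """Allow TCP ingress on one port from Pods matching source_labels."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "allow-from-selected-pods", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": target_labels},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": source_labels}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


# Hypothetical namespace and labels, for illustration only.
policies = [
    default_deny_ingress("shop"),
    allow_ingress_from("shop", {"app": "api"}, {"app": "frontend"}, 8080),
]
print(json.dumps(policies, indent=2))
```

Policies are additive, so the second policy opens one path through the default deny. Enforcement depends on the cluster's network plugin supporting NetworkPolicy.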

Speakers & Sponsors Highlights from Navigate Europe 2023

Cycle's New Interface, Part II: The Engineering Behind Cycle's New Portal

In our last installment, we covered the myriad of new UI changes added to Cycle’s portal. In this part, we walk through five of the tough engineering choices made when developing the new interface, discussing the alternatives that were considered, and shining a light on some of the technology our engineering team utilizes today.

Using Argo CD's new Config Management Plugins to Build Kustomize, Helm, and More

Starting with Argo CD 2.4, creating config management plugins (CMPs) via ConfigMap has been deprecated, with support fully removed in Argo CD 2.8. While many folks have been using their own config management plugins to do things like `kustomize --enable-helm`, or to specify a specific version of Helm, etc., most of them seem not to have noticed that the old way of doing things has been removed until just now!

DKP 2.6 Features New AI Navigator to Bridge the Kubernetes Skills Gap

The latest release of the D2iQ Kubernetes Platform (DKP) represents yet another significant boost to DKP’s multi-cloud and multi-cluster management capabilities. D2iQ Kubernetes Platform (DKP) 2.6 features the new DKP AI Navigator, an AI assistant that enables DevOps to more easily manage Kubernetes environments. As Forbes noted in Addressing the Kubernetes Skills Gap, “The Kubernetes skills shortage is impacting companies across sectors.”

Understand Your Kubernetes Telemetry Data in Less Than 5 Minutes: Try Mezmo's New Welcome Pipeline

Most vendor trials take quite a bit of effort and time. Now, with Mezmo’s new Welcome Pipeline, you can get results with your Kubernetes telemetry data in just a couple of minutes. But first, let’s discuss why Kubernetes data is such a challenge, and then we’ll overview the steps.

Overcoming Shared Environment Bottlenecks

Deploying the OpenTelemetry Collector to Kubernetes with Helm

The OpenTelemetry Collector is a useful application to have in your stack. However, deploying it has always felt a little time consuming: working out how to host the config, building the deployments, etc. The good news is the OpenTelemetry team also produces Helm charts for the Collector, and I’ve started leveraging them. There are a few things to think about when using them though, so I thought I’d go through them here.

How to ensure your Kubernetes Pods have enough CPU

Gremlin's Detected Risks feature immediately detects any high-priority reliability concerns in your environment. These can include misconfigurations, bad default values, or reliability anti-patterns. A common risk is deploying Pods without setting a CPU request. While it may seem like a low-impact, low-severity issue, not using CPU requests can have a big impact, including preventing your Pod from running.
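
The kind of check described here can be approximated with a few lines of Python. This is a simplified sketch of the general idea, not Gremlin's implementation: it inspects a Pod manifest (as a dictionary) and lists the containers that declare no CPU request. The sample manifest is hypothetical.

```python
def containers_missing_cpu_request(pod):
    """Return the names of containers in a Pod manifest with no CPU request."""
    missing = []
    for container in pod.get("spec", {}).get("containers", []):
        requests = container.get("resources", {}).get("requests", {})
        if "cpu" not in requests:
            missing.append(container["name"])
    return missing


# Hypothetical manifest: one container sets a CPU request, one does not.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "demo"},
    "spec": {
        "containers": [
            {"name": "app", "image": "example/app:1.0",
             "resources": {"requests": {"cpu": "250m", "memory": "128Mi"}}},
            {"name": "worker", "image": "example/worker:1.0"},
        ]
    },
}

print(containers_missing_cpu_request(pod))
```

The function looks only at the manifest as written, so any defaults applied by the cluster at admission time are not taken into account.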

When to scale tasks on AWS Elastic Container Service (ECS)

Since its inception, Amazon Elastic Container Service (ECS) has emerged as a strong choice for developers aiming to efficiently deploy, manage, and scale containerized applications on AWS cloud. By abstracting the complexities associated with container orchestration, ECS allows teams to focus on application development, while handling the underlying infrastructure, load balancing, and service discovery requirements.

10 Tips To Reduce Your Deployment Time with Qovery

Using Solr Operator to Autoscale Solr on Kubernetes

In this tutorial, you’ll see how to deploy Solr on Kubernetes. You’ll also see how to use the Solr Operator to autoscale a SolrCloud cluster based on CPU with the help of the Horizontal Pod Autoscaler. Let’s get going! 🙂
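
For intuition about CPU-based autoscaling, the Horizontal Pod Autoscaler's documented scaling rule is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skipped when the ratio is within a tolerance (0.1 by default). The sketch below implements only that rule. It leaves out min/max replica bounds, stabilization windows, and readiness handling, and the numbers used are illustrative.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization, tolerance=0.1):
    """Apply the basic HPA scaling rule to a CPU utilization reading."""
    ratio = current_utilization / target_utilization
    # Within the tolerance band, the autoscaler leaves the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# Illustrative numbers: 3 replicas at 90% CPU against a 60% target.
print(desired_replicas(3, 90, 60))
```

In a real cluster the result would also be clamped to the autoscaler's configured minimum and maximum replica counts.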

The Foundation of Cloud Optimization - Cloud Tagging Strategy

Cloud Tagging should be automated, not left to humans. Optimization in the cloud is actually really simple. Here’s how we get our customers thinking differently, which in turn makes them successful. “To your developers the cloud is like a candy store is to a kid where all the candy is free”. Just as parents need to teach their kids the value of money, you need to teach your developers the value of cloud spend.

Calico monthly roundup: August 2023

Welcome to the Calico monthly roundup: August edition! From open source news to live events, we have exciting updates to share—let’s get into it!

Progressive delivery for Kubernetes Config Maps using Argo Rollouts

Argo Rollouts is a Kubernetes controller that allows you to perform advanced deployment methods in a Kubernetes cluster. We have already covered several usage scenarios in the past, such as blue/green deployments and canaries. The Codefresh deployment platform also has native support for Argo Rollouts and even comes with UI support for them.

Are all Kubernetes services in the cloud the same? Azure Container Apps: Limitations & Awesome Features

Kubernetes is an advanced, open-source technology used to deploy, scale, and manage containerised applications automatically. It offers a strong architecture that enables development and operations teams to effectively manage applications made up of several containers. Kubernetes was created by Google engineers, who released it as open source in 2014; it is now maintained by the Cloud Native Computing Foundation (CNCF). People really like using Kubernetes to manage apps inside containers.

Helm deployments to a Kubernetes cluster with CI/CD

Containers and microservices have revolutionized the way applications are deployed on the cloud. Since its launch in 2014, Kubernetes has become a de-facto standard as a container orchestration tool. Helm is a package manager for Kubernetes that makes it easy to install and manage applications on your Kubernetes cluster. One of the benefits of using Helm is that it allows you to package all of the components required to run an application into a single, versioned artifact called a Helm chart.

Building Organizational Trust for Cloud Optimization Software

You’ve loaded data into Densify, and after reviewing the recommendations your developers don’t want to take the recommended action; they aren’t trusting yet. This is one of the top issues identified by the FinOps Foundation and by many Densify customers, but not all. Let’s explore what some customers do differently to build organizational trust in taking optimization recommendations.

Streamlining Kubernetes Workflows: The Power of CI/CD Integration

In the ever-evolving landscape of software development, where agility and reliability are paramount, Kubernetes has emerged as a game-changer with its container orchestration capabilities. However, as applications become more complex and distributed, managing Kubernetes workflows presents a unique set of challenges.