January 2023

MetTel and Megaport Enable High Performance Applications in the Cloud

Before Megaport, MetTel’s customers battled impaired application performance and deployment delays when moving to the cloud. Now, their connectivity enables high-performance applications in the most distributed cloud environments. Here’s how.

Kubernetes network monitoring: What is it, and why do you need it?

In this article, we will dive into Kubernetes network monitoring and metrics, examining these concepts in detail and exploring how metrics from an application can be transformed into tangible, human-readable reports. The article also includes a step-by-step tutorial on enabling Calico’s integration with Prometheus, a free and open-source CNCF project for metrics-based monitoring and alerting.
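Turning raw application metrics into a human-readable report can be sketched in a few lines of Python. This is a minimal illustration, not Calico's or Prometheus's own tooling. It parses the simple `name{labels} value` lines of the Prometheus text exposition format, skips `#` comment lines, does not handle escaped quotes or `}` inside label values, and sums each metric per node. The metric name `example_dropped_packets_total` and its labels are hypothetical.

```python
import re
from collections import defaultdict

# Simplified sample line: metric_name{label="value",...} value [timestamp]
SAMPLE_RE = re.compile(
    r"^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)"
    r"(?:\{(?P<labels>[^}]*)\})?"
    r"\s+(?P<value>\S+)"
    r"(?:\s+-?\d+)?$"
)
LABEL_RE = re.compile(r'(\w+)="([^"]*)"')

# Hypothetical scrape output; the metric and label names are made up for illustration.
SAMPLE = """\
# HELP example_dropped_packets_total Hypothetical counter of dropped packets.
# TYPE example_dropped_packets_total counter
example_dropped_packets_total{node="node-a",policy="deny-all"} 12
example_dropped_packets_total{node="node-b",policy="deny-all"} 3
example_dropped_packets_total{node="node-a",policy="allow-dns"} 0
"""


def parse_metrics(text):
    """Return (name, labels_dict, value) tuples from exposition-format text."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):  # skip blanks and HELP/TYPE comments
            continue
        match = SAMPLE_RE.match(line)
        if match is None:
            continue  # ignore lines this simplified parser does not understand
        labels = dict(LABEL_RE.findall(match.group("labels") or ""))
        samples.append((match.group("name"), labels, float(match.group("value"))))
    return samples


def summarize(samples, group_label):
    """Sum each metric's values per value of `group_label`."""
    totals = defaultdict(float)
    for name, labels, value in samples:
        totals[(name, labels.get(group_label, "<none>"))] += value
    return dict(totals)


if __name__ == "__main__":
    report = summarize(parse_metrics(SAMPLE), "node")
    for (metric, node), total in sorted(report.items()):
        print(f"{node}: {total:.0f} ({metric})")
```

Running it prints one line per node, the kind of roll-up a dashboard would present. In a real deployment, Prometheus scrapes the endpoint and a query or visualization layer does this aggregation instead.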

Outages Happen. Now What?

Network outages happen more often than you think. We may not experience them directly or even know they're occurring at all. When outages affect household names like Facebook, Amazon, Microsoft, and others, however, we're sure to find out after the fact that there was an issue. Depending on the user's activities and the duration of the issue, stress and frustration levels can vary. When a marketer can’t get that ground-breaking advertisement up on Facebook, they can get antsy.

What are Network Operations Centers (NOCs) and how do NOC teams work?

Modern-day markets are highly competitive, and to build stronger customer relationships, businesses strive to be always available and operational. As a result, they invest heavily in maximizing uptime and in dedicated teams that continuously monitor the performance of the organization's IT resources. In this blog, we will explore what NOC teams are and why they are important.

How to Perform Packet Loss Tests to Prevent Network Issues

What is New in Flowmon 12.2 and ADS 12.1

Our development teams continue to improve Progress Flowmon. The latest update takes the core Flowmon product to version 12.2, while our industry-leading Anomaly Detection System (ADS) moves to version 12.1.

Network and Infrastructure Monitoring - Is Every Tool the Same?

Every organization reaches a certain size where network and infrastructure monitoring becomes a necessity. And while that “certain size” depends on whether you’re running a private company, a non-profit organization, or a government agency, the time to act always comes. Network and infrastructure monitoring tools help organizations get more value from their computing infrastructure, and how you use these tools can even give you a competitive advantage.

Infovista unveils NLA Cloud Platform to unify cloud-native network planning, testing and automated assurance and operations

Microsoft Outage on 25th Jan 2023 MO502273

Microsoft had its corporate earnings call yesterday and posted weaker guidance. But guess what? Several hours later, the tech giant was hit by a networking outage that took down Azure and other services like Teams and Outlook, affecting millions of users globally.

Ask Me Anything: Solving the Top 7 WhatsUp Gold Support Issues

Wireless Troubleshooting Made Easy: How Wi-Fi Monitoring Helps

There is no question that wireless networks are taking over. Offices may still have Ethernet cables running to each cubicle, but they usually go unused. Wi-Fi is the new LAN, and many devices, including tablets, smartphones, and even some laptops, are now wireless-only.

How Quantum Computing Can Better Protect Your Data

Quantum encryption is coming to the cloud. We take a look at the emerging technology that’s changing how we protect our data.

The True Cost of Switching to Auvik

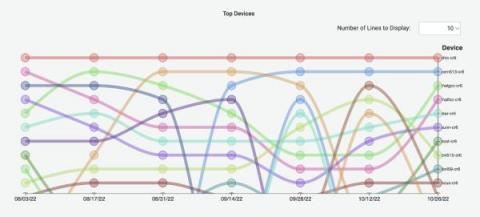

Five eye-catching Grafana visualizations used by Energy Sciences Network to monitor network data

ESnet (Energy Sciences Network) is a high-performance network backbone built to support scientific research. Funded by the U.S. Department of Energy and part of Lawrence Berkeley National Laboratory, ESnet provides fast, reliable connections between national laboratories, supercomputing facilities, and scientific instruments around the globe. Our mission is to allow scientists to collaborate and perform research without worrying about distance or location.

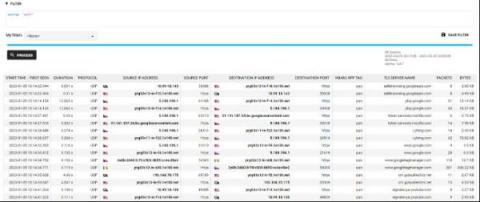

Easily analyze AWS VPC Flow Logs with Elastic Observability

Elastic Observability provides a full-stack observability solution that supports metrics, traces, and logs for applications and infrastructure. In a previous blog, I showed you how to monitor your AWS infrastructure running a three-tier application. Specifically, we reviewed metrics ingest and analysis on Elastic Observability for EC2, VPC, ELB, and RDS.
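To show what VPC Flow Log analysis works with before any platform is involved, here is a minimal Python sketch that parses records in the default (version 2) flow log format and totals bytes per source address for accepted traffic. It assumes the default field order; custom formats are not handled, and records that are not `OK` (such as `NODATA` entries, whose fields are `-`) are skipped. The account ID, interface ID, and addresses in the sample records are made up.

```python
from collections import Counter

# Field order of the default (version 2) VPC Flow Logs record format.
FIELDS = [
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
]

# Made-up records in the default format (the last one is a NODATA record).
SAMPLE_LOGS = [
    "2 123456789012 eni-0example1 10.0.1.10 10.0.2.20 443 49152 6 20 4000 1670000000 1670000060 ACCEPT OK",
    "2 123456789012 eni-0example1 10.0.1.11 10.0.2.20 22 49153 6 5 300 1670000000 1670000060 REJECT OK",
    "2 123456789012 eni-0example1 10.0.1.10 10.0.2.21 443 49154 6 10 1500 1670000000 1670000060 ACCEPT OK",
    "2 123456789012 eni-0example1 - - - - - - - 1670000000 1670000060 - NODATA",
]


def parse_record(line):
    """Parse one space-separated record into a dict, or return None if unusable."""
    parts = line.split()
    if len(parts) != len(FIELDS):
        return None
    record = dict(zip(FIELDS, parts))
    if record["log_status"] != "OK":  # NODATA/SKIPDATA records carry '-' placeholders
        return None
    record["packets"] = int(record["packets"])
    record["bytes"] = int(record["bytes"])
    return record


def top_talkers(lines, action="ACCEPT", n=5):
    """Return the n source addresses with the most bytes for the given action."""
    totals = Counter()
    for line in lines:
        record = parse_record(line)
        if record and record["action"] == action:
            totals[record["srcaddr"]] += record["bytes"]
    return totals.most_common(n)


if __name__ == "__main__":
    for src, total_bytes in top_talkers(SAMPLE_LOGS):
        print(f"{src}: {total_bytes} bytes accepted")
```

At scale, an observability platform handles this parsing and aggregation; the sketch only shows what the individual fields carry.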

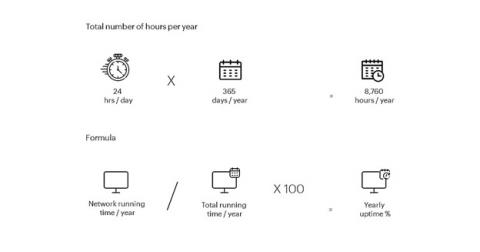

Uptime monitoring: How to track your network availability, 24/7

Understanding Cloud Costs In 2023

Modernizing LinkedIn's Traffic Stack | Sanjay Singh & Sri Ram Bathina

Network Fault Management and Monitoring: Definition, Benefits, and Guide

Can companies afford network breakdowns or downtime in this digital-first era? No, they can't. With digital transformation taking place across industries and rising expectations of staying connected wherever you are, companies need to up their game and ensure uninterrupted network services and high performance. Understanding what network fault management and monitoring are, and the benefits of using a fault management system, can help you manage your network more effectively.

A DWDM Guide: Definition, Benefits, and When You Should Use It

Today’s telecom, cable, and data providers constantly compete to provide the best, most reliable service to customers while investing in the right technologies to maintain the integrity of data and enable the separation of users. Because of this, service providers often spend countless hours searching for cost-effective yet future-proof solutions that fit their business needs. This is where dense wavelength division multiplexing (DWDM) comes in.

What is an NMS?

BICS to power its suite of advanced Software as a Service (SaaS) solutions through Infovista

How to Perform a Network Audit

What Millions of Requests per Second Mean in Terms of Cost & Energy Savings | Willy Tarreau

How Much Does That Minute Cost?

Network outages are both common and expensive, usually far more expensive than people realize. Yes, the network is down and the organization is losing money, but do you really appreciate how much? And do you know how much an outage actually costs per minute? It’s not only more than most people think; it’s also something that can be mitigated fairly easily.
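One common back-of-the-envelope way to put a per-minute figure on an outage is to spread revenue over the minutes the business operates, scale it by the share of revenue that depends on the network, and add the cost of staff who are idled. The Python sketch below does that. It is an illustrative model, not the article's method: every input (annual revenue, operating hours, network-dependent share, affected staff, loaded hourly wage) is a hypothetical placeholder, and indirect costs such as lost customer trust are not modeled.

```python
def cost_per_minute(annual_revenue, operating_hours_per_year, network_dependent_share,
                    affected_staff=0, loaded_hourly_wage=0.0):
    """Rough per-minute outage cost: lost network-dependent revenue plus idle labor.

    All inputs are caller-supplied estimates; indirect costs (reputation,
    SLA penalties, recovery work) are deliberately left out.
    """
    operating_minutes = operating_hours_per_year * 60
    lost_revenue = annual_revenue * network_dependent_share / operating_minutes
    idle_labor = affected_staff * loaded_hourly_wage / 60
    return lost_revenue + idle_labor


if __name__ == "__main__":
    # Hypothetical business: $48M/year revenue, open 2,000 hours/year,
    # half of revenue network-dependent, 100 staff idled at a $60/hour loaded wage.
    per_minute = cost_per_minute(48_000_000, 2_000, 0.5,
                                 affected_staff=100, loaded_hourly_wage=60)
    print(f"Per minute: ${per_minute:,.0f}")
    print(f"30-minute outage: ${30 * per_minute:,.0f}")
```

For these made-up inputs the estimate is $300 per minute, or $9,000 for a 30-minute outage. The point of the exercise is less the exact number than seeing which inputs dominate it.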

SASE: A Long-term Play for Security

Secure Access Service Edge (SASE) is a strong emerging trend in enterprise network security, representing the long-term capability to integrate and consolidate a variety of networking and cybersecurity tools. Let’s take a quick dive into the technology to understand why it’s necessary. SASE emerged as an outgrowth of the software-defined wide-area networking (SD-WAN) movement, which made it easier to configure, orchestrate, and manage WAN connectivity from enterprise branches.

What is a Network Protocol and How Does it Work?

Understanding the Advantages of Flow Sampling: Maximizing Efficiency without Breaking the Bank

The whole point of our beloved networks is to deliver applications and services to real people sitting at computers. So, for us network engineers, monitoring the performance and efficiency of our networks is a crucial part of the job. Flow data, in particular, is a powerful tool that provides valuable insight into what’s happening in our networks, both for ongoing monitoring and for troubleshooting poorly performing applications.
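The basic trade-off of sampled flow data is that the exporter inspects only one in every N packets, and the collector multiplies what it sees by N to estimate the true totals. The Python sketch below illustrates that scaling step using deterministic 1-in-N (systematic) sampling over a synthetic packet list. Real exporters commonly sample randomly instead, and the packet sizes here are made up.

```python
def sample_packets(packet_sizes, rate):
    """Systematic 1-in-`rate` sampling: keep every rate-th packet, starting with the first."""
    return packet_sizes[::rate]


def estimate_totals(sampled_sizes, rate):
    """Scale sampled counts up by the sampling rate to estimate the unsampled totals."""
    return {
        "packets": len(sampled_sizes) * rate,
        "bytes": sum(sampled_sizes) * rate,
    }


# Synthetic traffic: 10,000 packets cycling through three made-up sizes (bytes).
TRAFFIC = [(1500, 576, 64)[i % 3] for i in range(10_000)]

if __name__ == "__main__":
    rate = 100  # inspect 1 packet in 100
    estimate = estimate_totals(sample_packets(TRAFFIC, rate), rate)
    actual_bytes = sum(TRAFFIC)
    error = abs(estimate["bytes"] - actual_bytes) / actual_bytes
    print(f"estimated packets: {estimate['packets']}, actual: {len(TRAFFIC)}")
    print(f"estimated bytes: {estimate['bytes']}, actual: {actual_bytes} ({error:.1%} off)")
```

Inspecting 100 packets instead of 10,000 still lands within about 1.1% of the true byte count here. The caveat is that systematic sampling can be badly biased when traffic has a period that lines up with N (for example, every 100th packet always being a large one). This is one reason randomized sampling is often preferred.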

Megaport Simplifies AWS Outposts Networking

Combining AWS Outposts Rack with Direct Connect optimizes performance for demanding enterprise workloads. Megaport’s Outposts Ready Direct Connect solution simplifies the networking side.

Save 30% of your time with automation

Held for Ransom - Ransomware Detection & Response with Flowmon ADS

How To Optimise Your Cloud Infrastructure In 2023

How to Monitor Distributed Networks

Progress Flowmon Ranked as a Technology Leader in SPARK Matrix 2022 NDR Report

The threat landscape that organizations faced in 2022 and continue to face in 2023 is large, complex, and continuously changing. Defense requires a multi-layered approach that delivers monitoring, detection, and response at many points within on-premises and cloud-based infrastructure and systems. A Network Detection and Response (NDR) solution is critical to a modern cybersecurity defense strategy.

Cuba and the Geopolitics of Submarine Cables

This week marks a decade since the ALBA-1 submarine cable began carrying traffic between Cuba and the global internet. On 20 January 2013, I published the first evidence of this historic subsea cable activation which enabled Cuba to finally break its dependence on geostationary satellite service for the country’s international connectivity. ALBA-1 was one of my first lessons on how geopolitics can shape the physical internet.

What's in Store for NetOps in 2023?

There are many factors making networking both more complicated and more critical than ever. The advent of cloud infrastructure, web-based applications, and increasingly diverse network environments demands a new approach to network operations, or NetOps, as it’s referred to in the industry. Networks are bigger than ever: they now connect everything from automobiles to cloud servers.

Catchpoint Announces the World's First Complete Solution to Monitor and Protect the Internet's Leading Companies from BGP Incidents in Seconds

Data Gravity in Cloud Networks: Massive Data

I spent the last few months of 2022 sharing my experience transitioning networks to the cloud, with a focus on spotting and managing some of the associated costs that aren’t always part of the “sticker price” of digital transformation.

Cyberinsurance NOT a substitute for good policy

Detecting Network Anomalies With Graylog Security

New Year, New BGP Leaks

Only two days into the new year, and we had our first BGP routing leak. It was followed by a couple more in subsequent days. Although these incidents were brief with marginal operational impact on the internet, they are still worth analyzing because they shed light on the cracks in the internet’s routing system.

Standardization & Automation Key for MSPs in 2023

The "New Last Mile" of the Office Network

The NetOps Expert - Episode 7: The Evolution of Networking

Announcing HAProxy Data Plane API 2.7

HAProxy Technologies is proud to unveil the 2.7 release of HAProxy Data Plane API. This release was a huge undertaking, and as with the 2.6 release, we focused on extending support for configuration keywords. We are happy to announce that with this release we support all HAProxy configuration keywords in the Data Plane API. Along with enhanced keyword coverage, we’ve added the ability to specify multiple named defaults sections through the API.

100 Funny Wifi Names For Your Home, Office, or Hotspot

How to Use Redfish Discovery in WhatsUp Gold

Kubernetes and the Service Mesh Era

Kubernetes is a game-changer for enterprise organizations. Automating deployment, scaling, and management of containerized applications allows organizations to embrace a cloud-native paradigm at scale and more easily employ best practices, such as microservices and DevSecOps. But as with all tech, Kubernetes has its limits. Kelsey Hightower famously tweeted that “Kubernetes is a platform for building platforms. It’s a better place to start; not the endgame.”

Static IP addresses for your data infrastructure

Encouraging Employee Movement and Growth Benefits Everyone

From hybrid work opportunities to celebrating milestones, we share best practices from our experience.

Network Security for Banks: Preventing Breaches, Protecting Data

It is no surprise that cybercriminals are after the money, and banks have plenty lying around. They also have gobs of data, making banks irresistible to hackers who have a field day attacking complex banking IT systems flush with more connections than a movie agent. Here are a few recent facts to know.

Datadog Network Device Monitoring (NDM)

The Reality of Machine Learning in Network Observability

For the last few years, the entire networking industry has focused on analytics and mining more and more information out of the network. This makes sense because of all the changes in networking over the last decade. Changes like network overlays, public cloud, applications delivered as a service, and containers mean we need to pay attention to much more diverse information out there.

How to Mitigate Network Risks to Achieve Highly Resilient Business Services

They say change is good. But in IT operations, change is also the number one cause of outages. According to the Uptime Institute, 49% of all service outages are attributed to configuration and change management errors. That's a lot of avoidable headaches. And because errors often have downstream effects, the cause of an outage may not be obvious, which prolongs downtime. That downtime disrupts revenue-generating business services, triggers service level agreement (SLA) penalties, and erodes customer trust. Those costs add up quickly: Gartner estimates the average cost of downtime at $5,600 per minute.
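Applying the two figures quoted above is simple arithmetic, sketched here in Python. The code multiplies outage minutes by Gartner's $5,600-per-minute average. For a hypothetical year of outages, it then estimates the share of that cost attributable to configuration and change errors using the Uptime Institute's 49% figure. The outage durations are invented. Applying an outage-count percentage to cost also assumes that change-related outages last, on average, as long as any other outage.

```python
GARTNER_COST_PER_MINUTE = 5_600  # USD; average downtime cost quoted in the text
CHANGE_RELATED_SHARE = 0.49      # Uptime Institute: share of outages from config/change errors


def downtime_cost(minutes, cost_per_minute=GARTNER_COST_PER_MINUTE):
    """Cost of a single outage at a flat per-minute rate."""
    return minutes * cost_per_minute


def change_related_cost(outage_minutes, share=CHANGE_RELATED_SHARE):
    """Estimate the portion of total downtime cost caused by configuration/change errors.

    Assumes change-related outages last as long, on average, as any other outage,
    so the outage-count share can be applied to cost directly.
    """
    total = sum(downtime_cost(m) for m in outage_minutes)
    return total * share


if __name__ == "__main__":
    yearly_outages = [45, 120, 30]  # hypothetical outage durations in minutes
    total = sum(downtime_cost(m) for m in yearly_outages)
    print(f"Total downtime cost: ${total:,}")
    print(f"Attributable to change errors: ${change_related_cost(yearly_outages):,.0f}")
```

With these made-up durations, 195 minutes of downtime comes to $1,092,000, of which roughly $535,000 would be attributable to change errors under the stated assumption.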

What's Using Your Bandwidth? Here's a Monitoring Tool

Bandwidth monitoring gives IT administrators assurance that the network has sufficient capacity to run business-critical applications. It also gives network ops teams end-to-end visibility to identify the bandwidth hogs that cause congestion. Typically, when a single component in a network is overloaded, it can bring the entire operation to its knees and degrade the employee digital experience. For example, even with a dedicated service plan from your ISP, employees may end up complaining about slow file transfers and sluggish applications.
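A common way for a bandwidth monitor to derive link utilization is to poll an interface's octet counter twice and compare the delta with the link speed. The Python sketch below shows that calculation, including wrap-around handling for a 32-bit counter such as IF-MIB's `ifInOctets`. The counter readings, poll interval, and link speed are hypothetical. On fast links, a real tool would prefer the 64-bit `ifHCInOctets` counter.

```python
COUNTER32_MODULUS = 2**32  # 32-bit counters such as IF-MIB ifInOctets wrap at this value


def octet_delta(previous, current, modulus=COUNTER32_MODULUS):
    """Bytes counted between two readings, tolerating at most one counter wrap."""
    return (current - previous) % modulus


def utilization_percent(previous, current, interval_seconds, link_bps,
                        modulus=COUNTER32_MODULUS):
    """Average utilization of a link over one polling interval, in percent."""
    bits = octet_delta(previous, current, modulus) * 8
    return 100.0 * bits / (interval_seconds * link_bps)


if __name__ == "__main__":
    # Hypothetical readings 60 s apart on a 100 Mbit/s link; the counter wrapped in between.
    pct = utilization_percent(4_294_000_000, 450_000_000, 60, 100_000_000)
    print(f"Utilization: {pct:.1f}%")
```

The example reports about 60.1% utilization despite the wrap. The modulo trick only holds if at most one wrap occurs between polls: at 1 Gbit/s, a 32-bit octet counter wraps in roughly 34 seconds, which is why 64-bit counters matter on fast links. Utilization shows *which* links are congested. Identifying *who* is consuming the bandwidth typically requires flow data on top.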

Business Benefits of Network Detection and Response (NDR)

When we talk about the business value of a tool or a system that at first glance may seem like a “nice to have” or a “helpful but not absolutely necessary” technology, it is a good idea to start any discussion on the merits of the tool by putting some things into perspective.