August 2023

PagerDuty Expands Generative AI Offerings and Enhances Analytics Capabilities - PagerDuty

Using generative AI to improve customer support

We’ve all been there: at that frustrating moment when you have a problem or an urgent need and the customer support line is busy. Endless searching for answers turns up nothing that matches your predicament. That’s the friction ServiceNow teams are diving into headfirst to provide each of our customers satisfactory answers with promptness that matches the urgency they feel. Generative AI is helping the teams improve customer support at a rapid pace.

Democratize Automation with AI-Generated Runbooks

Operational efficiency is as critical within the IT and engineering teams as any other part of the business. Automating repetitive tasks and reducing escalations within and to these teams is of immense value. While automation saves time and boosts productivity, the complexity of developing automation can be a limiting factor and bottleneck. Generative AI is a paradigm shift here, in that it brings consumer-style simplicity to assisting in the development of enterprise-grade automation.

Monitor Google Cloud Vertex AI with Datadog

Vertex AI is Google’s platform offering AI and machine learning computing as a service—enabling users to train and deploy machine learning (ML) models and AI applications in the cloud. In June 2023, Google added generative AI support to Vertex AI, so users can test, tune, and deploy Google’s large language models (LLMs) for use in their applications.

Better Together: Creating an AI-friendly Culture to Optimize Business Outcomes

Are business outcomes, with the potential to make or break an organization’s future, becoming more important than they’ve ever been before? It sure seems that way. Embarking on the journey of business growth despite a treacherous path of challenges, all departments are champing at the bit for stability, solid strategies, and a reliable plan for what’s ahead.

AI in Customer Service: The Future of Support - Infraon

Beyond the Hype: The Power of AI and Large Language Models in Networking

Artificial intelligence is certainly a hot topic right now, but what does it mean for the networking industry? In this post, Phil Gervasi looks at the role of AI and LLMs in networking and separates the hype from the reality.

LLMs explained: how to build your own private ChatGPT

Unraveling The Potential: The benefits of Generative AI in IT Departments

In this article, we delve into the extraordinary world of Generative AI and its profound impact on IT departments. As technology evolves at an unprecedented pace, IT professionals face the tremendous challenge of keeping up with complex systems and demanding tasks. However, with AI by their side, IT teams can unlock a realm of possibilities, empowering them to optimize performance and streamline operations. Join us as we explore the potential of Generative AI, uncovering its ability to revolutionize IT departments and ultimately, unleash unprecedented levels of productivity and efficiency.

Tech Trends: Discover 5 Cutting Edge Technologies Shaping Our Future

The Top 4 Use Cases for Generative AI in Customer Experience

6 Underutilized Ways to Use AI in Customer Service in 2023

How AI Is Challenging Cybersecurity Efforts

Generative AI at Grafana Labs: what's new, what's next, and our vision for the open source community

As you’d imagine, generative AI has been a huge topic here at Grafana Labs. We’re excited about its potential role in bridging the gap between people and the beyond-human scale of observability data we work with every day. We’ve also been talking a lot about where open source fits in — especially if that Google researcher is right and OSS will outcompete OpenAI and friends. What role can we play to bring the community along?

Unlocking Customer Service's Full Potential with Generative AI - Infraon

When Two Worlds Collide: AI and Observability Pipelines

The Unplanned Show, Episode 10: Mitra Goswami on Generative AI

AI and automation: Unlocking job opportunities for Australia's economy

As the Australian workforce braces for increased AI and automation, individuals must adapt by acquiring new skills. In doing so, Australians can expand the impact of their work, embark on more fulfilling career paths, and increase their income potential. Recent ServiceNow-commissioned research from Pearson points to major changes in the workforce—in Australia and around the world—due to the implementation of AI and other technologies.

Demo: Adding AI to the code blocks

AI and Big Data Solutions

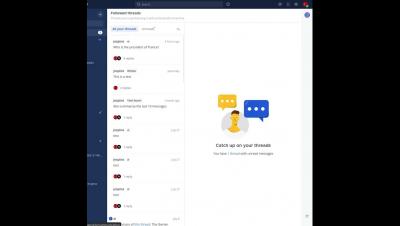

Demo: AI Summarization of new messages in channels and threads

Behind the Scenes: Mattermost OpenOps AI Mindmeld | August 22, 2023

The Future of Large Language Models (LLMs) in Transforming Industries

Large Language Models (LLMs) have emerged as a powerful force capable of reshaping industries across the board. From small startups to multinational corporations, organizations are actively experimenting with LLMs, recognizing their potential to disrupt the market. This blog explores the predictions from major industry leaders regarding the future of LLMs and provides insights on how businesses can leverage this technology to gain a competitive edge.

Zebra Technologies Developer Conference to Showcase Advances in AI, Mobility and UX

AI-Powered Knowledge Base: Why Do You Need One?

In the rapidly evolving landscape of AI applications, a knowledge base, especially an AI-powered one, has become crucial to harnessing the full potential of generative AI and integrating it into customer service.

Revolutionizing IT Monitoring with AIOps and generative AI

Behind the Scenes: Mattermost OpenOps AI Mindmeld | August 17, 2023

What Is AI Monitoring and Why Is It Important

Artificial intelligence (AI) has emerged as a transformative force, empowering businesses and software engineers to scale and push the boundaries of what was once thought impossible. However, as AI is accepted in more professional spaces, the complexity of managing AI systems seems to grow. Monitoring AI usage has become a critical practice for organizations that want to ensure optimal performance and resource efficiency and to provide a seamless user experience.

The Growing Adoption of LLMs in Production in the Enterprise

In today's fast-paced business landscape, Large Language Models (LLMs) have emerged as powerful tools with unimaginable potential, revolutionizing various industries and driving innovation and efficiency. In this blog, we will delve into the enterprise use cases of LLMs, highlighting how companies like Walmart, Stellantis, and Commvault are leveraging this technology to enhance customer experiences, streamline processes, and democratize content creation.

Generative AI and Observability Automation - Sajid Mehmood & Michael Gerstenhaber

Behind the Scenes: Mattermost OpenOps AI Mindmeld | August 10, 2023

Behind the Scenes: Mattermost OpenOps AI Mindmeld | August 15, 2023

4 ways to master employee growth and development with AI

Amid the whirlwind of today's job market and ever-evolving economy, one critical key to an organization's success emerges: empowering employees with growth opportunities that both entice top talent and secure the future of your business. As millennials and Gen Z progressively make up more of the workforce, investing in their career growth has become crucial to the future health of any organization.

Demo: Summarizing all unread messages in a channel with one click | August 14, 2023

Demo: New admin console configuration for the Mattermost AI Plugin | August 11, 2023

Chatbots vs. Conversational AI: Unveiling the Differences and Impact in Customer Service - Infraon

Write a Spark big data job with ChatGPT

AI With a Purpose: Top Moves for Strategically Applying IT Automation

Artificial intelligence (AI) isn’t the only thing at the heart of what organizations are doing to keep up with digital transformation and drive business growth. People are, too. Development of AI actually began about 40 years ago, but generative AI (genAI) has been around for much less time. The explosion of genAI has brought about an everlasting, first-of-its-kind innovation that’s accessible to just about everyone.

Maximizing Coding Productivity with Large Language Models

AI-Powered Ticket Automation: Revolutionizing Customer Support and Efficiency - Infraon

Why Andy Warhol would like - and dislike - AI

BigPanda's Resources for Navigating Change Through the AI Revolution

AI has revolutionized the way we engage online in 2023. From ChatGPT and AI art generators to healthcare, finance, and business, you can hardly read the news without reading the latest proclamation of how AI is poised to change every aspect of our lives. AI has brought fundamental changes to how we live and work, and we’re still scrambling to understand the impacts of these changes. Especially where their work is concerned, change can be difficult for people to embrace.

Operationalizing AI: MLOps, DataOps And AIOps

Combine Copilot and JFrog Artifactory for Maximum Efficiency

IDC Market Perspective published on the Elastic AI Assistant

IDC published a Market Perspective report discussing implementations to leverage Generative AI. The report calls out the Elastic AI Assistant, its value, and the functionality it provides. Of the various AI Assistants launched across the industry, many of them have not been made available to the broader practitioner ecosystem and therefore have not been tested. With Elastic AI Assistant, we’ve scaled out of that trend to provide working capabilities now.

Behind the Scenes: Mattermost OpenOps AI Mindmeld | July 27, 2023

Behind the Scenes: Mattermost OpenOps AI Mindmeld | August 3, 2023

Top tips: AI fatigue is a thing - navigating through AI challenges

Top tips is a weekly column where we highlight what’s trending in the tech world today and list out ways to explore these trends. This week we take a look at the effect of AI-related over-saturation and show you four ways to work around it.

Integration roundup: Monitoring your AI stack

Integrating AI, including large language models (LLMs), into your applications enables you to build powerful tools for data analysis, intelligent search, and text and image generation. There are a number of tools you can use to leverage AI and scale it according to your business needs, with specialized technologies such as vector databases, development platforms, and discrete GPUs being necessary to run many models. As a result, optimizing your system for AI often leads to upgrading your entire stack.

Introducing Bits AI, your new DevOps copilot

Business-critical infrastructure and services generate massive volumes of observability data from many disparate sources. It can be challenging to synthesize all this data to gain actionable insights for detecting and remediating issues—particularly in the heat of incident response.

AI in Customer Service: Revolutionizing the Helpdesk with 10 Cutting-Edge Examples

Crafting Prompt Sandwiches for Generative AI

Large Language Models (LLMs) can give notoriously inconsistent responses when asked the same question multiple times. For example, if you ask for help writing an Elasticsearch query, sometimes the generated query may be wrapped in an API call, even though you didn’t ask for it. This sometimes subtle, other times dramatic variability adds complexity when integrating generative AI into analyst workflows that expect specifically formatted responses, like queries.
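
To make the formatting problem concrete, here is a minimal Python sketch of one defensive step a workflow might take: pulling the JSON query body out of a response whether or not the model wrapped it in an API call. This is an illustration under stated assumptions, not the approach described in the article. The function name `extract_query_body`, the index name `my-index`, and the sample responses are all hypothetical.

```python
import json


def extract_query_body(response: str) -> dict:
    """Return the first JSON object found in an LLM response.

    Leading text, such as a hypothetical "GET /my-index/_search" line,
    is skipped. Raises ValueError if no JSON object can be decoded.
    """
    decoder = json.JSONDecoder()
    start = response.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value beginning at index `start`
            # and ignores whatever text follows it.
            obj, _end = decoder.raw_decode(response, start)
            return obj
        except json.JSONDecodeError:
            # Not valid JSON from this brace; try the next one.
            start = response.find("{", start + 1)
    raise ValueError("no JSON object found in response")


# Two hypothetical responses to the same request: one bare, one wrapped.
bare = '{"query": {"match": {"user": "alice"}}}'
wrapped = "GET /my-index/_search\n" + bare

# Both normalize to the same query body.
assert extract_query_body(bare) == extract_query_body(wrapped)
```

Normalizing after the fact only covers one kind of variability; it complements, rather than replaces, prompting the model for a consistent format in the first place.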