September 2021

Using Helm with GitOps

This is the first of many posts highlighting GitOps topics that we’ll be exploring. In this post, we explore Helm, a Kubernetes package manager that also provides templating and utilities to assist application deployment. To clarify how Helm charts map to Kubernetes manifests, we explain the details below, along with how to use Helm with and without GitOps.

Shipa Cloud with Your Minikube Cluster

Embracing any new technology stack can certainly be a journey. Whether this is your first time using Kubernetes or you have been on the Google Borg team, getting up and running with Shipa Cloud is a breeze. Bring your own Kubernetes cluster, sign up for a Shipa Cloud account, and you are well on your way to Application as Code excellence. Minikube is a free way to take a look at Shipa Cloud.

Qovery raises $4m to build the future of the Cloud

I am thrilled to announce that we have raised a $4M seed round with top-notch investors. Join us in building the future of the Cloud - we are hiring! The round is led by Crane and joined by Speedinvest, with participation from Techstars and angels including Alexis Le-Quoc (CTO and co-founder at Datadog) and Ott Kaukver (CTO at Checkout, ex-CTO at Twilio).

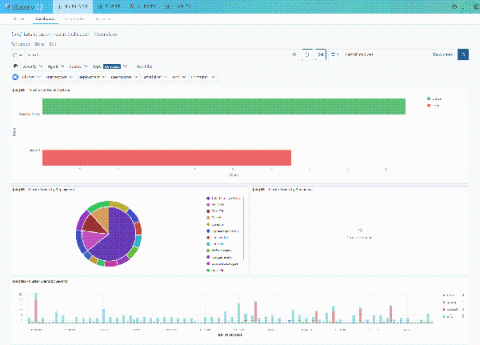

What's new in Sysdig - September 2021

Welcome to another monthly update on what’s new from Sysdig! Happy Janmashtami! Shanah Tovah! 中秋快乐! With lockdown lifting by varying degrees across the world, we hope you had a safe but pleasant holiday! It has certainly been long overdue. Here at Sysdig, we celebrated Labor Day in the USA with an extended weekend and a well-being day for the team.

Fireside Chat: Going GitOps with Argo

Lightning-fast Kubernetes networking with Calico & VPP

Public cloud infrastructures and microservices are pushing the limits of resources and service delivery beyond what was imaginable until very recently. In order to keep up with the demand, network infrastructures and network technologies had to evolve as well. Software-defined networking (SDN) is the pinnacle of advancement in cloud networking; by using SDN, developers can now deliver an optimized, flexible networking experience that can adapt to the growing demands of their clients.

Import ECS Fargate into Spot Ocean

AWS Fargate is a serverless compute engine for containers that works with both Amazon Elastic Container Service (ECS) and Amazon Elastic Kubernetes Service (EKS). With Fargate handling instance provisioning and scaling, users don’t have to worry about spinning up instances when their applications need resources. While this has many benefits, it also has its share of challenges, which can limit its applicability across a wide variety of use cases.

Demystifying Kubernetes RBAC

A Zero Trust Approach to Enterprise Kubernetes Deployment

Heroku vs AWS: What is the cheapest for your startup?

In today's digital age, the internet and computer technologies have become a part of our lives. Organizations are moving their applications to the cloud to gain benefits of flexibility and lower costs. Heroku and AWS are two popular cloud service providers. AWS is a cloud services platform offering computing power, database storage, content delivery, and many other functionalities. Users can choose individual features and services as required.

Troubleshooting vs. Debugging

The life of a developer these days is more complicated than ever, as they are increasingly required to expand their knowledge across the stack, understand abstract concepts, and own their code end-to-end. A major (and very frustrating) part of a developer’s day is dedicated to fixing what they’ve built – scouring logs and code lines in search of a bug. This search becomes even harder in a distributed Kubernetes environment, where the number of daily changes can be in the hundreds.

People over process - Civo DevOps Bootcamp 2021

Implementing a dashboard for your Kubernetes applications

How to Build and Run Your Own Container Images

The rise of containerization has been a revolutionary development for many organizations. Being able to deploy applications of any kind on a standardized platform with robust tooling and low overhead is a clear advantage over many of the alternatives. Viewing container images as a packaging format also allows users to take advantage of pre-built images, shared and audited publicly, to reduce development time and rapidly deploy new software.

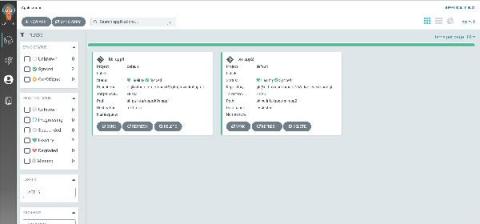

Kubernetes application dashboard

Giving developers a portal they can use to understand application dependencies, ownership, and more has never been more critical. As you scale your Kubernetes adoption, you want to avoid service sprawl; if you do not address it early, application support will become a nightmare.

Kubernetes Master Class Windows Container Support with Rancher

Civo update - September 2021

Welcome to the Civo update for September 2021. In case you missed the big news... this week we successfully launched our first region in Frankfurt, Germany. Plus we announced our new strategic partner, THG Ingenuity, who invested $2 million into the company. This will allow us to quickly invest in our infrastructure, including more regions across the globe throughout the next 12 months.

How ORAN is moving the industry to a cloud-native model

Open RAN or ORAN is a game-changing Radio Access Network (RAN) evolution combining RAN functionality with cloud-native design, scale and automation. Legacy RAN was and is still intentionally designed using closed and proprietary architectures that locked operators to a particular vendor, for both radio and supporting hardware (baseband units). Now with ORAN, operators can not only decouple vendors, but also software from hardware, facilitating the migration to a cloud-native model.

NIST 800-53 compliance for containers and Kubernetes

In this blog, we will cover the various requirements you need to meet to achieve NIST 800-53 compliance, as well as how Sysdig Secure can help you continuously validate NIST 800-53 requirements for containers and Kubernetes. NIST 800-53 rev4 has been deprecated since 23 September 2021. Read about the differences between versions below.

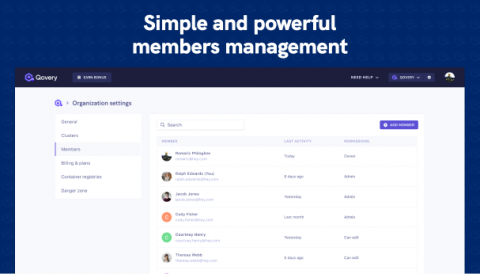

Simple and powerful user management feature

It is a super exciting day! Finally, you can invite your friends/team/colleagues (pick one) via the web console.

Benefits of switching to Civo Kubernetes - Keptn case study

Why securing internet-facing applications is challenging in a Kubernetes environment

Internet-facing applications are some of the most targeted workloads by threat actors. Securing this type of application is a must in order to protect your network, but this task is more complex in Kubernetes than in traditional environments, and it poses some challenges. Not only are threats magnified in a Kubernetes environment, but internet-facing applications in Kubernetes are also more vulnerable than their counterparts in traditional environments.

Application Resiliency for Cloud Native Microservices with VMware Tanzu Service Mesh

Modern microservices-based applications bring with them a new set of challenges when it comes to operating at scale across multiple clouds. While the goal of most modernization projects is to increase the velocity at which business features are created, with this increased speed comes the need for a highly flexible, microservices-based architecture. The result is that the architectural convenience created on day 1 by developers turns into a challenge for site reliability engineers (SREs) on day 2.

Kubernetes, Give Me a Queue

A few months ago, we introduced a new messaging topology operator. As we noted in our announcement post, this new Operator—we use the upper-cased “Operator” to denote Kubernetes Operators vs. platform or service human operators—takes the concept of VMware Tanzu RabbitMQ infrastructure-as-code another step forward by allowing platform or service operators and developers to quickly create users, permissions, queues, and exchanges, as well as queue policies and parameters.

Frankfurt region now live

We’re happy to announce that our new mainland Europe datacenter region in Frankfurt, Germany is now live and ready for use by all Civo customers. This will sit alongside our existing New York City and London regions, with a location in India planned by the end of this year, and more in 2022.

Policy as code for Kubernetes with Terraform

As you scale microservices adoption in your organization, the chances are high that you are managing multiple clusters, different environments, teams, providers, and different applications, each with its own set of requirements. As complexity increases, the question is: How do you scale policies without scaling complexity and the risk of your applications getting exposed?

Rancher Online Meetup - September 2021 - Introducing SUSE Rancher 2.6

Introducing The Next Generation of the D2iQ Kubernetes Platform (DKP): DKP 2.0

We are pleased and proud to announce the General Availability of the next generation of the D2iQ Kubernetes Platform (DKP): DKP 2.0, including D2iQ Konvoy 2.0 and D2iQ Kommander 2.0. This software is available now.

8 Common Mistakes When Using AWS ECS to Manage Containers

In this article, we’ll discuss the potential pitfalls that we came across when configuring ECS task definitions. While considering this AWS-specific container management platform, we’ll also examine some general best practices for working with containers in production.

THG Ingenuity investment in Civo

I’m hugely excited to announce that THG Ingenuity, the technology division of ecommerce giant THG, has invested $2 million to take a minority stake in Civo. We’re proud to be THG Ingenuity’s first strategic investment since it announced earlier this year that Softbank Group had invested $730 million in THG with an option for a further $1.6 billion investment in the THG Ingenuity platform.

Multi-Cloud BCDR on Kubernetes with Velero | Kublr Webinar

Terraform is Not the Golden Hammer

Terraform is probably the most used tool to deploy cloud services. It's a fantastic tool: easy to use, team-oriented, built around a descriptive language (DSL) called HCL, and supporting tons of cloud providers. On paper, it's an attractive solution. And it's easy to start delegating more and more responsibilities to Terraform, as it's like a Swiss Army knife; it knows how to perform several kinds of actions against several varieties of technologies.

Scale for fully automated Kubernetes monitoring in minutes

Kubernetes Master Class Managing Teams at Scale with Multi-Tenancy

Automation In The Cloud: How Civo Uses Terraform - Civo Online Meetup #13

The importance of Calico's pluggable data plane

This post will highlight and explain the importance of a pluggable data plane. But in order to do so, we first need an analogy. It’s time to talk about a brick garden wall! Imagine you have been asked to repair a brick garden wall, because one brick has cracked through in the summer sun. You have the equipment you need, so the size of the job will depend to a great extent on how easily the brick can be removed from the wall without interfering with all the ones around it. Good luck.

A simplified stack monitoring experience in Elastic Cloud on Kubernetes

To monitor your Elastic Stack with Elastic Cloud on Kubernetes (ECK), you can deploy Metricbeat and Filebeat to collect metrics and logs and send them to the monitoring cluster, as mentioned in this blog. However, this requires understanding and managing the complexity of Beats configuration and Kubernetes role-based access control (RBAC). Now, in ECK 1.7, the Elasticsearch and Kibana resources have been enhanced to let us specify a reference to a monitoring cluster.

Deploying microservice apps on Kubernetes using Terraform

Terraform is a popular choice among DevOps and Platform Engineering teams as engineers can use the tool to quickly spin up environments directly from their CI/CD pipelines.

Benefits of containerization

As the demands placed on technology have grown, so has the size and complexity of our applications. Today’s developers often face the difficult task of managing huge applications. Further complicating matters is the underlying infrastructure, which can often be as expansive, diverse, and complicated as its applications. The complexity of modern applications introduces many challenges.

How To Ensure A Smooth Kubernetes Migration

Speedscale & Locust: Comparing Performance Testing Tools

Picking the right performance testing tool can be a challenge. What should you look for and what is important? Performance testing is a phrase many developers have come across at some point, but what is it exactly? In simple terms, performance testing is a software testing practice used to determine stability, responsiveness, scalability, and most important, speed of the application under a given workload.

Container Craziness: Meet us This Fall to Unlock the Knowledge of the Future of Enterprise Kubernetes

Gartner predicts that by 2022, more than 75% of global organizations will be running containerized applications in production, which is a significant increase from fewer than 30% today. Kubernetes is the future-proof solution that is going to provide flexibility, power, and scalability to improve productivity for the modern enterprise. It’s the key to modernization in a world moving at warp speed.

How to Simplify Your Kubernetes Helm Deployments

Is your Helm chart promotion process complicated and difficult to automate? Are rapidly increasing Helm chart versions making your head spin? Do you wish you had a way to quickly and easily see the differences between deployments across all of your environments? If you answered “yes” to any of these questions, then read on! My purpose for writing this article is to share a few of the techniques that I’ve seen make the biggest impact for Codefresh and our customers.

What Are Containers?

Containers, along with containerization technology like Docker and Kubernetes, have become increasingly common components in many developers’ toolkits. The goal of containerization, at its core, is to offer a better way to create, package, and deploy software across different environments in a predictable and easy-to-manage way.

Aiven launches Kubernetes Operator support for PostgreSQL and Apache Kafka

Unexpected: How we ended up product of the day on Producthunt with zero preparation

Josh Fetcher: “Product Hunt is a community where founders, product enthusiasts, and geeks go to check out the best new products and get attention for tech products that they've built.” If there is one sentence to define me, it would be “done is better than perfect”. On Saturday morning, after spending the previous night releasing a new major version of our product while fixing bugs, I came up with an idea: “Oh! It would be a great time to get Qovery featured on Producthunt”.

The simplest way to deploy your apps on your AWS account

Automate Everything - Civo DevOps Bootcamp 2021

Data applications with Kubernetes and Snowflake

For data application developers who use Snowflake as their data warehouse and are new to Kubernetes, spinning up a single cluster on their laptop and deploying their first application can seem deceptively simple. As they start deploying data-driven applications using microservices and Kubernetes in production, the difficulty increases sharply. It quickly throws the developer into a kind of configuration hellscape that drives productivity down for many data engineering teams.

Tanzu Talk: Container Strategy Notebook, kubernetes or PaaS, and why in the first place

observIQ Releases First PnP Solution for monitoring arm-based Kubernetes

Arm-based Kubernetes clusters have been in use for a while, albeit mostly for niche uses, by enthusiasts, and DIY hobbyists. But that is changing. Arm architecture offers an efficiency and scalability that other architectures do not, and that makes it appealing to businesses.

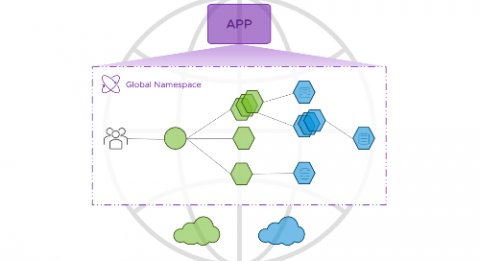

Step up your multi-cloud management game with Kubernetes

Managing containers effectively in multi-cloud environments is nearly impossible. Kubernetes makes it possible.

How deep is your love for YAML?

There is no doubt that YAML has developed a reputation for being a painful way to define and deploy applications on Kubernetes. The combination of semantics and empty spaces can drive some developers crazy. As Kubernetes advances, is it time for us to explore different options that can support both DevOps and Developers in deploying and managing applications on Kubernetes?

Announcing the General Availability of VMware Tanzu Kubernetes Grid 1.4

We are excited to announce the general availability of VMware Tanzu Kubernetes Grid 1.4. This release introduces improvements and updates to networking, packages, the user experience, and as always, Kubernetes versioning, with support for Kubernetes 1.21.2. In this post, we will focus on some of the new capabilities offered in Tanzu Kubernetes Grid 1.4 that further support our customers with their Kubernetes journey.

Mounting FSx for ONTAP volumes to Kubernetes pods

Last week, AWS and NetApp announced the general availability of AWS FSx for NetApp ONTAP. In this blog post, we’ll go through the steps to create an FSx for ONTAP filesystem, and you’ll learn how to create volumes using Kubernetes resources with Astra Trident and how to mount those volumes to pods. As a prerequisite, make sure to register with the Spot platform, connect your AWS account, and have a Kubernetes cluster connected to Ocean using the following guides.

Smart gardening with a Raspi and Prometheus

Let’s build a smart gardening system with Prometheus and a Raspberry Pi. Having plants at home can reduce your stress levels and make your home look more delightful. Seeing your indoor oasis grow gives you a sense of accomplishment and makes you feel proud… until you see that first brown leaf. That’s when you start doubting your green fingers.

Product Update: What's New For September 2021

Last June, we released Qovery v2 - a brand new version of Qovery. Since then, we have worked on delivering the features you were waiting for AND made dozens of improvements based on your feedback. Thanks to our lovely dev community and customers.

Why Competing in E-Commerce Means Customizing Your Software

The world of retail has changed dramatically over the past decade, and in ways far beyond a black-and-white shift from shopping in stores to shopping online. Today, e-commerce is table stakes, meaning companies distinguish themselves—among other avenues—via user experience, promotions, fast shipping, and omnichannel experiences that integrate digital and brick-and-mortar locations.

Announcing support for EKS Anywhere

Amazon Elastic Kubernetes Service (EKS) is a cloud-based compute platform that includes a fully managed Kubernetes control plane in order to simplify cluster operations. AWS introduced EKS Anywhere to bring the operational ease of EKS to organizations that manage on-premises environments (e.g., to meet data sovereignty requirements).

How Okteto uses Civo for Kubernetes workloads

Using DevSecOps Flow to Operationalize Kubernetes

VMware Tanzu Labs: Own Your App Modernization Journey

Goodbye Dispatch, Hello FluxCD!

We are announcing the deprecation of Dispatch, our DKP 1.x CI/CD tool, based on Tekton and ArgoCD. As another step in the continuous improvement of the DKP platform, in DKP 2.0 we have made the move to FluxCD, a CNCF incubator project. Why did we make this decision? Our customers have significant investments in their build pipelines using battle-tested technologies such as Jenkins, TeamCity, and CircleCI. Introducing a new CI tool like Tekton would be a significant change to their workflows.

Removing CI/CD Blockers: Navigating K8s with Codefresh & Komodor

Kubernetes Master Class HA Rancher Managing EKS, GKE and AKS

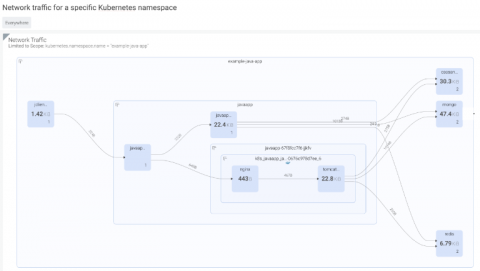

What's new in Calico Enterprise 3.9: Live troubleshooting and resource-efficient application-level observability

We are excited to announce Calico Enterprise 3.9, which provides faster and simpler live troubleshooting using Dynamic Packet Capture for organizations while meeting regulatory and compliance requirements to access the underlying data. The release makes application-level observability resource-efficient, less security intrusive, and easier to manage. It also includes pod-to-pod encryption with Microsoft AKS and AWS EKS with AWS CNI.

What's New? D2iQ Kaptain 1.2!

We are pleased and proud to announce the General Availability of D2iQ Kaptain 1.2. This update includes new features and improvements to the overall user experience. The new dashboard enables users to visually monitor and observe the resource consumption of Kaptain Workloads, observe the state of those workloads, and easily identify and debug any issues.

Kubernetes CI/CD pipelines: What, why, and how

This blog can provide you with useful information on how to set up a Kubernetes CI/CD workflow using state-of-the-art open source DevOps tools, whether you are…

5 Best Practices to Simplify Kubernetes Troubleshooting - DevOps.com Webinar

Fast feedback - Civo DevOps Bootcamp 2021

How to Handle Secrets Like a Pro Using GitOps

One of the foundations of GitOps is the usage of Git as the source of truth for the whole system. While most people are familiar with the practice of storing the application source code in version control, GitOps dictates that you should also store all the other parts of your application, such as configuration, Kubernetes manifests, database scripts, cluster definitions, etc. But what about secrets? How can you use secrets with GitOps?

Using GitOps and ArgoCD to deploy applications

Shipa Application as Code Product Overview

[Webinar] Removing CI/CD Blockers: Navigating Kubernetes with Codefresh & Komodor

GitOps Workshop - Deploying Applications

GitOps is one method used by teams to deploy microservices, but challenges usually arise when deploying applications across multiple clusters and environments. For your GitOps initiative to be successful, you should consider implementing an application operating model. In this second workshop we covered…

Pipelines as Code

One of the reasons we define items as code is it allows for the programmatic creation of resources. This could be for infrastructure, for the packages on your machines, or even for your pipelines. Like many of our clients, at Codefresh we are seeing the benefits of an “everything as code” approach to automation. One of the great things about defining different layers in the stack as code is that these code definitions can start to build on each other.

Intellyx Brain Candy Brief

Speedscale is seeking to cut time and errors out of the Kubernetes and container delivery pipeline with their ability to discover API connections, automatically generate tests and data, replay traffic, and spin up realistic lab environments and reports within the tight time windows of cloud-native development.

How Kubernetes 1.22 addresses industry needs

On August 4th 2021, Kubernetes (K8s) upstream announced the general availability of Kubernetes 1.22, the latest version of the most popular container orchestration platform. At Canonical, we actively track upstream releases to ensure our Kubernetes distributions align with the latest innovations that developers and businesses need for their cloud native use cases.

VMware Tanzu Application Platform Creates a Better Developer Experience

Container Orchestration Explained Simply

Clubhouse Talk: Bleeding-Edge Kubernetes Projects

Below are the main highlights from a recent Clubhouse talk featuring Elad Aviv, a software engineer at Komodor. The session was hosted by Kubernetes heavy-hitters Mauricio Salatino, Staff Engineer at VMware, and Salman Iqbal, Co-founder of Cloud NativeWal.

GitOps Beyond Kubernetes

The idea of fully managing applications, in addition to infrastructure, using a Git-based workflow, or GitOps, has been gaining a lot of traction recently. We are seeing an increasing number of users connecting their Shipa account with tools such as ArgoCD and FluxCD. Based on that, we conducted multiple user interviews to understand some of the challenges teams face when implementing GitOps, especially those introduced or faced by their developers.

Modernizing Apps through Containerization instead of Lift and Shift

Lately, organizations are experiencing the urgency to containerize their age-old legacy applications in order to offer the best experience to their existing and new customers. Despite the great pressure on IT systems and organizations, modernizing mission-critical applications to ensure business continuity and stability is more of a necessity.

Customize and Observe with VMware Spring Cloud Gateway for Kubernetes

VMware Spring Cloud Gateway for Kubernetes, the powerful distributed API gateway loved by application developers like you no matter what programming language you use, has been improved with some brand new capabilities. Spring Cloud Gateway for Kubernetes now supports the loading of your own extensions so you can customize them to your own specific needs. Capturing metrics and trace data into your observability tools of choice is also easier than ever before.

Announcing VMware Tanzu Application Platform: A Better Developer Experience on any Kubernetes

Today at VMware’s annual SpringOne developer conference, we announced the public beta of Tanzu Application Platform. With Tanzu Application Platform, application developers and operations teams can build and deliver a better multi-cloud developer experience on any Kubernetes distribution, including Azure Kubernetes Service, Amazon Elastic Kubernetes Service, Google Kubernetes Engine, as well as software offerings like Tanzu Kubernetes Grid.

VMware Tanzu Application Service: The Best Destination for Mission-Critical Business Apps

It’s inspiring to see all of the customers that are delivering great applications securely and at scale with VMware Tanzu Application Service on any cloud as well as on-premises. One great example is Albertsons, which has managed a tremendous increase in e-commerce and grocery delivery traffic with zero downtime during the COVID-19 crisis.