April 2022

Enlightning: Security For Application Developers

Centralized application dashboard for Kubernetes

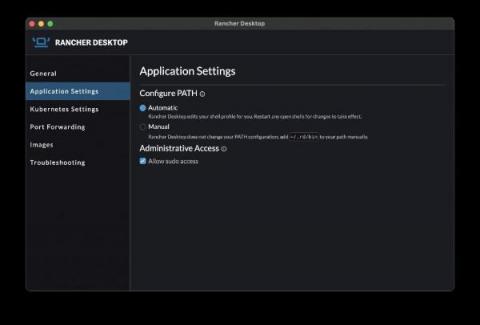

What's New in Rancher Desktop 1.3.0?

The release of Rancher Desktop 1.3.0 brings in some notable changes that are most visible on Mac and Linux while continuing to expand on experimental features.

Monitor Knative for Anthos with Datadog

Developed and released by Google in 2018 with contributions from IBM, VMware, Red Hat, and other companies, the Knative project is designed to make it as simple as possible to build, deploy, and scale serverless containers across your existing Kubernetes infrastructure. By operating on top of Google Anthos, Knative for Anthos takes this even further by allowing developers to build and deploy applications across hybrid environments that include both on-prem and cloud-hosted serverless clusters.

Best Tips to Get Most out of AWS Load Balancer

Troubleshooting in Kubernetes: The Shift-Left Approach

Kubernetes has become the de-facto container management solution of the last decade—and we have no doubt it will stay that way in the upcoming years. It provides a solid abstraction between the infrastructure layer and applications, so that developers can quickly develop, deploy, and operate their applications. Kubernetes is designed as a set of APIs that work together. If you deploy simple applications and make them run, Kubernetes will do it for you.

Deploying Microservices with GitOps

Learn how to adopt GitOps for your microservices deployments. This blog post will explain the highlights listed below and how it’s possible to adopt GitOps and the benefits of deploying with Argo for your microservices.

Getting Started With Terraform and Qovery on AWS

Monitor and troubleshoot Consul with Prometheus

In this article, you’ll learn how to monitor Consul with Prometheus and how to troubleshoot the Consul control plane from scratch, following the monitoring recommendations in Consul’s docs. You’ll also find out how to troubleshoot the most common Consul issues.

Deployment-time testing with Grafana k6 and Flagger

When it comes to building and deploying applications, one increasingly popular approach these days is to use microservices in Kubernetes. It provides an easy way to collaborate across organizational boundaries and is a great way to scale. However, it comes with many operational challenges. One big issue is that it’s difficult to test the microservices in real-life scenarios before letting production traffic reach them. But there are ways to get around it.

Learn about Pod Labels and Selectors - Civo Academy

"Civo could be your primary cloud provider due to cost and efficiency" - Marino from solo.io

Kubernetes Incident Response Best Practices

Inevitably, organizations that use technology (regardless of the extent) will have something, somewhere, go wrong. The key to a successful organization is to have the tools and processes in place to handle these incidents and get systems restored in a repeatable and reliable way in as little time as possible.

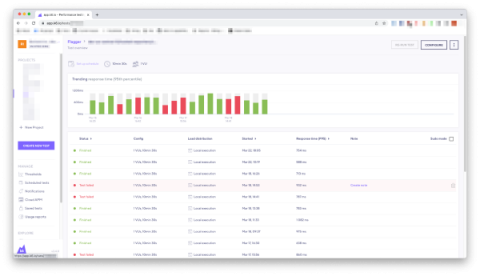

Shipa Product Updates - 1.7.0 Release

We are really excited today to announce the Shipa 1.7.0 Release. Lots of great features and enhancements are packed in the latest release of Shipa. Several features come from our customers and community, and you can now submit your ideas via the Idea Portal. Let’s take a look at what has been cooking at Shipa and upcoming events that you can catch us at.

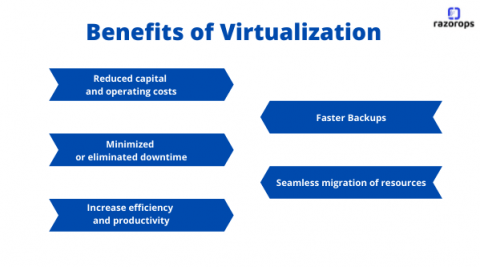

What is Virtualization & Top 5 Benefits of Virtualization

Virtualization uses software to create an abstraction layer over the hardware. By doing this, it creates virtual computer systems, known as virtual machines (VMs). This allows organizations to run multiple virtual computers, operating systems, and applications on a single physical server. How does virtualization work? A virtual machine (VM) is a virtual representation of a physical computer. Virtual machines can’t interact directly with a physical computer, but...

VMware Tanzu Application Service Delivers Separate Log Cache

VMware Tanzu Application Service 2.13 unveils an improved Log Cache, which has been separated into its own virtual machine instance for enhanced scaling options. Historically, Log Cache has been colocated on Doppler virtual machine (VM) instances in order to reduce the footprint of foundations. This separation is critical as Log Cache is no longer subject to the formerly imposed Doppler maximum of 40 VM instances and can continue to scale up based on platform and application requirements.

The Case for Owning Your Infrastructure

Early technical decisions can help make or break a product. The effort to make the right decisions can be a great unifier between standout engineers and top-notch executives. At Cycle, we've been obsessing over the nuances of infrastructure automation for years. So far, it's landed us on the G2 grid as a high performer in a category dominated by the goliaths of the cloud world.

Kubernetes vs Docker: A comprehensive comparison

If you’re new to the world of containers there are two words you would have certainly come across, but might not yet understand the difference between: Kubernetes and Docker. Although Kubernetes and Docker are somewhat different from each other, they also share some similarities. A container is a standard unit of software that packages the code along with the libraries and dependencies so that the application can run quickly, seamlessly and reliably from one environment to another.

How to create a Kubernetes Namespace - Civo Academy

What's new in Sysdig - April 2022

Welcome to another iteration of What’s New in Sysdig in 2022! Before starting, once again Happy Easter, Happy Passover, Happy Rama Navami, and Ramadan Mubarak! In general, happy spring break, and we hope you recovered from the chocolate egg drop.

Performance Tuning Tips on Real-World Customer Spark-on-Kubernetes pipelines

We built the "Netlify for backend" that runs on your AWS account!

GitOps your WordPress with ArgoCD, Crossplane, and Shipa

WordPress is a popular platform for editing and publishing content for the web. This tutorial will walk you through how to build out a WordPress deployment using Kubernetes, ArgoCD, Crossplane, and Shipa. WordPress consists of two major components: the WordPress PHP server and a database to store user information, posts, and site data. We will define these two components and store them in a Git repository.

AWS, Azure & GCP: Using Their Latest Instances Effectively

Learn more and schedule a 1:1 demo at https://www.densify.com/product/demo

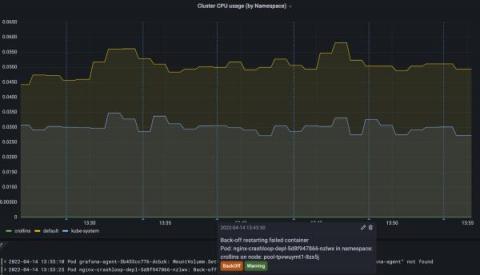

Infrastructure monitoring using kube-prometheus operator

Prometheus has emerged as the de-facto open source standard for monitoring Kubernetes implementations. In this tutorial, Kristijan Mitevski shows how infrastructure monitoring can be done using kube-prometheus operator. The blog also covers how the Prometheus Alertmanager cluster can be used to route alerts to Slack using webhooks. In this tutorial by Squadcast, you will learn how to install and configure infrastructure monitoring for your Kubernetes cluster using the kube-prometheus operator, displaying metrics with Grafana, and configuring alerting with Alertmanager.

Using Containers for Microservices: Benefits and Challenges for your Organization

How Bitso Empowers Its Devs to Troubleshoot K8s Independently

New in the Kubernetes integration for Grafana Cloud: Kubernetes events, Pod logs, and more

The Kubernetes integration for Grafana Cloud helps users easily monitor and alert on core Kubernetes metrics using the Grafana Agent, our lightweight observability data collector optimized for sending metric, log, and trace data to Grafana Cloud. It packages together a set of easy-to-deploy manifests for the Agent, along with prebuilt dashboards and alerts.

Announcing Stronger Istio Support with Istio Mode for VMware Tanzu Service Mesh

Today, Google submitted Istio to be considered as an incubating project with the Cloud Native Computing Foundation (CNCF), starting the process of handing off management of the project to the CNCF. In tandem with this announcement, we are introducing Istio Mode for VMware Tanzu Service Mesh, reinforcing our support and commitment to open source Istio.

Kubernetes Monitoring: An Introduction

One of the first things you’ll learn when you start managing application performance in Kubernetes is that doing so is, in a word, complicated. No matter how well you’ve mastered performance monitoring for conventional applications, it’s easy to find yourself lost inside a Kubernetes cluster.

Gimlet launches new learning resources to build a developer platform on Civo

Hey Civo users, at Gimlet, we think that best practices in the Kubernetes ecosystem have solidified so much that they can be packaged into tools, so that you don't have to make nuanced decisions at every corner. This is what we have been doing with our CLI tools, and nowadays with the Gimlet Dashboard! We have been following Civo for over a year now and see how much the Gimlet team shares the Civo community's spirit.

How to Create a Kubernetes Object - Civo Academy

Learn about Kubernetes Architecture - Civo Academy

How to Create a Kubernetes Cluster using Civo CLI - Civo Academy

Getting Started with the Civo platform - Civo Academy

Announcing support for Amazon EKS Blueprints

Amazon Elastic Kubernetes Service (EKS) is a managed container service designed to deploy and scale cloud-based or on-premise Kubernetes applications. AWS released EKS Blueprints to provide customers with a framework for creating internal development platforms on EKS.

Docker - CMD vs ENTRYPOINT

Containers are great for developers, but containers are also an excellent way to create reproducible, secure, and portable applications. Applications can be deployed reliably and migrated to multiple computing environments quickly using containers. That could mean moving from a developer's laptop to a testing environment, or from staging to production. To use Docker, Kubernetes, and similar tools, you need to build containers.
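The excerpt above does not spell out the distinction named in the post's title. As a rough sketch, assuming exec-form `ENTRYPOINT` and `CMD` instructions, Docker runs the `ENTRYPOINT` followed by the `CMD` as default arguments, and any arguments passed to `docker run` after the image name replace the `CMD`. The function name `resolve_command` is hypothetical and only models that rule; it does not call Docker.

```python
from typing import List, Optional


def resolve_command(
    entrypoint: List[str],
    cmd: List[str],
    run_args: Optional[List[str]] = None,
) -> List[str]:
    """Model the command a container starts with (exec form only).

    entrypoint: the image's ENTRYPOINT (empty list if none is set)
    cmd:        the image's CMD, used as default arguments
    run_args:   arguments given after the image name in `docker run`;
                when present, they replace CMD
    """
    arguments = run_args if run_args else cmd
    return entrypoint + arguments


# ENTRYPOINT ["ping"] with CMD ["localhost"]
default_command = resolve_command(["ping"], ["localhost"])
overridden_command = resolve_command(["ping"], ["localhost"], ["example.com"])
```

The shell form of `ENTRYPOINT` behaves differently (it ignores `CMD`), so this sketch should not be read as covering every case.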

Bug Hunting and improvements week - what we improve on Qovery

Why is Kubernetes Difficult? - Solve All My Problems K8s!

Another post inspired by our weekly internal enablement office hours [should we open this up to the public?] and a few conversations at DevOps Days Atlanta: talking about experiences with Kubernetes can draw some sighs. In our weekly enablement office hours, the blunt question was asked: “Well, why is Kubernetes so difficult?” Industry thought leaders would state that Kubernetes is a platform to build other platforms.

Splunk Operator 1.1.0 Released: Monitoring Console Strikes Back!

The latest version of the Splunk Operator builds upon the release we made last year with a whole host of new features and fixes. We like Kubernetes for Splunk since it allows us to automate away a lot of the Splunk Administrative toil needed to set up and run distributed environments. It also brings a resiliency and ease of scale to our heavy-lifting components like Search Heads and Indexer Clusters.

How to write optimized and secure docker file to create docker image.

How to Configure a Multi Node Cluster with Kubeadm & Containerd - Civo Academy

How to Speed Up Amazon ECS Container Deployments

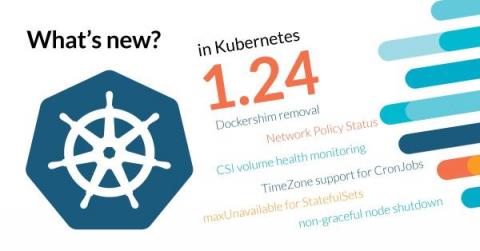

Try Kubernetes 1.24 release candidate with MicroK8s

The latest Kubernetes release, 1.24, is about to be made generally available. Today, the community announced the availability of the 1.24 release candidate. Developers, DevOps and other cloud and open source enthusiasts who want to experiment with the latest cutting edge K8s features can already do so easily with MicroK8s.

MYCOM OSI supports Amazon Elastic Kubernetes Service (Amazon EKS) for its suite of Service Assurance applications

Exploring Minikube Commands - Civo Academy

The Five Rs of Application Modernization

Most organizations realize that application modernization is essential in order to thrive in the digital age, but the process of modernizing can be highly complex and difficult to execute. Factors such as rapidly growing application volume, diversity of app styles and architectures, and siloed infrastructure can all contribute to the challenging nature of modernization. To add to this complexity, there are multiple ways to go about modernizing each individual application.

Installing Minikube on your local Kubernetes Cluster - Civo Academy

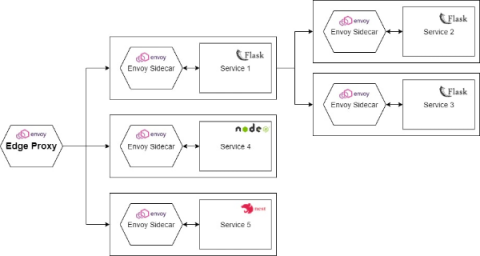

Stupid Simple Service Mesh: What, When, Why

Recently, microservices-based applications have become very popular, and with the rise of microservices, the concept of a service mesh has also become a very hot topic. Unfortunately, there are only a few articles about this concept, and most of them are hard to digest.

Stupid Simple Kubernetes: Everything You Need to Know to Start Using Kubernetes

In the era of Microservices, Cloud Computing and Serverless architecture, it’s useful to understand Kubernetes and learn how to use it. However, the official Kubernetes documentation can be hard to decipher, especially for newcomers. In this blog series, I will present a simplified view of Kubernetes and give examples of how to use it for deploying microservices using different cloud providers, including Azure, Amazon, Google Cloud and even IBM.

How to Install Kubectl - Civo Academy

How to create a local Kubernetes cluster? - Civo Academy

Application Service Adapter for VMware Tanzu Application Platform: New Beta Release Now Available

A new beta release of the Application Service Adapter is now available for download. This release has many new exciting features that continue to expand the functionality of the Application Service Adapter in conjunction with the release of VMware Tanzu Application Platform version 1.1.

Discovering applications on Kubernetes

Understanding Kubernetes pod pending problems

Pending pods are ubiquitous in Kubernetes clusters, at every level of maturity. If you ask any random DevOps engineer using Kubernetes to identify the most common error that haunts their nightmares, a deployment with pending pods is near the top of their list (maybe second only to CrashLoopBackOff). Trying to push an update and seeing it stuck can make DevOps engineers nervous.

How to Create a Docker Volume - Civo Academy

Connecting a Kubernetes Cluster - With Magic Link

Kubernetes Master Class: Securing the Supply Chain and Infrastructure for Kubernetes Deployments

Kubernetes: Tips, Tricks, Pitfalls, and More

If you’re involved in IT, you’ve likely come across the word “Kubernetes.” It’s a Greek word that means “helmsman.” It’s one of the most exciting developments in cloud-native hosting in years. Kubernetes has unlocked a new universe of reliability, scalability, and observability, changing how organizations behave and redefining what’s possible. But what exactly is it?

How to Dockerize an application & build a docker file image - Civo Academy

The History of Cloud Native

Cloud native is a term that’s been around for many years but really started gaining traction in 2015 and 2016. This could be attributed to the rise of Docker, which was released a few years prior. Still, many organizations started becoming more aware of the benefits of running their workloads in the cloud. Whether because of cost savings or ease of operations, companies were increasingly looking into whether they should be getting on this “cloud native” trend.

Best Practices for Container Security on AWS

DevOps vs. DevSecOps: What Are the Differences?

I've never really been sure how DevSecOps differs from plain-old DevOps, but over the past year I think there's finally enough there to form a notion. To be concise, DevOps-think makes software delivery better by moving operations concerns closer to development with the help of a lot of automation and process change.

D2iQ Joins the Nutanix Ready Partner Program

We are excited to announce that D2iQ and Nutanix have partnered to provide a best-of-breed hybrid cloud solution for customers building cloud-native applications for Day 2 operations. The solution has been validated as Nutanix Ready, and the companies have a collaborative support relationship.

Are Microservices Right for Me - Enabling Microservices or Monoliths - Shipa

Another conversation that was recently had during our weekly field enablement office hours here at Shipa was “what exactly is a microservice?”. Certainly, a term that has been used to describe a paradigm and architectural practice. One can argue that the breaking down or decomposing of applications has been going on for decades and is not a monumental shift in computing. There are several definitions of microservices from a purist definition to a definition resembling a movement.

Kubernetes 1.24 - What's new?

Kubernetes 1.24 is about to be released, and it comes packed with novelties! Where do we begin? Update: Kubernetes 1.24 release date has been moved to May 3rd (from April 19th). This release brings 46 enhancements, on par with the 45 in Kubernetes 1.23, and the 56 in Kubernetes 1.22. Of those 46 enhancements, 13 are graduating to Stable, 14 are existing features that keep improving, 13 are completely new, and 6 are deprecated features.
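As a quick sanity check on the breakdown quoted above, the four categories can be summed and compared with the stated total. The dictionary keys are illustrative labels, not official Kubernetes terminology.

```python
# Enhancement counts for Kubernetes 1.24 as quoted in the excerpt above.
enhancements_1_24 = {
    "graduating_to_stable": 13,
    "existing_features_improving": 14,
    "completely_new": 13,
    "deprecated": 6,
}

# The categories should add up to the 46 enhancements the excerpt reports.
total_enhancements = sum(enhancements_1_24.values())
print(total_enhancements)
```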

What is the difference between Containers & Virtual Machines - Civo Academy

Announcing New Capabilities in Tanzu Application Platform to Enhance User Experience Across Multiple Clusters and Cloud

Earlier this year we launched VMware Tanzu Application Platform to help customers quickly build and deploy software on any public cloud or on-premises Kubernetes cluster. Tanzu Application Platform provides a rich set of developer tooling along with a pre-paved path to production, enabling enterprises to develop revenue-generating applications faster by reducing developer tooling complexity.

What's New in VMware Tanzu Application Platform 1.1: Image-Based Supply Chains

What's New in VMware Tanzu Application Platform 1.1: Multi-Cluster

Managing Multi-Cluster, Multi-Cloud Deployments with GitOps and ArgoCD - Ricardo Rocha (CERN)

Codefresh Demo Webinar

Distributed Observability: A Look into ESG Research

Civo Update - April 2022

In March, the Cloud Native Computing Foundation (CNCF) released its Annual Survey 2021. Civo featured alongside the likes of Amazon and Azure when CNCF asked developers "Does your organization use any certified Kubernetes installers?". Saiyam, Director of Technical Evangelism at Civo, also released an update on the new advancements so far for our 2022 Roadmap.

Introduction to containers - Civo Academy

Is Deploying Kubernetes in Air-Gapped Environments Hard? Read How This Security-Conscious Customer Radically Simplified the Deployment Process

Many federal and public sector organizations seek to capitalize on the benefits of a production-grade Kubernetes distribution in their own private data centers, which are often highly restricted and air-gapped environments. However, deploying and operating Kubernetes and other technologies in air-gapped environments is incredibly complex. Teams maintaining Kubernetes in these environments contend with restrictive network access and software supply chain security concerns.

Cilium network policy security tutorial on Civo

Terraform your EKS fleet - PART 2

Get Started Using VMware Tanzu Mission Control with Tanzu Kubernetes Grid

Today, there are growing pressures on operations and development teams to deploy software faster and into more environments, such as development, staging, or production. Organizations need self-service tools and operational efficiency, and VMware is meeting the challenge with solutions to help modernize operations and unburden their teams. VMware Tanzu Mission Control unifies cluster management to a single control plane and groups resources as a resource hierarchy.

Kubernetes Load Test Tutorial

In this blog post we use podtato-head to demonstrate how to load test Kubernetes microservices and how Speedscale can help understand the relationships between them. No, that's not a typo: podtato-head is an example microservices app from the CNCF Technical Advisory Group for Application Delivery, along with instructions on how to deploy it in numerous different ways. There are more than 10 delivery examples, so you will surely learn something by going through the project. We liked it so much we forked the repo to contribute our improvements.

Enlightning: What Is Knative Eventing?

Difference between monolithic & microservice architecture - Civo Academy

Qovery Demo Day April 2022

Heroku Vs. AWS: Data Security Comparison

Everything You Need to Know about K3s: Lightweight Kubernetes for IoT, Edge Computing, Embedded Systems & More

If you are an avid traveler of the tech universe, you’ve likely come across this term: Kubernetes. Scratching your head? Let me make it clear: In simple terms, we know Kubernetes (or K8s) as a portable and extensible platform for managing containerized workloads and services. It can facilitate both declarative configurations as well as automation.

How I cut my AKS cluster costs by 82%

A practical guide to container networking

An important part of any Kubernetes cluster is the underlying containers. Containers are the workloads that your business relies on, what your customers engage with, and what shapes your networking infrastructure. Long story short, containers are arguably the soul of any containerized environment. One of the most popular open-source container orchestration systems, Kubernetes, has a modular architecture.

How to Choose the Right Tool for Kubernetes Deployment

The benefits of using Kubernetes (K8s) at an enterprise level are numerous. K8s is portable, extensible, scalable and reliable. When it comes time for your enterprise to deploy its K8s clusters, there are several deployment frameworks and tools to choose from. But which solution is right for your enterprise? Read on as we break down deployment solutions into categories and demonstrate how each framework and tool can enable you to operate, manage and deploy your K8s clusters in different ways.

How to use Linux commands for Kubernetes - Civo Academy

Containerizing and Modernizing Windows Apps using CloudHedge's OmniDeq

Heritage applications are critical to any business, and ensuring that they run exceptionally well in the latest environment is a priority. Heritage applications running on Windows Server 2008 R2 do not get support from Microsoft directly, and that’s a major concern for enterprises who have their entire business running on such platforms.

Getting Started with SUSE Rancher Fleet and Shipa Cloud

As the GitOps paradigm continues to evolve, different interpretations and implementations will continue to appear. SUSE Rancher Fleet is a project creating a powerful, lightweight, and scalable GitOps engine. Recently, we showed the art of the possible between Shipa and SUSE Rancher Fleet. Make sure to check out our joint solution brief, which shows where the SUSE Rancher and Shipa stacks come together.

What is Cloud-Native Monitoring?

Cloud and cloud-based technologies are at their peak today. More and more organizations are turning to intelligent architectures and systems to deploy their apps. And they are not wrong—the cloud has proven to be a great way of performance optimization and cost-cutting. However, there are issues to address with this growing trend. One of those is monitoring. Monitoring is a vital part of application maintenance.

Automated Canary Deployments with Rancher Fleet and Flagger

Cycle Podcast | EP 12 | Bret Fisher | Containers: Have They Delivered On Their Promise?

What to Watch on EKS - a Guide to Kubernetes Monitoring on AWS

It’s impossible to ignore AWS as a major player in the public cloud space. With $13.5 billion in revenue in the first quarter of 2021 alone, Amazon’s biggest earner is ubiquitous in the technology world. Its success can be attributed to the wide variety of services available, which are rapidly developed to match industry trends and requirements.

The 10 Biggest Mistakes Startups Make on AWS

Deploy Tanzu Kubernetes Clusters with Additional Data Volumes Using Tanzu Mission Control

A common problem faced by platform operators is data management; specifically, the detrimental side effects that can manifest due to running out of disk space. For example, if debug logging is left enabled on an app and /var/logs fill the disk, containerd/etcd would now be unable to write their states to disk, causing the cluster to fail. VMware Tanzu Mission Control makes it easy to avoid disk consumption issues by exposing the option to add data partitions to a cluster or nodepool.
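The failure mode described above (a filling disk that leaves node components unable to write state) can be spotted early with a simple usage check. The following is a minimal sketch using only the Python standard library; the function names and the 90% threshold are assumptions for illustration and are not part of Tanzu Mission Control.

```python
import shutil


def disk_usage_fraction(path: str = ".") -> float:
    """Return the used fraction (0.0 to 1.0) of the filesystem holding path."""
    usage = shutil.disk_usage(path)
    if usage.total == 0:
        # Guard against pseudo-filesystems that report no capacity.
        return 0.0
    return usage.used / usage.total


def is_disk_nearly_full(path: str = ".", threshold: float = 0.9) -> bool:
    """Report whether usage at path has reached the (assumed) threshold."""
    return disk_usage_fraction(path) >= threshold


if __name__ == "__main__":
    # Hypothetical check against the current directory's filesystem.
    print(f"used: {disk_usage_fraction('.'):.1%}")
    print(f"nearly full: {is_disk_nearly_full('.')}")
```

On a cluster node, the path would more likely point at the partition that holds logs or container state; which path that is depends on the node image.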

What is K3s? The lightweight Kubernetes distribution - Civo Academy

Q1 2022 product retrospective - Last quarter's top features

What is Environment as a Service (EaaS) and How is it Impacting Productivity?

Cilium - eBPF Powered Networking, Security & Observability

What makes Qovery secure?

Introducing Qovery NFTs

What is container orchestration and Kubernetes? - Civo Academy

Shipa Product Updates - April 2022

If you are in the Northern Hemisphere, spring is upon us. We have been busy at Shipa democratizing developer experience for all and making Kubernetes an afterthought. We are grateful for all the community and customer feedback helping us make Shipa better. If you have an idea, check out our new Idea Portal, where you can submit your own ideas. Let’s take a look at what we have delivered in the last pair of sprints.

Shipa Product Updates - March 2022

The Need for Speed: Why Fast Data Pipelines Are the Lifeblood of a Business

Today’s businesses are looking for ways to better engage customers, improve operational decision making, and capture new value streams. As the world of data continues to grow and its pace of change accelerates, it’s never been more important for businesses to have fast access to actionable data to serve customers with personalized services, in real-time, and at scale. To do this, they need a fast data pipeline.