Operations | Monitoring | ITSM | DevOps | Cloud

January 2024

Coralogix Secures 123 G2 Badges in 2024 G2 Awards

Mastering the Cloud Migration: The Ultimate Guide to Cloud Migration Tools

Just a Criblet of Learning Makes the Data Go Round

Log Less, Achieve More: A Guide to Streamlining Your Logs

Evaluating New Tools with Cribl

5 Guiding Principles of Digital Business Observability

Optimizing APM Costs and Visibility with Cribl Stream and Search

Mastering Resource Control with Cribl Search's Usage Groups

Major Hospital System Cuts Azure Sentinel Costs by Over 50% with Observo.ai

Exploring Splunk Alternatives: Deep Dive into Log Analysis

Generative AI: The latest example of systems of insight

Up Your Observability Game With Attributes

The Top 15 New Relic Dashboard Examples

5 Important Reasons Why You Need Application Observability

Elastic Observability monitors metrics for Microsoft Azure in just minutes

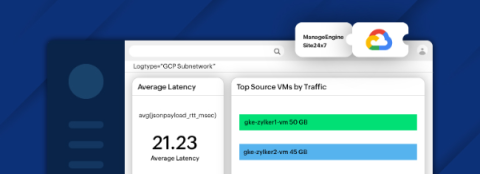

Forward logs from Google Cloud Platform to Site24x7 with Dataflow

Navigating IT and Security Consolidation in 2024

5 ways platform engineers can help developers create winning APIs

The Top 15 Datadog Dashboard Examples

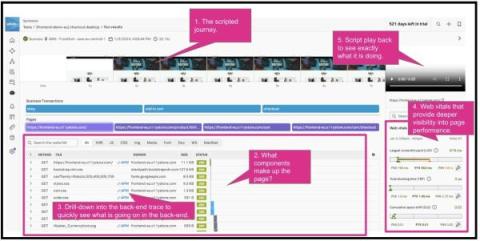

Why Knowing the Front-End and User's Experience of Your Platform is Key to Understanding How that Platform is Working

Securing the Future: The Critical Role of Endpoint Telemetry in Cybersecurity

Beyond Logs, Metrics and Traces

Sending Go Application Logs to Loggly

Observability and Machine Learning [Part 1]

Introducing 'Cribl Stream Fundamentals'

Scaling Platform Engineering: Shopify's Blueprint

Docker Logging One-Stop Beginner's Guide

The Top 15 Splunk Dashboard Examples

When to Automate Recurring Events

The Ultimate Guide to Windows Event Logging

Monitoring for every runtime: Managed Service for Prometheus now works with Cloud Run

Building the NextGen Factory with Splunk and Bosch Rexroth

How to Customise Detectors for Even Better Alerting

Why Splunk customers face a choice for observability and modernization

AI-Driven Alerting - Sumo Logic Customer Brown Bags - Monitoring & Observability - January 23rd 2024

Managing Kubernetes Events with Cribl Edge

Data Lake Strategy: Implementation Steps, Benefits & Challenges

Graylog vs Coralogix

All in the Family: Architecting and Managing Shared Graylog Clusters

Graylog Cluster: Navigating Shared Data Like a Pro

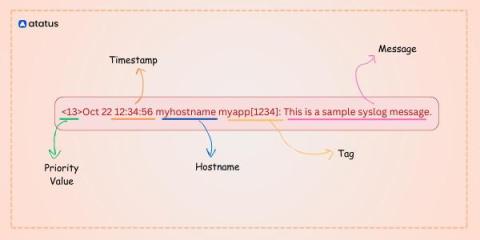

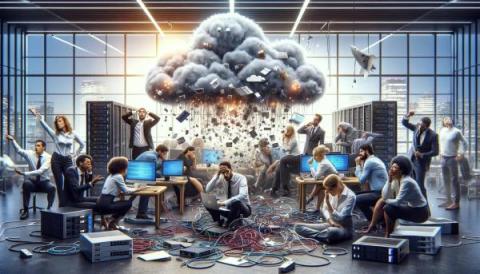

Overcoming Messy Cloud Migrations, Outdated Infrastructures, Syslog, and Other Chaos

Loki vs Elasticsearch - Which tool to choose for Log Analytics?

Top 11 Splunk Alternatives in 2024 [Includes Free & Open-Source Tools]

What's New in Open 360? January 2024 Update

Elastic recognized with 2024 EMA Allstars award for its AI-assisted observability

Scale Your Splunk Cloud Operations With The Splunk Content Manager App

How to Create Great Alerts

NGINX Access and Error Logs

Understand & Optimize Your Telemetry Data (Subtitled)

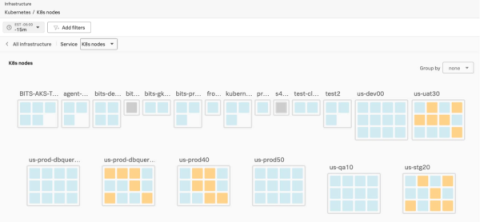

Managing Telemetry Data Overflow in Kubernetes with Resource Quotas and Limits

EMA explores Elastic AI Assistant for Security

AI at Splunk: Trustworthy Principles for Digital Resilience

How Cribl Helps the UK Public Sector Manage Challenges Around Growing Data Costs and Complexity

Why Your Logging Data and Bills Get Out of Hand

In the labyrinth of IT systems, logging is a fundamental beacon guiding operational stability, troubleshooting, and security. In this quest, however, organizations often find themselves inundated with a deluge of logs. Each action, every transaction, and the minutiae of system behavior generate a trail of invaluable data—verbose, intricate, and at times, overwhelming.

Monitoring-as-Code for Scaling Observability

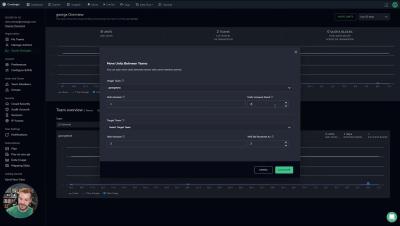

As data volumes continue to grow and observability plays an ever-greater role in ensuring optimal website and application performance, responsibility for end-user experience is shifting left. This can create a messy situation, with hundreds of R&D members, from back-end engineers and front-end teams to DevOps and SREs, all shipping data and creating their own dashboards and alerts.

How to easily add application monitoring in Kubernetes pods

Why Network Load Balancer Monitoring is Critical

Elastic Search 8.12: Making Lucene fast and developers faster

Elastic Observability 8.12: GA for AI Assistant, SLO, and Mobile APM support

Incident Response Plans: The Complete Guide To Creating & Maintaining IRPs

Collecting OpenShift container logs using Red Hat's OpenShift Logging Operator

Make Moves Without Making Your Data Move

How much of the data you collect is actually getting analyzed? Most organizations are focused on trying not to drown in the seas of data generated daily. A small subset gets analyzed, but the rest usually gets dumped into a bucket or blob storage. “Oh, we’ll get back to it,” thinks every well-intentioned analyst as they watch data streams get sent away, never to be seen again.

Docker Log Rotation Configuration Guide | SigNoz

Observability and Telecommunications Network Management [Part 1]

RUM Session Replay Or How "Watching Videos" Will Help Us With Digital Experience Monitoring!

Security Has a Big Data Problem, and an Even Bigger People Problem

Got cybersecurity problems? Well, the good news is the same as the bad news — you’re not alone. The world of security has a big data problem and an even bigger people problem. Enterprise connectivity has drastically increased in the last decade, meaning every employee, contractor, and vendor has some level of access to corporate networks. To support this growth, companies monitor exponentially increasing infrastructure and traffic, producing a steadily rising volume of data.

Debugging 5 Common Networking Problems With Full Stack Logging

Troubleshooting distributed applications: Using traces and logs together for root-cause analysis

When troubleshooting distributed applications, you can use Cloud Trace and Cloud Logging together to perform root cause analysis.

Laying the foundation for a career in platform engineering

A career in platform engineering means becoming part of a product team focused on delivering software, tools, and services.

How the All-In Comprehensive Design Fits Into the Cribl Stream Reference Architecture

In this livestream, Ahmed Kira and I provided more details about the Cribl Stream Reference Architecture, which is designed to help observability admins achieve faster and more valuable stream deployment. We explained the guidelines for deploying the comprehensive reference architecture to meet the needs of large customers with diverse, high-volume data flows. Then, we shared different use cases and discussed their pros and cons.

Exploring Observability's Role in Retail & E-Commerce

For retailers and ecommerce store owners, downtime always hurts the bottom line, because today's consumers expect their digital interactions to work around the clock. This is particularly crucial during traffic spikes caused by sales events such as Black Friday or Cyber Monday.

Cribl Stream's Replay vs Cribl Search's Send: Understanding the Differences

Organizations today produce more data than ever, and it needs to be collected, stored, analyzed, and retained, though not necessarily in that order. Historically, most vendors’ analysis tools were also the retention point for that data. While this may at first appear to be the best option for performance, we have quickly seen that it creates significant problems.

Data Architecture for Business Data & AI Projects

Organization Admin Console

Unified Observability: The Right Way Ahead

Observability, in modern software engineering, has evolved into a paramount concept, shedding light on the intricate inner workings of complex systems. Three essential pillars support this quest for clarity: logging, traces, and metrics. These interconnected elements collectively form the backbone of observability, enabling us to understand our software as never before. Think of a system as a bustling city.

Interview with CIO Chris Campbell

In the latest instalment of our interviews speaking to leaders throughout the world of tech, we’ve welcomed Chris Campbell, CIO at DeVry University.

Observability vs. APM: What to Know on Your Monitoring Journey

In the ever-evolving landscape of software development and IT operations, monitoring tools play a pivotal role in ensuring the performance, reliability, and availability of your applications. Two key disciplines in this domain are observability and Application Performance Management (APM). This post will help you understand the nuances between observability and APM, exploring their unique characteristics, similarities, benefits and differences.

TCO Overview

Multimodal AI Explained

How To Set Up Monitoring for Your Hybrid Environment

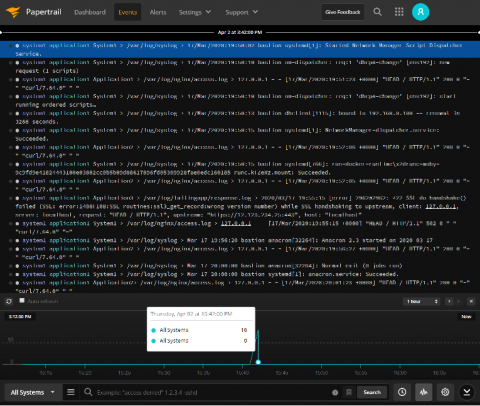

Dashboards - Sumo Logic Customer Brown Bag - Logging - January 8th, 2024

The Role of Observability in Media and Entertainment

Digital transformation is at the core of media and entertainment organizations, and it’s vital for these firms to constantly evolve to provide the best user experience to their customers. These companies must seek new and interesting content, services, and tailored offerings that enhance the audience’s experience and supply personalization. However, whilst these investments are essential to remain competitive, they’re also particularly costly.

How to Monitor Your Hybrid Applications Without Toil

Performing Geolocation Lookups on IP Addresses to Use in Cribl Search

Are you tired of sifting through data without context? Cribl Search adds valuable depth to your data, making it much easier to understand and analyze. No more squinting at cryptic logs or puzzling over unknown IP addresses! Some common examples of how Cribl Search can enrich your data are adding service names or matching to threat intelligence. Another popular data enrichment is adding geographical location to events based on IP addresses.

Log Monitoring 101 Detailed Guide [Included 10 Tips]

Looking at nth degree's Innovative Fractional Service Delivery Model

The Future of Higher Education: Observability As A Strategic Asset

Schools, universities and other organizations within higher education have been shifting to modernize their learning experiences. With the intake of new students each year, some of them based remotely, these organizations are seeking to manage large-scale and highly distributed infrastructure.

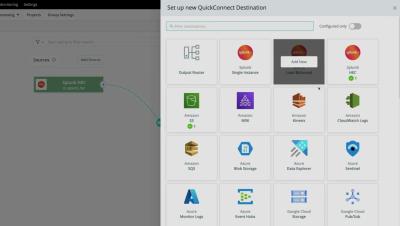

Integrating Cribl Stream with the Built-in Tables of Microsoft Sentinel

Cribl’s integration catalog is ever-expanding. At Cribl, we constantly collect feedback on where to integrate next and channel it to deliver more high-impact integrations into our catalog. Whether it is Sources, Collectors, or Destinations, we constantly add new integrations to expand our reach in the IT security and observability ecosystem.

Computer Servers: The Complete Guide

Business Intelligence and Log management - Opportunities and challenges

3 Straightforward Pros and Cons of Datadog for Log Analytics

The Importance of Traces for Modern APM [Part 2]

Generating and Comparing Statistics with Eventstats in Cribl Search

When exploring data, comparing individual data points with overall statistics for a large data set is often useful. For example, you might be interested in understanding when a performance metric rises above the historical average. Or possibly knowing when the variance of that metric increases past a certain threshold. Or maybe noting a change in the distinct number of IP addresses connecting to your public web portal.

Shadow IT & How To Manage It Today

The concise guide to Loki: How to work with out-of-order and older logs

For this week’s installment of “The concise guide to Loki,” I’d like to focus on an interesting topic in Grafana Loki’s history: ingesting out-of-order logs. Those who’ve been with the project a while may remember a time when Loki would reject any logs that were older than a log line it had already received. It was certainly a nice simplification to Loki’s internals, but it was also a big inconvenience for a lot of real world use cases.

RED Monitoring: Rate, Errors, and Duration

Using the AWS API Dataset Provider in Cribl Search to Build Dashboards

This blog post discusses using Cribl Search to pull and visualize data from the AWS API, letting you collect, analyze, and visualize data from your AWS account in real time without ingesting it first.

Observability 101: What is an Observability Pipeline?

Committed to Observability Excellence: Logz.io's Open 360 Observability Platform Takes Home Over a Dozen Winter G2 Badges

As we continue to iterate and help organizations meet their observability goals, Logz.io is thrilled to announce we’ve earned over a dozen Winter 2023 G2 Badges for our Logz.io Open 360™ essential observability platform! G2 Research is a tech marketplace where people can discover, review, and manage the software they need to reach their potential. Here are the Winter 2023 G2 Badges we’ve taken home for Application Performance Monitoring (APM) and Log Analysis.

How Observability Enhances Financial Services

Financial services and financial technology (FinTech) companies often depend upon complex infrastructure to handle their financial data. Security and compliance are paramount for these organizations, so gaining full visibility into the health and performance of these services is essential to guarantee security.

Evolving Cribl's Own Observability Practice at Blazing Speed

Cribl.Cloud has grown substantially since its launch, and our observability practice has developed in parallel. Gone are the early days of manageable logs and metrics, and as we continue to grow, managing that volume will become even more challenging. We used Splunk, a well-used internal system, as our primary event management system. With Cribl Edge nodes deployed across our entire cloud fleet, we collect logs and metrics and send them to Cribl Stream for processing and routing.

Log Wrangling: Leveraging Logs to Optimize Your System

Coralogix vs Cloudwatch: Support, Pricing, Features & More

Cloudwatch is a standard component for any AWS user, with tight integrations into every AWS service. While Cloudwatch initially seems like a cost-effective solution, its lack of functionality and flexibility can result in higher costs. Let’s explore Coralogix vs Cloudwatch.