January 2024

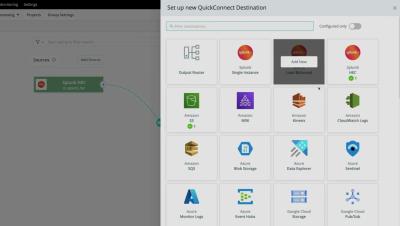

Evaluating New Tools with Cribl

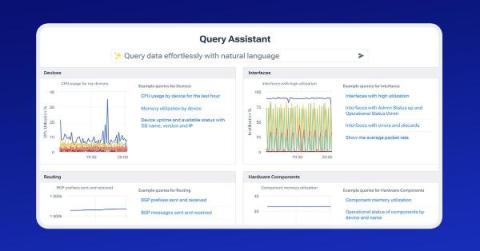

Reinventing Network Monitoring and Observability with Kentik AI

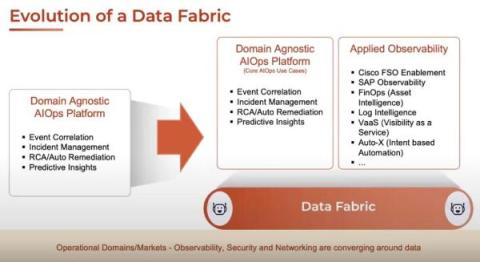

The Rise of Applied Observability, AIOps, and GenAI in Enterprises

5 Guiding Principles of Digital Business Observability

Seven innovative observability features to explore in the new year

Micrometer: The Gold Standard in Observability

Getting Started with OpenTelemetry Visualization

Up Your Observability Game With Attributes

How to improve your observability strategy: Introducing the Observability Journey Maturity Model

5 Important Reasons Why You Need Application Observability

Now Available: Honeycomb Launches Data Residency in Europe

Elastic Observability monitors metrics for Microsoft Azure in just minutes

Navigating IT and Security Consolidation in 2024

Inside TeleTracking's journey to build a better observability platform with Grafana Cloud

Improving Observability With Cloudsmith Logs

Observability with OpenTelemetry and Checkly

Introducing 'Cribl Stream Fundamentals'

Getting started with Application Observability for Java

Observability and Machine Learning [Part 1]

How To Choose the Best Observability Tools

The Cost Crisis in Observability Tooling

Why Splunk customers face a choice for observability and modernization

AI-Driven Alerting - Sumo Logic Customer Brown Bags - Monitoring & Observability - January 23rd 2024

Elastic recognized with 2024 EMA Allstars award for its AI-assisted observability

We've done it again: ManageEngine named a 2023 Gartner Peer Insights Customers' Choice for Application Performance Monitoring and Observability!

Alerts Are Fundamentally Messy

Effective Trace Instrumentation with Semantic Conventions

Observability for business decision making: technology challenges and approaches

Observability vs. Monitoring: Decoding Key Distinctions

Elastic Observability 8.12: GA for AI Assistant, SLO, and Mobile APM support

Monitoring-as-Code for Scaling Observability

As data volumes continue to grow and observability plays an ever-greater role in ensuring optimal website and application performance, responsibility for end-user experience is shifting left. This can create a messy situation in which hundreds of R&D members, from back-end engineers and front-end teams to DevOps engineers and SREs, all ship data and create their own dashboards and alerts.

The Real Costs of Synthetics: New Relic vs. Checkly

Observability and Telecommunications Network Management [Part 1]

How We Leveraged the Honeycomb Network Agent for Kubernetes to Remediate Our IMDS Security Finding

Picture this: It’s 2 p.m. and you’re sipping on coffee, happily chugging away at your daily routine work. The security team shoots you a message saying the latest pentest or security scan found an issue that needs quick remediation. On the surface, that’s not a problem and can be considered somewhat routine, given the pace of new CVEs coming out. But what if you look at your tooling and find it lacking when you start remediating the issue?

Exploring Observability's Role in Retail & E-Commerce

For retailers and e-commerce store owners, the bottom line suffers whenever a service is down, because today's consumers expect their digital interactions to work around the clock. Uptime is particularly crucial during traffic spikes driven by sales events such as Black Friday or Cyber Monday.

The Last Mile of Observability - Fine-Tuning Notifications for More Timely Alerts

No one wants to get an alert in the middle of the night. No one wants their Slack flooded to the point of opting out from channels. And indeed, no one wants an urgent alert to be ignored, spiraling into an outage. Getting the right alert to the right person through the right channel — with the goal of initiating immediate action — is the last mile of observability.

Unified Observability: The Right Way Ahead

Observability, in modern software engineering, has evolved into a paramount concept, shedding light on the intricate inner workings of complex systems. Three essential pillars support this quest for clarity: logging, traces, and metrics. These interconnected elements collectively form the backbone of observability, enabling us to understand our software as never before. Think of a system as a bustling city.

Observability vs. APM: What to Know on Your Monitoring Journey

In the ever-evolving landscape of software development and IT operations, monitoring tools play a pivotal role in ensuring the performance, reliability, and availability of your applications. Two key disciplines in this domain are observability and Application Performance Management (APM). This post will help you understand the nuances between observability and APM, exploring their unique characteristics, similarities, benefits and differences.

Choosing the Right Observability Tools for Developers

This is the third and final blog post in a series about shifting observability left; the first two posts provide the background for this one. Observability is fundamental to modern software development, enabling developers to gain deep insights into their application’s behavior and performance.

Building a Secure OpenTelemetry Collector

The OpenTelemetry Collector is a core part of telemetry pipelines, which makes it one of the parts of your infrastructure that must be as secure as possible. The general advice from the OpenTelemetry teams is to build a custom Collector executable instead of using the supplied ones when you’re using it in a production scenario. However, that isn’t an easy task, and that prompted me to build something.

The Role of Observability in Media and Entertainment

Digital transformation is at the core of media and entertainment organizations, and it’s vital for these firms to constantly evolve to provide the best user experience to their customers. These companies must seek new and interesting content, services, and tailored offerings that enhance the audience’s experience and deliver personalization. However, while these investments are essential to remain competitive, they’re also particularly costly.

What is Observability? Monitoring vs Observability

Observability trends and predictions for 2024: CI/CD observability is in. Spiking costs are out.

From AI to OTel, 2023 was a transformative year for open source observability. While the advancements we made in open source observability will be a catalyst for our continued work in 2024, there is even more innovation on the horizon. We asked seven Grafanistas to share their predictions for which observability trends are on their “In” list for 2024. Here’s what they had to say.

Looking at nth degree's Innovative Fractional Service Delivery Model

The Future of Higher Education: Observability As A Strategic Asset

Schools, universities and other organizations within higher education have been modernizing their learning experiences. With a new intake of students each year, some of them based remotely, these organizations are seeking to manage large-scale and highly distributed infrastructure.

Escaping the Cost/Visibility Tradeoff in Observability Platforms

For developers, understanding the performance of shipped code is crucial. Over the last decade, saving and tracking app metrics has been a table-stakes function of software monitoring and observability solutions. Engineers love tools that get out of the way and just work, and the appeal of today’s best-in-class application performance monitoring (APM) suites lies in a seamless day-zero experience with drop-in agent installs, button-click integrations, and immediate metrics collection.

Context deadline exceeded - What to do?

3 Straightforward Pros and Cons of Datadog for Log Analytics

Harmony in Chaos: Uniting Team Autonomy with End-to-End Observability for Business Success

Imagine a symphony where every musician plays their part flawlessly, but without a conductor to guide the orchestra, the result is just a discordant mess. Now apply that image to the modern IT landscape, where development and operations teams work with remarkable autonomy, each expertly playing their part. Agile methodologies and DevOps practices have empowered teams to build and manage their services independently, resulting in an environment that accelerates innovation and development.

With OpenTelemetry, ComplyAdvantage overhauled its observability (twice)

Product Managing to Prevent Burnout

I’m currently working on a small team within Honeycomb where we’re building an ambitious new feature. We’re excited—heck, the whole company is—and even our customers are knocking on our door. The energy is there. With all this excitement, I’ve been thinking about a risk that—if I'm not careful—could severely hinder my team's ability to ship on time, celebrate success, and continue work after launch: burnout.

Lightrun LogOptimizer Gets A Developer Productivity and Logging Cost Reduction Boost

Lightrun’s LogOptimizer stands as a groundbreaking automated solution for log optimization and cost reduction in logging. An integral part of the Lightrun IDE plugins, this tool empowers developers to swiftly scan their source code—be it a single file or entire projects—to identify and replace log lines with Lightrun’s dynamic logs, all within seconds.

Observability 101: What is an Observability Pipeline?

Uptrace v1.6 is available

Committed to Observability Excellence: Logz.io's Open 360 Observability Platform Takes Home Over a Dozen Winter G2 Badges

As we continue to iterate and help organizations meet their observability goals, Logz.io is thrilled to announce we’ve earned over a dozen Winter 2023 G2 Badges for our Logz.io Open 360™ essential observability platform! G2 Research is a tech marketplace where people can discover, review, and manage the software they need to reach their potential. Here are the Winter 2023 G2 Badges we’ve taken home for Application Performance Monitoring (APM) and Log Analysis.

How Observability Enhances Financial Services

Financial services and financial technology (FinTech) companies often depend upon complex infrastructure to handle their financial data. Security and compliance are paramount for these organizations, so gaining full visibility into the health and performance of these services is essential to guaranteeing security.

Evolving Cribl's Own Observability Practice at Blazing Speed

Cribl.Cloud has grown substantially since its launch, and our observability practice has developed in parallel. Gone are the early days of manageable logs and metrics, and as we continue to grow, handling that volume will become even more challenging. Internally, we used Splunk, a well-used system, as our primary event management system. With Cribl Edge nodes deployed across our entire cloud fleet, we collect logs and metrics and send them to Cribl Stream for processing and routing.