December 2021

Faster troubleshooting of microservices, containers, and Kubernetes with Dynamic Packet Capture

Troubleshooting container connectivity issues and performance hotspots in Kubernetes clusters can be a frustrating exercise in a dynamic environment where hundreds, possibly thousands, of pods are continually being created and destroyed.

Helm - Package Manager for Kubernetes

Heroku vs AWS: what to choose in 2022? - Detailed comparison

For developers, using Heroku, a Platform as a Service (PaaS), helps get our applications up and running quickly, without worrying about servers, scaling, backups, networking, and many other underlying details. Heroku is the perfect solution to start a project. But as the project grows, the needs become more complex, and moving from Heroku to Amazon Web Services (AWS) becomes more and more of a no-brainer (discover why so many CTOs decide to move from Heroku to AWS).

Kubernetes infographic: usage of cloud native technology in 2021

2021 has been an interesting year for the Kubernetes and cloud native ecosystem. Due to the pandemic, cloud adoption saw a big spike. As the year wraps up, we wanted to reflect on the top findings from the Kubernetes and cloud native operations report, and we have a cool infographic for you. The new version of the report for 2022 is due some time in January, so stay tuned!

The DevOps Handbook for Kubernetes Errors

Announcement: Pleco - the open-source Kubernetes and Cloud Services garbage collector

TL;DR: Pleco is a service that automatically removes cloud managed services and Kubernetes resources based on tags with a TTL. When using cloud provider services, whether through the UI or Terraform, you usually have to create many resources (users, VPCs, virtual machines, clusters, etc.) to host and expose an application to the outside world. When using Terraform, the deployment sometimes does not go as planned.
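The idea of tag-based expiry can be illustrated with a minimal Python sketch. It is not Pleco's code: the `ttl` tag name, the choice of seconds as the unit, and the `is_expired` helper are all assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone


def is_expired(created_at, tags, now=None, ttl_key="ttl"):
    """Return True when a resource carries a TTL tag and has outlived it.

    Assumptions (hypothetical, not Pleco's schema): the tag is named "ttl"
    and holds a lifetime in seconds, counted from the creation time.
    """
    now = now or datetime.now(timezone.utc)
    raw_ttl = tags.get(ttl_key)
    if raw_ttl is None:
        # Untagged resources are never candidates for removal.
        return False
    try:
        ttl_seconds = int(raw_ttl)
    except (TypeError, ValueError):
        # A malformed tag is treated as "do not delete".
        return False
    return now >= created_at + timedelta(seconds=ttl_seconds)


def select_expired(resources, now=None):
    """Filter a list of {"name", "created_at", "tags"} dicts down to expired ones."""
    return [r for r in resources if is_expired(r["created_at"], r["tags"], now=now)]
```

Treating missing or malformed tags as "keep" is a deliberately conservative choice in this sketch, so that only resources explicitly marked with a valid TTL are ever selected.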

Codefresh 2021: Year In Review

Codefresh has a very clear mission to enable enterprise teams to confidently deliver software at scale. We are incredibly grateful to our customers who are succeeding with deployments to the cloud, on-prem, and at the edge. Codefresh is powering critical software delivery for some of the world’s most popular gaming and media companies as well as regulated environments in hospitals, at banks, and for defense. So this post is dedicated to all of you who have enabled Codefresh to grow!

2021 Kubernetes on Big Data Report: Data Management | Pepperdata

Decoding Kubernetes: Is Robin the industry's answer to enterprise demands?

If the last few years have taught us anything, it is that digital transformation is an inevitable reality for all industries, across the globe. Enterprises are running thousands of applications to meet growing customer needs. Data centers are continuously evolving to cater to these applications, with yesterday’s siloed, on-premises versions eventually making way for the hybrid cloud models that we see today.

The rise of private 5G: Why enterprises are pushing play on private 5G deployments

Private 5G is becoming the technology of choice for organizations worldwide. Especially with the advent of Industry 4.0, private 5G has gained traction in emerging sectors, like smart manufacturing, where low latency, high capacity and data security are all critical parameters to business success. Recent studies have revealed that private 5G is becoming a key business strategy for CIOs, with the vast majority planning to deploy it in standalone or hybrid ecosystems within the next two years.

It's All About Developer Experience [DX]

Looking at where major DevOps trends are headed, a common theme across many tools and practices is improving the Developer Experience or DX. One paradigm of thinking is that if you improve your internal customer experience, then your external customers will benefit too. However, up until now, the Developer Experience has been quite siloed and segregated for a multitude of reasons, such as scaling or having best-of-breed technologies to support individual concerns. Presentation on DX.

How-To: Docker on Windows and Mac with Multipass

If you’re looking for an alternative to Docker Desktop or to integrate Docker into your Multipass workflow, this how-to is for you. Multipass can host a Docker engine inside an Ubuntu VM in a manner similar to Docker Desktop. That Docker instance can be controlled either directly from the VM, or remotely from the host machine with no additional software required. This allows you to run Docker locally on your Windows or Mac machine directly from your host terminal.

Protect Cloud Native Applications from Log4Shell with VMware Tanzu Service Mesh

VMware has published a detailed analysis of the Log4Shell exploitation, explaining how VMware security products are helping in multiple ways to detect and contain the exploit. Source: Swiss Government Computer Emergency Response Team.

How To Use Buildpacks To Run Containers

The high demand to deliver software that is both highly available and able to meet customer requests has, in part, led to the adoption of microservice architecture, a software architecture pattern that makes it easier to deploy applications as self-contained entities called containers. These containers are nothing but processes that run as long as the application in them is running.

Monitor Kubernetes with Fairwinds Insights' offering in the Datadog Marketplace

Fairwinds Insights is Kubernetes governance and security software that enables DevOps teams to monitor and prevent configuration problems in their infrastructure and applications. Not only does Fairwinds simplify Kubernetes complexity, but it also reduces risk by surfacing security and reliability issues in your Kubernetes clusters.

Kubewarden Global Online Meetup 2021

Make Your Move to Multi-Cloud Kubernetes with VMware Tanzu

Harvester: A Modern Infrastructure for a Modern Platform

Cloud platforms are not new — they have been around for a few years. And containers have been around even longer. Together, they have changed the way we think about software. Since the creation of these technologies, we have focused on platforms and apps. And who could blame anyone? Containers and Kubernetes let us do things that were unheard of only a few years ago.

Automatically Manage DNS for Kubernetes with ExternalDNS and Tanzu Mission Control Catalog

If you have ever deployed Kubernetes services, you understand the pain of having to maintain DNS records for an ever-growing number of internal and external services. ExternalDNS helps address this pain and reduces the amount of toil required for manual record keeping by programmatically updating DNS servers. Before we get into the details of how that works, let’s quickly review what functionality the ExternalDNS package provides.

What's new in Sysdig - December 2021

Here we are with the final “What’s new in Sysdig” monthly newsletter of the year. First of all, Merry Christmas, メリークリスマス, Buon Natale, 성탄을 축하드려요, С рождеством!, Vrolijk kerstfeest, Feliz Navidad! Whatever you may be celebrating, we wish you a wonderful holiday season from all of us at Sysdig!

Are you ready for 2022?

2021 has been a crazy year for the Qovery team. When I look back, one year ago there were 4 of us on the team; now we are 15! We expect to double the size of the team in 2022 while keeping our developer DNA. 2022 looks bright, and we strive to make the cloud simple for everyone.

Stop Using Branches for Deploying to Different GitOps Environments

In our big guide for GitOps problems, we briefly explained (see points 3 and 4) how the current crop of GitOps tools don’t really cover the case of promotion between different environments or how even to model multi-cluster setups. The question of “How do I promote a release to the next environment?” is becoming increasingly popular among organizations that want to adopt GitOps.

Log4j and VMware Tanzu Application Service

Experiment with Calico BGP in the Comfort of Your Own Laptop!

Yes, you read that right – in the comfort of your own laptop, as in, the entire environment running inside your laptop! Why? Well, read on. It’s a bit of a long one, but there is a lot of my learning that I would like to share. I often find that Calico Open Source users ask me about BGP, and whether they need to use it, with a little trepidation. BGP carries an air of mystique for many IT engineers, for two reasons.

Deploy your application on AWS in 3 minutes with John Gramila

Our $350M funding round will accelerate our cloud and container security momentum into global scale

I am excited to announce today that we have raised an additional $350M at a valuation of $2.5B, more than doubling our valuation and bringing our cumulative funding since inception to ~$750M. This funding reflects investor conviction in our ability to be the dominant cloud and container security platform, and brings us closer to our vision of helping every organization to confidently run modern, cloud-native applications.

Sysdig 2021: End of year business announcement

Continuous Innovation With D2iQ Kubernetes Platform 2.1

Here at D2iQ, we spend a lot of time listening to our customers, and we welcome any feedback that can make it easier for organizations to get into production at scale with Kubernetes. Today, we’re pleased to announce the general availability of the D2iQ Kubernetes Platform (DKP) version 2.1, including D2iQ Konvoy 2.1 and Kommander 2.1.

Deploying Your App Using Shipa and Azure Pipelines

In this article, you will learn a bit about how you can deploy an app to Kubernetes in an IaC way using Shipa and Azure Pipelines. Shipa is a unique product that solves one of the main issues that developers face while developing cloud native applications on Kubernetes. Learning Kubernetes at a fast pace is difficult for most new developers, and that is where Shipa comes to the rescue.

FinOps Tools: Supercharge Your Investment with Optimization

2021 Pepperdata Survey: The Reality of Kubernetes in Action

GitHub Actions for Kubernetes application policies

A while ago, we wrote about using GitHub Actions to enable a developer-friendly CD process for Kubernetes. The goal was to show how DevOps or Platform Engineers could give developers an easy way to define and deploy their applications themselves with the target of implementing a self-service model.

Bare metal Kubernetes: The 6 things you wish you knew before 2022

2022 is right around the corner, and it’s not just time to prepare for Christmas, play video games, buy presents, or share anti-Christmas memes. It’s time to start making some predictions for bare metal Kubernetes! Take a minute and let’s think about it. Developers have Advent of Code, so they’re busy right now. Sysadmins and DevOps engineers can play games like predicting what’s going to happen next year for bare metal Kubernetes.

VMware Tanzu Kubernetes Grid Integrated: A Year in Review

The modern application world is advancing at an unprecedented rate. However, the new possibilities these transformations make available don’t come without complexities. IT teams often find themselves under pressure to keep up with the speed of innovation. That’s why VMware provides a production-ready container platform for customers that aligns to upstream Kubernetes, VMware Tanzu Kubernetes Grid Integrated (formerly known as VMware Enterprise PKS).

IT Leaders Expect to Move More Big Data Jobs to Kubernetes by January, 2022

Using GitOps for Infrastructure and Applications With Crossplane and Argo CD

If you have been following the Codefresh blog for a while, you might have noticed a common pattern in all the articles that talk about Kubernetes deployments. Almost all of them start with a Kubernetes cluster that is already there, and then the article explains how to deploy an application on top. The reason for this simplification comes mainly from brevity and simplicity. We want to focus on the deployment part of the application and not its infrastructure just to make the article easier to follow.

The 5 main reasons why startups leave Heroku for AWS

Heroku is a cloud-based platform that helps companies build, deliver, monitor, and scale applications with high velocity. Heroku's popularity is due to its simplicity, usability, elegance, and focus on the developer experience. Developers find Heroku helpful as they can get their application up and running with only minimal focus on configuring infrastructure. Heroku scores well on the ease of architecting apps, deploying them to flexible cloud infrastructure, and scaling them as required.

Civo Hackathon highlights - Cluster API provider for Civo

Install Qovery on your AWS account in 5 minutes with John Gramila

Tanzu Talk: How to Draw an Owl, and, how VMware Tanzu helps you transform

Everything You Should Know About How Kubernetes Works

So you’ve heard of Kubernetes, and you’ve decided it’s time to dive into what everyone’s talking about. Or maybe you’ve worked with it a little, but you’d like to level up.

Observing Kubernetes With LM Logs

FinConDX 2021 Talk "Developer Experience (DX), Crucial to any Financial Services Transformation"

Tackling Your Application Portfolio Modernization Strategy

By Annie Lin (Director of Digital Transformation, VMware), Matt Campbell (Solutions Architect, VMware) and Brandon Blincoe (Program Strategist, VMware) IT teams face a growing web of complexity in their application portfolios. These portfolios are ever-changing and often include in-house custom apps, new apps added through acquisitions, or commercial off-the-shelf software.

Tanzu Talk: Figuring storage in Kubernetes, or, how do you do storage in Kubernetes?

Unifying VM and microservice monitoring with Kubernetes, Prometheus, and Grafana

According to a 2020 CNCF survey, the use of containers in production has been rapidly increasing for the past several years. Nutanix, a global leader in cloud software and a pioneer in hyperconverged infrastructure solutions, is part of that trend.

Overview of VMware Tanzu Application Platform Beta 2

Overview of VMware Tanzu Application Platform Beta 3

Preventing Kubernetes misconfigurations and deprecations with Datree

Civo Hackathon highlights - COVID-19 prediction with AI powered by Civo

Building application-ready clusters with Crossplane

Much has been written over the years about DevOps and, maybe a bit more recently, about Platform Engineering. Both jobs focus heavily on designing, building, maintaining, extending, and automating underlying infrastructure components (e.g., Kubernetes, monitoring, security, pipelines, etc.), so their end-users, often developers, can consume it as an integrated platform.

Share and Reuse Your Argo Workflows with the Codefresh Hub for Argo

Anyone who builds a lot of Argo workflows knows that after a while you end up reusing the same basic steps over and over again. While Argo Workflows has a great mechanism to prevent duplicate work, with templates, these templates have mostly stayed in people’s private repositories and haven’t been shared with the broader community.

Terraform vs Pulumi: What to Use in 2022?

Traditionally, provisioning an infrastructure meant a team of field engineers, system admins, storage admins, backup admins, and an application team would all provision and maintain an on-premises data center. Although this system works, it has a few flaws—slow deployment, high cost of setup and maintenance, limited automation, human error, inconsistency, and the underutilization of resources during off-peak periods.

Helping You Benefit from our Pluggable eBPF Data Plane - Introducing the New Calico eBPF Data Plane Certification

Calico is the industry standard for Kubernetes networking and security. It offers a proven platform for your workloads across a huge range of environments, including cloud, hybrid, and on-premises. Calico has had a high-quality, production-ready, performant eBPF data plane option for some time! However, although many users are deploying it in production and benefitting, we still sometimes see users who don’t know that Calico has an eBPF data plane or who don’t feel confident deploying it.

Automating and Operationalizing Shipa - Shipa Autowire Framework

Shipa in your organization/team can help usher in the next generation of engineering efficiency and developer experience. As with any platform, though, some wiring is required to bind Shipa to infrastructure. In this modern example, the framework can plug into your IaC strategy for creating Kubernetes clusters and then auto-wire all of the needed Shipa pieces at cluster creation time.

How to Delete Pods from a Kubernetes Node

When administering your Kubernetes cluster, you will likely run into a situation where you need to delete pods from one of your nodes. You may need to debug issues with the node itself, upgrade the node, or simply scale down your cluster. Deleting pods from a node is not very difficult; however, there are specific steps you should take to minimize disruption for your application.

Improving continuous verification: deploy fast and safely to production

Kubernetes and microservices have opened the door to smaller and more frequent releases, while DevOps CI/CD practices and tools have sped up software development and deployment processes. The dynamic nature of these cloud native architectures makes modern applications not just complex but also difficult to monitor, and makes their problems difficult to find and fix.

Crossplane and Shipa Webinar

The Pain of Infrequent Deployments Webinar (Part 2 of 3)

How to Increase Developer Productivity with a Local Kubernetes Cluster

At VMware Tanzu, we firmly believe that a deployment platform should improve developer productivity and drive the DevSecOps model of working. VMware Tanzu Labs ANZ is working on an engagement with a customer that illustrates this in the real world.

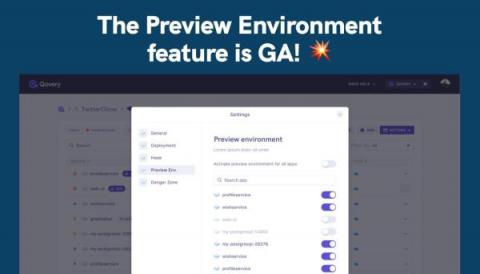

The Preview Environment feature is GA!

I am super excited to announce that our Preview Environment feature is GA (Generally Available) for everyone 🥳.

How Suborbital utilises K3s features with Civo

Bare metal Kubernetes hands on tutorial with MAAS and Juju

Using Codefresh with GKE Autopilot for native Kubernetes pipelines and GitOps deployment

Several companies nowadays offer a cloud-native solution that manages Kubernetes applications and services. While these solutions seem easy at first glance, in reality, they still require manual maintenance. As an example, an important decision for any Kubernetes cluster is the number of nodes and the autoscaling rules you define.

Kubernetes Identity and Access Management Made Easier with D2iQ

Managing Multi-cloud Infrastructure and Compute Cost

Machine Learning for the Financial Sector using D2iQ's Kaptain

Machine Learning for Healthcare using D2iQ Kaptain

Kubernetes at Scale on the Public Cloud - D2iQ with Forrester

Civo update - December 2021

Last month saw the first ever Civo Hackathon take place and we saw individuals and teams from across the globe submit some really exciting, innovative projects. We've also launched a new Partner Program designed to help your fledgling business grow and scale with ease. Continue reading to find out more.

Expanding VMware Tanzu Application Service with the Cloud Service Broker for AWS

Getting Started with Tanzu Community Edition: A Technical Overview

How Tanzu Application Platform Profiles Work

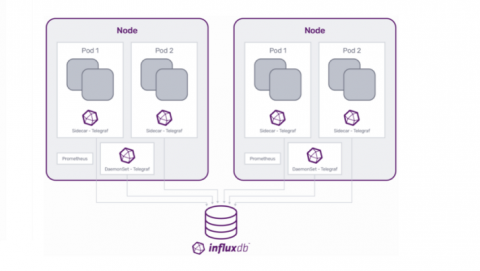

Expand Kubernetes Monitoring with Telegraf Operator

Monitoring is a critical aspect of cloud computing. At any time, you need to know what’s working, what isn’t, and have the ability to respond to changes occurring in a given environment. Effective monitoring begins with the ability to collect performance data from across an ecosystem and present it in a useful way. So the easier it is to manage monitoring data across an ecosystem, the more effective those monitoring solutions are and the more efficient that ecosystem is.

Civo Hackathon highlights - Speech to text using Civo Kubernetes

We lost 3800 stars on GitHub in 1 click

Yesterday was my worst day for a very long time. We spent so much time promoting our Qovery Engine to the open-source community and getting those ~3800 stars. I woke up and discovered that we had lost all the stars and forks from our repository. But what happened?

Modernizing Kubernetes Security and App Development

Running Mission-Critical Applications on Kubernetes in Production at Scale with DKP

New in the Kubernetes integration for Grafana Cloud: curated dashboards, built-in alerts, and more

Back in May, we announced the Kubernetes integration to help users easily monitor and alert on core Kubernetes cluster metrics using the Grafana Agent, our lightweight observability data collector optimized for sending metric, log, and trace data to Grafana Cloud. Since the original release, we’ve added new features and enhancements to help our users go even further.

Calico WireGuard support with Azure CNI

Last June, Tigera announced a first for Kubernetes: supporting open-source WireGuard for encrypting data in transit within your cluster. We never like to sit still, so we have been working hard on some exciting new features for this technology, the first of which is support for WireGuard on AKS using the Azure CNI. First a short recap about what WireGuard is, and how we use it in Calico.

Your First Shipa Webhook - Microsoft Teams Integration

One more “ops” term like DevOps is ChatOps, or conversation-based development/operations. ChatOps has been growing in popularity as communication platforms such as Slack have become ingrained in our day-to-day engineering lives. A team lead once told me “if it didn’t happen in Slack, it didn’t happen,” showing the emphasis on communication platforms as a system of record.

Deploy to Any Kubernetes Cluster Type with New Tanzu Mission Control Catalog Feature

Deploying packages to distributed Kubernetes clusters is time-consuming. Those in charge of provisioning and preparing infrastructure for application teams know the pain of preparing clusters for production. Provisioning is only the start of a laborious process required to prepare a cluster. Once the cluster is up and running, deploying tools for things like monitoring and security is a DevOps imperative.

Applied GitOps with Kustomize

Have you always wanted to have different settings between production and staging but never knew how? You can do this with Kustomize! Kustomize is a CLI configuration manager for Kubernetes objects that leverages layering to preserve the base settings of the application. This is done by overlaying the declarative YAML artifacts to override default settings without actually making any changes to the original manifest.
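The layering idea can be sketched in a few lines of Python. This is a simplified deep merge over dictionaries, meant only to show how an overlay can override defaults while the base stays untouched; it is not how Kustomize itself is implemented, and the `apply_overlay` helper and the sample manifest are hypothetical.

```python
import copy


def apply_overlay(base, overlay):
    """Return a new dict with overlay values layered on top of base.

    Nested dicts are merged key by key; any other value in the overlay
    replaces the base value. The base object is never modified.
    """
    merged = copy.deepcopy(base)
    for key, value in overlay.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = apply_overlay(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical base manifest, already parsed from YAML into a dict.
base = {
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {"replicas": 1, "template": {"image": "web:1.0"}},
}

# A production overlay that only states what differs from the base.
production = apply_overlay(base, {"spec": {"replicas": 5}})
```

Here `production` carries five replicas while keeping the base image, and `base` still describes a single replica, which mirrors the "override without editing the original" property described above.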

Demo: VMware Tanzu Application Platform with Amazon EKS

How to Install Rancher Desktop on Linux

Shipa Application Discovery

Application Discovery Tutorial

Systems and platforms continue to grow more complex and distributed. The march towards distributed microservices has been accelerated by Kubernetes; arm yourself with a Kubernetes manifest, up your replica count, and like magic, you have more than one endpoint for your workload. In the Kubernetes ecosystem, there has been a lot of investment on the infrastructure side of the house, for example, in making sure clusters are performant and have the ability to scale.

Access a Streamlined DevX for Amazon EKS and Extend the Power of AWS to More Apps with VMware

We’ve all heard the proverb “necessity is the mother of invention.” But have you stopped to consider how very true that is for enterprise applications? Docker invented the lightweight container runtime to answer the needs of agile development teams building cloud native apps. The growing ubiquity of containers necessitated the invention of a way to manage them in large numbers across fleets of machines—what we now know as Kubernetes.