October 2022

Tales from the Kernel Parameter Side

Users live in the sunlit world of what they believe to be reality. But, there is, unseen by most, an underworld. A place that is just as real, but not as brightly lit. The Kernel Parameter side (apologies to George Romero). Kernel parameters aren’t really that scary in actuality, but they can be a dark and cobweb-filled corner of the Linux world. Kernel parameters are the means by which we can pass parameters to the Linux (or Unix-like) kernel to control how it behaves.

"How was your KubeCon experience?" - Civo TV

How To Unlock Granular Kubernetes Cost Metrics

Is Kubernetes Still the Best Container Orchestration Tool?

What's new in Sysdig - October 2022

October has, as usual, been a busy month, and Sysdig announced many new features. In Sysdig Monitor, we announced the release of four new Advisories and YAML config support for Advisor. In Sysdig Secure, we released Severity filtering in Insights, a Pod and Node activity view in Insights, and four new Falco rules in the Rules Library. Each of these is discussed in detail below.

FutureNet Asia Fireside Chat: The Journey to Cloud-Native

Kubewarden 1.3 is Here

The Kubewarden development team is happy to announce the release of the Kubewarden 1.3 stack. In addition to the usual amount of small fixes, this release focused on the following themes. If you’re not familiar with Kubewarden, it is a policy engine for Kubernetes. Its mission is to simplify the adoption of policy-as-code.

25 Kubernetes Monitoring Tools And Best Practices In 2022

Advanced Kubernetes interview questions

In the second part of our “Kubernetes interview questions” series, we have outlined ten questions to help those that want to take their Kubernetes knowledge to the next level. Read on to learn more about the difference between Kubernetes and Docker Swarm. We’ll also be covering how an organization can keep costs low using Kubernetes. If you missed part one, check it out here.

Should you put all your trust in the tools?

My father worked with some of the very first computers ever imported to Italy. It was a time when a technician was a temple of excellence built on three pillars: on-the-field experience, a bag of technical manuals, and a fully-stocked toolbox. It was not uncommon that missing the right manual or the correct replacement part turned into a day-long trip from the customers’ site to headquarters and back.

Run self-hosted CI jobs in Kubernetes with container runner

Container runner, a new container-friendly self-hosted runner, is now available for all CircleCI users. Self-hosted runners are a popular solution for customers with unique compute or security requirements. Container runner reduces the barrier to entry for using self-hosted runners within a containerized environment and makes it easier for central DevOps teams to manage running containerized CI/CD jobs behind a firewall at scale.

Harvester 1.1.0: The Latest Hyperconverged Infrastructure Solution

The Harvester team is pleased to announce the next release of our open source hyperconverged infrastructure product. For those unfamiliar with how Harvester works, I invite you to check out this blog from our 1.0 launch that explains it further. This next version of Harvester adds several new and important features to help our users get more value out of Harvester. It reflects the efforts of many people, both at SUSE and in the open source community, who have contributed to the product thus far.

Success in the Cloud: How to Avoid Kubernetes Deployment Pitfalls

For organizations looking to succeed in their modernization efforts, our upcoming webinar will offer insights that could help you avoid the missteps that have caused other Kubernetes efforts to fail. Although Kubernetes has become the de facto standard platform for cloud-native digital innovation, it is a complex technology that requires sophisticated expertise to implement correctly, and that expertise is in short supply.

SUSE Edge 2.0: A Cloud Native Solution to Manage Edge

We are proud to introduce SUSE Edge 2.0, which will empower customers to accelerate and scale edge infrastructures and transform edge operations.

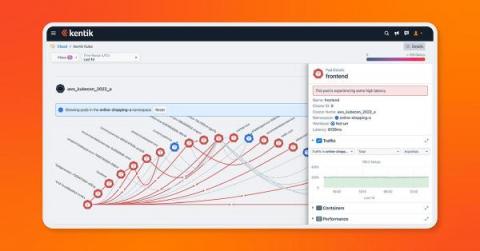

Kentik Kube extends network observability to Kubernetes deployments

We’re excited to announce our beta launch of Kentik Kube, an industry-first solution that reveals how K8s traffic routes through an organization’s data center, cloud, and the internet. With this launch, Kentik can observe the entire network — on prem, in the cloud, on physical hardware or virtual machines, and anywhere in between.

5 Developer Horror Stories by the Qovery Team

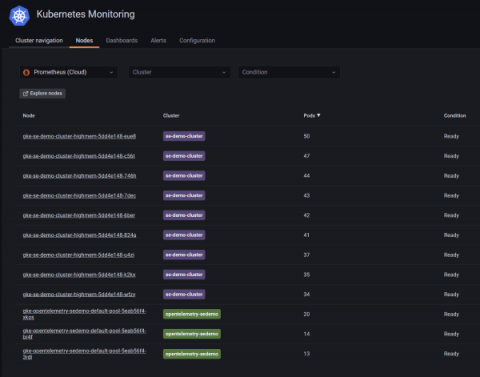

How to monitor the health and resource usage of Kubernetes nodes in Grafana Cloud

The spine is essential to perform every activity, like crawling, walking, or swimming. Just as the spine is necessary to enable these functions, your Kubernetes infrastructure needs a backbone to be efficient and effective. So if Kubernetes clusters act as the spine of your architecture, then Kubernetes nodes are like the vertebrae — they make up a Kubernetes cluster in the same way the vertebrae form the spinal column.

Unified Observability: Announcing Kubernetes 360

Ask any cloud software team using Kubernetes (and most do); this powerful container orchestration technology is transformative, yet often truly challenging. There’s no question that Kubernetes has become the de-facto infrastructure for nearly any organization these days seeking to achieve business agility, developer autonomy and an internal structure that supports both the scale and simplicity required to maintain a full CI/CD and DevOps approach.

Scanning Secrets in Environment Variables with Kubewarden

We are thrilled to announce you can now scan your environment variables for secrets with the new env-variable-secrets-scanner-policy in Kubewarden! This policy rejects a Pod, or a workload resource such as a Deployment, ReplicaSet, DaemonSet, ReplicationController, Job, or CronJob, if a secret is found in an environment variable of a container, init container, or ephemeral container. Secrets leaked in plain text or in base64-encoded variables are detected.

Komodor Introduces New Companion Tool For Helm

Today, I am happy to see the public release of Helm-Dashboard, Komodor’s second open-source project, after ValidKube, and my first since joining the team as Head of Open Source. It’s a compelling challenge to try and solve the pain points of Helm users, but more than anything it’s a labor of love. So it is with love that we’re now sharing this project with the community, and I’m excited to imagine where it will go from here.

Getting started with Civo Academy

Here at Civo, we have created over 50 free video guides and tutorials to help you navigate Kubernetes: from understanding the basic need for and function of containers, to launching and scaling your first clusters. You can start learning everything you need to know to get started with Kubernetes today with our nine modules which were created by in-house experts at Civo!

Celebrating Over 13,000 Students And Thousands Achieving GitOps Certification with Argo

Earlier this year, when Codefresh announced the first course in our GitOps for Argo certification program – GitOps Fundamentals – we had high hopes that the course would satisfy the community’s pent-up demand for practical GitOps knowledge. To meet this demand, we designed a course that features lab environments to dramatically improve the learning experience. Each student gets a lab environment pre-configured with everything they need to learn GitOps using Argo CD.

SUSE Rancher and Komodor - Continuous Kubernetes Reliability

With 96% of organizations either using or evaluating Kubernetes and over 7 million developers using Kubernetes around the world, according to a recent CNCF report, it’s safe to say that Kubernetes is eating up the world and has become the de-facto orchestrating system of cloud-native applications. The benefits of adopting K8s are obvious in terms of efficiency, agility, and scalability.

Using CI/CD to deploy web applications on Kubernetes with ArgoCD

GitOps modernizes software management and operations by allowing developers to declaratively manage infrastructure and code using a single source of truth, usually a Git repository. Many development teams and organizations have adopted GitOps procedures to improve the creation and delivery of software applications. For a GitOps initiative to work, an orchestration system like Kubernetes is crucial.

Zen and the Art of Kubernetes Monitoring

The real beauty of this modern, cloud-fueled, DevOps-driven world that we are living in is that it’s so highly composable. In so many ways, we’ve been freed from the limitations and structures of the previous annals of software and technology history to build things the way that we want to, and however we choose to do so.

When Cloud Native Stacks Misbehave - Pitfalls and Lessons Learned | Itiel Shwartz (Komodor)

How to Orchestrate your Django application with Kubernetes

Do you have an application built with Django and PostgreSQL that you’d like to run on Kubernetes? If so, you’re in luck! In this tutorial, you’ll learn how to orchestrate your Django application with Kubernetes. Since we’re working with multiple microservices, it can be difficult to ensure all parts work together. This tutorial will demystify all that.

How to manage high cardinality metrics in Prometheus and Kubernetes

Over the last few months, a common and recurring theme in our conversations with users has been managing observability costs, which are increasing at a rate faster than the footprint of the applications and infrastructure being monitored. As enterprises lean into cloud native architectures and the popularity of Prometheus continues to grow, it is not surprising that metrics cardinality (a cartesian combination of metrics and labels) also grows.
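The "cartesian combination" can be illustrated with a little arithmetic. The sketch below, in Python, estimates an upper bound on the number of time series for a metric as the product of the distinct values each label can take. The metric name, label names, and counts are hypothetical, and the real series count is usually lower because not every label combination actually occurs.

```python
from math import prod


def series_upper_bound(label_value_counts):
    """Upper bound on series for one metric: product of distinct values per label.

    A metric with no labels yields 1, since prod() of an empty iterable is 1.
    """
    return prod(label_value_counts.values())


def total_upper_bound(metrics):
    """Sum the per-metric upper bounds across all metrics."""
    return sum(series_upper_bound(labels) for labels in metrics.values())


# Hypothetical metric: 4 HTTP methods x 5 status codes x 50 pods.
base_labels = {"method": 4, "status": 5, "pod": 50}
base_series = series_upper_bound(base_labels)

# Adding one unbounded label (say, a user ID with 10,000 values) multiplies the bound.
with_user_id = series_upper_bound({**base_labels, "user_id": 10_000})

total = total_upper_bound({
    "http_requests_total": base_labels,
    "process_start_time_seconds": {},
})
```

Here `base_series` is 1,000 while `with_user_id` is 10,000,000, which is why a single high-cardinality label can dominate observability costs.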

Iterating on an OpenTelemetry Collector Deployment in Kubernetes

When you want to direct your observability data in a uniform fashion, you want to run an OpenTelemetry collector. If you have a Kubernetes cluster handy, that’s a useful place to run it. Helm is a quick way to get it running in Kubernetes; it encapsulates all the YAML object definitions that you need. OpenTelemetry publishes a Helm chart for the collector. When you install the OpenTelemetry collector with Helm, you’ll give it some configuration.

This is Komodor

Gain Competitive Advantage Through Cloud Native Technology

Organizations around the globe recognize the importance of digital transformation to respond to the demands of the modern world. Organizations can advance their business by adapting technology, processes and tools. By doing so, they can increase flexibility, efficiency, security and improve customer experiences to boost success and gain competitive advantage. However, digital transformation is not always easy.

What is container orchestration?

Containerization is a type of virtualization in which a software application or service is packaged with all the components necessary for it to run in any computing environment. Containers work hand in hand with modern cloud native development practices by making applications more portable, efficient, and scalable.

Cost Advisor: Optimize and Rightsize your Kubernetes Costs

Kubernetes has broken down barriers as the cornerstone of cloud-native application infrastructure in recent years. In addition, cloud vendors offer flexibility, speedy operations, high availability, SLAs (service-level agreements) that guarantee your service availability, and a large catalog of embedded services. But as organizations mature in their Kubernetes journey, monitoring and optimizing costs is the next stage in their cloud-native transformation.

Sysdig Cost Advisor: Optimize your Kubernetes Costs

Intel Optimization Hub

Cloud Service Providers (CSPs) offer an ever-expanding array of instance types, ensuring that for any given workload there exists the perfect hosting option that matches the exact needs of that app or business service. But with this expansion comes an ever-increasing challenge to match the workloads to the offerings – there are many things to consider.

Kubernetes Load Balancers Guide - Civo Academy

Five Reasons to Use Base Container Images

Nowadays, the software development paradigm is based on containerizing applications and deploying them in pods for Kubernetes to manage. Kubernetes can then handle deployment, replication, high availability, metrics and other capabilities so that the application can focus on doing what it was designed to do. This technology is used for projects and by customers all over the globe.

Building a Fleet of GKE Clusters with Argo CD with Nick Ebert, Google

Container Observability

The Ultimate Guide to Containers and Why You Need Them

Containers have long been used in the transportation industry. Cranes pick up containers and shift them onto trucks and ships for transportation. Container technology is handled in a similar vein in the software world. A container is a new and efficient way of deploying applications. A container is a lightweight unit of software that includes application code and all its dependencies such as binary code, libraries, and configuration files for easy deployment across different computing environments.

0 to Observable: From Kubernetes Logs to Container Observability with Coralogix

NodePort and Headless Service for Kubernetes - Civo Academy

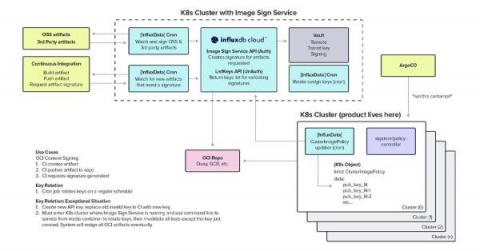

Stop Trusting Container Registries, Verify Image Signatures

One of InfluxData’s main products is InfluxDB Cloud. It’s a cloud-native, SaaS platform for accessing InfluxDB in a serverless, scalable fashion. InfluxDB Cloud is available in all major public clouds. InfluxDB Cloud was built from the ground up to support auto-scaling and handling different types of workloads. Under the hood, InfluxDB Cloud is a Kubernetes-based application consisting of a fleet of micro-services that runs in a multi-cloud, multi-region setup.

How Calico CNI solves IP address exhaustion on Microsoft AKS

Companies are increasingly adopting managed Kubernetes services, such as Microsoft Azure Kubernetes Service (AKS), to build container-based applications. Leveraging a managed Kubernetes service is a quick and easy way to deploy an enterprise-grade Kubernetes cluster and offload mundane operations such as provisioning new nodes, upgrading the OS/Kubernetes, and scaling resources according to business needs.

Under 10 minutes: Kubernetes Logs into Coralogix using Open Telemetry

How Cortex can help you get the most out of Kubernetes

Bridging the Gap Between Applications and Kubernetes Environments

Organizations are eagerly adopting containers and Kubernetes, investing in cloud-native to foster innovation and growth. According to the CNCF and Slashdata, nearly 5.6 million developers use Kubernetes. That’s 31% of all backend developers. We all know that Kubernetes is a great container management platform.

Learn about the Basics of ClusterIP - Civo Academy

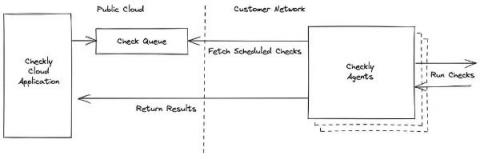

Autoscaling Checkly Agents with KEDA in Kubernetes

Checkly private locations enable you to run browser and API checks from within your own infrastructure. This requires one or more Checkly agents installed in your environment where they can reach your applications and our Checkly management API. You need to ensure you have enough agents installed in order to run the number of checks configured in the location. We have a guide to planning for redundancy and scaling in our documentation.

From DevOps to Platform Engineer: 6 Things You Should Consider

How to Install and Upgrade Argo CD

We have already covered several aspects of Argo CD in this blog such as best practices, cluster topologies and even application ordering, but it is always good to get back to basics and talk about installation and more importantly about maintenance. Chances are that one of your first Argo CD installations happened with kubectl as explained in the getting started guide.

EKS Cost Optimization: 7 Best Practices To Apply Immediately

How to Tail Kubernetes Logs: Using the Kubectl Command to See Pod, Container, and Deployment Logs

Logs are a critical aspect of any production workload, as they give you insight into what is happening in your system and tell you which components may be having issues. The traditional method of looking at logs involves basic Linux commands like tail, less, or sometimes cat.

How to monitor Istio with Sysdig

In this previous article, we talked about how to monitor the Istio service mesh in Kubernetes with the out-of-the-box observability stack. This time, we will walk you through monitoring the Istio service mesh with Sysdig Monitor and how to troubleshoot issues. Istio service mesh provides special characteristics and functionalities for microservices running on Kubernetes.

5 key benefits of Kubernetes monitoring

Kubernetes made it much easier to deploy and scale containerized applications, but it also introduced new challenges for IT teams trying to keep tabs on these newly distributed systems. Ops teams need proper visibility into their Kubernetes clusters so they can track performance metrics, audit changes to deployed environments, and retrieve logs that help debug application crashes.

Beginners Guide to Kubernetes Services - Civo Academy

Fargate Vs. Lambda: The Last Comparison You'll Ever Need

Content creation best practices for DevRels by @Eddie Jaoude

If Jimi Hendrix Were Your CIO, You'd Be Rocking Smart Cloud Native

Jimi Hendrix was an innovator who pushed musical boundaries by employing leading-edge technologies as fast as they were invented. The new guitar effects he adopted in the late 1960s included fuzz, Octavia, wah, and Uni-Vibe pedals. Jimi would gobble up these guitar pedals and incorporate them into his sound to create wildly creative sonic experiences.

Viewing OpenTelemetry Metrics and Trace Data in Observability by Aria Operations for Applications

Modern application architectures are complex, typically consisting of hundreds of distributed microservices implemented in different languages and by different teams. As a developer, site-reliability engineer, or DevOps professional, you are responsible for the reliability and performance of these complex systems. With observability, you can ask questions about your system and get answers based on the telemetry data it produces.

Hyperscaling the open-RAN ecosystem, exploring virtualized open-RAN and cloud-native deployment

New Vulnerability Scanning Features in Tanzu Application Platform 1.3

From Static to Dynamic Environments (Why and How)

VMware Tanzu Application Platform 1.3 Improves Developer Productivity and Simplifies DevSecOps

At VMware Explore 2022, we pre-announced new capabilities in VMware Tanzu Application Platform 1.3. Today, we’re excited to announce general availability of these capabilities to further enhance developer and application operator experiences on any Kubernetes environment, increase supply chain security, and offer additional ecosystem integrations.

Containers vs virtual machines: what is the difference?

In computing, virtualization is the creation of a virtual, as opposed to a physical, version of computer hardware platforms, storage devices, and network resources. Virtualization creates virtual resources from physical resources, like hard drives, central processing units (CPUs), and graphics processing units (GPUs). By virtualizing resources, you can combine a network of resources into what appears to users as one object.

Production Data Simulation: Record in One Environment, Replay in Another

Have you ever experienced the problem where your code is broken in production, but everything runs correctly in your dev environment? This can be really challenging because you have limited information once something is in production, and you can't easily make changes and try different code. Speedscale production data simulation lets you securely capture the production application traffic, normalize the data, and replay it directly in your dev environment. There are a lot of challenges with trying to replicate the production environment in non-prod.

Demystifying the complexity of cloud-native 5G network functions deployment using Robin CNP - Part II

Now that we have discussed the networking part, the next step is placing the application into a host. Robin.io’s cloud platform has the concept of master, compute, and storage nodes. Typically, the hardware servers would have multiple NUMA nodes. In order to achieve the best performance, the platform should utilize the resources from the same NUMA node. Failing this, if users are consuming a resource from another NUMA node, their performance would degrade.

The Power Of Combining Kubernetes And Non-Kubernetes Cloud Spend

Don't sweat the network costs, Ocean provides application cost visibility to your Kubernetes cluster

A Beginners Guide to Container Network Interfaces (CNI) - Civo Academy

The 11 Best Docker Alternatives In 2022

Automate Troubleshooting of Applications Running on Kubernetes

StackState is an out-of-the-box solution to observe your entire Kubernetes stack, identify problems, automatically highlight the changes that cause them and provide the full context you need for efficient and effective troubleshooting. Our clear and affordable pricing makes it easy to get started today.

How to build an EKS kubernetes cluster with Ubuntu 20.04 on FIPS mode

Kubernetes Node-to-node Networking - Civo Academy

More on the State of Kubernetes 2022 survey

Why is Open Source Important to Enterprise IT Leaders?

A recent global survey demonstrates the importance of open source tools and technologies to IT professionals and their organizations. In Foundry’s MarketPulse Survey for SUSE, 2022, more than 600 IT professionals from enterprises around the world shared their experience and opinions on cloud native technology and open source. The results? Sixty-three percent of those surveyed said it was highly important for their organizations to choose open source tools and technologies.

Rancher Desktop 1.6.0: With Troubleshooting Diagnostics and More

A new version of Rancher Desktop with a troubleshooting diagnostics feature and several other improvements has just been released!

VMware Tanzu Application Service 3.0 Now Generally Available

The next major release of VMware Tanzu Application Service is here. Tanzu Application Service is a modern application platform that enables enterprises to continuously deliver and run microservices across clouds, providing their application development teams with an automated path to production for custom code, while offering their operations teams a secure, highly available runtime.

Pod to Pod Networking in Kubernetes - Civo Academy

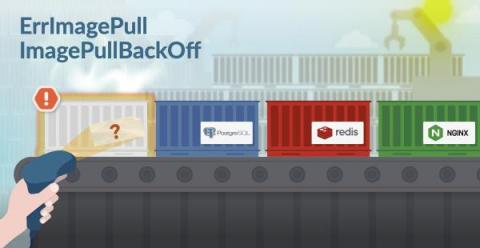

Kubernetes ErrImagePull and ImagePullBackOff in detail

Pod statuses like ImagePullBackOff or ErrImagePull are common when working with containers. ErrImagePull is an error happening when the image specified for a container can’t be retrieved or pulled. ImagePullBackOff is the waiting grace period while the image pull is fixed. In this article, we will take a look at.

Common Kubernetes Challenges in 2022 and How to Solve Them

This year’s VMware Explore saw a great deal of excitement from the multi-cloud community. It’s evident that organizations are seeking reliable ways to transform their businesses and become digitally smart. It’s also becoming increasingly apparent that organizations are looking towards Kubernetes to help them do so. In fact, the State of Kubernetes 2022 report has shown us that not only is Kubernetes here to stay, but it’s growing at a rapid pace.

Our Top 10 Kubernetes and Cloud Native Guides 2022

Since the beginning, our community has been at the forefront of what we do. Over the years, we have been able to highlight the knowledge and talent of our community by showcasing tutorials submitted to us via Write For Us. As we reach the end of 2022, we wanted to highlight some of our top guides from the Civo community that were published throughout the year.

Kubernetes Jobs Deployment Strategies in Continuous Delivery Scenarios

Continuous Delivery (CD) frameworks for Kubernetes, like the one created by Rancher with Fleet, are quite robust and easy to implement. Still, there are some rough edges you should pay attention to. Jobs deployment is one of those scenarios where things may not be straightforward, so you may need to stop and think about the best way to process them. We’ll explain here the challenges you may face and will give some tips about how to overcome them.

Monitoring Kubernetes with Hosted Graphite by MetricFire

In this article, we will be looking into Kubernetes monitoring with Graphite and Grafana. Specifically, we will look at how your whole Kubernetes set-up can be centrally monitored through Hosted Graphite and Hosted Grafana dashboards. This will allow Kubernetes Administrators to centrally manage all of their Kubernetes clusters without setting up any additional infrastructure for monitoring.

Kubernetes alternatives to Spring Java framework

Top Three Kubernetes Myths: What C-Level Executives Should Know

In the past few years, we’ve seen a rapid increase in container adoption as the go-to strategy for accelerating software development. After all, why wouldn’t you move towards containerization considering its advantages? Benefits such as application portability, IT resource efficiency, and increased agility would make any infrastructure or operations leader interested in adopting containers with Kubernetes.

Automate Calico Cloud and EKS cluster integration using AWS Control Tower

Productive, scalable, and cost-effective, cloud infrastructure empowers innovation and faster deliverables. It’s a no-brainer why organizations are migrating to the cloud and containerizing their applications. As businesses scale their cloud infrastructure, they cannot be bottlenecked by security concerns. One way to release these bottlenecks and free up resources is by using automation.

Meet Epinio: The Application Development Engine for Kubernetes

Epinio is a Kubernetes-powered application development engine. Adding Epinio to your cluster creates your own platform-as-a-service (PaaS) solution in which you can deploy apps without setting up infrastructure yourself. Epinio abstracts away the complexity of Kubernetes so you can get back to writing code. Apps are launched by pushing their source directly to the platform, eliminating complex CD pipelines and Kubernetes YAML files.

Container to Container Networking - Civo Academy

Q3 2022 product retrospective - Last quarter's top features

Qovery V3 Beta is Out

Fundamentals: What Sets Containers Apart from Virtual Machines

Containers have fast become one of the most efficient ways of virtually deploying applications, offering more agility than a virtual machine (VM) can typically provide. Both containers and VMs are great tools for managing resources and application deployment, but what is the difference between the two, and how do we manage containers?

Civo Update - October 2022

In September, we announced that Steve Wozniak will be joining us at Civo Navigate to discuss his time at Apple and share his thoughts on the future of technology. Grab your tickets for Civo Navigate today! We also hosted the Cloud Native Community meetup in Florida where we had talks from Mark Boost, CEO at Civo, Kunal Kushwaha, Dev Rel Manager at Civo, and a guest speaker from Defense.com speaking about security.

Demystifying the complexity of cloud-native 5G network functions deployment using Robin CNP - Part I

Robin.io simplifies the operations and lifecycle management of 5G applications at scale and demystifies the complexity around 5G and network functions management. The simplified end-to-end automation and App-Store-like user interface make the management of applications easy for operators. This is relevant for several reasons.

Cloud Monitoring further embraces open source by adding PromQL

As Kubernetes monitoring continues to standardize on Prometheus as a form factor, more and more developers are becoming familiar with Prometheus’ built-in query language, PromQL. Besides being bundled with Prometheus, PromQL is popular for being a simple yet expressive language for querying time series data. It’s been fully adopted by the community, with lots of great query repositories, sample playbooks, and trainings for PromQL available online.

Accelerating Application Development on Kubernetes with Tanzu Application Platform

Mphasis is a trusted VMware services partner and is a participating member of the Tanzu Partner Advisory Council. They’ve also participated in the Tanzu Application Platform Design Partner Program, which afforded them the unique opportunity to influence the future of VMware Tanzu Application Platform, by having first access to features before the general release and by providing valuable feedback to VMware’s product teams about both the developer and operator experiences.