
June 2023

How to Successfully Transition from DevOps to Platform Engineering

Are you thinking about switching from DevOps to platform engineering? You're not alone. To remain competitive, many organizations are making this crucial change as the world of software development and operations continues to evolve. This article discusses the reasons behind the transition, what it implies, and, most importantly, how your organization can successfully manage the change.

Q2 2023 Product Retrospective - Last Quarter's Top Features

Billy Beane Shows How AI Can Give You a Competitive Edge

As general manager of the Oakland Athletics, Billy Beane applied statistical analysis (also known as sabermetrics) to the evaluation of baseball players, which enabled the team to excel in the 2002 season. Beane was the subject of Michael Lewis’s book “Moneyball,” which was made into a movie starring Brad Pitt as Beane.

What's New in Sysdig - May and June 2023

This month, Sysdig has released Process Tree which enriches the Events feed for workload-based events. This helps with identifying all the processes that led up to the offending process. This is in technical preview status. Sysdig has also released Sysdig Secure Live.

Beyond SaaS: Multi-cluster Kubernetes Management for Regulated Industries and Sovereign Clouds

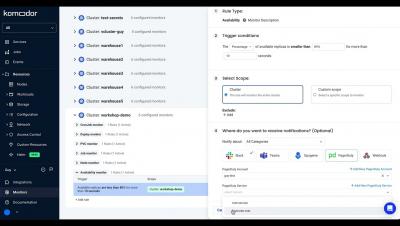

VMware Tanzu Mission Control is a centralized hub for simplified, multi-cloud, multi-cluster Kubernetes management. It helps platform teams take control of their Kubernetes clusters with visibility across environments by allowing users to group clusters and perform operations, such as applying policies, on these groupings.

GitOps the Planet #14: Building Open Source Communities with Itay Shakury

How to Handle Kubernetes Resource Quotas

A containerized approach to software deployment means you can deploy at scale without having to worry about the configuration of each unit. In Kubernetes, clusters do the heavy lifting for you: they’re the pooled resources that run the pods that hold your individual containers. You can divide each cluster by namespace, which allows you to assign nodes (i.e., the machine resources in a cluster) to different roles or different teams. Resource quotas limit what each namespace can use.
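As a rough illustration of the last point, the sketch below builds a `ResourceQuota` manifest for a single namespace and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The quota name, the namespace `team-a`, and the limits are made-up values for illustration, not recommendations from the article.

```python
import json


def resource_quota(name: str, namespace: str, cpu: str, memory: str, pods: int) -> dict:
    """Build a ResourceQuota manifest capping total requests and pod count in one namespace."""
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "hard": {
                "requests.cpu": cpu,        # sum of CPU requests across all pods
                "requests.memory": memory,  # sum of memory requests across all pods
                "pods": str(pods),          # maximum number of pods in the namespace
            }
        },
    }


# Hypothetical values: a team namespace limited to 4 CPUs, 8Gi of memory, and 20 pods.
manifest = resource_quota("team-a-quota", "team-a", "4", "8Gi", 20)
print(json.dumps(manifest, indent=2))
```

Saving the printed JSON to a file and applying it would make the API server reject new pods in that namespace once the totals are exceeded.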

Leveraging Calico flow logs for enhanced observability

In my previous blog post, I discussed how transitioning from legacy monolithic applications to microservices based applications running on Kubernetes brings a range of benefits, but that it also increases the application’s attack surface. I zoomed in on creating security policies to harden the distributed microservice application, but another key challenge this transition brings is observing and monitoring the workload communication and known and unknown security gaps.

The Cost of Upgrading Hundreds of Kubernetes Clusters

Cloud Expenditure - A Storm is Brewing

Expenditure on cloud computing services reached a mammoth 225 billion dollars in 2022. Companies start their cloud-native journeys with the best intentions and consume the many benefits. But current cloud expenditure growth levels are unsustainable for many organizations, and with 82% of organizations investing in FinOps staff, it is clear that cloud expenditure is top of mind in the C-suite.

Machine Learning Made Simple - Civo Navigate NA 2022

Troubleshoot with Kubernetes events

When Kubernetes components like nodes, pods, or containers change state—for example, if a pod transitions from pending to running—they automatically generate objects called events to document the change. Events provide key information about the health and status of your clusters—for example, they inform you if container creations are failing, or if pods are being rescheduled again and again. Monitoring these events can help you troubleshoot issues affecting your infrastructure.
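To make the idea concrete, here is a minimal sketch that summarizes `Warning` events per object and reason. It assumes the events have already been exported as dictionaries shaped like the items returned by `kubectl get events -o json`; the sample events and pod names are invented for illustration.

```python
from collections import Counter


def warning_summary(events: list[dict]) -> Counter:
    """Count Warning events per (kind, name, reason), weighted by each event's repeat count."""
    summary: Counter = Counter()
    for event in events:
        if event.get("type") != "Warning":
            continue  # skip Normal events such as Scheduled or Pulled
        obj = event.get("involvedObject", {})
        key = (obj.get("kind", "?"), obj.get("name", "?"), event.get("reason", "?"))
        # "count" may be missing or null, so fall back to a single occurrence.
        summary[key] += event.get("count") or 1
    return summary


# Hypothetical sample data for illustration only.
sample_events = [
    {"type": "Normal", "reason": "Scheduled", "count": 1,
     "involvedObject": {"kind": "Pod", "name": "web-1"}},
    {"type": "Warning", "reason": "BackOff", "count": 7,
     "involvedObject": {"kind": "Pod", "name": "web-1"}},
    {"type": "Warning", "reason": "FailedScheduling", "count": 3,
     "involvedObject": {"kind": "Pod", "name": "worker-2"}},
]

for (kind, name, reason), n in warning_summary(sample_events).most_common():
    print(f"{n:>3}  {kind}/{name}  {reason}")
```

Sorting by count puts the noisiest objects first, which is usually where troubleshooting starts.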

Expand Your Monitoring Capabilities with AppSignal's Standalone Agent Docker Image

Want to monitor all of your application's services? Our Standalone Agent allows you to monitor processes our standard integrations don't monitor by default, helping you effortlessly expand your monitoring capabilities. To help simplify the process of configuring our standalone agent, we're excited to announce the launch of our Standalone Agent's Docker image, available on Docker Hub under the name appsignal/agent.

The Curious Case Of Kubernetes Health Checks

Health checks for cloud infrastructure refer to the mechanisms and processes used to monitor the health and availability of the components within a cloud-based system. These checks are essential for ensuring that the infrastructure is functioning correctly and that any issues or failures are detected and addressed promptly. Health checks typically involve monitoring various parameters such as system resources, network connectivity, and application-specific metrics.
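One way to picture such a check is a small aggregator that runs several named probes and reports overall health. This is only a sketch: the function names, the 10% free-disk threshold, and the probe set are assumptions made for illustration, not something taken from the article.

```python
import shutil
from typing import Callable


def disk_has_space(path: str = "/", min_free_ratio: float = 0.1) -> bool:
    """Return True when at least min_free_ratio of the filesystem at path is free."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total >= min_free_ratio


def run_health_checks(checks: dict[str, Callable[[], bool]]) -> dict:
    """Run every check; a check that raises is reported as failed rather than crashing."""
    results = {}
    for name, check in checks.items():
        try:
            results[name] = bool(check())
        except Exception:
            results[name] = False
    return {"healthy": all(results.values()), "checks": results}


# Hypothetical probe set: a system-resource check plus an application-specific one.
report = run_health_checks({
    "disk": disk_has_space,
    "app_ready": lambda: True,  # stand-in for a real application metric
})
print(report)
```

A report like this could be served from an HTTP endpoint and polled by whatever probe mechanism the platform offers.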

Cloudify 7 Goes Native

Kubernetes has revolutionized the management of containerized applications, but what about non-Kubernetes resources? Can Kubernetes extend its capabilities to encompass those as well? Cloudify, known for its ability to fit into highly distributed and heterogeneous environments, is making a significant stride in this direction with the release of Cloudify 7. In this blog, we explore how Cloudify brings its powerful capabilities natively into the Kubernetes ecosystem.

Densify Named a Market Leader in GigaOm Radar Report for Cloud Resource Optimization

June 27, 2023: We were excited to learn last week that GigaOm named Densify as both a Leader and an “Outperformer” in its new report “Radar for Cloud Resource Optimization”. This was particularly meaningful to us for a few reasons.

Top 8 Tools to Build Your Own PaaS

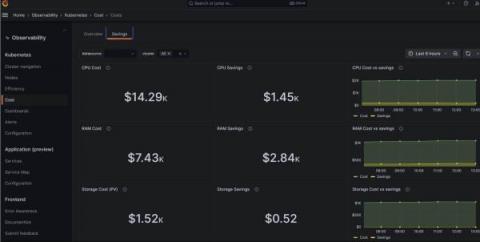

Rein in spending with Kubernetes cost monitoring in Grafana Cloud

As your Kubernetes infrastructure — and your business — grows, so too does the headache of managing your stack. And since controlling costs is crucial for your organization’s well-being, you need visibility into your complex system to ensure you’re spending your money wisely. That’s why we’re excited to introduce Kubernetes cost monitoring as a new feature in Grafana Cloud.

A Mid-Year Review of Civo's Journey and What's Next

As we are now 6 months into the year, I thought this would be a great time to sit and reflect on everything that has happened at Civo so far (and some of the plans we have in the pipeline). If you’re interested in taking a look at our previous roadmaps.

One Cluster to Rule Them All

Reviewing the Current State of Infrastructure as Code (IaC), its Challenges, the Emergence of Crossplane, Adoption Difficulties, and the Road Ahead! Infrastructure as code (IaC) has become an indispensable practice for managing and deploying cloud-native applications. By defining infrastructure through code, developers can efficiently and consistently manage their infrastructure. In this post, we’ll delve into the state of IaC, the problems it poses, and the new approach offered by Crossplane.

The Essential Kubectl Commands: Handy Cheat Sheet

As Kubernetes continues to gain popularity as a container orchestration system, mastering its command-line interface becomes increasingly vital for DevOps engineers and developers alike. Kubectl, the Kubernetes command-line tool, is an essential component in managing and deploying applications in a Kubernetes cluster. This tool allows you to interact directly with the Kubernetes API server and control the state of your cluster.

The Importance of Pure Open-Source Kubernetes

In a short time, the open-source ecosystem has evolved from niche projects with limited corporate backing to the de facto way to build software. Today, organizations large and small are adopting open-source software to accelerate product development and innovation. In the government sector, the U.S. Department of Defense issued a memorandum on adopting open source software as its preference versus proprietary software, calling open-source “critical in delivering software faster.”

Configure Multi-Tenant namespace isolation in Openshift4 using NeuVector

In this post we will examine using NeuVector Network rules to enable multi-tenancy isolation in OpenShift. We will also look at how to isolate external traffic from specific workloads.

Deploy & Configure NeuVector prometheus-exporter on Openshift 4

In this post we will explain how to use OpenShift monitoring (Alertmanager) to monitor NeuVector using the NeuVector prometheus-exporter. NeuVector has published a Prometheus exporter, which we will use in this post.

Disrupting the Status Quo with Digital Wallets

There’s no shortage of innovation in the financial services industry. Financial institutions have always placed a high value on innovation, as demonstrated by their willingness to fund technology-led initiatives. According to Gartner® Research Inc., “Global enterprise IT spending in the banking and investment services market is forecast to increase by 7.7% in 2023 to $666.5 billion in constant US dollars.”

Qovery is a G2 Momentum Leader for 2023

Ocean CD is now available: Control Kubernetes application changes with reliable continuous delivery automation

GitOps the Planet #13: eBPF - what's all the buzz about with Liz Rice

Using the Elastic Agent to monitor Amazon ECS and AWS Fargate with Elastic Observability

AWS Fargate is a serverless pay-as-you-go engine used for Amazon Elastic Container Service (ECS) to run Docker containers without having to manage servers or clusters. The goal of Fargate is to containerize your application and specify the OS, CPU and memory, networking, and IAM policies needed for launch. Additionally, AWS Fargate can be used with Elastic Kubernetes Service (EKS) in a similar manner.

Demystified Service Mesh Capabilities for Developers

Icinga Kubernetes Helm Charts

Before attending Icinga Berlin in May this year, Daniel Bodky and Markus Opolka from our partner NETWAYS developed the very first Icinga Kubernetes Helm Charts and released them in an alpha version. If you have ever wanted to deploy an entire Icinga stack in your Kubernetes cluster, now is your chance. I also want to highlight Daniel’s talk again on how Icinga can run on Kubernetes and the challenges involved.

Built for Multi-cloud Fleet Management

As the latest surveys show, organizations are struggling to manage multiple clouds and to realize the expected benefits of moving to the cloud. In the Flexera 2023 State of the Cloud Report, for example, enterprises cited managing cloud spend and managing multi-cloud environments as their top cloud challenges. The root of these problems can be traced to cloud and cluster sprawl and the complexity of managing Kubernetes.

Fleet: Multi-Cluster Deployment with the Help of External Secrets

Fleet, also known as “Continuous Delivery” in Rancher, deploys application workloads across multiple clusters. However, most applications need configuration and credentials. In Kubernetes, we store confidential information in secrets. For Fleet’s deployments to work on downstream clusters, we need to create these secrets on the downstream clusters themselves.
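For readers unfamiliar with what such a secret looks like, the sketch below builds a plain Kubernetes `Secret` manifest, base64-encoding each value as the API expects in the `data` field. It does not show Fleet or External Secrets configuration; the secret name, namespace, and credentials are placeholders invented for illustration.

```python
import base64
import json


def opaque_secret(name: str, namespace: str, values: dict[str, str]) -> dict:
    """Build an Opaque Secret manifest; values under "data" must be base64-encoded."""
    data = {
        key: base64.b64encode(value.encode("utf-8")).decode("ascii")
        for key, value in values.items()
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        "data": data,
    }


# Placeholder credentials; never commit real ones to a Git repository.
secret = opaque_secret("db-credentials", "my-app", {"username": "admin", "password": "change-me"})
print(json.dumps(secret, indent=2))
```

Note that base64 is an encoding, not encryption, which is part of why secrets are usually kept out of the Git repositories that drive deployments.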

Cloud Native Security for the Rest of Us

Top Trends in DevOps - ChatGPT

The world of DevOps is constantly evolving and adapting to the needs of the software development industry. With the increasing demand for faster and more efficient software delivery, organizations are turning to modern technologies and practices to help them meet these challenges. In a series of articles on the Kublr blog, we will take a look at some of today’s top DevOps trends.

Choosing a container orchestration tool | Docker Swarm vs. Kubernetes

HA Kubernetes Monitoring using Prometheus and Thanos

In this article, we will deploy a clustered Prometheus setup that integrates Thanos. It is resilient against node failures and ensures appropriate data archiving. The setup is also scalable. It can span multiple Kubernetes clusters under the same monitoring umbrella. Finally, we will visualize and monitor all our data in accessible and beautiful Grafana dashboards.

Why eBPF is Poised to Revolutionize Kubernetes [Without Anyone Noticing]

Have you heard about eBPF? It’s the technology that’s set to transform the Kubernetes landscape. In this article, we’ll explore what eBPF is and why it’s poised to become the next big thing in Kubernetes. But here’s the catch – despite its game-changing potential, it seems that few people are truly aware of its impact. Let’s delve into the details and discover why you should care.

Scaling Kubernetes on AWS: Everything You Need to Know

What Is AWS EKS, and How Does It Work With Kubernetes?

GitOps the Planet #12: Building Argo with Michael Crenshaw

Understanding k3s: Architecture, setup, and uses

If you are looking into the cloud-native world, then the chances of coming across the term “Kubernetes” are high. Kubernetes, also known as K8s, is an open-source system that has the primary responsibility of being a container orchestrator. This has quickly become a lifeline for managing our containerized applications through creating automated deployments.

Workshop: 2023 Kubernetes Troubleshooting Challenge

3 Tips to Improve Your Dockerfile Build time

How Our Love of Dogfooding Led to a Full-Scale Kubernetes Migration

The benefits of going cloud-native are far reaching: faster scaling, increased flexibility, and reduced infrastructure costs. According to Gartner®, “by 2027, more than 90% of global organizations will be running containerized applications in production, which is a significant increase from fewer than 40% in 2021.” Yet, while the adoption of containers and Kubernetes is growing, it comes with increased operational complexity, especially around monitoring and visibility.

How to Do Kubernetes Multi-cloud and Hybrid Management Right

The latest surveys show that organizations are struggling to manage multi-cloud environments and overcome problems that include cloud cost, complexity, security, and visibility. In the Flexera 2023 State of the Cloud Report, for example, enterprises cited managing cloud spend and managing multi-cloud environments as their top cloud challenges.

The Art of Using Execution tags to Troubleshoot ECS

In the grand tapestry of software engineering, our journey often winds through labyrinthine layers of application logic. Here, bugs play a compelling game of hide-and-seek, and features dance in an unpredictable ballet. During these instances of fervent exploration, we find ourselves longing for a reliable compass—a secret weapon—to help us decipher the riddles that lie ahead. Cue execution tags, our luminous lighthouse cutting through the dense fog of complexity.

Quickly create performance and regression tests from a Postman collection

Kubernetes Simplified: Understanding its Inner Workings

Tanzu Application Platform on the Disconnected Edge

Kubernetes has become the de facto platform for containerized applications. But it’s a fractured environment and each Kubernetes distribution has its own nuances. Further, much of today’s cloud native software design assumes a healthy Internet connection, which is not always available. What’s missing is a standardized way to deliver applications on top of any Kubernetes, without an Internet connection (also known as air-gapped deployments).

Kubernetes Architecture Part 3: Data Plane Components

This Kubernetes Architecture series covers the main components used in Kubernetes and provides an introduction to Kubernetes architecture. After reading these blogs, you’ll have a much deeper understanding of the main reasons for choosing Kubernetes as well as the main components that are involved when you start running applications on Kubernetes.

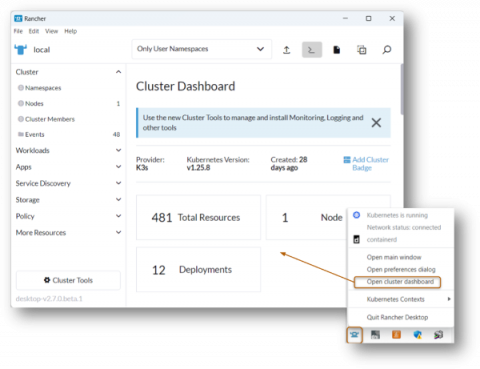

Container Management: A Closer Look at Rancher Desktop's Settings

Rancher Desktop is equipped with convenient and powerful features that make it stand out as one of the best developer tools and the fastest ways to build and deploy Kubernetes locally. In this blog, we will tackle Rancher Desktop’s functionalities and features to guide you and help you take full advantage of all the benefits of using Rancher Desktop as a container management platform and for running local Kubernetes.

What is DevOps? Practices, impacts, and challenges

Bridging the gap between development and operations has become essential for the cultural shifts seen in organizations today. DevOps allows these concepts to be brought together, creating an influential blend of cultural philosophies, practices, and technological instruments, facilitating a quicker delivery of products and services. Throughout this blog, I will explore how we should all be embracing DevOps to allow our organizations to compete in an ever-changing digital world.

Introduction to Sysdig Monitor

How Honeycomb Monitors Kubernetes

While Kubernetes comes with a number of benefits, it’s yet another piece of infrastructure that needs to be managed. Here, I’ll talk about three interesting ways that Honeycomb uses Honeycomb to get insight into our Kubernetes clusters. It’s worth calling out that we at Honeycomb use Amazon EKS to manage the control plane of our cluster, so this document will focus on monitoring Kubernetes as a consumer of a managed service.

Kubernetes Management | DKP 2.5 Announcement

K3s vs Talos Linux - Civo.com

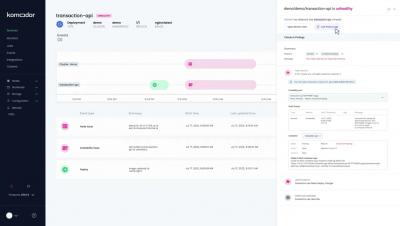

Streamline Incident Response with Komodor and Squadcast

With the growing popularity of Kubernetes as a container orchestration platform powering the microservices revolution comes greater complexity in managing, monitoring, and responding to incidents at scale. Challenges with real production environments include full visibility into your clusters and environment’s health, alongside real-time incident management and response.

10 Best Internal Developer Platforms to Consider in 2023

Top Trends of the AWS Summit London

Container Optimization Techniques that Cloud Providers Hate!

How FireHydrant Implemented Honeycomb to Streamline Their Migration to Kubernetes

Kubernetes is the gold standard for container orchestration at scale. While massive global companies like Google, Spotify, and Pinterest rely on Kubernetes to run their software in production, so do many small but mighty developer teams. (Full disclosure: Honeycomb joined the Kubernetes brigade last year, when we migrated some of our services.)

Secret Sauce: Stevie Award Shines Spotlight on D2iQ Customer Support

When deploying a mission-critical Kubernetes infrastructure, the support you receive from your vendor is as important as the product. This is why we at D2iQ are especially pleased and proud that our D2iQ Customer Operations Team has won the Gold Stevie Award for Support Team of the Year for 2023. The American Business Awards, also known as the Stevie Awards, are billed as the “world’s premier business awards” and are judged by a panel of more than 200 industry experts.

Kubernetes Monitoring - Why It Matters

From Git to Kubernetes

Codefresh Build and Deploy '23

Easily monitor Docker Desktop containers with Grafana Cloud

18 million — that’s the number of developers around the world who use Docker, the popular tool for containerization. Docker Desktop, a software application for Mac, Windows, and Linux, is one of the most widely used tools within the Docker ecosystem, especially among developers who want to build, test, and deploy applications in containers on their local machines.

DevOps Evolution and the Rise of Platform Engineering

As we continue to see rapid technological advancements, organizations must evolve and adapt to maintain a competitive edge. The principles of DevOps - collaboration, automation, continuous integration, and delivery - have emerged as critical success factors in this landscape, enabling organizations to navigate the ever-changing environment.

DNS observability and troubleshooting for Kubernetes and containers with Calico

In Kubernetes, the Domain Name System (DNS) plays a crucial role in enabling service discovery for pods to locate and communicate with other services within the cluster. This function is essential for managing the dynamic nature of Kubernetes environments and ensuring that applications can operate seamlessly. For organizations migrating their workloads to Kubernetes, it’s also important to establish connectivity with services outside the cluster.
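As a small illustration of in-cluster service discovery, the sketch below builds the conventional DNS name for a Service and resolves it with the standard library. It assumes the default cluster domain `cluster.local`; the service and namespace names are hypothetical, and the lookup only succeeds when run from inside a cluster.

```python
import socket


def service_fqdn(service: str, namespace: str = "default",
                 cluster_domain: str = "cluster.local") -> str:
    """Return the fully qualified DNS name Kubernetes assigns to a Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def resolve(hostname: str) -> list[str]:
    """Resolve a hostname to its unique IP addresses, or an empty list if lookup fails."""
    try:
        infos = socket.getaddrinfo(hostname, None)
    except socket.gaierror:
        return []
    # Each entry's sockaddr tuple starts with the IP address.
    return sorted({info[4][0] for info in infos})


# Hypothetical service "payments" in namespace "shop".
name = service_fqdn("payments", "shop")
print(name)
```

Calling `resolve(name)` from a pod is a quick way to tell a DNS failure apart from a connectivity problem further down the path.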

Optimizing Your K8s Nodes Using FinOps Tools? Try This Instead...

June 7, 2023: It’s no secret that Kubernetes is one of the fastest-growing technologies in use today for deploying and operating applications of all types in the cloud. It’s also no secret that Kubernetes’ popularity is a significant contributor to fast-growing cloud bills. FinOps teams are constantly looking for ways to lower their cloud spend, in cooperation with the DevOps, Engineering and App owner teams that control this infrastructure.

Using Docker Desktop and Artifactory for Enterprise Container Management

Collecting Kubernetes Data Using OpenTelemetry

Running a Kubernetes cluster isn’t easy. With all the benefits come complexities and unknowns. In order to truly understand your Kubernetes cluster and all the resources running inside, you need access to the treasure trove of telemetry that Kubernetes provides. With the right tools, you can get access to all the events, logs, and metrics of all the nodes, pods, containers, etc. running in your cluster. So which tool should you choose?

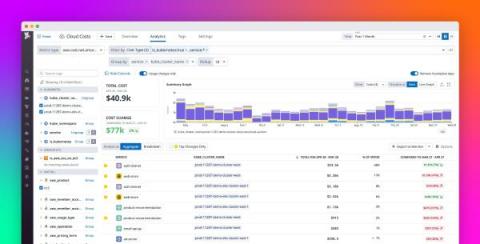

Understand your Kubernetes and ECS spend with Datadog Cloud Cost Management

Rising container usage has fueled a growing reliance on container orchestration systems such as Kubernetes, EKS, and ECS. As organizations increasingly opt to run these systems in the cloud, their cloud spend tends not only to grow but also to become more opaque due to the dynamic complexity of these environments. Typically, various services, teams, and products share cluster resources, and as nodes are added and removed, those resources continuously shift.

What Is an Application Programming Interface (API)? - VMware Tanzu Fundamentals

Merging to Main #3: CI/CD Secrets

The Future of DevOps and Platform Engineering - Civo.com

Visualizing service connectivity, dependencies, and traffic flows in Kubernetes clusters

Today, cloud platform engineers face new challenges when running cloud native applications. These applications are designed, deployed, maintained, and monitored differently from the traditional monolithic applications engineers are used to working with. Cloud native applications are designed and built to exploit the scale, elasticity, resiliency, and flexibility the cloud provides. They are a group of microservices that run in containers within a Kubernetes cluster, and they all talk to each other.

8 Business Benefits of Ephemeral Environments

Ask What Air-Gapping Can Do for You

In our recent webinar on air-gapped security, D2iQ VP of Product Dan Ciruli shared a new way of thinking about air-gapping, explaining how air-gapping could be applied in places that are not usually considered candidates for air-gapping. In an exchange of insights with Paul Nashawaty, principal analyst at Enterprise Strategy Group, Ciruli explained how the need for air-gapped security has become more critical as more organizations move to the cloud.

Understanding Kubernetes Logs and Using Them to Improve Cluster Resilience

In the complex world of Kubernetes, logs serve as the backbone of effective monitoring, debugging, and issue diagnosis. They provide indispensable insights into the behavior and performance of individual components within a Kubernetes cluster, such as containers, nodes, and services.

Kubernetes Architecture Part 2: Control Plane Components

This Kubernetes Architecture series covers the main components used in Kubernetes and provides an introduction to Kubernetes architecture. After reading these blogs, you’ll have a much deeper understanding of the main reasons for choosing Kubernetes as well as the main components that are involved when you start running applications on Kubernetes. This blog series covers the following topics.

Rancher Desktop: The Ultimate Local Kubernetes Solution for Developers

Kubernetes has had a major impact on modern software development because it is a powerful open source container orchestration platform that enables organizations to deploy and manage complex containerized applications at scale. In this blog, we will cover how Rancher Desktop can help developers run and manage Kubernetes locally.

Seamless Transition to Kubernetes: Ninetailed's Path to Production with Qovery

Escape the Legacy Trap: 5 Keys to Successful Application Modernization

Michael Coté and Marc Zottner co-wrote this article. Legacy software can slow—or even stop—business growth. As organizations hit a legacy wall, they face an unfortunate reality: When IT systems are too old and unchangeable, it’s impossible to transform the way their core business works. In fact, 76 percent of executives say that legacy software is holding them back, according to a Forrester report commissioned by VMware. What does that mean for today’s businesses?

The Care and Feeding of Internal Developer Platforms

If you’ve ever attempted to cultivate a backyard vegetable garden, flower garden for your balcony, hydroponic tower, or other system to grow plants, you’ll understand that it’s not a matter of tossing down some seeds in soil and letting nature take its course. Without proper monitoring and management, ecosystems may grow out of control, or simply wither and die off.

Ensure Kubernetes Compliance with New Private Registry Support for VMware Tanzu Mission Control

Corey Dinkens, Sneha Narang, and Lauren Britton contributed to this blog post. VMware Tanzu Mission Control is a centralized hub for simplified, multi-cloud, multi-cluster Kubernetes management. It helps platform teams take control of their Kubernetes clusters with visibility across cloud, on-premises, and edge environments by allowing users to group clusters and perform operations on these groupings.

Choosing the Best Options to Run Kubernetes on AWS

Monitoring a K8s Cluster with MetricFire

Kubernetes (K8s) is a popular container orchestration solution, but monitoring its performance can be quite challenging. Luckily, there's a solution that makes it easier: MetricFire. It's a cloud-based monitoring and visualization platform that provides comprehensive metrics, alerts, and dashboards for K8s clusters, making K8s monitoring seamless.

Mastering AWS Fargate pricing and optimization with CloudSpend: A comprehensive guide

AWS Fargate is a powerful tool for running containerized workloads on AWS. It’s a serverless compute engine that allows you to run containers and focus on developing and deploying your applications while AWS controls the cloud infrastructure. This can make a real difference for an organization, saving both time and resources that would otherwise go towards managing servers. This guide will discuss AWS Fargate pricing and provide tips for cost optimization.