
August 2023

Automating Kubernetes Deployments with GitHub Actions

Kubernetes orchestrates the management of containerized applications, with an emphasis on declarative configuration. A DevOps engineer creates deployment files specifying how to spin up a Kubernetes cluster, which establishes a blueprint for how containers should handle the application workloads.

What's New in Sysdig - August 2023

“What’s New in Sysdig” is back with the August 2023 edition! My name is Jonathon Cerda, based in Dallas, Texas, and the Sysdig team is excited to share our latest feature releases with you.

The Double-Edged Sword of Modern Software Delivery

Kubernetes offers undeniable benefits—scalability, portability, reliability—and enterprises everywhere are jumping on the bandwagon to adopt it. However, as incredible as Kubernetes is, its adopters are learning a difficult lesson: Without taking the steps to standardize Kubernetes adoption across the organization, costs and risk can skyrocket.

Next-Gen Defense: Unleashing the Power of Kubernetes

The U.S. Department of Defense’s Software Modernization Strategy calls for gaining a competitive advantage to achieve strategic and tactical superiority. Leveraging artificial intelligence (AI) and implementing zero trust security are critical parts of the movement to modernize the U.S. military. To this end, U.S. Deputy Secretary of Defense Kathleen H. Hicks issued a memorandum in February 2022 establishing the formation of the DoD Chief Digital and Artificial Intelligence Officer (CDAO).

Simplifying Microservices Debugging on Kubernetes with Istio, OTel, and Apica

Microservices architecture has become increasingly popular in modern software development due to its scalability, resilience, and flexibility. However, with the benefits of microservices come the challenges of debugging and monitoring these distributed systems. Using the Istio service mesh, OpenTelemetry distributed tracing, and Apica’s Kubernetes-native observability platform, developers can easily collect and visualize performance data in real-time to identify and fix issues quickly.

Cycle's New Interface, Part 1

After a span of 5 long years, we've bid farewell to Cycle's old portal. Our engineering team has been working tirelessly over the last 10 months to bring a fresh, new interface to the platform for our users. This new design encapsulates the wealth of insights we've gained during this period. Just last week, we took the decisive step of launching it into production, and the initial feedback has been overwhelmingly positive.

Choosing an Infrastructure as Code Solution - Civo Navigate NA 2023

Why you should monitor Kubernetes in SCOM

Things We've Learned About Software Delivery Principles Through A Pandemic - Civo Navigate NA 2023

Advanced Monitoring and Observability Tips for Kubernetes Deployments

Cloud deployments and containerization let you provision infrastructure as needed, meaning your applications can grow in scope and complexity. The results can be impressive, but the ability to expand quickly and easily makes it harder to keep track of your system as it develops. In this type of Kubernetes deployment, it’s essential to track your containers to understand what they’re doing.

Helm Dry Run: Guide & Best Practices

Statefulset vs. Deployment in Kubernetes

As Kubernetes continues its ascent as a leading container orchestration platform, it's common for users to encounter a perplexing choice between two prominent workload controllers: StatefulSets and Deployments. Despite both controllers being instrumental in managing high-availability workloads, they diverge significantly in terms of features and use cases. Grasping these distinctions is pivotal for fine-tuning the performance and scalability of your Kubernetes infrastructure.

Everything You Need to Know About Kubernetes

Welcome to the world of Kubernetes - a powerful container orchestration platform. Before we dive deep into the concepts of Kubernetes, let's grasp the concept of containers - lightweight, isolated units that package applications along with their dependencies, ensuring seamless deployment and portability. In this blog, you will witness Kubernetes' incredible abilities. It can handle the ups and downs of your applications, ensuring they scale seamlessly, even when facing tough challenges.

Exploring Kubernetes Nodes: Essential Components of Container Orchestration

Kubernetes serves as a robust tool for managing and orchestrating applications across multiple computers. These computers are referred to as 'nodes.' Picture nodes as fundamental units in the ecosystem of your applications. Every node possesses its own computing resources, encompassing memory, processing capabilities, and storage capacity. Your apps are hosted and run by nodes. They give your apps the room and resources they need to work.

5 Best Environment as a Service (EaaS) Platforms in 2023

Architecting a Data Infrastructure with Kubernetes - Civo Navigate NA 2023

The Key to Scalable Kubernetes Clusters | Load Test by Simulating Traffic

Modernizing Cybersecurity: New Challenges, New Practices

The practice of cybersecurity is undergoing radical transformation in the face of new threats introduced by new technologies. As a McKinsey & Company survey notes, “an expanding attack surface is driving innovation in cybersecurity.” Kubernetes and the cloud are infrastructure technologies with many moving parts that have introduced new attack surfaces and created a host of new security challenges.

ExpressJS Container Debugging

In recent years, the landscape of application development has experienced a paradigm shift, largely driven by the rise of containerization and microservices architectures. Amid this transformation, Express.js has emerged as a dynamic and versatile framework that stands as a one-stop shop for crafting robust web applications. Its popularity owes much to its minimalist approach, allowing developers to swiftly build APIs and web applications with ease.

Choosing the Right Kubernetes Cluster Setup: A Comprehensive Guide

Kubernetes has revolutionized how modern applications are deployed, managed, and scaled. As the container orchestration platform of choice, Kubernetes provides a dynamic and highly efficient environment for running containerized applications. At the heart of this ecosystem lies the intricate relationship between Kubernetes and the applications residing within its clusters. Applications within Kubernetes clusters are arranged through Pods, which are managed and scaled by various controllers.

A Guide to Kubernetes Core Components

In the ever-evolving landscape of modern software development and deployment, Kubernetes has emerged as a prominent solution to manage and orchestrate applications. This technology has redefined how applications are deployed and maintained, offering a flexible and efficient framework that abstracts the underlying infrastructure complexities. In Kubernetes, you define how network traffic should be routed to different services and pods.

Down to the Dollar: Turning Logs into Serverless Estimates - Civo Navigate NA 2023

How To Containerize an Application Using Docker

Most development projects involve a wide range of environments. There is production, development, QA, staging, and then every developer's local environments. Keeping these environments in sync so your project runs the same (or runs at all) in each environment can be quite a challenge. There are many reasons for incompatibility, but using Docker will help you remove most of them.

What's New in the Kubernetes 1.28 Second Release

From its humble beginnings, Kubernetes’ growth story continues to be a testament to the power of open-source collaboration, and its current 1.28 second release is certainly no exception. It’s not just a product of ingenious coding but also the sweat and night oil of a global community – from seasoned industry stalwarts to students just making their debut in the open-source world.

Fixing Docker's Slow Performance on MacOS

Docker is designed for Linux. It works most efficiently on Linux systems due to its close integration with the Linux kernel. When handling large filesystems, like the ones built with PHP and Node, Docker Desktop on macOS experiences significant lag. The main reason is how file synchronization is implemented in Docker for Mac. The heavy disk-space consumption of such large PHP projects adds to the problem.

New report: The state of Calico Open Source 2023

We are excited to announce the publication of our 2023 State of Calico Open Source, Usage & Adoption report! The report compiles survey results from more than 1,200 Calico Open Source users from around the world, who are actively using Calico in their container and Kubernetes environments. It sheds light on how they are using Calico across various environments, while also highlighting different aspects of Calico’s adoption in terms of platforms, data planes, and policies.

Orchestrating Kubernetes with Terraform + Kustomize - Civo Navigate NA 2023

Merging to Main #5: Coexisting Between Kubernetes & Legacy Tech with Mark Panthofer, Nvisia

Develop, Operate, and Optimize with VMware Tanzu: A VMware Explore Round-Up

Applications are the center of your organization’s business. Success (or failure) depends on how quickly you can respond to dynamic market demands driven by cultural shifts, technical innovation, and global events. This business agility is driven by fast, predictable application delivery. The past few years have seen IT leaders in the public sector and private industry alike rushing to get better and faster at delivering applications and services to their customers, employees, and constituents.

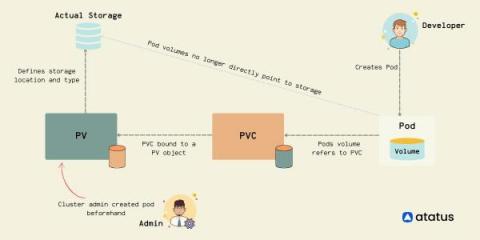

Exploring Kubernetes Storage: Persistent Volumes and Persistent Volume Claims

In today's world of container-based applications, the role of storage has become more critical than ever. One of the most significant challenges of containerization is the management of stateful applications. Kubernetes, one of the popular container orchestration platforms, provides a solution to this problem - Persistent Volumes (PVs). PVs allow the storage provision to be decoupled from the lifecycle of the Pod, making it easier to manage stateful applications.

Configuring Kubernetes and OpenShift Monitoring with DX UIM by Broadcom

How to Achieve Zero-Downtime Application with Kubernetes

Best Practices and Potential Loopholes for Successful Microservices Architecture

Microservices architecture is a software development approach where an application is built as a collection of small, loosely coupled, independently deployable services. Each service focuses on a specific business capability and operates as an autonomous unit, communicating with other services through well-defined APIs. This architectural style is often used in the context of DevOps to create more efficient, scalable, and manageable systems.

Simplifying the Tooling you Need to Manage Infrastructure at Scale - Civo Navigate NA 2023

Shifting Left Stateful Applications In Kubernetes - Civo Navigate NA 2023

How to import EKS clusters into Ocean in 5 easy steps

Cloud & Kubernetes Tagging Best Practices

Top 5 Preview Environments Products to Consider in 2023

Top 10 End-to-End Testing Products for Web Applications in 2023

Everything I Needed to Know about Securing a DevOps Platform - Civo Navigate NA 23

Using Kubernetes with AWS Lambda: Scaling Up Your Serverless Applications

In today’s world, with large tech giants and businesses looking to move toward serverless architecture, there has been significant demand for scaling applications. It’s therefore no surprise that millions of companies worldwide have adopted, or are planning to migrate to, a Kubernetes and AWS Lambda solution to take their serverless applications to the next level.

Maximizing Developer Efficiency with Civo and StackState

Impact of Kubernetes cluster maintenance on application availability

#kubernetes #eks #chaosengineering

In this video, we will be exploring an interesting scenario that might happen in real life. Let's imagine we have an application running in a Kubernetes cluster inside EKS. If for any reason, two of our three nodes are cordoned and can't be scheduled anymore, what would happen to our users should the last node be cordoned as well? And what if we need to reschedule something?

How to Strengthen Kubernetes with Secure Observability

Kubernetes is the leading container orchestration platform and has developed into the backbone technology for many organizations’ modern applications and infrastructure. As an open source project, “K8s” is also one of the largest success stories to ever emanate from the Cloud Native Computing Foundation (CNCF). In short, Kubernetes has revolutionized the way organizations deploy, manage, and scale applications.

How to Effortlessly Deploy Cribl Edge on Windows, Linux, and Kubernetes

Collecting and processing logs, metrics, and application data from endpoints has caused many ITOps and SecOps engineers to go gray sooner than they would have liked. Delivering observability data to its proper destination from Linux and Windows machines, apps, or microservices is way more difficult than it needs to be. We created Cribl Edge to save the rest of that beautiful head of hair of yours.

How Qovery Could Have Saved Time and Effort in Compare the Market's EKS Migration

Maximize Long-Term Savings From Cloud Providers with Densify

One of the first considerations for FinOps teams trying to lower their public cloud spend is investing in long-term savings vehicles available from their Cloud Service Provider. These programs can provide customers with upwards of 72% savings off on-demand prices, in return for a 1-to-3-year usage commitment, so it’s pretty common that we see them in use by our customers.
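To make the trade-off concrete, here is a minimal arithmetic sketch in Python. It assumes a simplified model in which the commitment is billed for every hour of the term whether or not the capacity is used; real provider programs vary. The $1.00 hourly rate and the function names are hypothetical, and the 72% discount is simply the upper-end figure quoted above.

```python
HOURS_PER_YEAR = 8760


def committed_cost(on_demand_rate: float, discount: float, years: int = 1) -> float:
    """Total cost of a commitment billed for every hour of the term (assumed model)."""
    return on_demand_rate * (1.0 - discount) * HOURS_PER_YEAR * years


def on_demand_cost(on_demand_rate: float, utilization: float, years: int = 1) -> float:
    """Cost of paying the on-demand rate only for the hours actually used."""
    return on_demand_rate * utilization * HOURS_PER_YEAR * years


def break_even_utilization(discount: float) -> float:
    """Utilization above which the commitment is cheaper than on-demand."""
    return 1.0 - discount


if __name__ == "__main__":
    rate = 1.00      # hypothetical on-demand price, dollars per hour
    discount = 0.72  # upper-end discount quoted in the teaser
    print(f"committed, 1 year: ${committed_cost(rate, discount):.2f}")
    print(f"on-demand at 50%:  ${on_demand_cost(rate, 0.50):.2f}")
    print(f"break-even usage:  {break_even_utilization(discount):.0%}")
```

Under this model, the commitment only pays off if the workload actually runs for more than `1 - discount` of the term, which is why the length of the usage commitment matters as much as the headline discount.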

Monitoring Kubernetes with Prometheus

In part I of this blog series, we understood that monitoring a Kubernetes cluster is a challenge that we can overcome if we use the right tools. We also understood that the default Kubernetes dashboard allows us to monitor the different resources running inside our cluster, but it is very basic. We suggested some tools and platforms like cAdvisor, Kube-state-metrics, Prometheus, Grafana, Kubewatch, Jaeger, and MetricFire.

Neon and Qovery - The Perfect Match for Preview Environments

Troubleshooting and Fixing Kubernetes CrashLoopBackOff

In this post, we'll dive into what CrashLoopBackOff actually is and explore the quickest way to fix it. Fasten your seat belts and get ready to ride. Everyone working with Kubernetes will sooner or later see the infamous CrashLoopBackOff in their clusters. No matter how basic or advanced your deployments are, and whether you have a tiny dev cluster or an enterprise multi-cloud cluster, it will happen anyway.
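For readers new to the term, the "BackOff" part refers to the growing delay between restart attempts. The Python sketch below illustrates that schedule, assuming the defaults described in the Kubernetes documentation (a 10-second initial delay that doubles on each restart and is capped at five minutes). The function name is hypothetical and this is not kubelet code; actual behavior can differ by version and configuration.

```python
from typing import List


def backoff_delays(restarts: int, base: int = 10, cap: int = 300) -> List[int]:
    """Return the wait in seconds before each of the first `restarts` restarts.

    The delay starts at `base`, doubles every time, and never exceeds `cap`.
    """
    return [min(base * 2 ** attempt, cap) for attempt in range(restarts)]


if __name__ == "__main__":
    # Delays before the first seven restarts of a crashing container.
    print(backoff_delays(7))  # prints [10, 20, 40, 80, 160, 300, 300]
```

Seeing the delays plateau at the cap explains why a crash-looping Pod can appear idle for minutes at a time between attempts.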

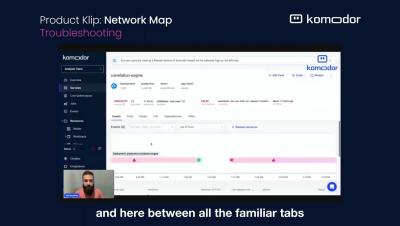

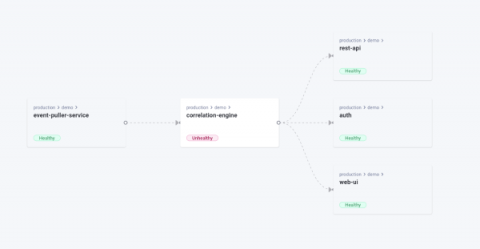

Unveiling Komodor's Network Mapping Capability

I am happy to share that thanks to the power of the open-source community, and our friends over at Otterize, we have now enhanced our Kubernetes offering for developers with another visual aid to streamline operations and troubleshooting – Dependencies Map. The Otterize network mapper is a zero-config tool that aims to be lightweight and doesn’t require you to adapt anything in your cluster.

Database Migrations in the Era of Kubernetes Microservices

In our extensive guide to CI/CD best practices, we included a dedicated section on database migrations and why they should be completely automated and given the same attention as application deployments. We explained the theory behind automatic database migrations, but never had the opportunity to talk about the actual tools and give some examples of how database migrations should be handled by a well-disciplined software team.

Running Reliably experiments from a Kubernetes cluster

#reliably #chaosengineering #resilience #kubernetes #k8s

Reliably lets you run experiments not only from the Reliably cloud but from your own environment. This video will focus on running a chaos engineering experiment in a Kubernetes cluster.

Simplify Building Applications for Kubernetes - Civo Navigate NA 2023

Docker on Mac - a lightweight option with Multipass

Controlling Our Destiny: Building When Open-Source Is No Longer Open-Source

The dev world was on fire this weekend as news broke that yet another major open-source project is in the midst of an identity crisis. The unsettling trend is clear: hit a certain adoption threshold, and then swap the licensing in an attempt to turn dedicated fans into revenue streams. With more companies searching for a sustainable business model and attempting to appease shareholders, the only certainty we have is that what was free yesterday might be paid tomorrow.

Air-Gapping Should Be Head-Slappingly Obvious

When you think of air-gapped security, you imagine a protective distancing that separates your sensitive data from those who would steal it. In practice, the separation is a disconnection from the Internet. If no one can get to your data, no one can steal it. However, air-gapped deployments that are completely disconnected from the Internet are not the case in all instances. It’s true that many clusters are fully air-gapped, particularly in classified government installations.

Leveraging Neon's Serverless Postgres with Qovery Preview Environments

How a Service Mesh enhances EdgeComputeOps - Civo Navigate NA 2023

The Sound of Code: Instrument with OpenTelemetry - Civo Navigate NA 2023

Efficient Kubernetes Cluster Management: Building Infrastructure-Agnostic Clusters with Cluster API

With the widespread adoption of Kubernetes, the Cloud Native Computing Foundation (CNCF) ecosystem has evolved to include projects that address the challenges of using a container orchestrator system. One such challenge is managing and deploying clusters, which can become complex as organizations scale their Kubernetes requirements. Fortunately, Cluster API (CAPI) provides a solution.

Best Practices for implementing DevOps in Organizations

Are you curious about DevOps and how it’s transforming the world of technology? Look no further! In this blog, we will dive into the fascinating world of DevOps and explore its significance and need in today’s fast-paced digital landscape. From its definition and importance to real-world examples of epic fails and their solutions, we’ll cover it all. So, grab a cup of coffee, sit back, and let’s embark on this DevOps journey together!

Integrating Calico statistics with Prometheus

Metrics are important for a microservices application running on Kubernetes because they provide visibility into the health and performance of the application. This visibility can be used to troubleshoot problems, optimize the application, and ensure that it is meeting its SLAs. Some of the challenges that metrics solve for microservices applications running on Kubernetes include: Calico is the most adopted technology for Kubernetes networking and security.

Managing Kubernetes Log Data at Scale | Civo Navigate NA 2023

VMware Tanzu Application Service and MySQL: Better Together

VMware SQL with MySQL for Tanzu Application Service is a top choice for customers seeking a multi-cloud, easy-to-use, on-demand MySQL service for enterprise applications. Customers who have adopted our solution affectionately refer to it as MySQL tile. Our solution provides tangible benefits over open source and third-party offerings for the VMware Tanzu Application Service platform. To call out a few.

Container Security Fundamentals - Linux Namespaces (Part 4): The User Namespace

Calico monthly roundup: July 2023

Welcome to the Calico monthly roundup: July edition! From open source news to live events, we have exciting updates to share—let’s get into it!

What's new in Longhorn 1.5

Longhorn version 1.5 has been released, along with the latest patch. This release includes a number of new features and improvements that can benefit users. Here are some of the highlights.

Restarting Kubernetes Pods: A Detailed Guide

This blog will help you learn all about restarting Kubernetes pods and give you some tips on troubleshooting issues you may encounter. Kubernetes pods are one of the most commonly used Kubernetes resources. Since all of your applications running on your cluster live in a pod, the sooner you learn all about pods, the better.

Git a Handle on it! A Scalable Approach to GitOps Configuration Patterns - Civo Navigate NA 2023

Modernizing the Air Force: DAFITC 2023

D2iQ is excited to be participating in the Department of the Air Force Information Technology and Cyberpower (DAFITC) 2023, in Montgomery, Alabama, from August 28-30. The theme of this year’s DAFITC conference is “Digitally Transforming the Air & Space Force: Investing for Tomorrow’s Fight.” Digital transformation of the Air Force and Space Force is part of a wider modernization effort that is accelerating across all U.S.

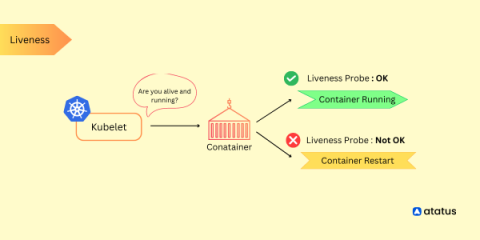

Kubernetes Liveness Probe Guide

Kubernetes liveness probes are a critical component for monitoring the health and availability of application containers running within a Kubernetes cluster. They allow Kubernetes to determine whether a container is running as expected and take appropriate actions if it is found to be unresponsive or in an unhealthy state. Liveness probes periodically check the health of containers by sending requests to a specified endpoint or executing a command within the container.
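As a rough illustration of the mechanism described above, the Python sketch below simulates how a series of periodic probe results might translate into a restart decision. It is a simplified model, not kubelet code: the function name is hypothetical, and `failure_threshold` is loosely modeled on the probe's `failureThreshold` setting, with an assumed default of 3.

```python
from typing import Iterable, List


def simulate_liveness(check_results: Iterable[bool], failure_threshold: int = 3) -> List[str]:
    """Return the action taken after each probe result.

    A restart is triggered only after `failure_threshold` consecutive
    failures; any successful probe resets the failure counter.
    """
    actions: List[str] = []
    consecutive_failures = 0
    for healthy in check_results:
        if healthy:
            consecutive_failures = 0
            actions.append("ok")
            continue
        consecutive_failures += 1
        if consecutive_failures >= failure_threshold:
            actions.append("restart")
            consecutive_failures = 0  # the restarted container starts fresh
        else:
            actions.append("failing")
    return actions


if __name__ == "__main__":
    # One healthy probe, three failures in a row, then a healthy probe.
    print(simulate_liveness([True, False, False, False, True]))
    # prints ['ok', 'failing', 'failing', 'restart', 'ok']
```

Requiring several consecutive failures before acting is what keeps a single slow response from restarting an otherwise healthy container.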

9 Popular Kubernetes Distributions You Should Know About

Kubernetes has become the go-to platform for container orchestration, allowing teams to more efficiently manage their containerized applications. Vanilla Kubernetes, as well as managed Kubernetes, are the two options available when building up a Kubernetes system. A group of programmers using vanilla Kubernetes must download the source code files, follow the code route, and set up the machine's environment.

Kubernetes Delivers Business Value Beyond IT

Since 2018, our annual State of Kubernetes survey has consistently found that organizations achieve significant operational benefits from using Kubernetes, especially “improved resource utilization.” This year, we wanted to understand how Kubernetes impacts the business as a whole. The results are unequivocal.

How to Plan for a Crisis with Infrastructure-Agnostic Recovery of Kubernetes Applications

Corey Dinkens and Carol Pereira contributed to this blog post. As enterprises deploy modern containerized applications to their Kubernetes clusters, managing data protection centrally is necessary to run critical business applications, especially in multi-cloud distributed environments.

Kubernetes at the Edge with Portainer | Civo Navigate NA 2023

25+ Best Kubernetes Tools By Category In 2023

Benefits and challenges of containerization for IT operations

Is Kubernetes Too Complicated - Civo Navigate NA 2023

Mastering Kubernetes Pod Restarts with kubectl

Managing containerized applications efficiently in the dynamic realm of Kubernetes is essential for smooth deployments and optimal performance. Kubernetes empowers us with powerful orchestration capabilities, enabling seamless scaling and deployment of applications. However, in real-world scenarios, there are situations that necessitate the restarting of Pods, whether to apply configuration changes, recover from failures, or address misbehaving applications.
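One common way to restart the Pods behind a Deployment is `kubectl rollout restart`, which replaces them gradually rather than deleting them all at once. The Python sketch below only builds that command line and does not run it; whether the linked article uses this exact approach is not shown here, and the Deployment name `web` and namespace `staging` are hypothetical.

```python
import shlex
from typing import List, Optional


def rollout_restart_command(deployment: str, namespace: Optional[str] = None) -> List[str]:
    """Build the argv for `kubectl rollout restart` on a single Deployment."""
    cmd = ["kubectl", "rollout", "restart", f"deployment/{deployment}"]
    if namespace:
        cmd += ["--namespace", namespace]
    return cmd


if __name__ == "__main__":
    cmd = rollout_restart_command("web", namespace="staging")
    # Print the command instead of executing it, so the sketch needs no cluster.
    print(shlex.join(cmd))
    # prints kubectl rollout restart deployment/web --namespace staging
```

To actually run it against a cluster, the returned list could be passed to `subprocess.run(cmd, check=True)`; keeping command construction separate makes it easy to log or review before anything is executed.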

DZone Survey 2023 Tracks Range of Container Experiences

DZone has released the results of its latest annual container management survey, entitled “Containers: Modernization and Advancements in Cloud-Native Development.” The survey findings reflect the experiences of developers and engineers in their deployment of containerized applications.

Understanding and Optimizing CI/CD Pipelines

Building, testing and deploying software is a time-consuming process that many organizations aim to minimize by automating repeatable work wherever possible. To do so, many organizations are utilizing a continuous integration, continuous delivery (CI/CD) philosophy in combination with cloud native tools like Kubernetes to develop and deploy software at scale.

SMS Alerts for GitHub Actions - Civo Navigate NA 2023

New Feature: Instantly Clone Your Service

Export Your Qovery Configuration into Terraform Manifest in One Click

The Future Skills People Need to Succeed in Tech - Civo Navigate NA 23

What is Docker Swarm and How Does it Work?

How to get started with Grafana and Docker (Grafana Office Hours #07)

Troubleshooting ECS Container Crashes

Amazon Elastic Container Service (ECS) is a versatile platform that enables developers to build scalable and resilient applications using containers. However, containerized services, like Node.js applications, may face challenges like memory leaks, which can result in container crashes. In this blog post, we’ll delve into the process of identifying and addressing memory leaks in Node.js containers running on ECS. First, let’s look closer at what a memory leak is.

Cloud Native Security Must Go Beyond the Perimeter

One month after the MOVEit vulnerability was first reported, it continues to wreak havoc on U.S. agencies and commercial enterprises. Unfortunately, the victim list keeps growing and includes organizations such as the U.S. Department of Health and Human Services, the U.S. Department of Energy, Merchant Bank, Shell, and others.

Kubernetes Community Days Munich Recap

A couple of weeks ago I had the absolute joy of attending KCD Munich for the first time, with my friend and colleague Guy Menahem (whom some of you know simply as The Good Guy on Twitter and YouTube). Besides rooting for Guy and his co-speaker, Arsh Sharma of Okteto, during their session on Backstage.io and IDPs, I enjoyed being untethered from ‘booth duty’ and free to engage with all the beautiful human beings that gathered together for this Kubetastic event!

Demo Roundup: PagerDuty Operations Cloud for Kubernetes

cert-manager can do SPIFFE? - Civo Navigate NA 2023

Kubernetes Incident Management Best Practices

Creating just any infrastructure on Kubernetes is not enough. There are many basic configurations you could apply to stand up infrastructure for your application, and for the time being it might work just fine. But incident response won’t always remain 100% reliable. You will run into new potholes, and that’s okay.

GitOps the Planet #16: Using SLOs to Improve Software Delivery

How to save on container costs efficiently using Kubernetes cost reporting in CloudSpend

In the current era focused on cloud computing, it is essential for businesses to streamline costs. As containerization and Kubernetes become increasingly popular, efficiently managing costs related to Amazon Elastic Kubernetes Service (EKS) and Azure Kubernetes Service (AKS) is crucial for maintaining a successful infrastructure.

Using Helm Dashboard and Intents-Based Access Control for Pain-Free Network Segmentation

Helm Dashboard is an open-source project which graphically shows installed Helm charts, revisions, and changes to their Kubernetes resources. The intents operator is an open-source Kubernetes operator which makes it possible to roll out network policies in a Kubernetes cluster, chart by chart, and gradually achieve zero trust or network segmentation.

Securing Access to Cloud Native Resources with Certificates - Civo Navigate NA 2023

Kubernetes Troubleshooting Reimagined: Operators and Auto-Tracing

Solving the Never Ending Requirements of Authorization - Civo Navigate NA 2023

Exploring AKS networking options

At KubeCon 2023 in Amsterdam, Azure made several exciting announcements and introduced a range of updates and new options for Azure-CNI (Azure Container Networking Interface). These changes will help Azure Kubernetes Service (AKS) users solve some of the pain points they faced in previous iterations of Azure-CNI, such as IP exhaustion and big cluster deployments with custom IP address management (IPAM).

An Insider Look at Zero Trust with GDIT DevSecOps Experts

As cyber attacks have become ever more sophisticated, the means of protecting against cyber attacks have had to become more stringent. With zero trust security, the model has changed from “trust but verify” to “never trust, always verify.” Joining D2iQ VP of Product Dan Ciruli for an in-depth discussion of zero trust security were Dr. John Sahlin, VP of Cybersolutions at General Dynamics Information Technology (GDIT), and David Sperbeck, DevSecOps Capability Lead at GDIT.

Enable and use GKE Control plane logs

Kubernetes Troubleshooting with Operators and Auto-Tracing

Kubernetes has revolutionized the way we manage and deploy applications, but as with any system, troubleshooting can often be a daunting task. Even with the multitude of features and services provided by Kubernetes, when something goes awry, the complexity can feel like finding a needle in a haystack. This is where Kubernetes Operators and Auto-Tracing come into play, aiming to simplify the troubleshooting process.