Operations | Monitoring | ITSM | DevOps | Cloud

July 2022

Basic Docker Commands | Tutorial for Beginners | Useful List with Examples - Sematext

Spayr Manages Multiple Environments On Kubernetes With Qovery

Deploying web applications on Kubernetes with continuous integration

Containers and microservices have revolutionized the way applications are deployed on the cloud. Since its launch in 2014, Kubernetes has become the de facto standard for container orchestration. In this tutorial, you will learn how to deploy a Node.js application on Azure Kubernetes Service (AKS) with continuous integration and continuous deployment (CI/CD).

Making the Most Out of PromQL with VMware Tanzu Observability

Rachna Srivastava contributed to this blog post. Given the popularity of Prometheus and the open source community behind it, it’s no surprise that customers often ask about support for the Prometheus Query Language, PromQL. Many users are already comfortable with PromQL but need the additional performance and scalability of the VMware Tanzu Observability platform.

1/6 - Introduction to Playground Environment

Kubernetes Load Testing Comparison: Speedscale vs K6

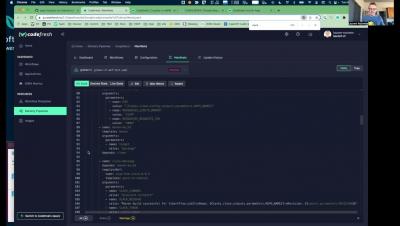

Codefresh GitOps CD Launch Webinar

A Beginners Guide to Init Containers - Civo Academy

2/6 - Create a Playground Environment

3/6 - Check Playground Environment

4/6 - Delete Playground Environment

5/6 - Intro Multi Kubernetes Cluster for Playground Environment

6/6 - Deploy Playground Environment on Playground Kubernetes Cluster

The New Hosted Gitops Platform Experience from Codefresh

Last month we announced the 3 major features we are adding to the Codefresh platform: dashboards for DORA metrics, support for any external Continuous Integration system, and a hosted GitOps service. The hosted GitOps experience (powered by Argo CD) is now available to all new Codefresh accounts (even free ones), so simply by signing up you can start deploying applications right away to your Kubernetes cluster without having to maintain your own Argo CD installation.

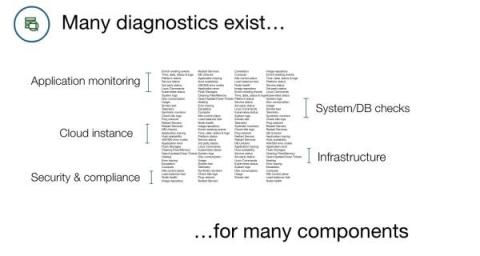

Automating Common Diagnostics for Kubernetes, Linux, and other Common Components

This is the second piece in a series about automated diagnostics, a common use case for the PagerDuty Process Automation portfolio. In the last piece, we talked about the basics around automated diagnostics and how teams can use the solution to reduce escalations to specialists and empower responders to take action faster. In this blog, we’re going to talk about some basic diagnostics examples for components that are most relevant to our users.

Kubewarden v1.1.1 Is Out: Policy Manager For Kubernetes

We are happy to announce the first minor release of Kubewarden v1.0: v1.1.1 is now available! For those of you new to Kubewarden, it is a policy manager for Kubernetes.

Why Preview Environments Are The New Thing in DevOps

Kubernetes on the Edge: Getting Started with KubeEdge and Kubernetes for Edge Computing

Developers are always trying to improve the reliability and performance of their software, while at the same time reducing their own costs when possible. One way to accomplish this is edge computing and it’s gaining rapid adoption across industries. According to Gartner, only 10% of data today is being created and processed outside of traditional data centers.

What's new in Sysdig - July 2022

It’s time for another publication of What’s New in Sysdig in 2022! I’m in charge of the “What’s new in Sysdig” blog for the month of July! Hello, I’m Tom Linkin, a Sr. Solutions Engineer based in the Poconos up in Pennsylvania. I joined the incredible group of people at Sysdig nine months ago and have been helping support sales in the greater NYC region ever since.

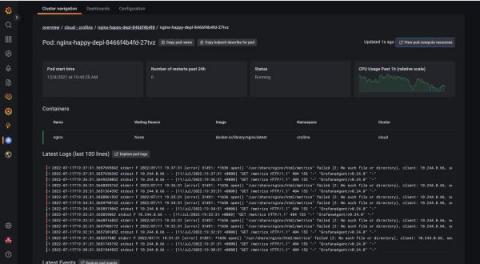

Introducing instant Kubernetes logging with Kubernetes Monitoring in Grafana Cloud

Kubernetes, Prometheus, and Grafana are a trio of technologies that have transformed cloud native development. However, despite how powerful these three technologies are, developers still face gaps in the process of implementing a mature Kubernetes environment.

Understanding the Kubernetes Pod's Lifecycle - Civo Academy

The Definitive Guide to Kubernetes in Production

Kubernetes has quickly grown in popularity, in part due to its flexibility and power as a container orchestration system. It can scale virtually indefinitely, which has enabled it to provide the backbone for many of the world’s most popular online services. Plus, it is accessible and easy to set up. But Kubernetes also comes with a few challenges in production.

Key metrics for monitoring Cilium

Cilium is a Container Network Interface (CNI) for securing and load-balancing network traffic in your Kubernetes environment. As a CNI provider, Cilium extends the orchestrator’s existing network capabilities by giving teams more control over how they build their applications and monitor traffic. For example, vanilla Kubernetes installations typically rely on traditional firewalls and Linux-based network utilities like iptables to filter pod-to-pod traffic by an IP address or port.

Monitor Cilium and Kubernetes performance with Hubble

In Part 1, we looked at some key metrics for monitoring the health and performance of your Cilium-managed Kubernetes clusters and network. In this post, we’ll look at how Hubble enables you to visualize network traffic via a CLI and user interface. But first, we’ll briefly look at Hubble’s underlying infrastructure and how it provides visibility into your environment.

Kubernetes Cluster Sprawl: How to Effectively Manage It Across Distributed, Heterogeneous Environments

If you’re managing multiple Kubernetes clusters at scale, you’ve probably run into Kubernetes cluster sprawl. And if you haven’t, brace yourself, because you’ll likely cross that bridge in the near future.

Four Ways to Run Containers on AWS

AWS provides multiple ways to deploy containerized applications, from small, ready-made WordPress instances on Lightsail to managed Kubernetes clusters running hundreds of instances across multiple availability zones. When deciding on the architecture of your application, you should consider building it serverless. Being free from (virtual) server management enables you to focus more on your unique business logic while reducing your operational costs and increasing your speed to market.

On demand webinar: Containers and Lambdas

An Introduction to Kubernetes Pods - Civo Academy

Codefresh Platform integration with Slack

Everything You Need to Know About Deployment Environments

The Future is Smart Cloud-Native

Managing Your Hyperconverged Network with Harvester

Hyperconverged infrastructure (HCI) is a data center architecture that uses software to provide a scalable, efficient, cost-effective way to deploy and manage resources. HCI virtualizes and combines storage, computing, and networking into a single system that can be easily scaled up or down as required.

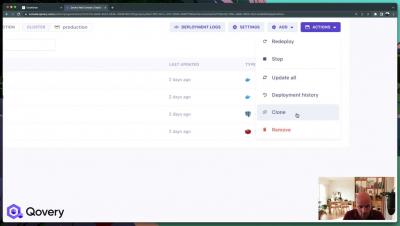

Announcing Qovery V3: What's new?

How to Monitor Docker Metrics | Container Performance Monitoring Explained - Sematext

How to gain Kubernetes visibility in just a few clicks

Verify image signatures with GitHub Actions and KeylessPrefix

With the latest releases of Kubewarden v1.1.0 and the verify-image-signatures policy, it’s now possible to use GithubActions or KeylessPrefix for verifying images. Read our previous blog post if you want to learn more about how to verify container images with Sigstore using Kubewarden.

How to Monitor PHP-FPM with Prometheus

PHP is one of the most popular open source programming languages on the internet, used for web development platforms such as Magento, WordPress, or Drupal. In addition to all PHP bases, PHP-FPM is the most popular alternative implementation of PHP FastCGI. It has additional features which are really useful for high-traffic websites. In this article, you’ll learn how to monitor PHP-FPM with Prometheus.

How to gain Kubernetes visibility in a few clicks

CICD Tool - Razorops integration with GITLAB

CICD Tool - Razorops integration with GITHUB

CICD Tool - Razorops integration with BITBUCKET

Container Management Report 2022: Timely Advice for a Surging Enterprise Kubernetes Market

2022 Gartner® Market Guide for Container Management identifies the major trends in the container and Kubernetes market and offers guidance for organizations deploying containerized platforms. D2iQ, whose offerings we believe align closely with Gartner recommendations, is listed as a Representative Vendor for container management. The findings in the Gartner container management report should be taken in context with the analyst firm’s predictions for widespread cloud-native adoption.

How Tanzu Application Platform and the Backstage Developer Portal Improve DevX

As cloud native concepts and adoption take hold, many enterprises are now considering and implementing ways to achieve the primary objective of cloud native technology: enabling engineers to make significant changes to systems easily, frequently, and confidently. More and more enterprises are recognizing that cloud native technologies, such as Kubernetes, can indeed serve as the foundational infrastructure for building their own in-house platforms, greatly empowering their operations teams.

4 day work week. What have we learned? - Civo Spaces

What should you choose? Docker Swarm vs Kubernetes

Since Linux introduced containerisation many years ago, the focus has shifted from traditional virtual machines to containers. These tools have made application development much easier than it used to be. Docker Swarm and Kubernetes came into play as the number of containers within a system grew and those containers needed to be orchestrated. The question that arises is: which one is the better option?

Building a Custom Grafana Dashboard for Kubernetes Observability

Distributed systems open us up to myriad complexities due to their microservices architecture. There are always little problems that arise in the system. Therefore, engineering teams must be able to determine how to prioritize the challenges. Viewing logs and metrics of such systems enables engineers to know the shared state of the system components, thereby informing the decision-making on what challenge needs to be solved most immediately.

How to Build Multi-Arch Docker Images

With ARM-based dev machines and servers becoming more common, it has become increasingly important to build Docker images that support multiple architectures. This guide will show you how to build these Docker images on any machine of your choosing.

How Does Docker Network Host Work?

Docker is a platform as a service product. With Docker, you can easily deploy applications into Docker containers. Containers are software "packages" that bundle together an application's source code with its libraries, configurations, and dependencies. This helps software run more consistently on different machines. To use Docker containers, you need to understand how Docker networking works. Below, we'll answer the question: "what is Docker network host?". We'll also take a look to see how it works.

Is Kubernetes Hard? 12 Reasons Why, and What to Do About It

Getting Kubernetes right is hard. If you’ve ever checked out Kelsey Hightower’s “Kubernetes the Hard Way,” you’ll know what we are talking about. Tell your family and friends you’ll see them sometime in the not-so-near future because Kubernetes will be consuming your life. Although Kubernetes adoption is skyrocketing, not all deployments succeed, and the issues that cause deployments to fail can occur between Day 0 planning and Day 2 operation phases.

The power of edge computing

The Multi-access Edge Compute (MEC) framework enables mobile operators, application developers, and content providers to deploy predictable cloud-computing capabilities at the network’s edge and in the immediate proximity of mobile networks.

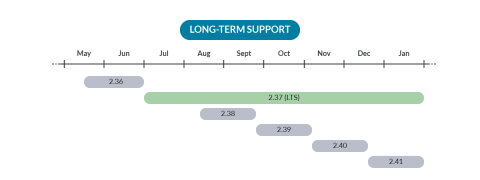

Prometheus 2.37 - The first long-term supported release!

Prometheus 2.37 is out and brings exciting news: this is the first long-term supported release. It’ll be supported for at least six months.

Getting Started With Observability on Kubernetes | Webinar with Ricardo Santos and Andreas Prins

Navigating and Optimizing within the Sea of Azure Instance Types

How to Avoid Getting Your Pod OOMKilled

In this blog, understand why your pod has OOMKilled errors when provisioning Kubernetes resources and how Speedscale can aid with automated testing. When creating production-level applications, enterprises want to ensure the high availability of services. This often results in a lengthy development process that requires extensive testing for the applications or a new release.

Enhance Kubernetes data plane monitoring by scraping Ocean metrics via Prometheus

Manage Kubernetes Clusters using the Civo Pulumi Provider - Civo

Why DevOps Engineers Love and Recommend Qovery

Collect critical AWS metrics faster with Sysdig

Today, we are excited to announce support for Amazon CloudWatch Metric Streams. This support will enable our customers to ingest metrics from AWS CloudWatch in real time, increase metric and state fidelity and time to ingestion while decreasing MTTR, and support cloud metrics at scale without the need to customize or re-configure new AWS service metrics. In this blog, we dig deep into.

What's New with VMware Tanzu RabbitMQ for Kubernetes 1.3

Paula Stack and Roser Blasco co-wrote this post. As a refresher, VMware Tanzu RabbitMQ is based on the hugely popular open source technology RabbitMQ, which is a message broker with event streaming capabilities that connects multiple distributed applications and processes high-volume data in real-time and at scale.

Kubernetes 101: How To Set Up "Vanilla" Kubernetes

Kubernetes is an open source platform that, through a central API server, allows controllers to watch and adjust what’s going on. The server interacts with all the nodes to do basic tasks like start containers and pass along specific configuration items such as the URI to the persistent storage that the container requires. But Kubernetes can quickly get complicated. So, let’s look at Vanilla Kubernetes, the nickname for a K8s setup that’s as basic and elementary as it gets.
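The "watch and adjust" idea in this teaser can be illustrated with a toy reconcile loop. This is only a sketch of the general pattern, not Kubernetes code: the `Cluster` class, the `reconcile` function, and the app names are hypothetical, and replica counts live in a plain dictionary rather than behind an API server.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy stand-in for observed cluster state (hypothetical, not a real client)."""
    running: dict[str, int] = field(default_factory=dict)  # app name -> running replicas


def reconcile(desired: dict[str, int], cluster: Cluster) -> list[str]:
    """Compare desired and observed replica counts and return the actions taken."""
    actions = []
    for app, want in desired.items():
        have = cluster.running.get(app, 0)
        if have < want:
            actions.append(f"start {want - have} x {app}")
        elif have > want:
            actions.append(f"stop {have - want} x {app}")
        cluster.running[app] = want
    # Anything still running that is no longer desired gets stopped.
    for app in list(cluster.running):
        if app not in desired:
            actions.append(f"stop {cluster.running.pop(app)} x {app}")
    return actions


cluster = Cluster(running={"web": 1, "old-job": 2})
print(reconcile({"web": 3}, cluster))  # first pass converges on the desired state
print(reconcile({"web": 3}, cluster))  # second pass finds nothing left to do
```

Running the same reconcile step twice is deliberate: once observed state matches desired state, the loop has nothing to change, which is what makes this style of control loop safe to repeat.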

CICD Pipeline | Case Study | Razorops | 72pi

An Introduction to Kubernetes Observability

If your organization is embracing cloud-native practices, then breaking systems into smaller components or services and moving those services to containers is an essential step in that journey. Containers allow you to take advantage of cloud-hosted distributed infrastructure, move and replicate services as required to ensure your application can meet demand, and take instances offline when they’re no longer needed to save costs.

Monitor your T2A-powered GKE workloads with Datadog

Arm processors have become increasingly popular in recent years, providing energy-efficient, cost-effective processing power to both mobile and cloud computing ecosystems. As a part of this growth, more and more organizations are choosing to leverage the many benefits of Arm-based architectures for their containerized workloads. Today, Google Cloud announced its Arm-based Tau T2A virtual machines (VMs), which you can also use to run workloads in Google Kubernetes Engine (GKE).

Introducing Kubernetes Monitoring in Grafana Cloud

Kubernetes has quickly become the standard container orchestration technology for developers and companies who want to deploy at scale, iterate quickly, and manage a large number of applications and services. At Grafana Labs, we recognized the need for something more powerful for our users to be able to successfully keep an eye on everything happening inside their clusters.

3 Pro Tips To Get The Most Out Of Qovery - Part 1

Migrate your PSPs to Kubewarden Policies!

As announced in past blog posts, Kubewarden has 100% coverage of the deprecated, and soon to be removed, Kubernetes PSPs. If everything goes as expected, the PSPs will be removed in Kubernetes v1.25, due for release on 23rd August 2022. The Kubewarden team has written a script that leverages the migration tool written by AppVia to migrate PSPs automatically. The tool is capable of reading PSP YAML and can generate the equivalent policies in many different policy engines.

Top 15 Docker Container Monitoring tools in 2022

Container Registry Scans with NeuVector

Elevate App Development and DevSecOps Experience with New Integrations in VMware Tanzu Application Platform

Many businesses today rely on delivering modern applications that provide the best customer experience and competitive advantage on any cloud. Modern applications require a modern cloud native infrastructure. One of the clearest signs of cloud native technology mainstreaming (i.e., Kubernetes) is the rapid growth in the number of clusters being deployed in the multi-cloud environment.

Building World Class Solutions Together

With the launch of the Cycle Partner Program, we are committing to making it easier for companies to work with Cycle by creating more transparent and predictable relationships, offering training, resources, incentives, and benefits, some of which will roll out over time as the program evolves. Interested in partnering with Cycle? Contact our partner lead to schedule a meeting.

Kubernetes Monitoring in Grafana Cloud: Getting started

State of Kubernetes 2022: Report Roundup

According to recent surveys and reports on the industry, Kubernetes and containers are more popular than ever. Containers and serverless functions are becoming mainstream and ubiquitous – with a more than 300% increase in container production usage in the past 5 years. This trend is especially true for large organizations, which are often using managed platforms and services.

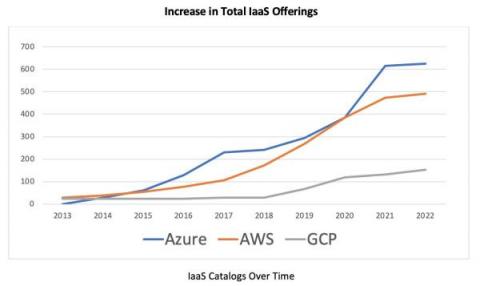

Public Cloud IaaS Catalogs Update

So, you’re looking for the right instance type for your public cloud workload, but how do you decide? Major cloud providers such as Amazon Web Services (AWS), Microsoft Azure Cloud and Google Cloud Platform (GCP) now offer such a large catalog of IaaS instances that it can become difficult to make sense of it all.

Is Open-Source Kubernetes Free? Yes, "Like a Puppy." Here's Why.

The common misconception of open-source Kubernetes is that it is free—but in reality, it has a lot of associated costs, including labor and potential business losses from wasted time, effort, and being late to market. Just like a puppy, Kubernetes software itself might be free, but a do-it-yourself (DIY) deployment involves a lot of care, patience, and unforeseen costs.

Blueprint for Secure OSS Supply Chains

Creating competitive differentiation in telcos through cloud-native

It’s never too early or late to start talking about cloud-native. By 2025, more than 95% of new workloads will be deployed on cloud-native platforms. Clearly, a lot of organizations are on their way to cloud-native adoption, among them some of the prominent telecom operators of our time. After all, the benefits of cloud-native are most pronounced in the telecom sector, where the need for scale, automation and predictable cost structure at optimal OPEX and CAPEX is more persistent than ever.

VMware Application Catalog Now Accessible through VMware Marketplace

Neeharika Palaka and Shagun Tewari co-wrote this blog post. VMware Marketplace is VMware’s one-stop shop for all ecosystem solutions, with a robust catalog of more than 2,000 solutions covering open source software, first-party tools, and commercial software. VMware Marketplace is currently used by thousands of people to download, deploy, subscribe to, and purchase these solutions in a direct and easy way.

That's a Wrap for DevOps Loop 2022: Recap and Highlights

For the second year in a row, the DevOps community came together virtually for our DevOps Loop conference. This event allowed us to examine DevOps and its core principles in the context of modern applications, multi-cloud, and Kubernetes. Organizations are increasingly looking to internal platform teams to deliver an awesome developer experience while ensuring reliability, scalability, and security, by unlocking the path to production for modern apps and helping their products soar!

Cut Your Cloud Burn with Intel and Densify

CICD Pipeline Using Razorops | Continuous Integration | Continuous Deployment

What Is CICD Pipeline | Container Native CICD | Razorops | Best CICD Tool

Kubernetes Cluster Autoscaler vs Karpenter

Enabling Trust Driven Development - Shipa Insights

When you think of TDD, you might lean towards Test-Driven-Development. In Tomasz Manugiewicz’s ACE 2022 talk, though, the ‘T’ in TDD could also mean Trust, i.e., Trust-Driven-Development. The talk boils down to this: if there is trust, there is autonomy. If there is autonomy, creativity flourishes. Trust is built incrementally, and incremental success builds success. Software engineering is a team sport and an exercise in iteration.

Introducing VMware Tanzu GemFire for Redis Apps

The release of VMware Tanzu GemFire 9.15 introduces compatibility with the VMware Tanzu GemFire for Redis Apps add-on. This add-on enables compatibility between Redis applications and Tanzu GemFire for the first time ever, unlocking enterprise-ready features for your Redis applications.

The future of K3s and Kubernetes

"Civo is inspiring us to build lightning quick demos" - Tao Hansen from Garden.io

Drive Tanzu Mission Control Cluster Configuration and Add-ons with Flux CD

VMware Tanzu Mission Control users can now drive clusters via GitOps. This new feature of Tanzu Mission Control is built on Flux CD and enables users to attach a git repository to a cluster and sync YAML artifacts (using Kustomize) from the repository to the cluster. This feature provides a method for managing cluster configurations with Tanzu Mission Control via continuous delivery from a git repository.

Community Spotlight series: Calico Open Source user insights from Cloud Native Technologist, Jintao Zhang

In this issue of the Calico Community Spotlight series, I’ve asked Jintao Zhang from API7.ai to share his experience with Kubernetes and Calico Open Source. API7.ai is an open-source infrastructure software company that helps businesses manage and visualize business-critical traffic, such as APIs and microservices to accelerate business decisions through data.

Building Everything-as-Code? Learn These CI/CD Processes and Tools First

Here at Kublr we always emphasize how important it is to understand the foundations of Kubernetes (K8s) and its operations tools so you can more efficiently manage your applications and simplify your cloud-native development workflow. Understanding these components on the front end is equally important as we begin our build processes, especially when building with an everything-as-code approach.

A Path to Legacy Application Modernization Through Kubernetes

Modern application deployments rely heavily on containerization for its scalability, availability and ease of maintenance. Legacy applications implemented before the containerization era often use monolithic, hardware-centric architectures that are difficult to scale and manage. These legacy applications may have multiple services bundled into the same deployment unit without a logical grouping.

Q2 2022 product retrospective - Last quarter's top features

Heroku Dilemma: The Growing Startups' Journey

Automating ConfigMap changes with GitOps and Argo CD

It's Time for a Straight-Forward Pricing Model

Today, we’re excited to announce the release of Cycle’s new pricing model! With this new model, we aim to make our pricing far more straightforward and better suited for larger deployments and customers. While our current pricing model solved the needs of our customers for the last few years, we’ve learned enough that it’s now time to make a change. Before talking about the new model, let’s dive into how we got here.

Civo Update - July 2022

In June, we hosted our online meetup with ContainIQ surrounding k8s monitoring and observability. You can catch up on the discussion between Matthew Lenhard (Co-founder & CTO of ContainIQ) and Kai Hoffman (Developer Advocate at Civo) here if you missed it. Meanwhile, Kamesh Sampath from our Developer Advocate demo program explains how Civo’s speed and developer experience is great to work with in our latest Civo Shorts.

Using Argo CD and Kustomize for ConfigMap Rollouts

Kubernetes offers a way to store configuration files and manage them via a ConfigMap. Functionally, ConfigMaps seem very similar to Kubernetes Secrets: both constructs are used to store information that can be used in a Pod. This information could be usernames and passwords or a connection string to a database.
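To make the similarity concrete, here is a minimal sketch that builds both kinds of manifest as plain Python dictionaries. The helper names (`config_map`, `secret`) and the sample keys and values are hypothetical and chosen only for illustration. The one structural difference shown is that values under a Secret's `data` field are base64-encoded, whereas ConfigMap values are plain strings. Base64 is an encoding, not encryption.

```python
import base64
import json


def config_map(name: str, data: dict[str, str]) -> dict:
    """Build a ConfigMap manifest; values are stored as plain strings."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name},
        "data": dict(data),
    }


def secret(name: str, data: dict[str, str]) -> dict:
    """Build an Opaque Secret manifest; values under `data` are base64-encoded."""
    encoded = {k: base64.b64encode(v.encode()).decode() for k, v in data.items()}
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "type": "Opaque",
        "metadata": {"name": name},
        "data": encoded,
    }


# Hypothetical sample values, not taken from the article.
print(json.dumps(config_map("app-config", {"DB_HOST": "db.example.internal"}), indent=2))
print(json.dumps(secret("app-credentials", {"DB_USER": "demo"}), indent=2))
```

Either dictionary could be serialized and applied to a cluster. The point of the sketch is only that the two objects have nearly the same shape.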