July 2023

We Let Our Developers Choose Their Own K8S Requests and Limits and This Happened!

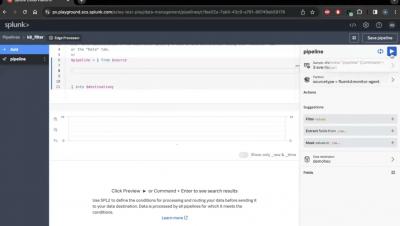

Splunk Edge Processor: Filtering Kubernetes Data to Remove Events

Bridging ClickOps and GitOps

Kubernetes Monitoring Best Practices

Kubernetes can be installed using different tools, whether open source, from a third-party vendor, or in a public cloud. In most cases, default installations have limited monitoring capabilities. Therefore, once a Kubernetes cluster is running, administrators must implement monitoring solutions to meet their requirements. Effective Kubernetes monitoring requires a mix of tools, strategy, and technical expertise. To help you get it right, this article will explore seven essential Kubernetes monitoring best practices in detail.

Ep. 5: Scaling the Cloud: The Growth & Future of AKS featuring Jorge Palma

Best Practices for Monitoring Kubernetes with Grafana

There are tons of tools to choose from when it comes to visualizing data, but Grafana has become one of the best ways for organizations to visualize information and get notified about events happening within their infrastructure or data. In this article, we will take a look at the best practices for monitoring Kubernetes using Grafana.

Kubernetes Gateway API (Everything You Should Know)

The Kubernetes networking landscape is shifting. The traditional Kubernetes Ingress approach is being complemented and, in some cases, replaced by a more powerful, flexible, and extensible standard: the Kubernetes Gateway API. Kubernetes has become the go-to platform for orchestrating and managing containerized applications. A key aspect of Kubernetes that's crucial for the functionality of these applications? Networking.

GitOps the Planet #17: Ditching Old and Busted CI/CD with Kat Cosgrove

Introduction to Azure Kubernetes Service

In the constantly evolving world of technology, managing containerized applications at a scale that can match growing business demands is a challenging task. Microsoft, however, has emerged as a leader in this field, offering the Azure Kubernetes Service (AKS). AKS is a managed container orchestration service that provides a rich and robust platform for developers to deploy, scale, and manage their applications.

The 3 ways of K3s - Civo Navigate NA 2023

Komodor Announces the Availability of Amazon Elastic Kubernetes Service [Amazon EKS] Blueprints as an Add-On

We’re pleased to announce that the Komodor platform has published an Amazon Elastic Kubernetes Service (Amazon EKS) Blueprints CDK Add-On. Amazon EKS is a managed Kubernetes service that streamlines the deployment and scaling of cloud-based or on-prem K8s clusters.

Troubleshooting Kubernetes Deployment at Every Level!

Kubernetes has emerged as the de facto standard for container orchestration, with its ability to automate deployment, scaling, and management of containerized applications. However, even with the best practices and expertise, Kubernetes deployment can sometimes be a complex and challenging process. It involves multiple layers of infrastructure, including the application, Kubernetes cluster, nodes, network, and storage, and each layer can have its own set of issues and challenges.

ClickOps over GitOps - Civo Navigate NA 2023

Dynamic Kubernetes PersistentVolumeClaim (PVC) reuse with Ocean for Apache Spark

How Infrastructure as Code Helps IT Teams Cope with Employee Churn

One day a few years ago, Sushil Kumar, our head of DevOps, was worried. A key admin on his team in charge of private and public cloud infrastructure had just given notice that he was leaving in two weeks. Even after scheduling a knowledge transfer session to other team admins, Kumar saw that their operations would be at risk. And he was right. Kumar and his team spent the next 18 months dealing with the fallout.

Cloud-Based vs Cloud-Native: What's the difference?

Get to know the differences between cloud-native and cloud-based applications, their benefits, and why a cloud-native tool like Cloudsmith is a game-changer for efficient and secure software artifact management. The term 'cloud' has become a buzzword in the tech industry, often used interchangeably to describe anything from online storage to complex computing services. But what does it really mean? And more importantly, what does it mean for your business?

D2iQ's Groundbreaking AI Chatbot Levels the Kubernetes Skills Gap

D2iQ has introduced an AI assistant that helps organizations resolve problems more quickly and easily, including coding errors and system failures. The new DKP AI Navigator can help reduce the duration and cost of system and application bottlenecks, misconfigurations, and downtime, and ultimately help organizations overcome the Kubernetes skills gap.

How to monitor your Apache Mesos clusters with Grafana Cloud

We’re excited to introduce a dedicated Grafana Cloud solution for Apache Mesos, an open-source project for managing clusters in your data center and at cloud scale. Apache Mesos is a distributed systems kernel, running on every machine in a cluster and providing easy orchestration of every resource in the cluster. This allows you to treat compute units, memory, and disk as a single pool of resources.

Civo's Kubernetes & Cloud Native Glossary

This post will provide you with an A-Z guide of terms associated with Kubernetes and Cloud Native. We have compiled a list of over 100 terms with the aim of helping you understand the terminology required to start learning about Kubernetes and Cloud Native.

Container Security Fundamentals - Linux Namespaces (Part 3): The Network Namespace

A GitOps Approach to Infrastructure Management with Codefresh (Ovais Tariq & Robert Barabas, Tigris)

Say Goodbye to Your Staging Environment. Use Ephemeral Environments!

A Software Developer's Guide to Getting Started With Kubernetes: Part 2

In Part 1 of this series, you learned the core components of Kubernetes, an open-source container orchestrator for deploying and scaling applications in distributed environments. You also saw how to deploy a simple application to your cluster, then change its replica count to scale it up or down. In this article, you’ll get a deeper look at the networking and monitoring features available with Kubernetes.

Building a Reliable and Scalable Infrastructure: Partoo's Journey with Qovery

Kubernetes Community Day Munich Recap: A Meeting of Tech Minds and Ideas

This July, the community spirit was profoundly vibrant in the scenic city of Munich, as Kubernetes Community Day (KCD) Munich brought together a meeting of minds and inspired the open-source collaboration we all know and love. The event was a testament to the strength and vitality of the Kubernetes community, which pulsed with an energy of shared intellectual curiosity and passion for all things Kubernetes.

Optimizing Network Performance using Topology Aware Routing with Calico eBPF and Standard Linux dataplane

In this blog post, we will explore the concept of Kubernetes topology aware routing and how it can enhance network performance for workloads running in Amazon. We will delve into topology aware routing and discuss its benefits in terms of reducing latency and optimizing network traffic flow. In addition, we’ll show you how to minimize the performance impact of overlay networking, using encapsulation only when necessary for communication across availability zones.

DevOps vs Platform Engineering: The Common Goal

In the dynamic landscape of software development, the terms DevOps and Platform Engineering have garnered attention. Both concepts, although distinct, aim at a shared goal: to build efficient, streamlined systems that simplify code deployment within organizations.

Elevate the Security of Your Kubernetes Secrets with VMware Application Catalog and Sealed Secrets

Alfredo García, R&D manager at VMware, contributed to this blog post. VMware Application Catalog now includes enterprise support for Sealed Secrets, enabling customers to add asymmetric cryptography-based protection to their Kubernetes Secrets stored in shared repositories.

A Detailed Guide to Docker Secrets

This post was written by Talha Khalid, a full-stack developer and data scientist who loves to make the cold and hard topics exciting and easy to understand. No one has any doubt that microservices architecture has already proven to be efficient. However, implementing security, particularly in an immutable infrastructure context, has been quite the challenge.

10 Burning Questions CTOs Have About Kubernetes

As enterprise architecture and technology innovation leaders, it's crucial to understand the benefits, limitations and best practices associated with building cloud native apps and modernizing legacy workloads. Gartner recently published a worthwhile read addressing what keeps CTOs up at night while assessing Kubernetes and container adoption.

Making Sense: AI Effect, Red Hat Ruckus, Monoliths vs. Microservices

Each day the news assails us with a jumbled wave of trends, hype, provocative claims, and skirmishes. From news venues around the globe, the D2iQ brain trust is called upon to provide insights and commentary to help make sense of the hot topics and controversies affecting the cloud-native and Kubernetes communities.

Using AKS with workload identities in terraform

We all use Kubernetes on a daily basis, and the more we use it, the more apparent it becomes that Kubernetes alone is not as fruitful as it is with deeper integrations. One of these integrations is with Microsoft Azure, which provides the ability to connect, use, and retrieve information from services on your behalf.

Architecting Cloud Instrumentation

Architecting cloud instrumentation to secure a complex and diverse enterprise infrastructure is no small feat. Picture this: you have hundreds of virtual machines, some with specialized purposes and tailor-made configurations, thousands of containers with different images, a plethora of exposed endpoints, S3 buckets with both public and private access policies, backend databases that need to be accessed through secure internet gateways, etc.

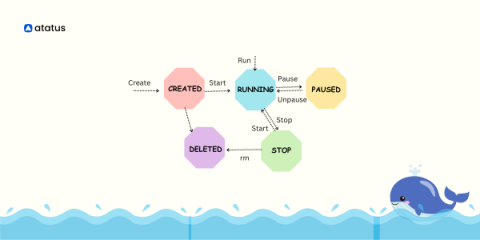

Docker Container Lifecycle Management

Managing an application's dependencies and tech stack across numerous cloud and development environments is a regular difficulty for DevOps teams. Regardless of the underlying platform, the team must keep the application stable and functional as part of its regular duties. One possible solution to this problem is to create an OS image that already contains the libraries and configurations needed to run the application.

How to Validate Error Handling | A Kubernetes Guide

Embracing Asynchronous Communication at Qovery

Comparing Networking Solutions for Kubernetes: Cilium vs. Calico vs. Flannel

In Kubernetes, networking holds immense significance as it enables seamless communication among various components and facilitates uninterrupted data flow. To allow pods within a Kubernetes cluster to engage with other pods and cluster services, each of them requires an exclusive IP address. Consequently, networking solutions in Kubernetes encompass more than merely interconnecting machines and devices.

Hacking our Way Towards ML-first Jupyter Notebooks - Civo Navigate NA 2023

Unlocking the Power of Containerization: Strategies for Technology Leaders

Understanding the Basics of Application Autoscaling

Simplify troubleshooting in Google Kubernetes Engine with new playbooks

New playbooks can help detect issues automatically and provide support when troubleshooting your GKE environment.

A Software Developer's Guide to Getting Started With Kubernetes: Part 1

Put simply, Kubernetes is an orchestration system for deploying and managing containers. Using Kubernetes, you can operate containers reliably across different environments by automating management tasks such as scaling containers across Nodes and restarting them when they stop. Kubernetes provides abstractions that let you think in terms of application components, such as Pods (containers), Services (network endpoints), and Jobs (one-off tasks).

Discover, Learn, and Experience: The Qovery Playground is Now Open!

A Chasm Crossed: The Unstoppable Surge of Kubernetes Adoption

In the ever-evolving landscape of enterprise technology, certain milestones mark the transition from innovation to recognition by early adopters to widespread adoption. Gone are the days when Kubernetes was just a segmented buzzword or something only the tech-savvy companies played with. Today, it's a force to be reckoned with, and we need to take a closer look at what it means for us.

How to monitor Kubernetes network and security events with Hubble and Grafana

Anna Kapuścińska is a Software Engineer at Isovalent with rich experience wearing both developer and SRE hats across the industry. She now works on Isovalent observability products such as Hubble, Tetragon, and Timescape, as well as the respective Grafana integrations for all of them.

Choosing the Right DevOps Stack in 2023: A Guide for Startups

Deploy Kubernetes Dashboard through Civo Marketplace - Civo.com

Installing FerretDB through Civo Marketplace - Civo.com

Canonical Kubernetes 1.28 pre-announcement

VMware Celebrates Istio's Graduation to CNCF

As we acknowledge Istio's graduation to the Cloud Native Computing Foundation (CNCF), it's important to note that it’s not just a momentous occasion for Istio, but for the entire cloud native community. This milestone marks a significant growth trajectory for Istio and paves the way for future innovation and success in the years to come. In the realm of microservices, the significance of Istio's role cannot be overstated.

Monitor the past, present, and future of your Kubernetes resource utilization

Greetings, Kubernetes Time Lords! Through a series of recent updates to our multi-purpose Kubernetes Monitoring solution in Grafana Cloud, we’ve made it easier than ever to assess your resource utilization, whether you’re looking at yesterday, today, or tomorrow. All companies that use Kubernetes, regardless of size, should monitor their available resource utilization. If a fleet is under-provisioned, the performance and availability of applications and services are at serious risk.

Your Guide to Kubernetes Air-Gapping Success

Military and intelligence agencies, hospitals and corporations all deploy air-gapped environments to protect their sensitive information from breaches and theft. An air-gapped environment aims to isolate and limit access to classified and sensitive data. An air-gapped environment can be as simple as five PCs in a room connected only to one another, whereas air-gapped environments like the U.S.

Announcing Kubernetes 360 Updates for Deeper Visibility into Kubernetes Performance

We’re thrilled to announce new feature updates for Logz.io’s Kubernetes 360 to provide deeper visibility and additional troubleshooting capabilities for your Kubernetes environment.

[Product Klip] Application View

Take Your Kubernetes Game From Zero to Operations Hero

Merging to Main #4: Feature Flags

VMware Tanzu Aligns to Multi-Cloud Industry Trends

Businesses today are prioritizing software agility to remain competitive in a rapidly changing world. However, the perks of having access to different clouds, tools, and methodologies can also bring layers of complexity. As the cloud native landscape continues to grow, it’s vital that organizations invest in capabilities that accelerate developer productivity, drive revenue, and maintain a competitive edge.

8 GKE Monitoring Best Practices To Apply ASAP

DevOps and Kubernetes: We've Been Doing It Wrong

Platform engineering as a replacement for DevOps has become a hot topic, with provocative critics stoking the controversy by pronouncing DevOps dead. The underlying reason for these pronouncements is that the once-radical DevOps model is at odds with the new cloud-native container management model to which the now-obsolete DevOps model is being applied. Let’s take a closer look.

Real-World Container & Image Security: Present & Future - Civo Navigate NA 2023

Using SigNoz to Monitor Your Kubernetes Cluster

Deploy Your Production Infrastructure on AWS in 15 Minutes with Qovery

Top Container Monitoring Tools

Container monitoring refers to the process of monitoring and managing containers deployed within a containerization platform, such as Docker or Kubernetes. As containerization has become increasingly popular in software development and deployment, monitoring and managing containerized environments has become increasingly important.

[Product Klip] ArgoCD & FluxCD Integration

How to use Kubernetes to deploy Postgres

Tightening Security by Shifting Left

The growing need for a secure software development lifecycle has prompted a discussion around the concept of “shift left.” For decades, security has been addressed at the end of the development cycle, and software development has been planned mostly linearly. As cloud-native applications evolve and users demand real-time and 24/7 software services, scheduling security and testing at the end of the development cycle can create significant development, operational, and cost implications.

Day 2 Challenges - Why Hiring a Platform Team is Not Enough

If you’ve been anywhere in the DevOpsphere in recent times, you have certainly encountered the Platform Engineering vs. DevOps vs. SRE debates that are all the rage. Is DevOps truly dead?! Is Platform Engineering all I need?! Have I been doing it wrong all along? These have become more popular than the mono vs. multi-repo flame wars from a few years back.

GitOps the Planet #15: AI Comes to Kubernetes with Alex Jones

Turbocharging host workloads with Calico eBPF and XDP

In Linux, network-based applications rely on the kernel’s networking stack to establish communication with other systems. While this process is generally efficient and has been optimized over the years, in some cases it can create unnecessary overhead that can impact the overall performance of the system for network-intensive workloads such as web servers and databases.

The Fundamentals of Portfolio Modernization

Application modernization has been all the rage for years, but we’ve seen firsthand here at VMware Tanzu Labs that most organizations struggle to achieve the outcomes they initially hoped for. Modernization complexity goes far beyond technology, especially within larger organizations that contain dozens of business units, many stakeholders, and multiple—sometimes conflicting—priorities. Many of the organizations we work with have experienced these common roadblocks.

Troubleshooting Bad Health Checks on Amazon ECS

Health checks are an important factor when working with containerized applications in the cloud and are the source of truth for many applications in terms of their running status. In the context of Amazon Elastic Container Service (ECS), health checks are a periodic probe to assess the functioning of containers. In this blog, we will explore how Lumigo, a troubleshooting platform built for microservices, can help provide insights into container crashes and failed health checks.

The Secret Ingredients to Building a Thriving Company Culture - Civo Navigate NA 2023

Densify Talks, Automated Cloud Instance Selection with Josh Hilliker from Intel

On this episode of Densify Talks, Josh and Andrew discuss automated instance selection and the importance of letting machines make the resource decisions as cloud environments scale. Speaking from the perspective of a hardware manufacturer, Josh offers unique insight into some of the criteria that affect the performance and cost of hosting apps in the cloud, and how to leverage “optimization as code” to automate the optimization process.

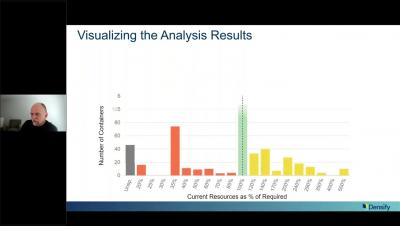

Kubernetes Optimization: Focus on the Highest Impact Actions

Meet Elemental: Cloud Native OS Management in Kubernetes

Wouldn’t it be great if Rancher could provision and manage not only Kubernetes clusters but also the OS running on the cluster nodes? This is the goal we had in mind when we started working on Elemental. Elemental adds to Rancher the ability to install and manage a minimal OS based on SUSE Linux Enterprise technology, delivered and managed in a fully cloud native way. This simplifies the infrastructure (you only need a container registry) and day 2 operations.