December 2023

How To Build Your DevOps Toolchain Effectively

In software development, the adoption of DevOps practices has become imperative for teams aiming to streamline workflows, boost collaboration, and deliver high-quality products efficiently. At the heart of a successful DevOps implementation lies a robust toolchain: a set of interconnected tools and technologies designed to automate, monitor, and manage the software development lifecycle.

GoReplay vs. Speedscale for Kubernetes Load Testing

2023: A Year to Remember

With such an eventful year of releases and development, we wanted to take a moment to reflect on all that's been accomplished this trip around the sun. Before we do that, a quick message to the users who've made this year so special: thank you so much for joining us on this amazing journey. It is your trust and unwavering support that have helped us grow this vision into a reality.

Komodor Announces GA of New Kubernetes Cost Optimization Capabilities

While Kubernetes has revolutionized how we manage and scale containerized applications, the flip side of this robustness is often a rising cloud bill. As you navigate the complexities of cluster growth across teams and applications, cost management can become a genuine headache. Enter Komodor’s newly released Cost Optimization Suite. In this blog post, we’ll unpack how this feature-rich addition to the Komodor platform will empower you to optimize costs without sacrificing performance.

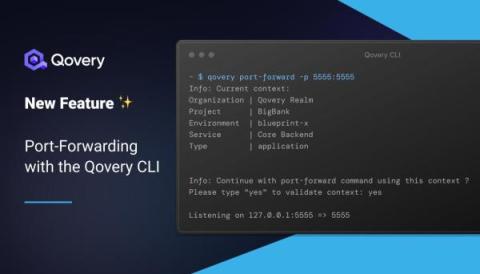

New Feature: Port-Forwarding with Qovery CLI

The Complete kubectl Cheat Sheet

Using Karpenter With EKS Fargate To Cut Costs On EKS Infrastructure

What's an Internal Developer Platform?

Kubernetes

Kubernetes (also known as k8s or “kube”) is an open source container orchestration platform that automates many of the manual processes involved in deploying, managing, and scaling containerized applications.

Rancher Live: k3s-as-a-service

WebAssembly Arrives: Predictions for 2024

Many will rightly point to 2023 as a year of AI, and it certainly was that. But what really struck me in 2023 were the incremental, often less headline-grabbing changes that have made developers’ lives easier and unlocked so much innovation. Kubernetes has continued to mature this year, going ‘under the hood’ in organizations’ infrastructure and regularly being used to support major use cases by large, enterprise-level businesses.

The Year Tech Went Green: Reflections and Predictions for 2024

2023 was a big year for sustainability. More and more tech teams are realizing that sustainability is not a nice-to-have but a strategic priority for businesses. This conviction extends from leadership through to developers. I have been blown away by developers leading the charge to create innovative new tools for accountability and Corporate Social Responsibility (CSR).

Kubernetes and Beyond: A Year-End Reflection with Kelsey Hightower

With 2023 drawing to a close, the final OpenObservability Talks of the year focused on what happened this year in open source, DevOps, observability and more, with an eye towards the future. I was delighted to be joined by a special guest, Kelsey Hightower, a renowned figure in the tech community, especially known for his contributions to the Kubernetes ecosystem.

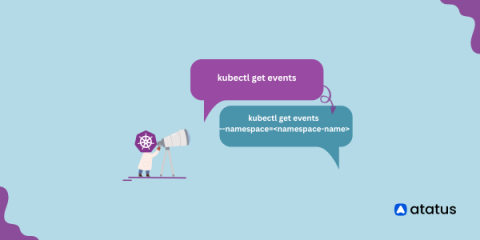

Kubernetes Events: A Complete Guide!

Kubernetes stands out as a powerful orchestrator, managing the deployment, scaling, and operation of containerized workloads. A key component of Kubernetes observability and troubleshooting capabilities is the generation of events. These events serve as vital records, documenting incidents and changes within the cluster, offering real-time insights into the health and dynamics of the system.
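As a rough illustration of treating events as records, the sketch below filters a list of event-like dictionaries for warnings and counts them by reason. The field names `type`, `reason`, and `involvedObject` mirror the Kubernetes Event object; the sample data and the helpers `warning_events` and `count_by_reason` are hypothetical and not taken from the guide.

```python
from collections import Counter

# Hypothetical sample data shaped like Kubernetes Event objects
# (only a few fields are shown; real events carry many more).
SAMPLE_EVENTS = [
    {"type": "Normal", "reason": "Scheduled",
     "involvedObject": {"kind": "Pod", "name": "web-1"}},
    {"type": "Warning", "reason": "BackOff",
     "involvedObject": {"kind": "Pod", "name": "web-2"}},
    {"type": "Warning", "reason": "FailedScheduling",
     "involvedObject": {"kind": "Pod", "name": "web-3"}},
    {"type": "Warning", "reason": "BackOff",
     "involvedObject": {"kind": "Pod", "name": "web-2"}},
]


def warning_events(events):
    """Return only the events whose type is "Warning"."""
    return [e for e in events if e.get("type") == "Warning"]


def count_by_reason(events):
    """Count how many events were recorded for each reason."""
    return Counter(e["reason"] for e in events)


warnings = warning_events(SAMPLE_EVENTS)
reason_counts = count_by_reason(warnings)

for reason, count in reason_counts.most_common():
    print(f"{reason}: {count}")
```

Against a live cluster, the same filtering would apply to whatever event list your client of choice returns; only the data source changes.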

Top 11 Kubernetes Monitoring Tools [Includes Free & Open-Source] in 2024

Leveraging Argo Workflows for MLOps

As the demand for AI-based solutions continues to rise, there’s a growing need to build machine learning pipelines quickly without sacrificing quality or reliability. However, since data scientists, software engineers, and operations engineers use specialized tools specific to their fields, synchronizing their workflows to create optimized ML pipelines is challenging.

Top 10 Openshift Alternatives & Competitors

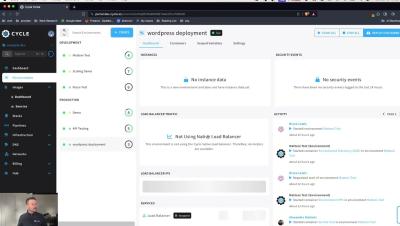

Introducing Cycle's Native Load Balancer

Over the last few months, you may have noticed that many of our changelog releases mentioned the release of, and improvements to, Cycle’s native load balancer. While this load balancer still officially remains in beta status, we wanted to begin diving into the details around it a bit more thoroughly: What is it? Why did we build it? How can you use it?

What's new in Calico v3.27

Calico v3.27 is out 🎉 and there are a lot of new features, updates, and improvements that are packed into this release. Here is a breakdown of the most important changes.

Cloud Resource Governance

Platform Engineering Predictions for 2024

How to troubleshoot unschedulable Pods in Kubernetes

What's new in Kubernetes v1.29: Mandala

As Kubernetes continues its journey of innovation and improvement, the release of Kubernetes v1.29, also known as the Mandala release, marks another significant milestone. This update brings a total of 49 enhancements: 11 have graduated to Stable, 19 have entered Beta, and 19 have entered Alpha. In this blog, we'll dive into the key updates of Kubernetes v1.29, highlighting how these changes will shape the future of container orchestration.

Looking Back on 2023 and Our Plans for 2024

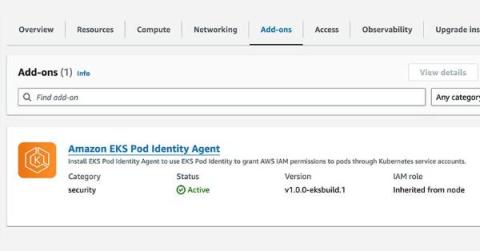

Exploring the new EKS Pod Identity Functionality

One announcement that caught my attention in the EKS space during this year’s AWS re:Invent conference was the addition of the Amazon EKS Pod Identities feature. This new addition helps simplify the complexities of AWS Identity and Access Management (IAM) within Elastic Kubernetes Service (EKS). EKS Pod Identities simplify IAM credential management in EKS clusters, addressing an area that has been problematic over the past few years as microservice adoption has risen across the industry.

Fleet & Discovery | Sematext

Kubernetes Reliability Risks: How to monitor for critical issues at scale

3 Myths About Backstage

Applied Category Theory for Cloud Native Innovation with Sal Kimmich - Navigate Europe 23

Deploying a Python Application with Kubernetes

A powerful open-source container orchestration system, Kubernetes automates the deployment, scaling, and management of containerized applications. It’s a popular choice in the industry these days. Automating tasks like load balancing and rolling updates leads to faster deployments, improved fault tolerance, and better resource utilization, the hallmarks of a seamless and reliable software development lifecycle.

Monitor Docker With Telegraf and MetricFire

Monitoring your Docker environment is critical for ensuring optimal performance, security, and reliability of your containerized applications and infrastructure. It helps in maintaining a healthy and efficient environment while allowing for timely interventions and improvements. In general, monitoring any internal services or running processes helps you track resource usage (CPU, memory, disk space), allowing for efficient allocation and optimization.

How Authorization Evolves with Alex Olivier: From Basic Roles to ABAC - Navigate Europe 23

Best Rancher Alternatives & Competitors

Navigating Engineering Tradeoffs

In part 1 of this two-part blog, we looked at some common engineering tradeoffs. But how might someone navigate these tradeoffs and build a model that works for their product? Here are some core concepts that can help along the way.

Container Deployments 101

How to fix Kubernetes init container errors

Canonical Kubernetes 1.29 is now generally available

The Year of AI: Reflections and Predictions for 2024

Who could have predicted that 2023 would see such a huge leap forward in Artificial Intelligence (AI)? That this would be the year industries decided that this is the decade we would solve AI? From the earliest research as far back as the 1940s, we’ve all been holding our breath, wondering when AI will live up to the expectations painted by science fiction writers and futurists. With the arrival of ChatGPT from OpenAI, we’ve been catapulted into the next generation.

Accelerate Microservice Debugging with Telepresence with Edidiong Asikpo - Navigate Europe 23

Troubleshooting CrashLoopBackOff Errors in Kubernetes

DevOps Insights with Dennis: Kubernetes cluster orchestration with Ansible and Terraform

What you can't do with Kubernetes network policies (unless you use Calico): Policies to all namespaces or pods

Continuing from my previous blog in the series, What you can’t do with Kubernetes network policies (unless you use Calico), this post focuses on use case number five: default policies that are applied to all namespaces or pods.

How K8s Preview Environments Revolutionize the Software Development Lifecycle - Navigate Europe 23

Five reasons why every CIO should consider Kubernetes

You should read this if you are an executive (CIO/CISO/CxO) or IT professional seeking to understand various Kubernetes business use cases. You’ll address topics like: Many enterprises adopting a multi-cloud strategy and breaking up their monolithic code realize that container management platforms like Kubernetes are the first step to building scalable modern applications.

Best Kubernetes Alternatives & Competitors

Top 10 Ephemeral Environments Solutions in 2024

How to Run Puppet in Docker

Real World, Server Side, WebAssembly with Spin with David Flanagan - Navigate Europe 23

Building Modern Data Microservices with VMware Tanzu Data Services

As many VMware customers have found in recent years, cloud native microservice-based applications improve IT operations efficiency and security along with developer speed and productivity. When data is at the heart of those mission-critical applications, VMware Tanzu Data Services, a portfolio of popular enterprise-grade cloud native data solutions for data in motion and at rest, can help.

We are talking with Jeroen van Erp from StackState at KubeCon NA 2023

StackState with Rancher Demo by Jeroen van Erp

EKS Cost Traps: 3 Common Mistakes And How To Avoid Them

The Benefits of using containerization in DevOps workflows

As software development cycles demand rapid deployment and scalability, traditional infrastructure struggles to keep pace. Enter containerization, a revolutionary technology empowering DevOps teams to streamline their workflows, enhance portability, and drive efficiency in software development and deployment.

Yay CPU Limits or Nay CPU Limits 001 by Natan Yellin - Navigate Europe 23

Ephemeral Environments for Every PR - Are We There Yet? by Yshay Yaacobi - Navigate Europe 23

Automating Remote Edge Deployments session live from KubeCon NA 2023

Equipping Dev Teams for Peak Observability Performance 001 with Andreas Prins - Navigate Europe 23

Introducing Products: A Tool to Model Argo CD Application Relationships and Promotions

At Codefresh, we are always happy to see companies and organizations as they adopt Argo CD and get all the benefits of GitOps. But as they grow we see a common pattern: It is at this point that organizations come to Codefresh and ask how we can help them scale out the Argo CD (and sometimes Argo Rollouts) initiative in the organization. After talking with them about the blockers, we almost always find the same root cause.

What is a Kubernetes cluster mesh and what are the benefits?

Kubernetes is an excellent solution for building a flexible and scalable infrastructure to run dynamic workloads. However, as our cluster expands, we might face the inevitable situation of scaling and managing multiple clusters concurrently. This can introduce a lot of complexity into our day-to-day workload maintenance and make it harder to keep all our policies and services up to date in all environments.

Deploying a Golang Microservice to Kubernetes

With the rise of cloud computing, containerization, and microservices architecture, developers are adopting new approaches to building and deploying applications that are more scalable and resilient. Microservices architecture, in particular, has gained significant popularity due to its ability to break down monolithic applications into smaller, independent services.

Rancher Live: What is a Service Mesh

Build Your Own kubectl in Node, Python and Go by Kyle Quest - Navigate Europe 23

Kubernetes Vs. Docker Vs. OpenShift: What's The Difference?

Tradeoffs In Software Engineering

Tradeoff: a balance achieved between two desirable but incompatible features; a compromise. Schooling often promotes the idea that there is a right and wrong answer to questions… It does little to prepare us for how often there are multiple right answers and no definitive best path forward. In a time when we have unlimited information at our fingertips, you can throw a stone and hit a thousand people with an opinion.

A deep dive into CPU requests and limits in Kubernetes

In a previous blog post, we explained how containers’ CPU and memory requests can affect how they are scheduled. We also introduced some of the effects CPU and memory limits can have on applications, assuming that CPU limits were enforced by the Completely Fair Scheduler (CFS) quota. In this post, we are going to dive a bit deeper into CPU and share some general recommendations for specifying CPU requests and limits.
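To make the link between a CPU limit and the CFS quota concrete, here is a minimal sketch of the arithmetic. It assumes the common default CFS period of 100 ms; the function names `cfs_quota_us` and `throttled_us` are hypothetical helpers for illustration, not part of Kubernetes or of the post.

```python
# Assumption: the CFS period is the common default of 100 ms (100,000 microseconds).
CFS_PERIOD_US = 100_000


def cfs_quota_us(cpu_limit_millicores: int, period_us: int = CFS_PERIOD_US) -> int:
    """CPU time, in microseconds, that a container may use per CFS period."""
    return cpu_limit_millicores * period_us // 1000


def throttled_us(demand_us: int, cpu_limit_millicores: int) -> int:
    """CPU time demanded in one period that exceeds the quota and must wait."""
    return max(0, demand_us - cfs_quota_us(cpu_limit_millicores))


half_core_quota = cfs_quota_us(500)    # limit of 500m
two_core_quota = cfs_quota_us(2000)    # limit of 2 CPUs
deferred = throttled_us(80_000, 500)   # wants 80 ms of CPU with a 500m limit

print(half_core_quota, two_core_quota, deferred)
```

Under these assumptions, a container limited to 500m that wants 80 ms of CPU time within one period can run for 50 ms and is throttled for the remainder of that period.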

Hacking Kubernetes for Fun and KWasm with Sven Pfennig - Navigate Europe 23

Helm OCI support in JFrog Artifactory

Powering Forecasting with Machine Learning: The Future of Managing Cloud Costs

As cloud computing continues to grow in popularity, so does the need for accurate cloud cost forecasting. With cloud costs being variable and unpredictable, it can be difficult to know how much you’re going to spend each month, a problem that can lead to budget overruns and financial problems. This makes cloud cost forecasting a necessary step in owning and managing your cloud efficiently. In simple terms, cloud cost forecasting is the process of predicting future cloud costs.
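As a deliberately simple illustration of predicting future costs, the sketch below fits a straight-line trend to past monthly costs with ordinary least squares and extrapolates one month ahead. This is a plain linear trend, not the machine learning approach the article goes on to discuss, and the cost figures and the function name `forecast_next` are hypothetical.

```python
def forecast_next(costs):
    """Fit y = intercept + slope * x by least squares and predict the next point.

    `costs` is a list of past monthly costs, oldest first (at least two values).
    """
    n = len(costs)
    if n < 2:
        raise ValueError("need at least two data points to fit a trend")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(costs) / n
    # Slope is the covariance of x and y divided by the variance of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, costs))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept + slope * n


# Hypothetical monthly cloud bills in dollars, oldest first.
monthly_costs = [100.0, 110.0, 120.0, 130.0]
next_month = forecast_next(monthly_costs)
print(f"Forecast for next month: {next_month:.2f}")
```

A straight line ignores seasonality and sudden usage changes, which is part of why real cloud bills are hard to predict with a trend alone.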

Multi-Cluster Observability Part 3: Practical Tips for Operational Success

This is the final article of a three-part series. To start at the beginning, read Part 1: Benefiting from multi-cluster setups requires familiarity with common variations and Part 2: Exploring the facets of a multi-cluster observability strategy. As companies scale software production, they lean on Kubernetes as a crucial container orchestration platform for managing, deploying and ensuring software availability.

Sematext Kubernetes Monitoring Demo

The case for Kubernetes resource limits: predictability vs. efficiency

This blog post by Grafana Labs Senior Software Engineer Milan Plžík was originally published on the Kubernetes.io blog on Nov. 16, 2023. There have been quite a lot of posts suggesting that not using Kubernetes resource limits might be a fairly useful thing (for example, For the Love of God, Stop Using CPU Limits on Kubernetes or Kubernetes: Make your services faster by removing CPU limits).

Leveling up your Grafana Skills with Syed Usman Ahmad - Navigate Europe 23

Build Your Own DBaaS with Peter Szczepaniak: Open Source & Customizable - Navigate Europe 23

Things to Keep in Mind when Building Internal Developer Portal by Port - KubeCon NA 23 - Civo TV

Datadog on Kubernetes Node Management

Expanding OCI support in JFrog Artifactory with dedicated OCI repos

Amazon EKS Monitoring with OpenTelemetry [Step By Step Guide]

Build An Internal Developer Platform IDP In 30 Minutes with Viktor Farcic - Navigate Europe 23

The Importance of Strengthening Your Security with Sysdig at KubeCon NA 23 - Civo TV

Troubleshooting K8S EKS with Lightrun Developer Observability Platform

Chatting with Jamie Li from Dell at KubeCon NA 2023

Main Challenges When Managing Kubernetes Environments and Data Protection

The Internal Developer Platform Revolution: A CTO's Guide to Transforming Software Development

Releasing IAM EKS User Mapper in open-source

6 Essential Components of an Internal Developer Platform

Learning to be Cloud Native, building my project on Civo by Martyn Bristow - Navigate Europe 23

Dynatrace Carbon Impact: Measuring Emissions and Energy Consumption - KubeCon NA 23 - Civo TV

Catching up with Dinesh from Civo at KubeCon NA 2023

Getting the latest with Tim Irnich from the SUSE Edge team

Quick chat with Ezequiel Lanza from Intel at KubeCon NA 2023

Talking with Kai Wombacher from Kubecost here at KubeCon NA 2023

5 Docker Extensions to make your development life easier

Docker has revolutionized the way developers build, ship, and run applications. Its simplicity and efficiency in creating lightweight, portable containers have made it a go-to tool in modern software development. However, enhancing Docker’s capabilities with extensions can significantly boost productivity and simplify complex tasks. Here are five Docker extensions that can make your development life easier.