January 2024

Advancing Platform Engineering with Northflank and Civo

Kubernetes Tutorial for Developers

Micrometer: The Gold Standard in Observability

What to expect from Civo in 2024: GPUs, Navigate, and ML

Kubernetes Volume Snapshots: Ensuring Data Integrity and Recovery

Basics of Backup and Recovery

Scaling Platform Engineering: Shopify's Blueprint

DevOps Insights with Dennis: Efficient DevOps - GitLab CI/CD for Docker Builds and ArgoCD Deployments on Kubernetes

How to manage Grafana instances within Kubernetes

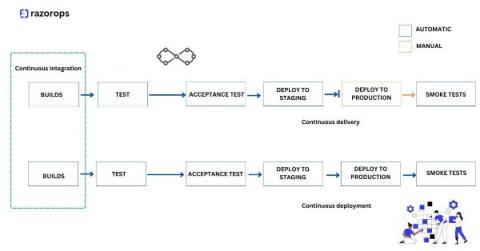

What Is Continuous Delivery and How Does It Work?

Docker Logging One-Stop Beginner's Guide

10 Top Kubernetes Alternatives (And Should You Switch?)

Confused by Kubernetes Multi-Tenancy? A Workshop with Dario Tranchitella - Navigate Europe 23

Ask Reliably Assistant to build and run a chaos engineering experiment on Kubernetes

Demo: Kubernetes support in Grafana Beyla

#016 - Kubernetes for Humans Podcast with Marcelo Quadros & Juliano Martins (Mercado Libre)

Grafana Beyla 1.2 release: eBPF auto-instrumentation with full Kubernetes support

Streamline Azure container monitoring with the Datadog AKS cluster extension

Introducing Rainbow Deployments with Zero Downtime

Kubernetes Vs. Openshift

Managing Kubernetes Events with Cribl Edge

Tanzu Talk - Developer Platforms and Productivity, with Serdar Badem

Transforming DevOps with IaC and GitOps - John Dietz & Jared Edwards - Navigate Europe 23

What Is Kubernetes? What You Need To Know As A Developer

How to make CI Pipelines better, explained by Solomon Hykes

Managing Telemetry Data Overflow in Kubernetes with Resource Quotas and Limits

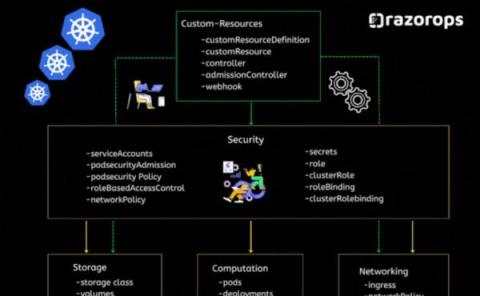

Conceptual Pillars Of Kubernetes

Experience Omni Dev with CodeZero with Narayan Sainaney - Navigate Europe 23

How to Start Contributing to Open Source Project with Mauricio Salatino

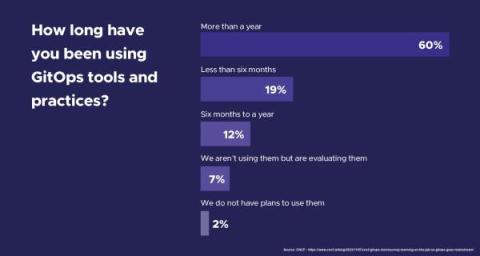

New CNCF Survey Highlights GitOps Adoption Trends - 91% of Respondents Are Already Onboard

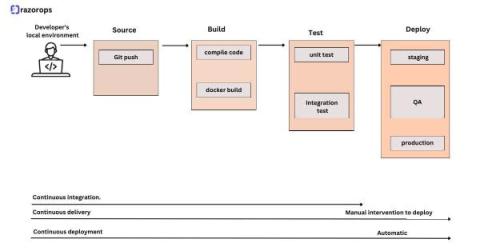

Stages of a CI/CD Pipeline

Rancher Live: Spotlight on Kubernetes v1.29

A Quick Guide to Get You Started with Spark on Kubernetes (K8s)

From Git to Deployed - Fast Platform Delivery - Colin Griffin & Dinesh Majrekar - Navigate Europe 23

Kelsey Hightower on retiring as a Distinguished Engineer from Google at 42

IaaS Providers Making Early Changes in 2024

A new year has started, and some of the major IaaS providers are already making significant changes. AWS and GCP have both announced changes that might signal what's to come this year.

How to easily add application monitoring in Kubernetes pods

What is Git?

EKS Add-ons And Integrations: Evaluating Cost Impacts

Unlock the Secrets of Machine Learning: A Beginner's Guide with Josh Mesout - Navigate Europe 23

Docker Log Rotation Configuration Guide | SigNoz

Supercharge FinOps Programs with Resource Guardrails

Time and again, we hear the same thing from FinOps teams about what is holding back the reduction of wasteful cloud resource consumption: engineers and app owners are interested in helping but stop short of actually taking action to reduce that waste. There are many reasons why application owners and developers get stuck at this point.

Building Controllers with Python Made Easy with Steve Giguere - Navigate Europe 23

Why Kubernetes For Developers is the Next Big Thing

Supercharge FinOps Programs with Cloud Resource Optimization

Set Resource Requests and Limits Correctly: A Kubernetes Guide

Kubernetes has revolutionized the world of container orchestration, enabling organizations to deploy and manage applications at scale with unprecedented ease and flexibility. Yet, with great power comes great responsibility, and one of the key responsibilities in the Kubernetes ecosystem is resource management. Ensuring that your applications receive the right amount of CPU and memory resources is a fundamental task that impacts the stability and performance of your entire cluster.
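As a rough illustration of what "the right amount of CPU and memory" means in practice, the sketch below checks a container's `resources` stanza for two common problems: a missing request, and a request larger than its limit. The helper names (`parse_cpu`, `parse_memory`, `check_resources`) are hypothetical, and the parser handles only a small subset of Kubernetes quantity suffixes, so treat it as a starting point, not a validator.

```python
# Simplified sketch: only a subset of Kubernetes quantity suffixes is handled.
BINARY = {"Ki": 1024, "Mi": 1024**2, "Gi": 1024**3}
DECIMAL = {"k": 1000, "M": 1000**2, "G": 1000**3}


def parse_cpu(quantity: str) -> float:
    """Return CPU cores; a trailing 'm' means millicores (500m == 0.5 cores)."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory(quantity: str) -> int:
    """Return bytes for quantities such as '128Mi', '1Gi', or '500M'."""
    for suffix, factor in {**BINARY, **DECIMAL}.items():
        if quantity.endswith(suffix):
            return int(float(quantity[: -len(suffix)]) * factor)
    return int(quantity)  # no suffix: plain bytes


def check_resources(resources: dict) -> list[str]:
    """List problems found in a container's 'resources' stanza (hypothetical helper)."""
    problems = []
    requests = resources.get("requests", {})
    limits = resources.get("limits", {})
    for name, parse in {"cpu": parse_cpu, "memory": parse_memory}.items():
        if name not in requests:
            problems.append(f"{name}: no request set")
        elif name in limits and parse(requests[name]) > parse(limits[name]):
            problems.append(f"{name}: request exceeds limit")
    return problems


ok = {
    "requests": {"cpu": "250m", "memory": "128Mi"},
    "limits": {"cpu": "500m", "memory": "256Mi"},
}
bad = {
    "requests": {"cpu": "1", "memory": "128Mi"},
    "limits": {"cpu": "500m", "memory": "256Mi"},
}
print(check_resources(ok))
print(check_resources(bad))
```

The check mirrors the shape of the `resources` field in a pod spec, so the same dictionaries could be loaded from a manifest instead of written inline.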

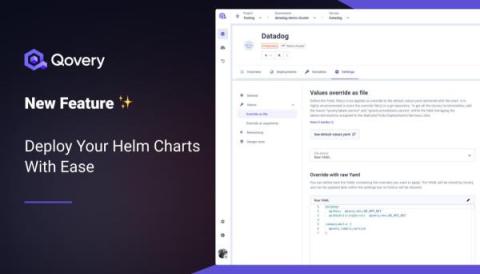

Introducing GitOps Versions: A Unified Way to Version Your Argo CD Applications

Last month, we announced our new GitOps Environment dashboard that finally allows you to promote Argo CD applications easily between different environments.

How We Leveraged the Honeycomb Network Agent for Kubernetes to Remediate Our IMDS Security Finding

Picture this: It’s 2 p.m. and you’re sipping on coffee, happily chugging away at your daily routine work. The security team shoots you a message saying the latest pentest or security scan found an issue that needs quick remediation. On the surface, that’s not a problem and can be considered somewhat routine, given the pace of new CVEs coming out. But what if you look at your tooling and find it lacking when you start remediating the issue?

How to Build an App with Spin and Wasm with Matt Butcher & Saiyam Pathak - Navigate Europe 23

Scaling Down Kubernetes Clusters

DevOps Insights with Dennis: Harnessing MetalLB - A Deep Dive into Kubernetes Load Balancing

Rancher Vs. OpenShift

How Can You Navigate the CNCF Ecosystem? Insights from Kunal Kushwaha - Navigate Europe 23

Monitoring Docker Containers Using OpenTelemetry [Full Tutorial]

Provisioning and Autoscaling

Is YAML Essential for Kubernetes? Engin Diri Explores Alternatives - Navigate Europe 2023

What is an Internal Developer Portal?

Managed GCP GKE Autopilot Released in Public Beta

How Can Kubernetes Thrive in Regulated Environments? Insights from Ryan Gutwein - Navigate Europe 23

Understanding roles in software operators

Building a Custom Read-only Global Role with the Rancher Kubernetes API

In 2.8, Rancher added a new field to the GlobalRoles resource (inheritedClusterRoles), which allows users to grant permissions on all downstream clusters. With the addition of this field, it is now possible to create a custom global role that grants user-configurable permissions on all current and future downstream clusters. This post will outline how to create this role using the new Rancher Kubernetes API, which is currently the best-supported method to use this new feature.
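To make the shape of such a role concrete, here is a minimal sketch that builds a GlobalRole manifest as a Python dictionary and prints it as JSON. The `inheritedClusterRoles` field comes from the description above; the `management.cattle.io/v3` API group is assumed here, and the role name and role template name are hypothetical placeholders. The linked post remains the reference for the actual procedure.

```python
import json


def build_global_role(name: str, cluster_role_templates: list[str]) -> dict:
    """Build a GlobalRole manifest whose permissions apply to all downstream clusters."""
    return {
        "apiVersion": "management.cattle.io/v3",  # assumed API group/version
        "kind": "GlobalRole",
        "metadata": {"name": name},
        # Role templates granted on every current and future downstream cluster.
        "inheritedClusterRoles": list(cluster_role_templates),
    }


# Both names below are hypothetical and only illustrate the structure.
manifest = build_global_role(
    "read-only-everywhere",
    ["hypothetical-read-only-template"],
)
print(json.dumps(manifest, indent=2))
```

Because Kubernetes-style APIs accept JSON as well as YAML, the printed document could in principle be saved to a file and applied to the Rancher management cluster, assuming the referenced role template exists there.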

The role of the CI/CD pipeline in cloud computing

The Continuous Integration/Continuous Deployment (CI/CD) pipeline has emerged as a cornerstone of the fast-evolving world of software development, particularly in cloud computing. This blog aims to demystify how CI/CD, a set of practices that streamline software development, enhances the agility and efficiency of cloud computing.

An Introduction to Civo Cloud - A Complete Guide with Field CTO Saiyam Pathak - Civo.com

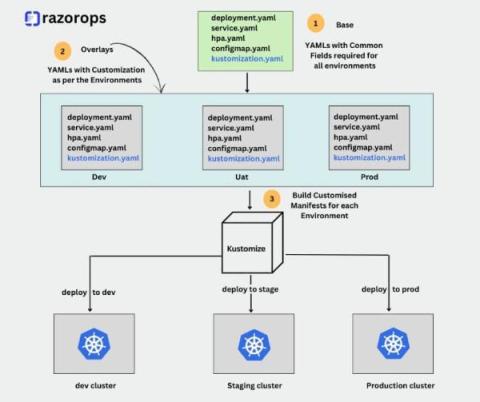

What is Kustomize?

In the dynamic realm of container orchestration, Kubernetes stands tall as the go-to platform for managing and deploying containerized applications. However, as the complexity of applications and infrastructure grows, so does the challenge of efficiently managing configuration files. Enter Kustomize, a powerful tool designed to simplify and streamline Kubernetes configuration management.

5 Tools to Optimize Your Kubernetes Costs Without Sacrificing Performance

As Kubernetes environments become increasingly complex, the balance between reducing expenses and maintaining high performance is paramount. Businesses must leverage cost optimization tools to navigate this complexity without compromising on efficiency. These specialized tools provide crucial visibility into clusters, nodes, pods, and containers, allowing for precise management of resources and costs.

Team Komodor Does Klustered with David Flannagan (AKA Rawkode)

An elite DevOps team from Komodor takes on the Klustered challenge; can they fix a maliciously broken Kubernetes cluster using only the Komodor platform? Let’s find out! Watch Komodor’s Co-Founding CTO, Itiel Shwartz, and two engineers – Guy Menahem and Nir Shtein leverage the Continuous Kubernetes Reliability Platform that they’ve built to showcase how fast, effortless, and even fun, troubleshooting can be!

Kubernetes Networking: Understanding Services and Ingress

Within the dynamic world of container orchestration, Kubernetes stands as a transformative force, reshaping how containerized applications are deployed and managed. At the core of Kubernetes' capabilities lies its sophisticated networking model, a resilient framework that facilitates seamless communication between microservices and orchestrates external access to applications. Among the foundational elements of this networking model are Kubernetes Services and Ingress.

Popular Kubernetes Distributions You Should Know About

In the realm of modern application deployment, orchestrating containers through Kubernetes is essential for achieving scalability and operational efficiency. This blog deals with diverse Kubernetes distribution platforms, each offering tailored solutions for organizations navigating the intricacies of containerized application management.

Rancher Live: What's the buzz with Cilium?

Announcing the Rancher Kubernetes API

It is our pleasure to introduce the first officially supported API with Rancher v2.8: the Rancher Kubernetes API, or RK-API for short. Since the introduction of Rancher v2.0, a publicly supported API has been one of our most requested features. The Rancher APIs, which you may recognize as v3 (Norman) or v1 (Steve), have never been officially supported and can only be automated using our Terraform Provider.

Enlightning - Be a Security Hero with Kubescape as Your Sidekick

Revolution in Development: Mark Allen's Code Zero & Kubernetes Top Tips - Navigate Europe 23

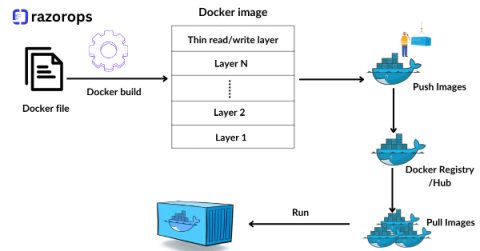

Boost Your Software Deliveries with Docker and Kubernetes

Software delivery is paramount. The ability to swiftly deploy, manage, and scale applications can make a significant difference in staying ahead in the competitive tech industry. Enter Docker and Kubernetes, two revolutionary technologies that have transformed the way we develop, deploy, and manage software.

Securing Software Development: Marino Wijay's Expert Insights - Navigate Europe 23

Empowerment without Clarity Is Chaos

The companies we work with at Tanzu by Broadcom are constantly looking for better, faster ways of developing and releasing quality software. But digital transformation means fundamentally changing the way you do business, a process that can be derailed by any number of obstacles. In his recent video series, my colleague Michael Coté identifies 14 reasons why it’s hard to change development practices in large organizations.

The Cost Benefits Of Using Scaling Within An EKS Cluster

VMware Tanzu vs Openshift

Implementing OTEL for Kubernetes Monitoring

Kubernetes is a top container orchestration platform. Kubernetes clusters handle everything from collecting to storing vast amounts of data from your many applications. That very property can sometimes balloon into an unending pile of data later on. Imagine a large apparel warehouse that stocks every size of clothing for men, women, and children. If you were asked to pick out one particular item from it within a short time frame, you would dread the task.

What's New in Docker 2023? Discover New Docker Features with Francesco Ciulla! - Navigate Europe 23

Top 10 Platform Engineering Tools You Should Consider in 2024

Harnessing the Power of Metrics: Four Essential Use Cases for Pod Metrics

In the dynamic world of containerized applications, effective monitoring and optimization are crucial to ensure the efficient operation of Kubernetes clusters. Metrics give you valuable insights into the performance and resource utilization of pods, which are the fundamental units of deployment in Kubernetes. By harnessing the power of pod metrics, organizations can unlock numerous benefits, ranging from cost optimization to capacity planning and ensuring application availability.

Boosting Kubernetes Stability by Managing the Human Factor

As technology takes the driver’s seat in our lives, Kubernetes is taking center stage in IT operations. Google first introduced Kubernetes in 2014 to handle high-demand workloads. Today, it has become the go-to choice for cloud-native environments. Kubernetes’ primary purpose is to simplify the management of distributed systems and offer a smooth interface for handling containerized applications no matter where they’re deployed.

Striking the Balance: Tips for Enhancing Access Control and Enforcing Governance in Kubernetes

Kubernetes, with its robust, flexible, and extensible architecture, has rapidly become the standard for managing containerized applications at scale. However, Kubernetes presents its own unique set of access control and security challenges. Given its distributed and dynamic nature, Kubernetes necessitates a different model than traditional monolithic apps.

Docker File Best Practices For DevOps Engineer

Containerization has become a cornerstone of modern software development and deployment. Docker, a leading containerization platform, has revolutionized the way applications are built, shipped, and deployed. As a DevOps engineer, mastering Docker and understanding best practices for Dockerfile creation is essential for efficient and scalable containerized workflows. Let’s delve into some crucial best practices to optimize your Dockerfiles.