September 2023

Observability Pillars: Exploring Logs, Metrics and Traces

What Is Tiered Pricing? 5 Tiered Pricing Examples

An update on CircleCI's reliability

This month, we’re focused on sharing a more comprehensive picture of our reliability and availability. We experienced 6 extended incidents, 2 of which were due to an upstream third party. While we always want to be transparent with our performance against our stated goals, it’s crucial to note that, while they lasted 60 minutes or longer, the impact of these August incidents on our business operations was relatively minimal.

Alternatives to SMS alerts

While SMS alerts are handy, they also tend to be tricky. Across 120+ countries, we continuously deal with compliance requirements and regulations from vendors, governments, and phone carriers. Other alert channels similar to SMS are far less cumbersome and have higher delivery rates. Let’s take a look at the available alternatives to SMS.

Amplifying Developer Efficiency: Leveraging Internal Developer Platforms for Optimal Performance

Digital innovation in finance - the open source imperative

The Leading Release Management Tools

In today's ever-changing digital development landscape, organizations face the challenge of delivering high-quality software quickly and efficiently. Developing and producing new products and updates is a compelling but fundamental part of any technology business. But ensuring that the process runs smoothly and that your release reaches your customers as expected can be challenging. This is where release management tools come in.

How to configure API Management to use Business Activity Monitoring?

How to optimize cost of Logic Apps Consumption?

How do log analytics and Business Activity Monitoring differ for business users?

EP1: How AI is Shaping the Future of Data Center Management w/ David Cappuccio

How to operationalize FinOps to drive cloud and cost efficiency

Blameless Announces New Google Docs and Google Drive Integration to Help Engineering Teams Enhance Their Incident Management and Retrospectives

Unveiling Past Incidents: Accelerating Incident Resolution with Historical Context

Kubernetes Load Testing: Top 5 Tools & Methodologies

How to get your security team on board with your cloud migration

Test Automation - A Key to Telco Cloud Adoption

Beyond savings: Overlooked aspects of container optimization

DIY or managed suite: Choosing the right AWS container optimization solution

What Is a Feature Flag? Best Practices and Use Cases

Do you want to build software faster and release it more often without the risks of negatively impacting your user experience? Imagine a world where there is not only less fear around testing and releasing in production, but one where it becomes routine. That is the world of feature flags. A feature flag lets you deliver different functionality to different users without maintaining feature branches and running different binary artifacts.
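
As a rough illustration of the idea above, here is a minimal sketch of a feature flag check with a percentage rollout. The flag layout (`enabled`, `allow_users`, `rollout_percent`) and the `new-checkout` flag are hypothetical and not taken from any particular feature flag product; real services add targeting rules, remote configuration, and auditing on top of this.

```python
import hashlib


def bucket(user_id: str, flag_name: str) -> int:
    """Map a user deterministically to a bucket in 0..99 for a given flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag: dict, user_id: str) -> bool:
    """Return True if the flag is switched on for this user."""
    if not flag.get("enabled", False):
        return False  # global kill switch
    if user_id in flag.get("allow_users", ()):
        return True  # explicitly targeted users always get the feature
    # Everyone else is included according to the rollout percentage.
    return bucket(user_id, flag["name"]) < flag.get("rollout_percent", 0)


# Hypothetical flag definition, normally loaded from configuration.
NEW_CHECKOUT = {
    "name": "new-checkout",
    "enabled": True,
    "allow_users": {"qa-team"},
    "rollout_percent": 25,
}


def checkout(user_id: str) -> str:
    """One binary, one branch of code per user, and no feature branch to maintain."""
    if is_enabled(NEW_CHECKOUT, user_id):
        return "new checkout flow"
    return "old checkout flow"
```

Hashing the flag name together with the user ID keeps each user's experience stable between requests while letting different flags select different slices of users.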

Telco Operations: More Powerful and Efficient, One IT Automation at a Time

Technological advancements in telecommunications are keeping everyone on their toes, as communication service providers (CSPs) obsess over the next big thing to roll out and change the way the world communicates, stays informed, manages its daily lives, and more.

What is Apache Tomcat server and how does it work?

Product Spotlight: Enhancing Incident Resolution with Blameless' Microsoft Teams Integration

The CEO Pocket Guide to Internal Developer Portals

Hands-on Hacking Containers and ways to Prevent it - Civo Navigate NA 23

Serverless Microservices on Azure

Microsoft Fabric Explained: All you need to know

Five mindset shifts for effective reliability programs

When people think about reliability, it’s easy to focus on incident response and moving fast to fix outages. This reactive approach to reliability can very quickly lead to burnout as you bounce from incident to incident. But that’s not the only way to think about reliability.

How to SSH into Docker containers

A Docker container is a portable software package that holds an application’s code, necessary dependencies, and environment settings in a lightweight, standalone, and easily runnable form. When running an application in Docker, you might need to perform some analysis or troubleshooting to diagnose and fix errors. Rather than recreating the environment and testing it separately, it is often easier to SSH into the Docker container to check on its health.

Navigating Multi-Cloud Environments: Managing Deployments with Ease

Multi-cloud seems like an obvious path for most organizations, but what isn’t obvious is how to implement it, especially with a DevOps-centric approach. For Cycle users, multi-cloud is just something they do. It’s a native part of the platform and a standardized experience that has led to more than 70% of our users consuming infrastructure from more than one provider.

The importance of Azure cost to DevOps

Alerts Config Manager and Netdata Assistant Chat! | Netdata Office Hours #7

Unlocking Kubernetes Deployment Excellence with CI/CD Automation

In software development, agility and efficiency are paramount. Continuous Integration and Continuous Deployment (CI/CD) practices have revolutionised the way we build, test, and deploy software. When coupled with the power of Kubernetes, an open-source container orchestration platform, organisations can achieve a level of deployment excellence that was once only a dream.

Sean Astin Dives into Developer Well-Being: GitKon 2023 Spotlight!

How to build LLMs with open source

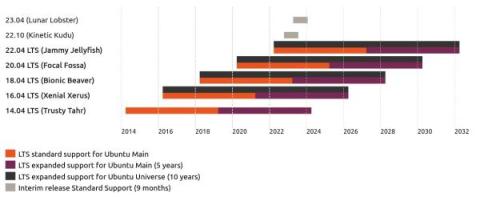

Still running Ubuntu 18.04? What you need to know

State of DevOps: Takeaways on the 2023 DORA Report

Deploy or defer the latest macOS 14 Sonoma update with Endpoint Central

The wait for the latest macOS 14 update is finally over. The newest macOS Sonoma update comes with a plethora of security and privacy features intended to make your computing environment safer. Apple users can now explore new video conferencing features and advanced game mode, enable password and passkey sharing, and so much more. While there’s plenty of excitement that comes with an update like this, it’s important to proceed with caution.

Implementing Backstage 1: Getting Started

Backstage is a platform for building developer portals. Originally developed internally at Spotify, it’s now open source and available through GitHub. Backstage allows DevOps teams to create a single-source, centralized web application for sharing and finding software (through the software catalog feature), as well as templates and documentation.

2bcloud Named Microsoft Azure Expert Managed Services Provider for Four Consecutive Years

New York, September 27, 2023 – 2bcloud, a leading next-generation, multi-cloud managed service provider for tech companies on their cloud journey, today announced it has maintained its elite status as a Microsoft Azure Expert Managed Services Provider (MSP). The Azure Expert MSP is the highest level of partner certification from Microsoft on Azure, and the partner status underlines 2bcloud’s position as a leading Microsoft Azure partner.

Security in the cloud: Whose responsibility is it?

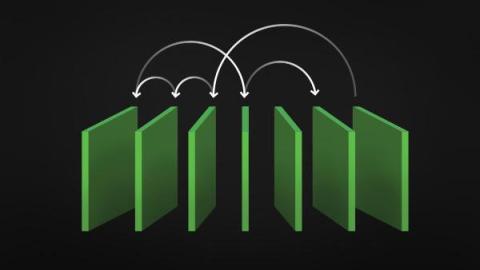

Reaping the Benefits of Multi-Cluster Orchestration in Kubernetes

The rise of containerization has precipitated an unprecedented shift in the software development landscape, with Kubernetes emerging as the de facto standard for managing large-scale containerized applications. One of the more nuanced aspects of Kubernetes that is gaining attention is multi-cluster orchestration. This approach to cluster management offers several compelling advantages that reshape how businesses operate and innovate in a cloud-native context.

Virtual Kubernetes Clusters - Tips and Tricks - Civo Navigate NA 2023

Introducing the Datadog Open Source Hub

At Datadog, we have always been deeply involved with open source software—producing it, using it, and contributing to it. Our Agent, tracers, SDKs, and libraries have been open source from the beginning, giving our customers the flexibility to extend our tools for their own needs. The transparency of our open source components also allows them to fully audit the Datadog software that is running on their systems. But our commitment to open source only starts there.

Monitoring Machine Learning

I used to think my job as a developer was done once I trained and deployed the machine learning model. Little did I know that deployment is only the first step! Making sure my tech baby is doing fine in the real world is equally important. Fortunately, this can be done with machine learning monitoring. In this article, we’ll discuss what can go wrong with our machine-learning model after deployment and how to keep it in check.

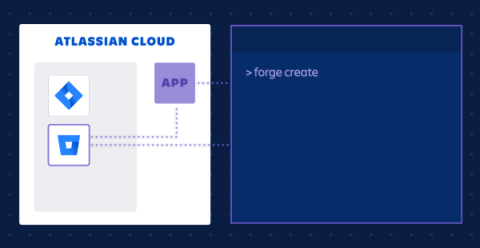

Announcing the EAP of Forge in Bitbucket Cloud

An Overview on Azure API Center

Better learning from incidents: A guide to incident post-mortem documents

If you’re just starting out in the world of incident response, then you’ve probably come across the phrase “post-mortem” at least once or twice. And if you’re a seasoned incident responder, the phrase probably invokes mixed feelings. Just to clarify, here, we’re talking about post-mortem documents, not meetings. It’s a distinction we have to make since lots of teams use the phrase to refer to the meeting they have after an incident.

Convergence of Observability and Security: A New Era

Observability and security are converging, benefiting dev and security teams. Runtime observability is the missing component to this important endeavor, providing much-needed data and insights to DevSecOps and AppSec teams.

What is IIS?

In this post, we’re going to take a close look at IIS (Internet Information Services). We’ll look at what it does and how it works. You’ll learn how to enable it on Windows. And after we’ve established a baseline with managing IIS using the GUI, you’ll see how to work with it using the CLI. Let’s get started!

GitKraken September Video Newsletter (GitKraken Client & GitLens)

GitKraken September Video Newsletter (Git Integration for Jira)

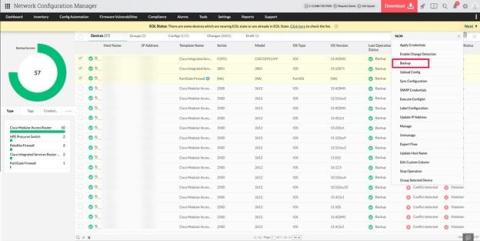

Streamlining network efficiency: Unveiling the power of ManageEngine Network Configuration Manager

Status Pages 101: Everything You Need to Know About Status Pages

Status Pages are critical for effective Incident Management. Just as an ill-structured On-Call Schedule can wreak havoc, ineffective Status Pages can leave customers and stakeholders adrift, underscoring the need for a meticulous approach. Two examples are Matsuri Japon, a non-profit organization, and Sport1, a premier live-stream sports content platform; both integrate Squadcast Status Pages to enhance their incident response strategies discreetly. You may read about them later. Crafting these Status Pages demands precision, offering dynamic updates and collaboration.

31 Crucial DevOps Automation Tools Your Team Needs In 2023

Canonical releases Charmed MLFlow

Infrastructure Monitoring Today: How It Works & What It Does

Failing in the Cloud: How to Turn It Around

Success in the cloud continues to be elusive for many organizations. A recent Forbes article describes how financial services firms are struggling to succeed in the cloud, citing Accenture Research that found that only 40% of banks and less than half of insurers fully achieved their expected outcomes from migrating to cloud. Similarly, a 2022 KPMG Technology Survey found that 67% of organizations said they had failed to receive a return on investment in the cloud.

Netdata, Prometheus, Grafana Stack

In this blog, we will walk you through the basics of getting Netdata, Prometheus and Grafana all working together and monitoring your application servers. This article will be using Docker on your local workstation. We will be working with Docker in an ad-hoc way, launching containers that run /bin/bash and attaching a TTY to them. We use Docker here in a purely academic fashion and do not condone running Netdata in a container.

Netdata Processes monitoring and its comparison with other console based tools

Netdata reads /proc/

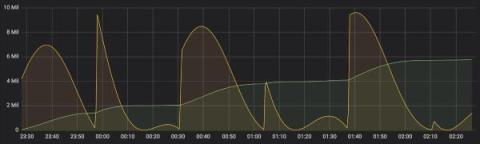

Netdata QoS Classes monitoring

Netdata monitors tc QoS classes for all interfaces. If you also use FireQOS it will collect interface and class names. There is a shell helper for this (all parsing is done by the plugin in C code - this shell script is just a configuration for the command to run to get tc output). The source of the tc plugin is here. It is somewhat complex, because a state machine was needed to keep track of all the tc classes, including the pseudo classes tc dynamically creates. You can see a live demo here.

Eight Cybersecurity Tips for Businesses in 2023

The online playing field for businesses in multiple niches has expanded, with the internet enjoying an overarching presence in various facets. New and larger markets have become more accessible through online platforms. All an established business needs is computer-based tools and an internet connection that won’t falter. Expansion is often rewarding but has its fair share of risks; thus, melding a nice blend of cybersecurity with a growing company is the safe way to go about it.

Make the Most of your System Center Orchestrator Deployment

By licensing the Microsoft System Center suite, customers unlock a comprehensive array of tools encompassing server management, virtual machine administration, and automation capabilities. Frequently, customers are observed deploying automation use cases with System Center Orchestrator to meet specific infrastructure management needs.

How to Connect Your AWS and Microsoft Azure Environments: A Complete Guide

We explain why you should connect the leading two cloud providers, the options available, and which one is right for your business.

Automate Kubernetes Platform Operations

Clouds, caches and connection conundrums

We recently moved our infrastructure fully into Google Cloud. Most things went very smoothly, but there was one issue we came across last week that just wouldn’t stop cropping up. What follows is a tale of rabbit holes, red herrings, table flips and (eventually) a very satisfying smoking gun. Grab a cuppa, and strap in. Our journey starts, fittingly, with an incident getting declared... 💥🚨

The Limitations Of Combining CloudHealth And Kubecost

Scaling in Kubernetes: An Overview

Kubernetes has become the de facto standard for container orchestration, offering powerful features for managing and scaling containerized applications. In this guide, we will explore the various aspects of Kubernetes scaling and explain how to effectively scale your applications using Kubernetes. From understanding the scaling concepts to practical implementation techniques, this guide aims to equip you with the knowledge to leverage Kubernetes scaling capabilities efficiently.

Rootless Containers - A Comprehensive Guide

Containers have gained significant popularity due to their ability to isolate applications from the diverse computing environments they operate in. They offer developers a streamlined approach, enabling them to concentrate on the core application logic and its associated dependencies, all encapsulated within a unified unit.

Joining the Power of AI and Automation: Today's Business-critical Opportunity

Artificial intelligence (AI) won’t fade anytime soon, and since Generative AI (genAI) joined the party in Nov. 2022, innovative business strategies will only get louder. The not-so-fun part of AI and genAI’s growth shows up when businesses resist change and the adoption of emerging technologies. But the truth is – business leaders must step up.

Install MLflow in less than 5 minutes

Run Azure Functions locally in Visual Studio 2022

Azure Event Grid dead letter monitoring

How to ensure your Kubernetes Pods have enough memory

Memory (or RAM, short for random-access memory) is a finite and critical computing resource. The amount of RAM in a system dictates the number and complexity of processes that can run on the system, and running out of RAM can cause significant problems. The risk can be mitigated using clustered platforms like Kubernetes, where you can add or remove RAM capacity by adding or removing nodes on demand.
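
To make the capacity question concrete, here is a small sketch that converts Kubernetes-style memory quantities (such as `512Mi` or `1Gi`) to bytes and checks whether a set of Pod memory requests fits within a node's allocatable memory. The helper names are hypothetical, and the parser is deliberately simplified: it assumes whole-number quantities and handles only a subset of the suffixes Kubernetes accepts.

```python
# Binary (power-of-two) and decimal suffixes; a subset of what Kubernetes accepts.
BINARY = {"Ki": 1024, "Mi": 1024**2, "Gi": 1024**3, "Ti": 1024**4}
DECIMAL = {"k": 1000, "M": 1000**2, "G": 1000**3, "T": 1000**4}


def parse_memory(quantity: str) -> int:
    """Convert a quantity such as '512Mi' or '1G' to bytes.

    Assumption: the numeric part is a whole number (no '1.5Gi').
    """
    for suffixes in (BINARY, DECIMAL):  # check two-letter binary suffixes first
        for suffix, factor in suffixes.items():
            if quantity.endswith(suffix):
                return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # no suffix means plain bytes


def fits_on_node(pod_requests: list[str], allocatable: str) -> bool:
    """Return True if the summed memory requests fit in the node's allocatable memory."""
    total = sum(parse_memory(q) for q in pod_requests)
    return total <= parse_memory(allocatable)
```

For example, `fits_on_node(["512Mi", "512Mi"], "1Gi")` is true, while adding a third Pod would require another node or smaller requests.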

Monitor multiple Azure subscriptions in a single dashboard

Operationalizing Kubernetes within DoD - Civo Navigate NA 2023

Cycle's New Interface Part III: The Future is LowOps

We recently covered some of the complex decisions and architecture behind Cycle’s brand new interface. In this final installment, we’ll peer into our crystal ball and glimpse into the future of the Cycle portal. Cycle already is a production-ready DevOps platform capable of running even the most demanding websites and applications. But, that doesn’t mean we can’t make the platform even more functional, and make DevOps even simpler to manage.

[Webinar] Unified container visibility: Managing multi-cluster Kubernetes environments

Charmed Kubeflow 1.8 Beta Release

How to host a multiple-application project on Platform.sh

We’re here to shed a little light on how you can host and configure your multi-app projects on Platform.sh, with a step-by-step guide to setting up a project on our platform, so your team can focus more on creating incredible user experiences and less on multi-app infrastructure management. We also share a few multi-app development tips along the way. We’re going to look at this through the lens of a customer on the lookout for multi-application hosting with a few specific constraints.

Why Unit Cost Must Be Your North Star Metric In The Cloud

Optimizing Workloads in Kubernetes: Understanding Requests and Limits

Kubernetes has emerged as a cornerstone of modern infrastructure orchestration in the ever-evolving landscape of containerized applications and dynamic workloads. One of the critical challenges Kubernetes addresses is efficient resource management – ensuring that applications receive the right amount of compute resources while preventing resource contention that can degrade performance and stability.

Webinar: Best Practices for Measuring and Growing Developer Productivity

Webinar: Still using spreadsheets to track your services? You need a developer portal.

Rescue Struggling Pods from Scratch

Containers are an amazing technology. They provide huge benefits and create useful constraints for distributing software. Golang-based software doesn’t need a container in the same way that Ruby or Python software does, where the container bundles the runtime and dependencies. For a statically compiled Go application, the container doesn’t need much beyond the binary.

The Ultimate Guide to DORA Metrics for DevOps

In the world of software delivery, organizations are under constant pressure to improve their performance and deliver high-quality software to their customers. One effective way to measure and optimize software delivery performance is to use the DORA (DevOps Research and Assessment) metrics. DORA metrics, developed by a renowned research team at DORA, provide valuable insights into the effectiveness of an organization's software delivery processes.
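
As an illustration, the four key DORA metrics (deployment frequency, lead time for changes, change failure rate, and time to restore service) can be computed from a simple deployment log. The `Deployment` record and its field names below are hypothetical, and the sketch assumes a reporting period of at least one day; real tooling would pull this data from CI/CD and incident systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a failure in production?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Summarize the four key metrics over a reporting period (period_days > 0)."""
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [
        d.restored_at - d.deployed_at for d in failures if d.restored_at is not None
    ]
    return {
        "deployment_frequency_per_day": len(deployments) / period_days,
        "median_lead_time": median(lead_times) if lead_times else None,
        "change_failure_rate": len(failures) / len(deployments) if deployments else 0.0,
        "median_time_to_restore": median(restore_times) if restore_times else None,
    }
```

Medians are used here rather than means so that a single unusually slow change or long outage does not dominate the summary; that is a design choice of this sketch, not a requirement of the metrics.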

Building a Forge app in Bitbucket Cloud | Atlassian

Intro to Speedscale

Build a CIS hardened Ubuntu Pro server image on the AWS Console

Heroku Monitoring: What To Look For In Your Addons

Heroku is a cloud-based platform that supports multiple programming languages. It functions as a Platform as a Service (PaaS), allowing developers to effortlessly create, deploy, and administer cloud-based applications. With its compatibility with languages like Java, Node.js, Scala, Clojure, Python, PHP, and Go, Heroku has become the preferred choice for developers who desire powerful and adaptable cloud capabilities.

AI adoption for software: a guide to learning, tool selection, and delivery

This post was written with valuable contributions from Michael Webster, Kira Muhlbauer, Tim Cheung, and Ryan Hamilton. Remember the advent of the internet in the 90s? Mobile in the 2010s? Both seemed overhyped at the start, yet in each case, fast-moving, smart teams were able to take these new technologies at their nascent stage and experiment to transform their businesses. This is the moment we’re in with artificial intelligence. The technology is here.

10 Best Internal Developer Portals to Consider in 2023

Day 0 Configuration: Building Long Term Success Beyond Server Provisioning

Azure SQL Database monitoring

Speed up your Team by Moving your Development Environments to Kubernetes - Civo Navigate NA 2023

OpenTelemetry vs. OpenTracing

OpenTelemetry vs. OpenTracing - differences, evolution, and ways to migrate to OpenTelemetry.

SharePoint Admin Guide for Beginners

SharePoint is a Microsoft-owned platform that provides an extensive range of solutions for content management and collaboration within and outside an organization. Built on a web-based technology stack, it integrates seamlessly with Microsoft Office 365 and offers features like document libraries, team sites, intranets, extranets, and advanced search functionalities. It can be deployed either on-premises or in the cloud.

Unlocking Microsoft SharePoint

Before you dive into SharePoint, you may wonder, “Why do I need a technical guide?” The simple answer? To unlock SharePoint’s full potential. Understanding its nuts and bolts will empower you to customize it to your needs, optimize its functionality, and elevate your overall user experience. This article goes beyond the surface-level features to explain the underlying architecture, data storage mechanisms, and much more. Ready to unlock the mysteries of SharePoint? Buckle up!

Acing server performance: Don't overlook these crucial 11 monitoring metrics

A server, undeniably, is one of the most crucial components in a network. Every critical activity in a hybrid network architecture is somehow related to server operations. Servers don’t just serve as the spine of modern computing operations—they are also pivotal for network communications. From sending emails to accessing databases and hosting applications, a server’s reliability and performance have a direct impact on the organization’s growth.

What Is an IP Address Management Service and Why Might You Need It?

Single-Tenant Vs. Multi-Tenant Cloud: When To Use Each

Differences Between SharePoint On-Premise and SharePoint Online

So, you’re knee-deep in the world of Microsoft SharePoint, huh? If you’re an IT professional, you’re well aware that SharePoint is no longer just a “nice-to-have” but more of a “must-have.” You’ve got two flavors to choose from: SharePoint On-Premise and SharePoint Online. Which one is the right fit for your organization? Buckle up, because we’re about to dive deep into the nitty-gritty differences, pros, cons, and everything in between.

Starting the XLA Journey: A Next-level Perspective for Enhanced Experiences

There’s a profound shift happening today that is taking businesses in a fresh, new direction. Outcomes are at the forefront of IT leaders’ minds, and they’re rightfully becoming a core business accelerator. It’s clear that employee and customer experiences are critical for growing businesses. The trend stems from a shift in priorities.

The importance of SDT and how to successfully schedule planned downtime

Merging to Main #7: Scaling CI/CD Across Languages & Technologies

How we've made Status Pages better over the last three months

A few months ago we announced Status Pages – the most delightful way to keep customers up-to-date about ongoing incidents. We built them because we realized that there was a disconnect between what customers needed to know about incidents, and how easily accessible this information was. As we built them, we focused on designing a solution that powered crystal-clear communication without the overhead, all beautifully integrated into incident.io.

Using Imposter Syndrome to your Advantage - Civo Navigate NA 2023

Bill Kennedy: The mistake boot, building ACs, Black boxes & AI in software - The Reliability Podcast

What is CMDB?

A Configuration Management Database (CMDB) like ServiceNow CMDB serves as a centralized repository for comprehensive information about the various components of an information system. These components, known as Configuration Items (CIs), encompass hardware (such as servers and switches), software applications, network paths, and even individuals or documentation.

Microsoft SharePoint: From Its Inception to Future Prospects

Hello, tech aficionados and IT professionals! If you’re in the business of managing digital assets, workflows, or intranets, chances are you’ve crossed paths with SharePoint. But do you ever wonder how this versatile platform has evolved over the years? Or perhaps you’re curious about what future enhancements are on the horizon? Well, buckle up, because we’re about to embark on a comprehensive journey through the fascinating world of SharePoint.

Circonus Launches Open Beta for Passport, Ushering in a New Era of Flexible Observability

Sky-high observability costs or visibility gaps? This is the unfortunate trade-off many organizations have to make when it comes to determining how much telemetry data they should collect and send to their observability tools. Teams either collect more data than they need and pay the price, or they collect less and suffer visibility gaps. Today, this all changes.

The Evolution of IT Monitoring

What I Learnt Fixing 50+ Broken Kubernetes Clusters - Civo Navigate NA 23

Terraform is No Longer Open Source. Is OpenTofu (ex OpenTF) the Successor?

Terraform, a powerful Infrastructure as Code (IaC) tool, has long been the backbone of choice for DevOps professionals and developers seeking to manage their cloud infrastructure efficiently. However, recent shifts in its licensing have sent ripples of concern throughout the tech community. HashiCorp, the company behind Terraform, made a pivotal decision last month to move away from its longstanding open-source licensing, opting instead for the Business Source License (BSL) 1.1.

How a simple metric drives reliability culture at Slack

How do you track reliability in an organization with hundreds of engineers, dozens of daily production changes, and over 32 million monthly users? Even more, how do you do this in a way that's simple, presentable to executives, and doesn't dump a ton of extra work on to engineers' plates? Slack recently wrote about how they created the Service Delivery Index for Reliability (SDI-R), a simple yet comprehensive metric that became the basis for many of their reliability and performance indicators.

Automate Agent installation with the Datadog Ansible collection

Ansible is a configuration management tool that helps you automatically deploy, manage, and configure software on your hosts. By turning manual workflows into automated processes, you can quicken your deployment lifecycle and ensure that all hosts are equipped with the proper configurations and tools. The Datadog collection is now available in both Ansible Galaxy and Ansible Automation Hub.

How I cut my AKS cluster costs by 85%

Master Class: Deep Dive with SLE BCI

Securing Open Source Dependencies on Public Cloud

How to Build an Internal Developer Platform: Everything You Need to Know

Sharing data across hybrid cloud and local CI/CD environments

This short tutorial demonstrates how you can work on data stored on your own infrastructure or in hybrid cloud CI/CD environments using CircleCI’s shared workspaces functionality — without having to configure VPNs, SSH tunnels, or other additional infrastructure.

Mono vs MultiRepo Challenges

What is Graphite Monitoring?

Today we are going to touch on why Graphite monitoring is essential. In today’s climate of extreme competition, service reliability is crucial to the success of a business. Any downtime or degraded user experience is simply not an option as dissatisfied customers will jump ship in an instant. Operations teams must be able to monitor their systems organically, paying particular attention to Service Level Indicators (SLIs) pertaining to the availability of the system.
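
For readers new to SLIs, an availability SLI is commonly expressed as the fraction of requests served successfully. The sketch below computes that ratio and the share of the error budget left against a target; the function names and the 99.9% target in the usage note are illustrative assumptions, not something prescribed by Graphite.

```python
def availability_sli(good: int, total: int) -> float:
    """Fraction of requests that succeeded; treated as 1.0 when there was no traffic."""
    if total == 0:
        return 1.0
    return good / total


def error_budget_remaining(good: int, total: int, slo_target: float) -> float:
    """Share of the error budget still unspent (negative once the SLO is breached)."""
    allowed_failures = (1 - slo_target) * total
    actual_failures = total - good
    if allowed_failures == 0:
        # A 100% target or no traffic: any failure exhausts the budget.
        return 0.0 if actual_failures else 1.0
    return 1 - actual_failures / allowed_failures
```

With 99,950 successful requests out of 100,000 and a hypothetical 99.9% target, the SLI is 0.9995 and roughly half of the error budget remains.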

CapEx Vs. OpEx In The Cloud: 10 Key Differences

Application Dependency Maps: The Secret Weapon for Troubleshooting Kubernetes

Picture this: You're knee-deep in the intricacies of a complex Kubernetes deployment, dealing with a web of services and resources that seem like a tangled ball of string. Visualization feels like an impossible dream, and understanding the interactions between resources? Well, that's another story. Meanwhile, your inbox is overflowing with alert emails, your Slack is buzzing with queries from the business side, and all you really want to do is figure out where the glitch is. Stressful? You bet!

Building a Secure By-Design Pipeline with an Open Source Stack - Civo Navigate NA 23

Database Schema Migrations in Ephemeral Environments: Best Practices

Navigating IT Transformation with Automation: Your Two-pronged Strategy to Future-proof IT

Daunting. It’s one of the first words that comes to mind for IT and business leaders tackling the challenges of 2023 and looking to future-proof their organizations. IT operations (ITOps) departments are working to balance priorities during a time of growing uncertainty and pressure. ITOps is the team that keeps the lights on, and today, it must do so with enough speed to meet business demands.

Deploying a multi-availability zone Kubernetes cluster for High Availability

Many cloud infrastructure providers make deploying services as easy as a few clicks. However, making those services high availability (HA) is a different story. What happens to your service if your cloud provider has an Availability Zone (AZ) outage? Will your application still work, and more importantly, can you prove it will still work? In this blog, we'll discuss AZ redundancy with a focus on Kubernetes clusters.

Revolutionize Data Ingestion: Introducing Terraform Support for Splunk Cloud Platform

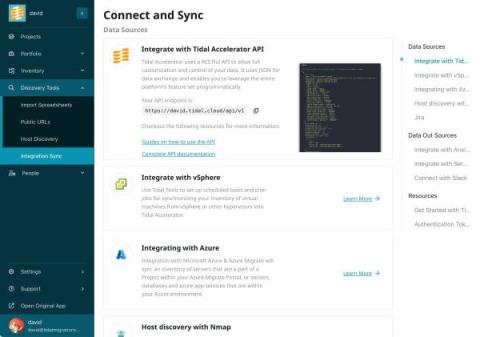

Azure Integration Automates Asset Discovery on Tidal Accelerator

We’re excited to share an update on our Microsoft Azure integration that automates discovery and mapping of key cloud assets into Tidal Accelerator. Tidal has enabled a new integration that pulls information on Azure Virtual Machines (VMs), Azure App Service, and Azure Database instances, Elastic Pools and Servers, directly into Tidal Accelerator for further analysis.

Top 5 Resiliency Trends of 2023

In today’s world, resilience is no longer a nice-to-have or a methodology to try; it has become a necessity for sustained success in software development and IT operations. As DevOps and Agile teams keep pushing boundaries, developing new methodologies, and driving innovation, it is now important to be able to quickly recover from failures, adapt to changing conditions, and maintain high performance under pressure.

Graphite vs Prometheus

Graphite and Prometheus are both great tools for monitoring networks, servers, other infrastructure, and applications. Both Graphite and Prometheus are what we call time-series monitoring systems, meaning they both focus on monitoring metrics that record data points over time. At MetricFire we offer a hosted version of Graphite, so our users can try it out on our free trial and see which works better in their case.

Monitoring Kubernetes tutorial: Using Grafana and Prometheus

Behind the trends of cloud-native architectures and microservices lies a technical complexity, a paradigm shift, and a rugged learning curve. This complexity manifests itself in the design, deployment, and security, as well as everything that concerns the monitoring and observability of applications running in distributed systems like Kubernetes. Fortunately, there are tools to help developers overcome these obstacles.

The Difficulties of Measuring Engineering

“The report is so absurd and naive that it makes no sense to critique it in detail.” - Kent Beck, responding to the McKinsey Report. Luckily this was a hollow threat, because a few days later he and fellow blogger Gergely Orosz released a two-part blog series critiquing not exactly McKinsey's report but... any report that tried to put “effort based” metrics at the top of the list for things to track.

What is new in Rancher Desktop 1.10

We are delighted to announce the release of a new version of Rancher Desktop. This release includes significant enhancements to features such as Deployment Profiles, mount types support, networking proxy configuration, and other important bug fixes.

3 new metrics CEOs want from Engineering leaders

Back to school: Ubuntu Desktop in Education

The Role and Responsibilities of a Data Center Technician

A data center technician holds the responsibility of installing, maintaining, and monitoring the equipment and systems within a data center. They ensure the proper functioning and updated status of both hardware and software, playing a vital role in safeguarding data security and availability.

Our Cloud Cost Optimization Framework That Works

We’ve seen two general approaches to getting optimization done to reduce cloud costs among our customers. The first and most popular is “the stick,” where FinOps teams campaign against line-of-business development teams with a mantra of “spend less!”

Intro to Cycle.io: Build Container from Git Repo

New Release: Galileo SMARTboards

The Ultimate RDS Instance Types Guide: What You Need To Know

Announcing the Harvester v1.2.0 Release

Ten months have elapsed since we launched Harvester v1.1 back in October of last year. Harvester has since become an integral part of the Rancher platform, experiencing substantial growth within the community while gathering valuable user feedback along the way. Our dedicated team has been hard at work incorporating this feedback into our development process, and today, I am thrilled to introduce Harvester v1.2.0!

Understanding Volume Shadow Copy Service (VSS)

Hello, and welcome to this deep dive into one of the most underappreciated yet profoundly useful technologies in the Windows operating system—Volume Shadow Copy Service, commonly known as VSS. Have you ever been caught in a situation where your computer crashes, and you lose hours, days, or even weeks of work? It’s a heart-stopping moment that most of us have unfortunately experienced. But here’s where VSS comes into play.

Accelerating Decision Advantage of Command and Control with Mattermost

In the fast-paced, dynamic landscape of multi-domain operations, military commanders need to be able to make rapid, data-driven decisions and seamlessly coordinate units to achieve mission success. The multi-domain fight is incredibly complex, incorporating ground, air, space, and cyber, and as a result, has driven the evolution of traditional command and control (C2).

Understanding Monoliths and Microservices - VMware Tanzu Fundamentals

The balancing act of reliability and availability

As consumers, we expect the products and software we buy to work 100% of the time. Unfortunately, that’s impossible. Even the most reliable products and services experience some disruption in service. Crashes, bugs, timeouts. There are a ton of contributing factors, so it's impossible to distill disruptions down to a single cause. That said, technology is becoming more and more sophisticated, and so is the infrastructure that supports it.

Using Ephemeral Environments for Chaos Engineering and Resilience Testing

How to Gain the Advantages of an Immutable and Self-Healing Infrastructure

Among the benefits D2iQ customers gain by deploying the D2iQ Kubernetes Platform (DKP) is an immutable and self-healing Kubernetes infrastructure. The benefits include greater reliability, uptime, and security, reduced complexity, and easier Kubernetes cluster management. The key to gaining these capabilities is Cluster API (CAPI). DKP uses CAPI to provision and manage Kubernetes clusters, which imposes an immutable deployment model and enables state reconciliation for Kubernetes clusters.

IT Pro Day 2023

Modeling and Unifying DevOps Data Part 2: Code

GitLab vs. GitHub: Choosing the right version control service

Version control is a system for tracking and managing changes to a software project over time. It provides a structured way to document modifications, ensuring that every alteration is recorded along with details such as who made the change and when it occurred. This history allows multiple team members to work on the same project without overwriting each other’s work and to easily revert to previous versions of the project when necessary.

Introducing Teneo

Five exciting new features coming to Bitbucket Cloud

Cloud monitoring vs. On-premises - Prometheus and Grafana

Prometheus and Grafana are the two most groundbreaking open-source monitoring and analysis tools in the past decade. Ever since developers started combining these two, there's been nothing else that they've needed. There are many different ways a Prometheus and Grafana stack can be set up.

How to Compare DCIM Vendors

The process of choosing the right Data Center Infrastructure Management (DCIM) software can have a significant impact on your organization’s success or failure. With a proven track record of helping thousands of customers evaluate their DCIM options, we at Sunbird recognize the importance of making an informed decision. We understand the unique challenges faced during the selection process, and we are committed to helping you navigate through them.

Realtime Collaboration in Kubernetes to Supercharge Team Productivity - Civo Navigate NA 2023

VMware Named an Outperformer in 2023 GigaOm Radar Report for GitOps

VMware is pleased to reveal that we have been named an Outperformer in the 2023 GigaOm Radar Report for GitOps. In the outperformer ring and moving closer to a leadership position, VMware has been placed in the Platform Play and Innovation quadrant. The GigaOm Radar for GitOps shows VMware as an outperformer.

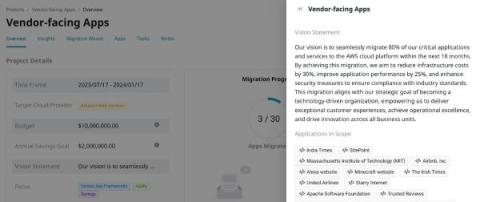

Unlocking Cloud Migration Excellence with a Vision Statement

A successful cloud migration begins with a well-defined vision of the desired outcomes and alignment to the organization’s strategic goals. At Tidal, we often work with customers to develop vision statements and success metrics that provide a North Star to guide the migration journey. Vision statements help balance tactical project objectives with the customer’s broader mission.

Creating HTTP triggers in Azure Functions

PagerDuty Appoints Eric Johnson as Chief Information Officer

Feature Flags Management with Ephemeral Environments

How to Authenticate Access to the JFrog Platform through Your IDE

Navigating Common Azure Files Issues and Solutions

Azure Files is a cornerstone of modern cloud-based file sharing. As IT professionals dive deeper into its offerings, several challenges may arise. This guide provides an in-depth look into these challenges and elucidates their solutions.

Fast SDV prototyping in automotive with real-time kernel

SQL Sentry Then and Now

Kubernetes Management | A Short History of D2iQ

Heroku Monitoring: Visualization and Understanding Data

Data visualization is a way to make sense of the vast amount of information generated in the digital world. By converting raw data into a more understandable format, such as charts, graphs, and maps, it enables humans to see patterns, trends, and insights more quickly and easily. This helps in better decision making, strategic planning, and problem-solving. Visualization and understanding data are critical in platform-as-a-service (PaaS) offerings like Heroku.

Your Comprehensive Guide to HAProxy Protocol Support

Internet protocols are the lifeblood of internet communication, powering important connections between servers, clients, and networking devices. These rules and standards also determine how data traverses the web. Without these protocols, internet traffic as we know it would be severely fragmented or even grind to a screeching halt. And without evolving protocol development, the web couldn’t properly support the applications driving massive traffic volumes worldwide (or vice versa).

Best Global Cities For A Career In Tech

Continuous Verification - It's More Than Just Breaking Things On Purpose - Civo Navigate NA 23

A complete guide on Azure Service Bus connection string

Testing Microservices at Scale: Using Ephemeral Environments

Internal Developer Platform vs. Internal Developer Portal: What to choose?

Brocade switch configuration management with Network Configuration Manager

Brocade network switches encompass a variety of switch models that cater to diverse networking needs. In today’s intricate networking landscape, manually handling these switches with varying configurations and commands within a large network infrastructure can be a daunting task. This complexity often leads to human errors such as misconfigurations. How can you optimize your network environment effectively when utilizing a variety of Brocade switches and eliminate the need for manual management?

Monitoring virtual machines with Prometheus and Graphite

Virtual machines give you a flexible and convenient environment where people can access different operating systems, networks, and storage while still using the same computer. This saves them from purchasing extra machines, switching to other devices, and maintaining them, which helps companies save costs and increase task efficiency. Although using VMs for everyday tasks may be enjoyable, ensuring consistent performance and performing maintenance can be daunting.

ECS Vs. EC2 Vs. S3 Vs. Lambda: The Ultimate Comparison

What are Microservices? Code Examples, Best Practices, Tutorials and More

Microservices are increasingly used in the development world as developers work to create larger, more complex applications that are better developed and managed as a combination of smaller services that work cohesively together for more extensive, application-wide functionality. Tools such as Service Fabric are rising to meet the need to think about and build apps using a piece-by-piece methodology that is, frankly, less mind-boggling than considering the whole of the application at once.

Internal Developer Platform vs Internal Developer Portal: What's The Difference?

Implementing Zero Trust: A Practical Guide

Mastering Incident Resolution: Process and Best Practices

Ep. 8: Building a Better Internet with John Engates

Config.tips | Building the largest collaborative library of config tips and tricks

What is a Service Mesh? And Why Would You Want One?

New secure collaboration solutions in AI, Dev/Sec/ChatOps, and Zero Trust from Mattermost partners

In Mattermost v9.0, secure, purpose-built collaboration allows your team to focus and thrive. Whether delivered in high-security infrastructure, deployed to the edge, or interconnecting every aspect of your digital landscape, our partner community can help your enterprise leverage the Mattermost secure collaboration hub. Their services include not only streamlining deployment and optimizing for scale but also innovating and extending the platform to put your unique needs first.

How to resubmit & delete messages in Azure Service Bus dead letter queue?

The connection between incident management and problem management

Sometimes, two concepts overlap so much that it’s hard to view them in isolation. Today, incident management and problem management fit this description to a tee. This wasn’t always the case. For a long time, these two ITIL concepts were seen as distinct—with specialized roles overseeing each. Incident management existed in one corner and problem management in the other. Then came the DevOps movement and the lines suddenly became blurred. So where do they stand today?

Monitoring Kubernetes with Graphite

In this article, we will cover how to monitor Kubernetes using Graphite, and we’ll do the visualization with Grafana. The focus will be on collecting and plotting essential metrics for Kubernetes clusters. We will download, implement, and monitor custom dashboards for Kubernetes that are available from the Grafana dashboard resources. These dashboards have variables to allow drilling down into the data at a granular level.

How to monitor Python Applications with Prometheus

Prometheus is becoming a popular tool for monitoring Python applications despite the fact that it was originally designed for single-process, multi-threaded applications rather than multi-process ones. Prometheus was developed in the SoundCloud environment and was inspired by Google’s Borgmon. In its original environment, Borgmon relies on straightforward methods of service discovery - where Borg can easily find all jobs running on a cluster.

Grafana vs. Datadog

Before we jump into the specifics of Grafana and Datadog, let's look at the main comparison points. Grafana is a great dashboard that allows you to plug in essentially any data source in the world. Grafana is most commonly paired with Prometheus, Graphite, and Elasticsearch to provide a full APM, time-series, and logs monitoring stack.

#90DaysOfDevOps - The DevOps Learning Journey - Civo Navigate NA 23

Azure Monitoring Tool: Here's What's New in Serverless360 for August 2023

7 Strategies for Azure Cost Optimization

Streamlining Incident Management with our latest feature update: Merge Incidents

10 Key Benefits of DevOps

DevOps is a practice that combines software development and IT operations to improve the speed, quality, and efficiency of software delivery. By breaking down traditional silos between development and operations teams and promoting a culture of continuous improvement, DevOps helps organizations achieve their goals and remain competitive in today’s fast-paced digital landscape. To better understand how, we asked engineers what key DevOps benefits they have noticed since working with this approach.

Graphite Monitoring Tool Tutorial

In this post, we will go through the process of installing and configuring Graphite on an Ubuntu machine. What is Graphite Monitoring? In short, Graphite collects, stores, and visualizes time-series data in real time. It provides operations teams with instrumentation, allowing for visibility at varying levels of granularity into the behavior of the system. This leads to error detection, resolution, and continuous improvement. Graphite is composed of the following components.

How a real-time kernel reduces latency in telco edge clouds

Auto Filter Messages into Subscriptions in Azure Service Bus Topic

Journey from Junior to Senior SRE: Key Insights and Strategies

As Site Reliability Engineering (SRE) continues to grow in popularity, many professionals are looking for ways to advance from junior to senior roles. While there is no one-size-fits-all approach, the transition from junior to senior SRE is marked by a gradual increase in experience and a set of key skills. In this blog, we will explore the valuable insights and strategies shared by experienced SREs.

Sarbanes-Oxley (SOX) Compliance: How SecOps Can Stay Ready + Pass Your Next SOX Audit

4 ITSM Automation Moves to Boost Business Growth Through Change

The life of an L1 engineer … receiving all the tickets, providing all the IT services, and interacting with all the stakeholders. Tickets, like requests for access to an application or system, account unlocks, onboarding and offboarding employees, and more are here to stay.

Blocking deployments with your IDP

What's the Difference Between an Agile Retrospective and an Incident Retrospective?

Two key features to speed up your builds on CircleCI

Top use cases for running CI jobs on a self-hosted infrastructure

Automate development best practices across your toolchain | Sleuth Automations

AWS KMS Use Cases, Features and Alternatives

A Key Management Service (KMS) is used to create and manage cryptographic keys and control their usage across various platforms and applications. If you are an AWS user, you must have heard of or used its managed Key Management Service called AWS KMS. This service allows users to manage keys across AWS services and hosted applications in a secure way.

Kubernetes Logging with Filebeat and Elasticsearch Part 1

This is the first post of a two-part series where we will set up production-grade Kubernetes logging for applications deployed in the cluster and the cluster itself. We will be using Elasticsearch as the logging backend for this. The Elasticsearch setup will be extremely scalable and fault-tolerant.

Kubernetes Logging with Filebeat and Elasticsearch Part 2

In this tutorial, we will learn about configuring Filebeat to run as a DaemonSet in our Kubernetes cluster in order to ship logs to the Elasticsearch backend. We are using Filebeat instead of FluentD or FluentBit because it is an extremely lightweight utility and has first-class support for Kubernetes. It is best for production-level setups. This blog post is the second in a two-part series. The first post runs through the deployment architecture for the nodes and deploying Kibana and ES-HQ.

Can Your Racks Support NVIDIA DGX H100 Systems?

AI is booming. The AI market is projected to grow 37.3% annually from 2023 to 2030. With so many organizations adopting or considering AI applications, data centers need to be ready to support the new demand. However, without the right tools and data, it is difficult to understand if your existing facilities have the capacity to support systems like the “gold standard for AI infrastructure,” the NVIDIA DGX H100.

Mobile APP teaser and eBPF functions! | Netdata Office Hours #6

Redefining Financial Services with On-Demand Virtual Servers

AWS Savings Plans Vs. Reserved Instances: When To Use Each

Top 5 Server Monitoring Tools

The need to monitor the health of servers and networks is universal. You don't want to be a blind pilot who is headed for an inevitable disaster. Fortunately, there are many open source and commercial tools to help you do the monitoring. As always, good and expensive is not as attractive as good and cheap. So, we've put together the most valuable cloud and Windows monitoring tools to get you started.

Day 2 Navigate Europe 2023 Wrap Up

We kicked off Day 2 with our host Nigel Poulton, who gave a quick rundown of the highlights from the first day before giving attendees a taste of what to expect from the rest of the event. Nigel then brought Kelsey Hightower to the stage for his keynote session with Mark Boost and Dinesh Majrekar. If you missed our Day 1 recap, check it out here.

Deploy fully configured VMs in minutes on Google Cloud, using gcloud CLI and cloud-init

Multi-cluster Failover With A Service Mesh - Civo Navigate NA 2023

Take Your Pick! The Best Server Monitoring Tools on the Market

IT professionals are always presented with myriad solutions when seeking additional software for their network infrastructure. When it comes to server monitoring solutions, there are multiple options available. After all, every organization has its own needs, individual infrastructure and software requirements. With that in mind, the following list is a guide to help IT professionals select what they believe may be the best possible server monitoring solution for their organization.

Why GDIT Chose D2iQ for Military Modernization

General Dynamics Information Technology (GDIT) is among the major systems integrators that have chosen D2iQ to create Kubernetes solutions for their U.S. military customers. I spoke with Todd Bracken, GDIT DevSecOps Capability Lead for Defense, about the reasons GDIT chose D2iQ and the types of solutions his group was creating for U.S. military modernization programs using the D2iQ Kubernetes Platform (DKP).

Hyperview Integrates Digitalor for Rack-Unit RFID Asset Tracking and Environmental Sensors

Vancouver, BC—September 13, 2023— Hyperview, a leading cloud-based data center infrastructure management (DCIM) platform provider, and Digitalor, a global leader in rack-unit MC-RFID asset tracking, have announced a strategic partnership that offers Hyperview users automated, real-time life cycle management for data centers and hybrid IT environments.

Deploying Single Node And Clustered RabbitMQ

RabbitMQ is a messaging broker that helps different parts of a software application communicate with each other. Think of it as a middleman that takes care of sending and receiving messages so that everything runs smoothly. Since its release in 2007, it's gained a lot of traction for being reliable and easy to scale. It's a solid choice if you're dealing with complex systems and want to make sure data gets where it needs to go.

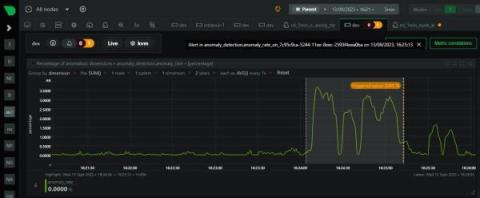

Our first ML based anomaly alert

Over the last few years we have slowly and methodically been building out the ML based capabilities of the Netdata agent, dogfooding and iterating as we go. To date, these features have mostly been somewhat reactive and tools to aid once you are already troubleshooting. Now we feel we are ready to take a first gentle step into some more proactive use cases, starting with a simple node level anomaly rate alert. Note: you can read a bit more about our ML journey in our ML related blog posts.

Unlocking IT: Considerations for a Powerful Observability Strategy

In today's cloud-native landscapes, observability is more than a buzzword; it's a critical element for software development teams looking to master the complexities of modern environments like Kubernetes. There’s a multi-faceted nature to observability with all its various levels and dimensions — from basic metrics to comprehensive business insights. It’s complex and can continue indefinitely…if you let it.

Accessibility testing with Cypress

Effective user experience (UX) design is a key factor in creating compelling software products. UX considers the quality of interaction that users have with a product and takes the user’s point of view as the most sacred thing in software and product design. A great UX includes accessibility, which ensures that software is inclusive and usable by the widest possible audience.

Connect Over Coffee | Cross-Cloud with Megaport Cloud Router (MCR)

How to Centralize and Automate Your Network Device Configuration Management

Getting started with PromQL

This article will focus on the popular monitoring tool Prometheus, and how to use PromQL. Prometheus is written in Go and allows simultaneous monitoring of many services and systems. In order to enable better monitoring of these multi-component systems, Prometheus has strong built-in data storage and tagging functionalities. To use PromQL to query these metrics, you need to understand the architecture of data storage in Prometheus, and how the metric naming and tagging works.

VMware Tanzu Service Mesh Named a Leader in 2023 GigaOm Radar Report on Service Mesh

GigaOm has once again placed VMware Tanzu Service Mesh within the leader ring of its Radar Report on Service Mesh. This year Tanzu Service Mesh has been upgraded to the Outperformer label, moving closer to the center and marking its heightened recognition as an industry leader. This is not only a testament to our robust enterprise capabilities and broad support for various application platforms, public clouds, and runtime environments, but also a validation of our strategic approach.

Blameless Garners Acclaim in Industry Reports from G2 and Gartner for Site Reliability and Incident Management

Seven Models of Cloud Native Applications

K3s Vs K8s: What's The Difference? (And When To Use Each)

Using GitOps for Databases

In our previous article about database migrations, we explained why you should treat your databases with the same respect as your application source code. Database migrations should be fully automated and handled in a similar manner to applications (including history, rollbacks, traceability, etc.).

Comparing Your Multicloud Connectivity Options

As multicloud adoption surges, so too do the choices for connecting to your clouds. We break down the key solutions and their benefits.

Monitoring Weather At The Edge With K3s and Raspberry Pi Devices - Civo Navigate NA 2023

Ubuntu updates, releases and repositories explained

Real-time Ubuntu is now available in AWS Marketplace

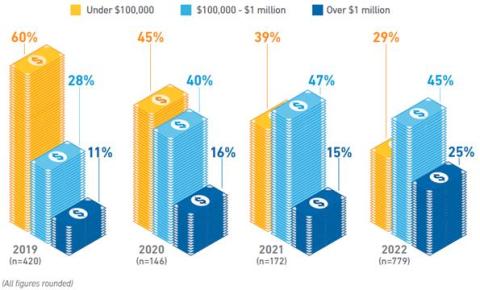

More than downtime: the cultural drain caused by poor incident management

The costs of lackluster incident management are truly far-reaching. We’ve learned they go beyond explicit costs, like lost revenue and labor expenses, and beyond the opportunity cost of engineers being diverted from building revenue-generating features. The final area of incident cost that’s often overlooked is cultural drain.

Automation, Rain or Shine: A Fortune 500 Network Communications Enterprise's Story of Enhanced Alarm Management

A leading provider of advanced network communications and technology solutions for consumers, small businesses, enterprise organizations, and carrier partners across the U.S. wanted to become more powerful through automation, so as to better understand the customer impact of bad weather and proactively improve their customer experience.

Navigating the risk of sharing database access

Getting Started with Cluster Autoscaling in Kubernetes

Autoscaling the resources and services in your Kubernetes cluster is essential if your system is going to meet variable workloads. You can’t rely on manual scaling to help the cluster handle unexpected load changes. While cluster autoscaling certainly allows for faster and more efficient deployment, the practice also reduces resource waste and helps decrease overall costs.

Cloud Efficiency Rate: A New Metric To Quantify Cloud-Native Business Value

How to keep your Kubernetes Pods up and running with liveness probes

Getting your applications running on Kubernetes is one thing: keeping them up and running is another thing entirely. While the goal is to deploy applications that never fail, the reality is that applications often crash, terminate, or restart with little warning. Even before that point, applications can have less visible problems like memory leaks, network latency, and disconnections. To prevent applications from behaving unexpectedly, we need a way of continually monitoring them.

What is a PaaS? A Definitive Guide

A platform as a service, or PaaS, is one of the three major cloud computing service models. In our opinion, it’s the only one that successfully delivers all benefits of the cloud to software developers, including control, cost-effectiveness, flexibility, and scalability. Of course, other as-a-service models are still useful. In fact, all three main cloud computing models offer different advantages to organizations.

Understanding App Hosting: Definition and Functionality

Developing apps takes a lot of blood, sweat, and tears. It can feel like a marathon that doesn’t even have the courtesy to end once you cross the finish line. From managing infrastructure to scaling, operations, and security (to name just a few things), it takes plenty of work to ensure that your cherished creation is loved by users and customers. App hosting takes much of this responsibility off your shoulders, and a solid Platform as a Service (PaaS) provider can go even further.

VPN vs. SD-WAN tunnels

Release Lifecycle Management - JFrog Artifactory

Day 1 Navigate Europe 2023 Wrap Up

Navigate Europe 2023 has come to an end, and we couldn’t be more grateful for everyone involved in this, from our sponsors, attendees, and most importantly, the Civo team. Whilst we have already announced the next event for next year in Austin, Texas, we want to spend some time reflecting on the amazing few days we’ve just had, and everything we took away from it.

Microsoft OneDrive: Your Ultimate Guide to Cloud Storage

Hey there, cloud wanderer! Ever found yourself juggling multiple USB drives or emailing files to yourself just to have access to them on another device? Well, Microsoft OneDrive is here to make your life a whole lot easier. This article will be your ultimate guide to understanding what OneDrive is, how to use it, and why it might just be the cloud storage solution you’ve been looking for.

How improved network performance is a key part of Web 3.0 delivery

Custom domains now available on preview environments

You’re probably all very familiar by now with the URLs we currently generate automatically for your environments on Platform.sh. And while they are very useful, they’re not the most friendly-looking, as we build them using the following pattern: This approach is important because it ensures that our URLs are unique for all projects and their environments, but it also makes them pretty long, overly complicated, and, let’s face it, not the prettiest. But this is the case no more!

A detailed guide on Azure architecture diagram

Top Cloud Cost News From August 2023

JFrog's cloud migration story

Manage Kubernetes environments with GitOps and dynamic config

Most modern infrastructure architectures are complex to deploy, involving many parts. Despite the benefits of automation, many teams still choose to configure their architecture manually, relying on a deployment expert or, in some cases, teams of deployment engineers. Manual configurations open the door to human error. While DevOps is very useful in developing and deploying software, using Git combined with CI/CD is useful beyond the world of software engineering.

Integrating MetricFire and Heroku for Web Hosting

Today's fast-paced digital landscape demands efficient and reliable web hosting solutions. As websites and applications become increasingly complex, businesses are constantly seeking ways to optimize their performance and ensure seamless user experiences. One crucial aspect of this optimization process is the effective monitoring and tracking of vital metrics.

How to Integrate CloudWatch and Sentry with MetricFire

CloudWatch and Sentry are two powerful tools that play crucial roles in monitoring and error tracking, making them essential for any organization that wants to ensure the smooth operation of its applications and systems. CloudWatch, developed by Amazon Web Services (AWS), offers comprehensive monitoring capabilities for AWS resources and applications, providing real-time insights into system performance and resource utilization.

How To Gain Back Your Velocity When Working With Kubernetes - Civo Navigate NA 2023

Simplifying your Kubernetes infrastructure with cdk8s

Kubernetes has become the backbone of modern container orchestration, enabling seamless deployment and management of containerized applications. However, as applications grow in complexity, so do the challenges of managing their Kubernetes infrastructure. Enter cdk8s, a revolutionary toolset that transforms Kubernetes configuration into a developer-friendly experience.

How to monitor Power Automate flows involving Azure services?

Webinar: Internals of How we tame High Cardinality

Webinar: Uncovering High Cardinality with Piyush Verma

Onboarding New Devs - GitKraken Workspaces

Helios Joins the AWS Marketplace!

We are thrilled to announce that Helios, the applied observability platform for developers, is now available on the AWS Marketplace! This marks a significant milestone in providing visibility and runtime insights for easy troubleshooting and reduced MTTR. This further cements our commitment to providing top-tier services to our customers and to AWS users. By bringing Helios directly to the AWS Marketplace, it is easier than ever to access and onboard our platform.

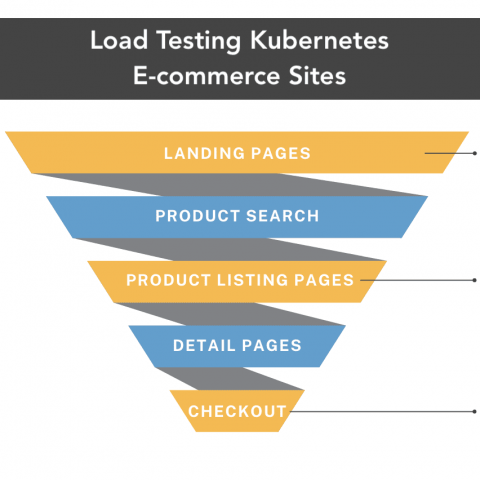

5 Steps to Optimizing eCommerce Site Performance with Kubernetes Load Testing

Introducing Cloudsmith Navigator: Your Trusted Guide to OSS Package Quality

Discover Cloudsmith Navigator: a revolutionary tool designed to guide software engineering teams in selecting top-quality open source packages. By analyzing and scoring thousands of packages based on security, maintenance, and documentation, Navigator simplifies the package selection process. Choosing the right software package for your project can sometimes feel like finding a needle in a haystack.

How to Set Up an IT War Room

IT issues can happen at any time and significantly impact an organization. Hence, it's essential to have a plan to handle these issues quickly and efficiently. And one way to do this is to create an IT war room. An IT war room is a dedicated space for teams to collaborate and resolve issues. Establishing an IT war room enhances an organization's capacity to swiftly and efficiently address IT problems, ultimately reducing their impact on the business.

Postman Load Test Tutorial

Enhancing Incident Management: Seven Integrations to Complete Your Ticketing Systems

Amazon ECS Vs. EKS Vs. Fargate: The Complete Comparison

Azure SQL database cost optimization to maximize savings

How to make your software team awesome: Get the inside scoop with DORA

How to Develop a Modern Monitoring & Observability Strategy for Businesses of Any Size

In the dynamic world of IT, the way we monitor systems has seen a remarkable evolution. Gone are the days when monitoring was limited to basic server checks or infrastructure health. With the rise of cloud-native applications, serverless architectures, and container orchestration platforms like Kubernetes, the digital landscape has become a multi-dimensional maze.

It's out of the Oven: Bun 1.0 support is here

Today Bun 1.0 is being announced—one of our friends in the ‘.sh’ tld—so it’s an absolute pleasure to share a small celebration and our first thoughts on this fully-baked runtime.

Using Tailscale for Authentication of Internal Tools

JWT is a popular mechanism for authentication and authorization, especially for service-to-service communication. When it comes to internal tools, distributing and renewing JWTs can become a challenge. Our internal support systems use JWTs to authenticate and authorize access; they are written in a few different languages and run on different hosting options.
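To make the renewal problem concrete, here is a minimal sketch of an HS256-signed JWT with an expiry claim, using only the Python standard library. It is an illustration, not the article's implementation: the function names (`sign_token`, `verify_token`), the shared secret, and the claim values are all hypothetical, and a production system would normally use a maintained JWT library.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url(data: bytes) -> str:
    # JWTs use URL-safe base64 with the trailing "=" padding stripped.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    # Restore the padding that was stripped when the token was encoded.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _mac(signing_input: str, secret: bytes) -> bytes:
    return hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()


def sign_token(claims: dict, secret: bytes) -> str:
    """Build a compact HS256 token (hypothetical helper, for illustration)."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    return f"{signing_input}.{_b64url(_mac(signing_input, secret))}"


def verify_token(token: str, secret: bytes, now: Optional[float] = None) -> dict:
    """Check signature, algorithm, and expiry; return the claims if all pass."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, signature_b64 = parts
    expected = _mac(f"{header_b64}.{payload_b64}", secret)
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("bad signature")
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    claims = json.loads(_b64url_decode(payload_b64))
    current = time.time() if now is None else now
    # A token without an "exp" claim is treated as already expired.
    if claims.get("exp", 0) <= current:
        raise ValueError("token expired")
    return claims


if __name__ == "__main__":
    secret = b"demo-secret"  # assumption: a secret shared by both services
    token = sign_token({"sub": "support-tool", "exp": int(time.time()) + 300}, secret)
    print(verify_token(token, secret)["sub"])
```

Every service that calls `verify_token` needs the same secret, and every caller has to obtain a fresh token before `exp` passes. Those are the distribution and renewal chores that grow with each additional language and hosting option.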

Building an E2E Ephemeral Testing Environments Pipeline with GitHub Actions and Qovery

How telcos are building carrier-grade infrastructure using open source

Using cloud unit metric costs to right size your AWS bill and improve productivity

Argo Rollouts at CircleCI: Progressive deployment for agile and efficient releases

At Circle, our traditional approach to Kubernetes (k8s) deployments likely looks familiar to many of you: Run the workflow, create the image, build the Helm chart and deliver it to k8s. At that point, k8s takes over with its rolling update. This method gets the job done, but we knew it wasn’t ideal. Limited support for canary releases and the need for time-consuming error monitoring and manual rollbacks added friction and risk to our release processes.

DevOps Speakeasy with Bruce Schneier

Practical guidance for getting started as a site reliability engineer

At the beginning of May, I joined incident.io as the first site reliability engineer (SRE), a very exciting but slightly daunting move. With only some high-level knowledge of what the company and its systems looked like prior to this point, it’s fair to say that I didn’t have much certainty in what exactly I’d be working on or how I’d deliver it.

What is VMware NSX?

The Misunderstood Troll - A compliance and audit fairy tale

Maximizing Efficiency and Collaboration with Top-tier DevOps Services

In today’s fast-paced digital landscape, where software development and deployment happen at lightning speed, DevOps has emerged as the key to achieving operational excellence and maintaining a competitive edge. DevOps is more than just a buzzword; it’s a culture, a set of practices, and a collection of powerful tools that streamline collaboration between development and operations teams.

Nagios vs. MetricFire

The world of IT monitoring has evolved significantly in recent years, with businesses relying more than ever on robust and efficient tools to keep their systems running smoothly. In this fast-paced digital landscape, it's crucial to have a monitoring solution that can provide real-time insights into the health and performance of your infrastructure. In this blog post, we will explore the advantages of using MetricFire over Nagios as your go-to monitoring tool.

SAML, SSO and Automatic User Provisioning

We’ve been busy building a few features that are going to be very useful for teams at larger companies using Cloud 66: Automatic User Provisioning and SAML SSO.

Mastering Multi-Repo Management with GitKraken: Insights

Blameless Announces New CommsFlow Upgrade to Elevate Incident Management Communication

Understanding Kubernetes Network Policies

Kubernetes has emerged as the gold standard in container orchestration. As with any intricate system, there are many nuances and challenges associated with Kubernetes. Understanding how networking works, especially regarding network policies, is crucial for your containerized applications' security, functionality, and efficiency. Let’s demystify the world of Kubernetes network policies.

Speakers & Sponsors Highlights from Navigate Europe 2023

What's going on with the Developer Productivity debate?

Ubuntu Security Bootcamp: Configuring hardware security keys for OpenSSH

Welcoming new leadership at Cloudsmith - a note from Alan Carson

Alan Carson writes about his experience and journey with Cloudsmith, as new CEO Glenn Weinstein steps in as leadership. I heard something recently, that resonated, about success. In a simple (but not easy) three-step plan; success happens when the following three things align: A great example is, of course, Steve Jobs and Apple. The contrarian idea was that every single human would need a personal computer. He was proven right. And he executed expertly (with a few ups and downs obviously!)

TPM-backed Full Disk Encryption is coming to Ubuntu

Do you have a Cloud Exit Strategy?

In the modern digital age, the allure of cloud computing has been nothing short of mesmerizing. From startups to global enterprises, businesses have been swiftly drawn to the promise of scalability, flexibility, and the potential for reduced capital expenditure that cloud platforms like Azure offer. Considering the diverse Azure VM types and the attractive Azure VMs sizes, it’s easy to understand the appeal.

Resolve Automation Capabilities Framework: From Tactical to Strategic End-to-end Automation

All business eyes seem to be focused on the current challenges of an unsteady economic environment, and organizational leaders are working to figure out the best plan to overcome them. Leaders have their own collection of key initiatives, as no two companies are the same. Most commonly, however, they want to double capacity and productivity, cut costs, enhance customer experiences, and future-proof their organizations.

Should You Reload or Restart HAProxy?

Managing your load balancer instances is important while using HAProxy. You might encounter errors, need to apply configurations, or periodically upgrade HAProxy to a newer version (to name a few examples). As a result, reloading or restarting HAProxy is often the secret ingredient to restoring intended functionality. Whether you’re relatively inexperienced with HAProxy or you’re a grizzled veteran, understanding which method is best in a given situation is crucial.

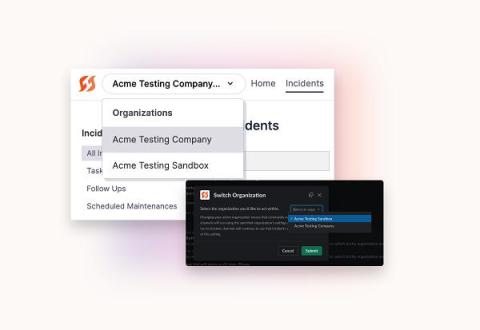

Multi-Org takes FireHydrant for enterprise to the next level

Too often, complexity means confusion — and confusion is your worst enemy when it comes to efficient incident response. We recently found that poor incident management practices (like confusion about what to do or how to escalate an incident) can cost companies as much as $18 million a year.

A Comprehensive Guide to Network Device Monitoring

Automate reliability testing in your CI/CD pipeline using the Gremlin API

For many software engineering teams, most testing is done in their CI/CD pipeline. New deployments run through a gauntlet of unit tests, integration tests, and even performance tests to ensure quality. However, there's one key test type that's excluded from this list, and it's one that can have a critical impact on your application and your organization: reliability tests. As software changes, reliability risks get introduced.

Jira vs. Trello: Which Tool is Best for Developer Collaboration?

As if coordinating multiple team members and competing deadlines wasn’t hard enough, project managers often face another daunting task: deciding which project management tool facilitates better dev-to-dev collaboration. Today, we’ll be looking at two of the leading project management tools, Jira and Trello. On the surface, these two options appear relatively similar, but each tool offers its own unique capabilities.

What is a virtual private cloud in AWS?

Heroku Monitoring: Getting Started with Metrics

It is important to monitor Heroku applications’ performance to ensure their productive and stable operation. In this article, we will talk about what tools Heroku provides for monitoring applications, which are the most important metrics to monitor, and how MetricFire can help you with this.

Cycle's New Interface, Part II: The Engineering Behind Cycle's New Portal

In our last installment, we covered the myriad of new UI changes added to Cycle’s portal. In this part, we walk through five of the tough engineering choices made when developing the new interface, discussing the alternatives that were considered, and shining a light on some of the technology our engineering team utilizes today.

How to Use PowerShell to Automate Office 365 Installations

In the fast-paced and demanding world of IT, every tool that saves time and simplifies tasks is worth its weight in gold. Today, we're going to explore how PowerShell scripts can be utilized to automate the installation of Office 365, a critical operation that can save you countless hours in the long run. In fact, with a well-written script, you can manage installations across an entire network from your desk.

12 DevOps Best Practices Teams Should Follow

DevOps is a software development philosophy that helps organizations achieve faster delivery, better quality, and more reliable software, making it easier to adapt to changing business needs and customer demands. However, implementing DevOps can be challenging on many levels. It requires changes in culture, processes, skills, knowledge, and tools, which can encounter resistance from traditional silos within organizations. So, how can you successfully implement DevOps within an organization?

Azure Key Vault: A Comprehensive Overview

Azure Key Vault is Microsoft’s dedicated cloud service, designed to safeguard cryptographic keys, application secrets, and other sensitive data. In an era where digital security is paramount, it functions as a centralized repository. Here, sensitive data is encrypted, ensuring that only designated applications or users can access it. Imagine having a hyper-secure, digital vault where you can store all your essential digital assets.

Using Argo CD's new Config Management Plugins to Build Kustomize, Helm, and More

Starting with Argo CD 2.4, creating config management plugins (CMPs) via ConfigMap has been deprecated, with support fully removed in Argo CD 2.8. While many folks have been using their own config management plugins to do things like `kustomize --enable-helm`, or to specify a specific version of Helm, etc. – most of them seem not to have noticed that the old way of doing things has been removed until just now!

DKP 2.6 Features New AI Navigator to Bridge the Kubernetes Skills Gap

The latest release of the D2iQ Kubernetes Platform (DKP) represents yet another significant boost to DKP’s multi-cloud and multi-cluster management capabilities. D2iQ Kubernetes Platform (DKP) 2.6 features the new DKP AI Navigator, an AI assistant that enables DevOps to more easily manage Kubernetes environments. As Forbes noted in Addressing the Kubernetes Skills Gap, “The Kubernetes skills shortage is impacting companies across sectors.”

The Benefits and Challenges of Managing Hybrid Estates

FinOps Focus: Cost Management vs. Cost Optimization

Rethinking Cost Optimization: Cost Optimization is a term that has been around for a while when discussing Cloud cost, and to a larger extent the practice of FinOps. It is usually what most people associate with FinOps when they hear those terms initially, but is that the correct term to use?

Into the Labyrinth: Revealing the Mantic Minotaur

Cloud storage for enterprises

Cloud repatriation: What's behind the return to on-premises?

Find out why cloud repatriation is on the rise — and what makes on-premises the ideal approach for some businesses. Over the last ten years, the cloud has been touted as a game-changer. But, like magpies, have we all jumped on the “shiny object syndrome” bandwagon? Spending on public cloud services continues to show strong growth, with Gartner forecasting that by the end of 2023, worldwide end user spending on public cloud services will total nearly $600 billion.

SLA vs. SLI vs. SLO: Understanding Service Levels

Ubuntu 23.10 Mantic Minotaur Mascot Animation

Prometheus vs. Datadog