Logging

The latest News and Information on Log Management, Log Analytics and related technologies.

Capture and Enrich ELB Logs in ONE MINUTE

Instantly Analyze MILLIONS of Logs with Loggregation

Logging for public sector: How to make the most of your mission-critical data

With governments doubling down on logging compliance, many public sector organizations have been focusing on optimizing their log management, especially to ensure they retain logs for the required periods of time. Logs, though seemingly straightforward, are the backbone of many mission-based use cases and can therefore accelerate mission success when centrally organized and leveraged strategically. In the public sector, logs are instrumental in...

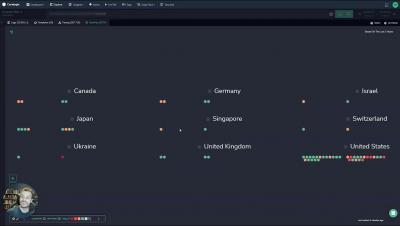

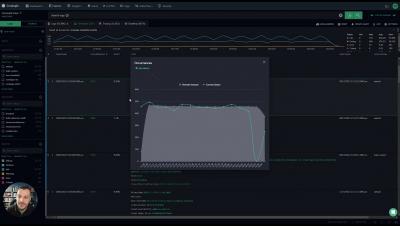

Mastering Event Breaking Management with Cribl Stream

Log events come in all sorts of shapes and sizes. Some are delivered as a single event per line. Others are delivered as multi-line structures. Some come in as a stream of data that needs to be parsed out. Still others come in as an array that should be split into discrete entries. Because Cribl Stream works on events one at a time, we have to ensure we are dealing with discrete events before observability (o11y) and security teams can use the information in those events.
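To make the idea of event breaking concrete, here is a minimal sketch in plain Python of three of the cases described above: one event per line, multi-line events, and an array split into discrete entries. It is not Cribl Stream's implementation or API. The function names and the `EVENT_START` rule (a new event begins at a line starting with an ISO-style date) are assumptions made purely for illustration.

```python
import json
import re
from typing import List

# Assumed rule for this sketch: a line starting with an ISO-style date opens a new event.
EVENT_START = re.compile(r"^\d{4}-\d{2}-\d{2}[T ]")


def break_lines(raw: str) -> List[str]:
    """Single-line case: every non-empty line is one event."""
    return [line for line in raw.splitlines() if line.strip()]


def break_multiline(raw: str, start: re.Pattern = EVENT_START) -> List[str]:
    """Multi-line case: continuation lines are attached to the event that precedes them."""
    events: List[str] = []
    current: List[str] = []
    for line in raw.splitlines():
        # A matching line closes the event being built and starts a new one.
        if start.match(line) and current:
            events.append("\n".join(current))
            current = []
        # Skip blank lines that appear before any event has started.
        if line.strip() or current:
            current.append(line)
    if current:
        events.append("\n".join(current))
    return events


def break_json_array(raw: str) -> List[dict]:
    """Array case: each element of a JSON array becomes its own event."""
    payload = json.loads(raw)
    if not isinstance(payload, list):
        raise ValueError("expected a JSON array")
    return payload


if __name__ == "__main__":
    trace = (
        "2024-01-01 10:00:00 ERROR boom\n"
        "  at foo()\n"
        "  at bar()\n"
        "2024-01-01 10:00:01 INFO ok"
    )
    for event in break_multiline(trace):
        print(repr(event))
```

In this sketch the stack-trace lines stay attached to the `ERROR` line that precedes them, so downstream processing sees one discrete event rather than three unrelated lines. A real pipeline would also need to handle timeouts and partial buffers for streamed input, which this sketch leaves out.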

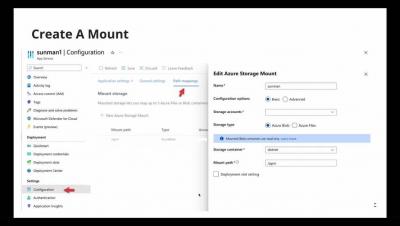

Instrumenting Java APM agent with Azure App Service

Reducing Log Volume with Log-based Metrics

What is Platform Engineering and Why Does It Matter?

In the era of cloud-native development, as businesses rely on a growing number of software tools to enable agile application delivery, platform engineering has emerged as a crucial discipline for building the technology platforms that drive DevOps efficiency. In this blog post, we explain the growing importance of platform engineering in high-performance DevOps organizations and how platform teams enable DevOps efficiency, agility, and productivity.

Technical Education Can Drive Long Term Success for Organizations

Root cause analysis with logs: Elastic Observability's AIOps Labs

In the previous blog in our root cause analysis with logs series, we explored how to analyze logs in Elastic Observability with Elastic’s anomaly detection and log categorization capabilities. Elastic’s platform enables you to get started on machine learning (ML) quickly. You don’t need to have a data science team or design a system architecture. Additionally, there’s no need to move data to a third-party framework for model training.