
Elasticsearch Documents and Mappings

In Elasticsearch parlance, a document is serialized JSON data. In a typical ELK setup, when you ship a log or metric, it is usually sent to Logstash, which groks, mutates, and otherwise processes the data as defined by the Logstash configuration. The resulting JSON is indexed in Elasticsearch.
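
As a rough illustration of that flow, the sketch below turns a raw log line into the kind of JSON document that would end up indexed. It is plain Python, not Logstash: the log line, the regular expression, the field names, and the `_parsefailure` tag are all assumptions made up for this example.

```python
import json
import re

# Hypothetical log line and pattern, for illustration only.
LOG_LINE = "127.0.0.1 GET /index.html 200"
PATTERN = re.compile(
    r"(?P<client_ip>\S+) (?P<method>\S+) (?P<path>\S+) (?P<status>\d+)"
)


def to_document(line):
    """Parse a log line into a dict, roughly what a grok-style filter does."""
    match = PATTERN.match(line)
    if match is None:
        # Keep the raw text and tag the event so failures can be found later.
        return {"message": line, "tags": ["_parsefailure"]}
    fields = match.groupdict()
    fields["status"] = int(fields["status"])  # "mutate": string -> integer
    fields["message"] = line                  # keep the original line too
    return fields


# The serialized JSON is what Elasticsearch would treat as a document.
document_json = json.dumps(to_document(LOG_LINE), sort_keys=True)
print(document_json)
```

Converting `status` to an integer before serializing matters because the type of each JSON value influences how the field is mapped when the document is indexed.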

5 places cron jobs live in Linux

It might surprise you that the version of cron that runs on your server today is largely compatible with the crontab spec written in the 1970s. One downside of this careful backwards compatibility is that jobs, even on the same server, can be created and scheduled differently.

Barclays Automates Container Monitoring with CA Application Performance Management

How Customers Save Time and Money with Mattermost

First, an introduction. My name is Matt Yonkovit. In September, I joined Mattermost as the head of Customer Success. For the last 13 years, I’ve helped customers succeed using open source software. My goal at Mattermost is to build out a world-class support and success program that enables our customers and community to use Mattermost to revolutionize their business processes and workflow.

Splunk Enterprise Security: Investigation Workbench

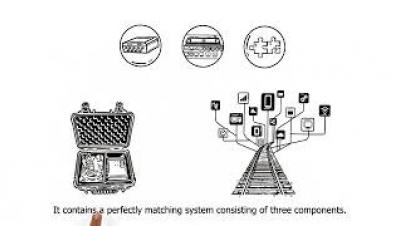

DRIVE: Digital Rail Informatics and Visualisation Engine for Railway Digitalisation

EKS vs. KOPS

In the past, applications were deployed by installing them on a host with the operating system's package manager. This was a heavyweight approach: it relied heavily on the package manager, and it added complexity because libraries, configuration, executables and so on were all interconnected. Then came containers. Containers are small and fast, and are isolated from each other and from the host.

Recipe: How to integrate rsyslog with Kafka and Logstash

This recipe is similar to the previous rsyslog + Redis + Logstash one, except that we’ll use Kafka as a central buffer and connecting point instead of Redis. You’ll have more of the same advantages.

Elasticsearch Ingest Node vs Logstash Performance

Starting from Elasticsearch 5.0, you’re able to define pipelines within it that process your data, in the same way you’d normally do it with something like Logstash. We decided to take it for a spin and see how this new functionality (called Ingest) compares with Logstash filters in both performance and functionality. Is it worth sending data directly to Elasticsearch or should we keep Logstash?
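
To make the idea of a pipeline concrete, here is a minimal sketch of what an Ingest pipeline definition might look like, built as a Python dict and serialized to the JSON body you would send to the `_ingest/pipeline/<id>` endpoint. The pipeline description, the grok pattern, and the field names are assumptions for illustration; nothing here talks to a cluster.

```python
import json

# Hypothetical pipeline: parse a simple access-log line held in "message".
pipeline = {
    "description": "Example pipeline (illustrative only)",
    "processors": [
        {
            # The grok processor plays the role of a Logstash grok filter.
            "grok": {
                "field": "message",
                "patterns": [
                    "%{IP:client_ip} %{WORD:method} "
                    "%{URIPATHPARAM:path} %{NUMBER:status}"
                ],
            }
        }
    ],
}

# This JSON would be the request body when creating the pipeline.
pipeline_body = json.dumps(pipeline, indent=2)
print(pipeline_body)
```

Documents are then routed through the pipeline by naming it at index time, for example with the `pipeline` query parameter on the index request.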